When did your team last define what “done” means for an API?

The honest answer for most teams: when it returns 200 OK against the test suite. The integration test passes. The endpoint is live. The deploy goes out. Done.

That bar was good enough when humans wrote the consumers. A human reading a 200 OK can tell the difference between a healthy response and a malformed one. A human can notice that latency drifted up. A human can ask in Slack whether anyone changed the field shape this week.

In the AI era, agents are the consumers. Agents do not notice latency drift. They do not ask in Slack. They get a 200 OK with a malformed payload, hallucinate a response, and move on. The first your team hears about it is when a developer files a ticket about a Copilot session that gave the wrong answer last Tuesday.

A capability returning 200 OK is not done. A capability returning 200 OK is the start of done.

The four dimensions of done

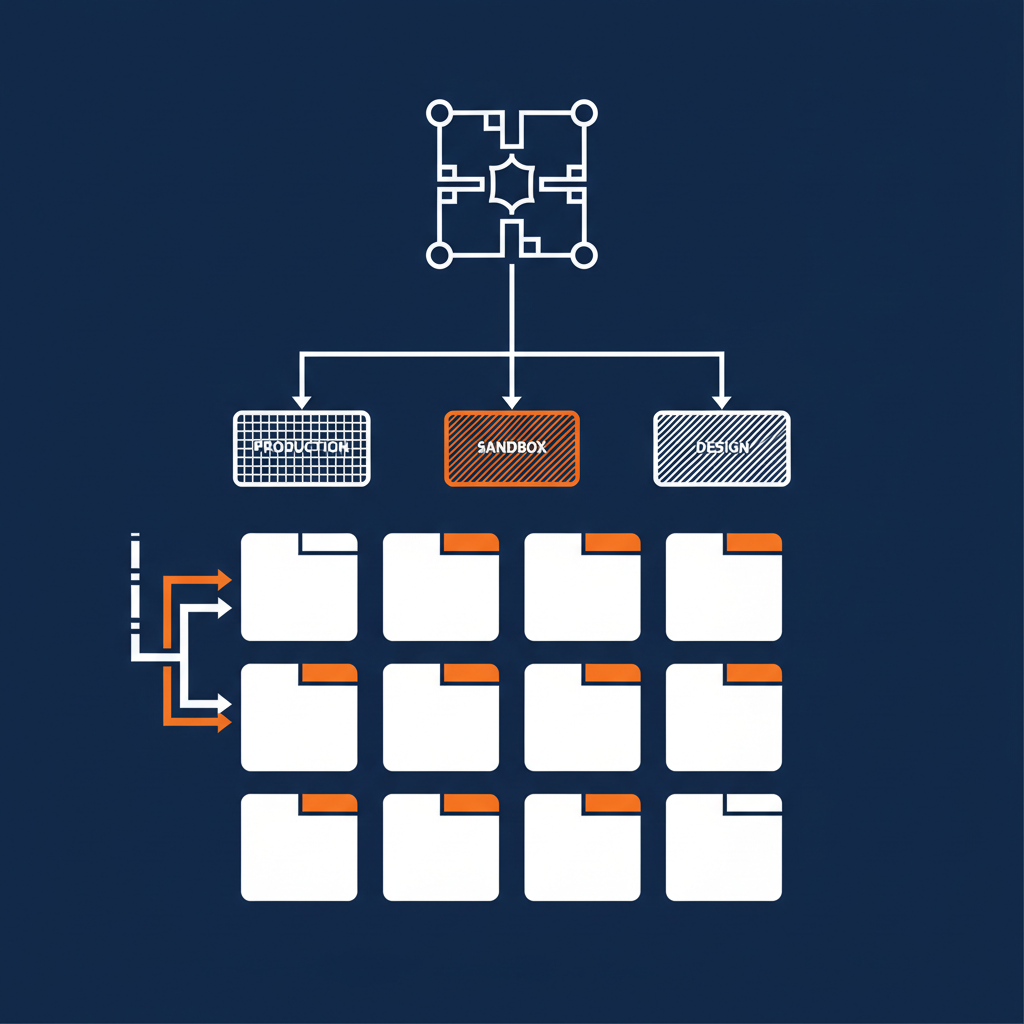

The Naftiko Framework treats four things as part of the runtime contract — not as integration projects:

1. Telemetry. Every Naftiko capability exposes a Prometheus-compatible /metrics endpoint with request count by operation and status code, latency histograms in durationMs, error rates, and resilience-pattern state. Every log entry carries capability, namespace, operation, statusCode, durationMs — the same five dimensions whether the call came in over REST, MCP, or an Agent Skill. You aggregate the metrics in whatever observability stack you already run. No glue code per capability.

2. Identity. Every capability call carries an identity binding — who the agent ran as, which scopes it presented, what authentication path the call took. The runtime captures it. The audit trail records it. When a security team asks “who did this”, the answer is in the structured log, not in a Confluence page.

3. Governance. Every capability YAML carries the governance metadata it expects to be enforced — sensitivity tags, agent-safety classifications, persona allow-lists, Spectral rules, Kyverno gates. The runtime enforces the policies the spec declares. The governance engine validates the spec against the org’s ruleset before deploy.

4. Resilience. Every capability ships with hooks for circuit breakers, retries, rate limiters, bulkheads. The metrics endpoint exposes the state of each. When a circuit trips, you know. When a rate limiter exhausts, you know. The patterns themselves are filling in across the alpha and beta milestones — but the telemetry surface for them is in place today.

These four dimensions are not “added on” to a capability. They are how the capability ships. A YAML file that does not declare them does not deploy. The runtime that does not surface them is not Naftiko-compatible.
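To make the telemetry dimension concrete, here is a minimal Python sketch of what "the same five dimensions on every log entry" and "request count by operation and status code" could look like. It is not Naftiko's implementation: the function names and the metric name `capability_requests_total` are assumptions made for illustration. The five log field names come from the text above, and the output uses the standard Prometheus text exposition format.

```python
import json

# The five canonical log fields named in the text.
CANONICAL_FIELDS = ("capability", "namespace", "operation", "statusCode", "durationMs")


def log_entry(capability, namespace, operation, status_code, duration_ms):
    """Build one structured log line carrying the five canonical fields."""
    entry = {
        "capability": capability,
        "namespace": namespace,
        "operation": operation,
        "statusCode": status_code,
        "durationMs": duration_ms,
    }
    return json.dumps(entry, sort_keys=True)


def render_request_counts(counts):
    """Render request counts in the Prometheus text exposition format.

    `counts` maps (operation, status_code) -> number of requests.
    The metric name `capability_requests_total` is hypothetical.
    """
    lines = ["# TYPE capability_requests_total counter"]
    for (operation, status_code), value in sorted(counts.items()):
        lines.append(
            f'capability_requests_total{{operation="{operation}",status_code="{status_code}"}} {value}'
        )
    return "\n".join(lines) + "\n"
```

Because every entry has the same keys regardless of which capability produced it, a fleet-wide query never needs a per-team field mapping. A real runtime would also expose latency histograms and resilience state; this sketch covers only the counter.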

Why this matters more for agents than for humans

Humans tolerate ambiguity. Agents do not.

A human user reading “your request returned 200 but the field shape looks odd, let me try again” can recover. An agent making the same observation hallucinates a downstream call that does not exist, fails silently, and produces a wrong answer the user trusts. Multiply that across thousands of agent calls per day across thousands of developers and the failure mode is no longer “an occasional bug” — it is “the AI integration is unreliable.”

The fix is not better prompts. The fix is making the failure observable to the runtime, so the agent — or the platform team behind the agent — can react. That requires telemetry, identity, governance, and resilience baked into the capability layer, not bolted on.
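As a rough illustration of "making the failure observable," the Python sketch below classifies a response so that a 200 OK with a malformed payload is recorded as a failure instead of passing silently. The function names, the `outcome` labels, and the idea of a flat list of required fields are all assumptions for the example. A real runtime would more likely validate against the schema declared in the capability spec.

```python
import json


def check_payload(body_text, required_fields):
    """Return a list of problems found in a response body; empty means the shape is fine."""
    try:
        payload = json.loads(body_text)
    except json.JSONDecodeError:
        return ["body is not valid JSON"]
    if not isinstance(payload, dict):
        return ["body is not a JSON object"]
    return [f"missing field: {name}" for name in required_fields if name not in payload]


def observe_response(status_code, body_text, required_fields):
    """Classify a response so a 200 with a bad payload is recorded as a failure."""
    # Only inspect the body when the status code claims success.
    problems = check_payload(body_text, required_fields) if status_code == 200 else []
    if status_code != 200:
        outcome = "http_error"
    elif problems:
        outcome = "malformed_payload"
    else:
        outcome = "ok"
    return {"statusCode": status_code, "outcome": outcome, "problems": problems}
```

For example, `observe_response(200, '{"id": 1}', ["id", "total"])` yields an outcome of `malformed_payload` with the missing field listed, which is a signal the platform team can count and alert on.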

The Naftiko Framework Wiki documents the discipline behind this. Spec-Driven Integration as a doctrine, Rightsize AI Context as a use case, Agent Skills as an exposure surface — each of them is a written argument that the four dimensions of done are spec concerns, not deployment concerns.

Three dimensions of the cost of skipping done

Technology: you cannot debug what you cannot see. A capability without telemetry is a black box at runtime. A capability without identity binding is a black box at audit time. The cost of finding a regression in a black box is approximately the same as rewriting the capability.

Business: every silent failure compounds. The agent that returns wrong answers does so for weeks before anyone notices. Customer trust degrades on a curve that is invisible in the dashboard, because the dashboard does not exist. The cost of a single visible incident is much lower than the cost of a sustained quiet degradation.

Politics: “we have logs” is not the same as “we have logs the SRE can search across the whole fleet without renaming fields.” The ad-hoc-logs-per-team approach pushes the cost of incident response onto the on-call rotation. Standardized telemetry across capabilities pushes the cost back onto the capability authors — where it belongs.

What “done” looks like, concretely

A capability is done when:

- GET /metrics returns Prometheus-shaped telemetry for every operation it exposes

- Every log line carries the canonical five fields

- Every operation declares its identity scope and the runtime enforces it

- Every consume and expose carries governance tags that match the org’s ruleset

- Every resilience pattern that applies has a metric exposing its state

If any of those five is absent, the capability is not done. It returned 200 OK. That is a different thing.
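The checklist can be read as a function that returns what is still missing. The Python sketch below assumes a hypothetical capability descriptor (a plain dictionary with keys such as `metrics_endpoint`, `log_fields`, and `resilience`). That layout is invented for illustration and is not the Naftiko YAML format.

```python
# The five canonical log fields named earlier in the article.
CANONICAL_FIELDS = ("capability", "namespace", "operation", "statusCode", "durationMs")


def missing_for_done(capability):
    """Return the checklist items a capability descriptor fails; empty means done.

    The descriptor layout is hypothetical, not the Naftiko spec format.
    """
    operations = capability.get("operations", [])
    checks = {
        "metrics endpoint": bool(capability.get("metrics_endpoint")),
        "canonical log fields": set(CANONICAL_FIELDS) <= set(capability.get("log_fields", [])),
        "identity scope per operation": bool(operations)
        and all(op.get("identity_scope") for op in operations),
        "governance tags": bool(capability.get("governance_tags")),
        # Only patterns that apply are listed, so an empty list passes.
        "resilience state metrics": all(
            p.get("state_metric") for p in capability.get("resilience", [])
        ),
    }
    return [name for name, ok in checks.items() if not ok]
```

An empty result means all five items hold. Anything else names the gap, which is easier to act on in a deploy gate than a bare pass or fail.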

The Naftiko Framework ships the runtime that makes those five the default. The Naftiko Framework Wiki documents the discipline. The result is a capability layer where “done” means something, and where the on-call rotation can defend the capabilities they own.

200 OK is the start of done. The four dimensions are how you finish.