As part of Naftiko Signals, we analyzed the technology investments of a global investment management firm across 773 areas, 176 services, 94 tools, and 111 standards. The overall signal score came in at 1186 — a strong number for a firm of this scale and complexity. The data story is impressive: a data score of 94, cloud at 98, security at 51, AI at 49, automation at 41. This organization has clearly invested heavily in the right foundational layers.

But four numbers tell a different story: API at 9. Data pipelines at 7. Event-driven at 7. Testing and quality at 10.

Those four gaps are the difference between having all the right pieces and having those pieces work together for AI.

The Gap That Matters Most: API Score of 9

An outside sampling puts the firm’s SaaS portfolio at roughly 176 services: a robust, multi-vendor portfolio spanning data, cloud, CRM, ITSM, ERP, observability, and more. These are best-in-class tools.

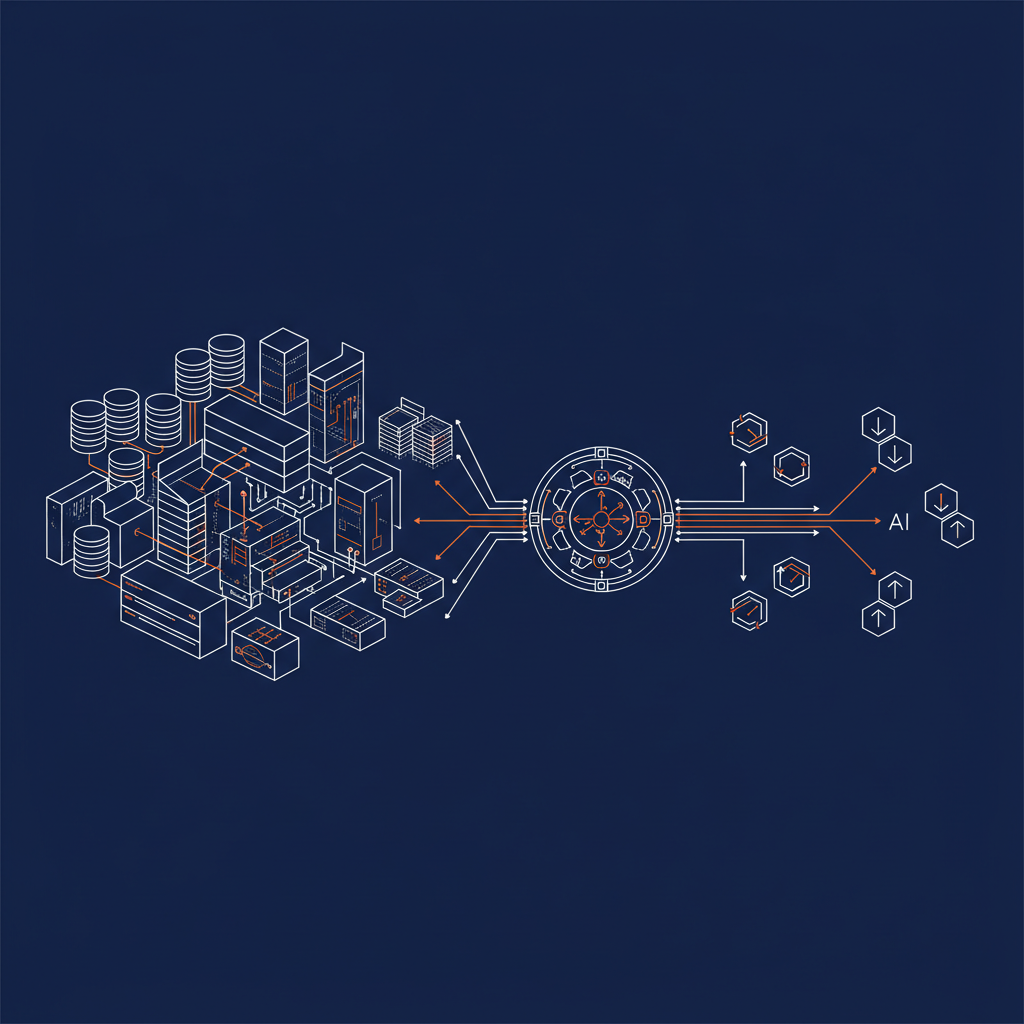

But an API score of 9 means those 176 services don’t have a unified integration layer. Each service is a silo with its own API shape, its own authentication model, its own data contract. When an AI agent needs to pull data from a data warehouse, check a CRM record, and execute an ITSM workflow in one inference cycle, it has to navigate all of that fragmentation itself — or it can’t do it at all.

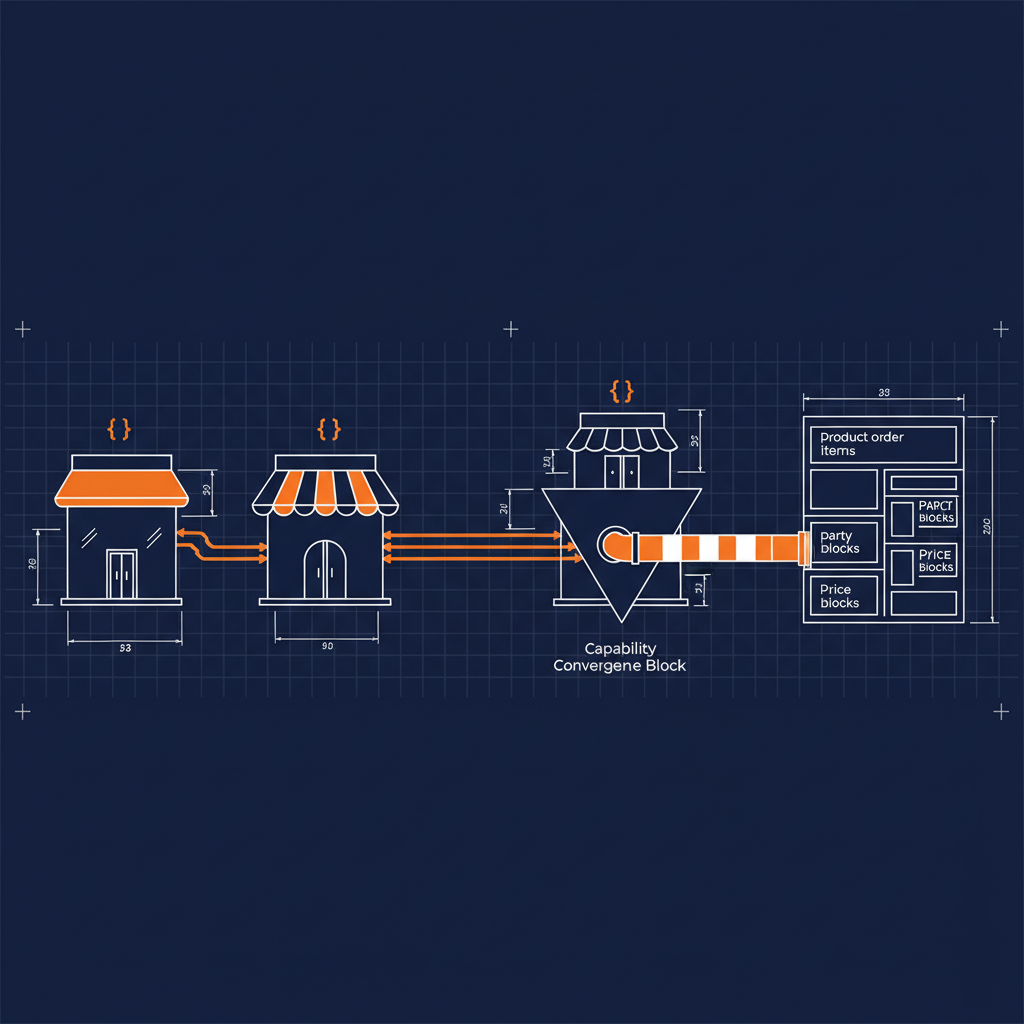

Naftiko addresses this through a capability layer that sits above existing API gateways, normalizing their outputs, handling authentication, and exposing governed tools that AI agents can discover and call through MCP (the Model Context Protocol). To be clear: Naftiko is not itself an API gateway. It’s a specification-driven layer that makes what your gateways already expose consumable by AI. The signals data shows Kong and AWS API Gateway already in this firm’s portfolio. The question is whether those gateways surface capabilities that AI agents can discover and call reliably. Right now, the signal says no.

The fix isn’t building more APIs. It’s putting that capability layer above the gateways the firm already runs, so AI agents call governed tools instead of raw service APIs. One YAML spec. One discoverable tool. The data warehouse query, the CRM lookup, the ITSM request: all surfaced consistently, all governed from a single specification.

The shared-api-gateway capability in the Naftiko Fleet does exactly this for Kong and AWS API Gateway. A single spec consumes both gateways’ management APIs and normalizes their routes and services into a unified, AI-consumable surface:

```yaml
naftiko: "1.0.0-alpha1"
info:
  label: "API Gateway (Kong + AWS)"
consumes:
  - namespace: "kong"
    type: "http"
    baseUri: "https://{{kong_admin_host}}:8001"
    authentication:
      type: "bearer"
      token: "{{kong_admin_token}}"
    resources:
      - name: "routes"
        path: "/routes"
        operations:
          - name: "list-routes"
            method: "GET"
      - name: "services"
        path: "/services"
        operations:
          - name: "list-services"
            method: "GET"
  - namespace: "aws-apigateway"
    type: "http"
    baseUri: "https://apigateway.{{aws_region}}.amazonaws.com"
    authentication:
      type: "aws-sigv4"
      region: "{{aws_region}}"
      service: "apigateway"
    resources:
      - name: "rest-apis"
        path: "/restapis"
        operations:
          - name: "list-rest-apis"
            method: "GET"
```

Two gateways. One spec. No custom integration code. That’s what closing an API score of 9 looks like in practice.
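The spec only declares what gets consumed. As a rough illustration of the normalization step that follows, this Python sketch folds the two list responses into one inventory. The payloads are simplified assumptions: Kong’s Admin API list endpoints wrap results in a `data` array, and API Gateway’s `GetRestApis` response carries an `item` array, but the exact fields used here and the unified record shape are hypothetical, not the shared-api-gateway capability’s actual output.

```python
from typing import Any, Dict, List

Record = Dict[str, Any]


def normalize_kong_routes(payload: Dict[str, Any]) -> List[Record]:
    """Map a (simplified) Kong GET /routes response into the unified shape."""
    out = []
    for route in payload.get("data", []):
        out.append({
            "gateway": "kong",
            "id": route["id"],
            "name": route.get("name") or route["id"],  # Kong routes may be unnamed
            "paths": list(route.get("paths") or []),
            "methods": list(route.get("methods") or []),
        })
    return out


def normalize_aws_rest_apis(payload: Dict[str, Any]) -> List[Record]:
    """Map a (simplified) API Gateway GET /restapis response into the same shape."""
    out = []
    for api in payload.get("item", []):
        out.append({
            "gateway": "aws-apigateway",
            "id": api["id"],
            "name": api.get("name") or api["id"],
            "paths": [],    # per-API resources need a separate GetResources call
            "methods": [],
        })
    return out


def unified_inventory(kong_payload: Dict[str, Any], aws_payload: Dict[str, Any]) -> List[Record]:
    records = normalize_kong_routes(kong_payload) + normalize_aws_rest_apis(aws_payload)
    return sorted(records, key=lambda r: (r["gateway"], r["name"]))
```

The design choice is that every record has the same keys regardless of gateway, so an agent never branches on where an API happens to be hosted.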

Data Maturity Without Pipeline Maturity

A data score of 94 is genuinely impressive. The tooling confirms it: multiple cloud data warehouses, streaming platforms, ETL tools, and columnar data formats. This is a mature data estate.

The problem is what sits next to it: a data pipeline score of 7 and an event-driven score of 7. Scores that low suggest the estate still moves data mostly in batches, and high data maturity built on batch-oriented infrastructure means AI models are working with yesterday’s data. Investment management runs on signals that are minutes or seconds old, and a batch pipeline that refreshes overnight can’t support the real-time AI inference that matters in markets.
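The freshness argument is simple arithmetic. A minimal sketch, assuming a nightly batch snapshot as of 02:00, a query at 15:30, a 60-second freshness budget for market-facing inference, and a two-hour batch job (all illustrative numbers, not figures from the Signals data):

```python
from datetime import datetime, timedelta


def staleness(as_of: datetime, now: datetime) -> timedelta:
    """Age of the newest data an inference call can see."""
    return now - as_of


def fit_for_inference(as_of: datetime, now: datetime, budget: timedelta) -> bool:
    """True if the data is young enough for the (assumed) freshness budget."""
    return staleness(as_of, now) <= budget


def worst_case_staleness(refresh_interval: timedelta, job_runtime: timedelta) -> timedelta:
    """Just before the next batch lands, data reflects a snapshot taken one
    full interval plus the job's runtime earlier."""
    return refresh_interval + job_runtime


now = datetime(2024, 3, 1, 15, 30)
nightly = datetime(2024, 3, 1, 2, 0)        # assumed batch snapshot time
streamed = datetime(2024, 3, 1, 15, 29, 55)  # assumed last streamed event
budget = timedelta(seconds=60)               # assumed freshness budget
```

With these numbers the nightly snapshot is 13.5 hours old at query time, far outside a one-minute budget, while a stream updated five seconds earlier fits comfortably. Shortening the batch interval narrows the gap but never closes it; only event-driven delivery does.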

The services are already in the portfolio: managed queuing and messaging services, workflow orchestration tools, and data integration platforms. The firm doesn’t need to buy new infrastructure. It needs to connect what it has into event-driven pipelines that stream data to where AI models can act on it.

Naftiko’s capability-driven integration model maps directly to this: a single YAML specification that consumes from an event source, transforms each event into a normalized shape, and exposes a streaming endpoint that AI services can subscribe to. With a specification layer in between, the existing data estate becomes the data pipeline.
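The consume, transform, expose flow can be sketched in plain Python with `asyncio`. This illustrates the pattern, not Naftiko’s runtime: the in-memory source, the `sym`/`px`/`t` field names, and the `StreamEndpoint` fan-out class are hypothetical stand-ins for a real queue consumer and a real subscription endpoint.

```python
import asyncio
from typing import Any, AsyncIterator, Dict, Iterable, List, Optional

Event = Dict[str, Any]


async def consume(raw_events: Iterable[Event]) -> AsyncIterator[Event]:
    """Stand-in for an event source such as a queue or topic consumer."""
    for event in raw_events:
        await asyncio.sleep(0)  # yield to the loop, as a real network consumer would
        yield event


def transform(event: Event) -> Event:
    """Map a source-specific record (hypothetical sym/px/t fields) to one normalized shape."""
    return {"symbol": event["sym"].upper(), "price": float(event["px"]), "ts": event["t"]}


class StreamEndpoint:
    """Fan-out endpoint: each subscriber gets its own queue of normalized events."""

    def __init__(self) -> None:
        self._subscribers: List[asyncio.Queue] = []

    def subscribe(self) -> asyncio.Queue:
        queue: asyncio.Queue = asyncio.Queue()
        self._subscribers.append(queue)
        return queue

    async def publish(self, event: Optional[Event]) -> None:
        for queue in self._subscribers:
            await queue.put(event)


async def run_pipeline(raw_events: Iterable[Event], endpoint: StreamEndpoint) -> None:
    async for event in consume(raw_events):
        await endpoint.publish(transform(event))
    await endpoint.publish(None)  # end-of-stream marker, only needed for this finite demo


async def collect(inbox: asyncio.Queue) -> List[Event]:
    """A subscribing AI service, reading events as they arrive."""
    received = []
    while (item := await inbox.get()) is not None:
        received.append(item)
    return received


async def demo() -> List[Event]:
    endpoint = StreamEndpoint()
    inbox = endpoint.subscribe()
    raw = [{"sym": "abc", "px": "101.5", "t": 1}, {"sym": "xyz", "px": "99", "t": 2}]
    # Subscriber and pipeline run concurrently, so events are handled as they stream.
    received, _ = await asyncio.gather(collect(inbox), run_pipeline(raw, endpoint))
    return received
```

Running `asyncio.run(demo())` yields the two events in normalized form, uppercased symbols and float prices, in arrival order.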

Automation Without Real-Time Response

An automation score of 41 is strong. Low-code automation platforms, infrastructure-as-code tools, and CI/CD pipelines show up across the portfolio. There’s real automation depth here.

But alongside an event-driven score of 7, that automation most likely runs on schedules: the stack responds to time, not to events. In financial services, the events that matter (a market move, a client request, a regulatory flag) don’t arrive on a schedule.

Event-driven architecture closes this gap. The managed messaging infrastructure is already deployed. What’s missing is the capability layer that routes events into AI-ready workflows and exposes those workflows as governed tools. Naftiko’s consumes-and-exposes pattern makes this tractable: define the event source as a consumed resource, define the downstream action as an exposed capability, ship the specification.
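A minimal Python sketch of the consumes-and-exposes idea on the event side. Handlers are registered against event types, so work starts when an event arrives rather than when a timer fires. The `EventRouter` class, the event type names, and the handler actions are hypothetical. Unmatched events go to a dead-letter list instead of being dropped silently.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[dict], dict]


class EventRouter:
    def __init__(self) -> None:
        self._routes: Dict[str, List[Handler]] = defaultdict(list)
        self.dead_letters: List[dict] = []

    def on(self, event_type: str) -> Callable[[Handler], Handler]:
        """Decorator: bind a downstream action to an event type."""
        def register(fn: Handler) -> Handler:
            self._routes[event_type].append(fn)
            return fn
        return register

    def dispatch(self, event: dict) -> List[dict]:
        # .get() on a defaultdict does not create an empty entry for unknown types.
        handlers = self._routes.get(event["type"])
        if not handlers:
            self.dead_letters.append(event)  # keep unrouted events for review
            return []
        return [handler(event) for handler in handlers]


router = EventRouter()


@router.on("market.move")
def rebalance_check(event: dict) -> dict:
    return {"action": "rebalance-check", "symbol": event["symbol"]}


@router.on("regulatory.flag")
def open_review(event: dict) -> dict:
    return {"action": "compliance-review", "ref": event["ref"]}
```

Here `client.request` is deliberately left unrouted, so dispatching one lands it in `router.dead_letters`, which is where a governance process would notice a missing capability.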

A shared-event-broker capability in the Naftiko Fleet could provide a common pattern for Kafka-based messaging: listing topics, querying schemas, and retrieving consumer groups through a single normalized spec that any AI workflow can subscribe to. The same pattern applies to the existing queue and notification services: point the consumes namespace at the existing message infrastructure, define the output shape, and expose it as a governed MCP tool. The automation layer then responds to events instead of clocks.
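As an illustration of what a single normalized broker view could return, this Python sketch joins three assumed inputs (topic metadata, schema registry subjects, and consumer group assignments) into one record per topic. The input shapes and output fields are hypothetical. The only convention borrowed from practice is the `<topic>-value` subject naming used by Confluent Schema Registry’s default subject name strategy.

```python
from typing import Any, Dict, List, Optional


def broker_view(
    topics: Dict[str, Dict[str, int]],  # topic -> metadata (assumed shape)
    subjects: Dict[str, List[int]],     # schema subject -> registered versions
    groups: Dict[str, List[str]],       # consumer group -> subscribed topics
) -> List[Dict[str, Any]]:
    view = []
    for topic in sorted(topics):
        versions = subjects.get(f"{topic}-value", [])
        latest: Optional[int] = max(versions) if versions else None
        view.append({
            "topic": topic,
            "partitions": topics[topic]["partitions"],
            "value_schema_version": latest,  # None means no registered value schema
            "consumer_groups": sorted(g for g, subscribed in groups.items() if topic in subscribed),
        })
    return view


topics = {"orders": {"partitions": 6}, "prices": {"partitions": 12}, "audit": {"partitions": 1}}
subjects = {"orders-value": [1, 2, 3], "prices-value": [1]}
groups = {"risk-engine": ["prices"], "settlement": ["orders", "prices"]}
```

One call answers the three questions an AI workflow asks before subscribing: what exists, what shape it has, and who else is already consuming it.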

AI Investment Without AI-Grade Testing

An AI score of 49 reflects genuine investment. Managed ML platforms, LLM providers including Anthropic, and cloud-native machine learning services are active in the estate.

A testing and quality score of 10 against an AI score of 49 is a reliability gap waiting to become an incident. AI models that ship without validation pipelines, bias testing, or integration testing for their API endpoints are models that will fail in production in ways that are difficult to diagnose and expensive to remediate. In a regulated financial services environment, that’s not an acceptable risk profile.

The services are already in place to address this. Enterprise observability platforms — APM, log management, error tracking — are deployed across the estate. The observability infrastructure exists. What’s needed is a capability layer that makes AI service endpoints first-class observable objects — with typed interfaces, defined output contracts, and structured validation that monitoring tools can attach to.

Naftiko’s context engineering approach delivers exactly this: output projections define the contract for every AI-facing endpoint. The projection is the test specification. If the response doesn’t match the projection, the capability fails before the model ever sees the output.
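A minimal Python sketch of an output projection used as a contract check, assuming a projection is just a mapping from field name to expected type. Naftiko’s actual projection syntax is not shown here; `project`, `ProjectionError`, and the client-record fields are hypothetical. The response is validated before anything is passed on, and fields outside the projection are dropped.

```python
from typing import Any, Dict


class ProjectionError(ValueError):
    """Raised when an upstream response does not satisfy the projection."""


def project(response: Dict[str, Any], projection: Dict[str, type]) -> Dict[str, Any]:
    missing = [k for k in projection if k not in response]
    if missing:
        raise ProjectionError(f"missing fields: {missing}")
    # Note: isinstance(True, int) is True in Python, so bool passes an int check.
    wrong = [k for k, expected in projection.items() if not isinstance(response[k], expected)]
    if wrong:
        raise ProjectionError(f"wrong types: {wrong}")
    # Only projected fields pass through; anything else never reaches the model.
    return {k: response[k] for k in projection}


# Hypothetical contract for a client-record endpoint.
PROJECTION = {"client_id": str, "aum_usd": float, "risk_rating": str}
```

Because failures raise a typed error with the offending field names, an observability tool can count and alert on `ProjectionError` the same way it tracks any other error class.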

The Readiness Assessment Gap

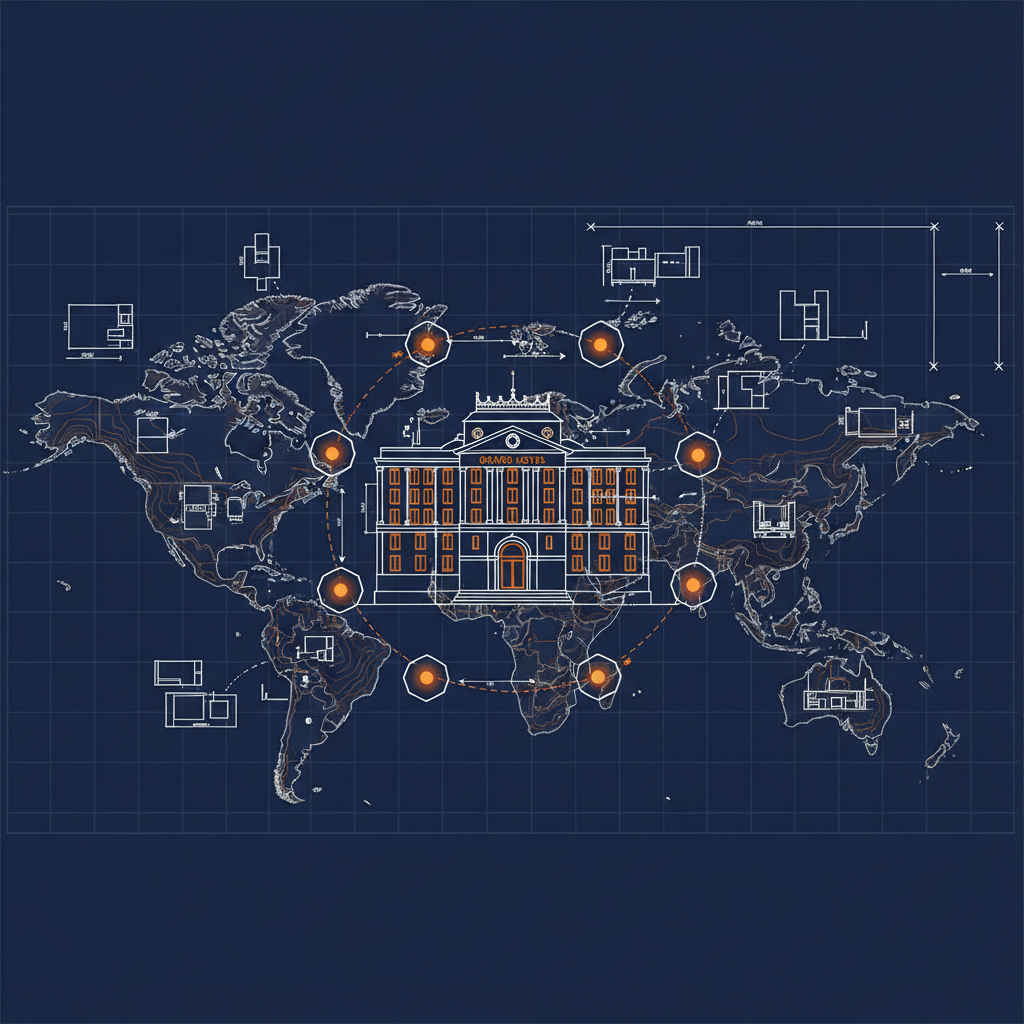

A domain specialization score of 0 with a total signal score of 1186 is the most interesting finding in this data. The firm is one of the most sophisticated technology investors in financial services. But the signals show no structured assessment of AI readiness at the domain level — no mapping of which business domains are ready for AI augmentation, which need data infrastructure investment first, and which face regulatory constraints that require specific governance patterns.

This isn’t a criticism. Most enterprises at this scale have the same gap. The investment in technology has outpaced the investment in structured readiness methodology.

The Naftiko Signals framework provides this structure. The same methodology that produced this impact report — analyzing investments across areas, services, tools, and standards against a readiness model — can be applied at the business domain level to produce a prioritized AI readiness roadmap. Which business unit’s data is clean enough to act on? Which service dependencies block AI deployment in specific workflows? Where does the regulatory posture create constraints that affect which capabilities can be exposed?

Those questions don’t have easy answers, but they have structured ones — and structured answers produce prioritized investment decisions rather than broad AI programs that spread resources across every opportunity simultaneously.
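The domain-level questions above can be turned into a simple, explicit classification. This Python sketch is hypothetical throughout: the three signals per domain, the 0.8 data-quality threshold, the domain names, and the rule that a regulatory constraint outranks infrastructure gaps are assumptions chosen to show the shape of a prioritized roadmap, not the Signals methodology itself.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class DomainSignals:
    name: str
    data_quality: float          # 0..1, hypothetical cleanliness metric
    blocking_dependencies: int   # service dependencies not yet exposed as capabilities
    regulatory_constraint: bool  # needs specific governance before exposure


def classify(d: DomainSignals, quality_threshold: float = 0.8) -> str:
    if d.regulatory_constraint:
        return "constrained"  # assumed rule: governance gaps outrank everything else
    if d.data_quality < quality_threshold or d.blocking_dependencies > 0:
        return "needs-infrastructure"
    return "ready"


def roadmap(domains: List[DomainSignals]) -> List[Tuple[str, str]]:
    """Order domains so AI-ready work comes first, then infrastructure, then constrained."""
    order = {"ready": 0, "needs-infrastructure": 1, "constrained": 2}
    pairs = [(d.name, classify(d)) for d in domains]
    return sorted(pairs, key=lambda p: (order[p[1]], p[0]))


domains = [
    DomainSignals("trade-surveillance", 0.95, 0, True),
    DomainSignals("portfolio-analytics", 0.60, 2, False),
    DomainSignals("client-reporting", 0.90, 0, False),
]
```

The value is less in the thresholds than in making the rules explicit, so a prioritization decision can be traced back to the signal that drove it.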

What Navigation Looks Like From Here

The signal data points to a clear sequence:

First: Deploy a governed capability layer above the existing API gateway investments. Normalize the 176-service portfolio into discoverable, AI-consumable capabilities. This unblocks every downstream AI initiative because every AI agent now has a reliable way to call the services it needs.

Second: Convert the data estate’s batch pipelines into event-driven streams. The managed messaging and workflow infrastructure is already there. Connect it with capability specifications that route data to where AI inference can act on it in real time.

Third: Extend event-driven connectivity into the automation layer. When automation responds to events rather than schedules, the automation portfolio becomes reactive to what’s actually happening in the business.

Fourth: Put output projections on AI service endpoints. Every AI-facing capability gets a defined output contract, the existing observability tools attach to those contracts, and testing becomes continuous rather than pre-deployment.

Fifth: Map the readiness picture at the domain level. Use the Signals framework to identify which business domains are AI-ready today, which need infrastructure investment, and which face constraints that need to be resolved before AI deployment makes sense.

The estate is strong. The score of 1186 across 773 areas reflects serious, sustained investment. The four gaps (API at 9, data pipelines at 7, event-driven at 7, testing and quality at 10) are not signs of a company behind on AI. They’re signs of a company with the right foundation that hasn’t yet put in place the capability layer that connects that foundation to AI outcomes.

That’s the work Naftiko is built to do.

This analysis is drawn from Naftiko Signals — our methodology for assessing enterprise technology investment against AI readiness. Learn more at signals.naftiko.io.