I am walking back through the Naftiko Framework use cases in order, one post at a time, because the more copilot and agent projects I see inside real companies, the more I notice the same failure mode. Every team connecting an LLM to an internal system ends up writing glue code. A bespoke tool wrapper. A one-off adapter. A file named copilot_helpers.py that nobody else can reuse and that nobody is governing.

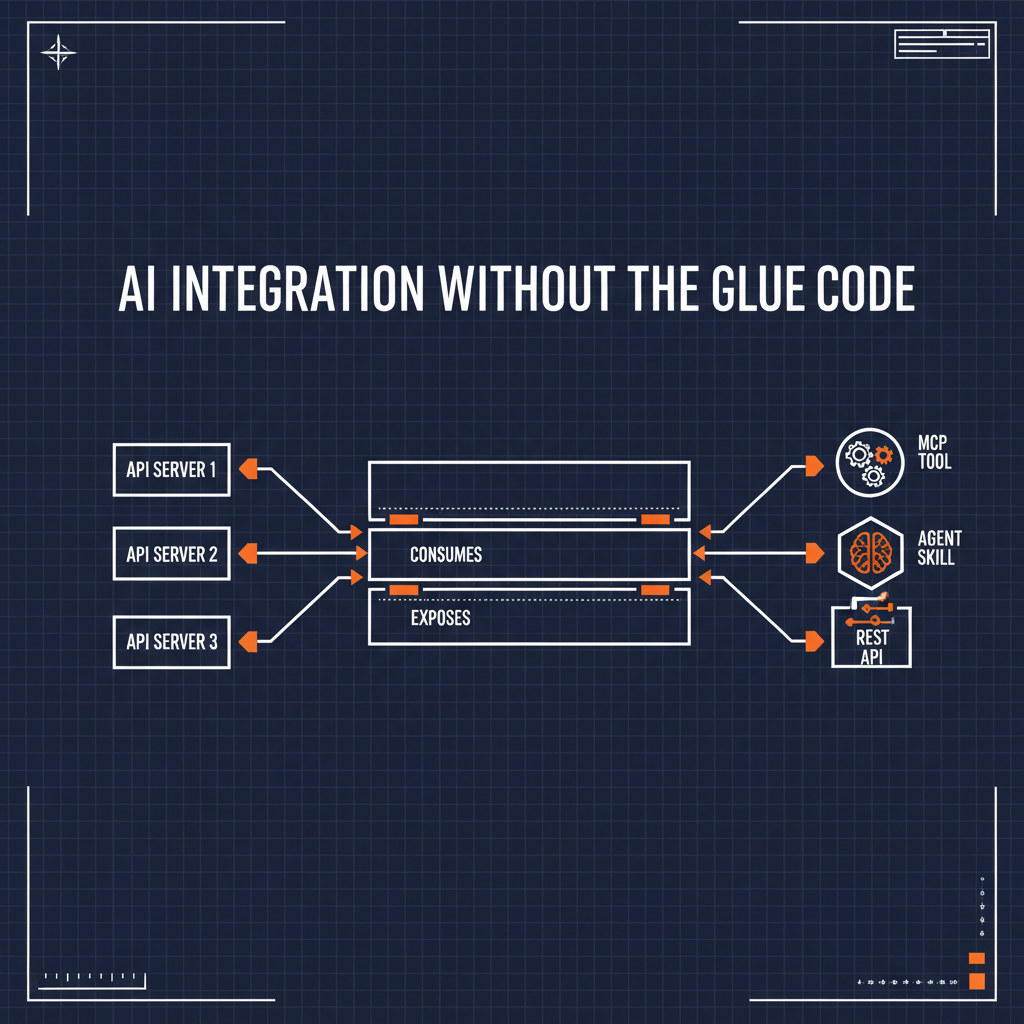

That is the problem the first use case — AI Integration — is about. Connect AI assistants to your systems through capabilities, so they can access trusted business data and actions without custom glue code.

The shape of the glue-code problem

Let me describe what I keep seeing in practice.

A team wants Claude, or Copilot, or their homegrown agent framework, to do something useful inside an existing product — read open orders, look up a customer, file a support ticket, pull an invoice. The system already has an API for it. Usually a REST API. Sometimes SOAP. Sometimes an internal RPC thing that was built in 2017 and nobody has touched since.

So an engineer writes a function that calls that API. Then wraps the function as an MCP tool. Or as an OpenAI function-calling tool. Or as an agent skill. Sometimes all three, in three different repos, with three different code styles and three different auth patterns. Each one is a bespoke adapter.

Multiply that across dozens of systems and you have the exact thing APIs were supposed to fix — sprawl — reappearing one layer up, inside the AI tooling.

What the capability spec actually does

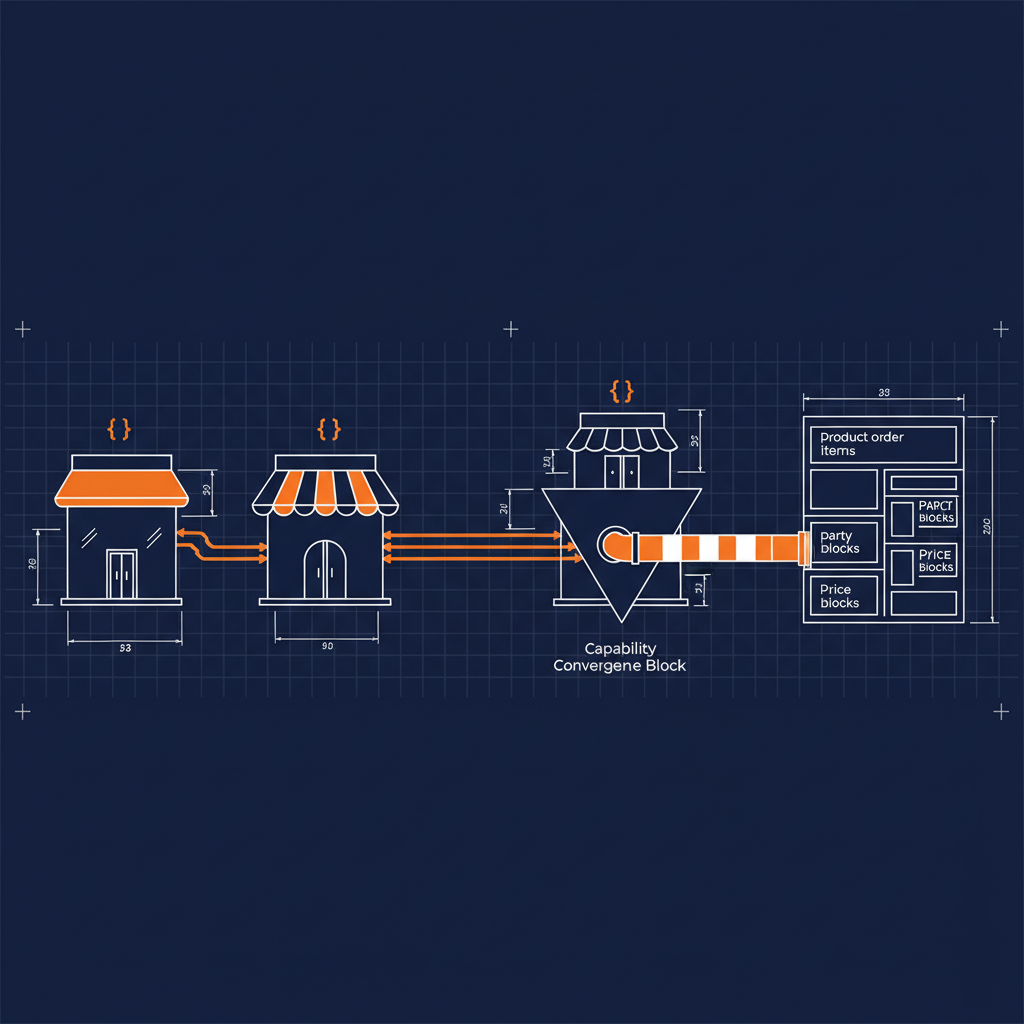

The Naftiko capability spec flips this. Instead of writing AI tooling code per system, you describe the system and the exposure surface in a single YAML file. The Naftiko Engine does the adapter work.

Two blocks carry the weight. `consumes` describes the upstream HTTP API: `baseUri`, auth, resources, operations. `exposes` describes how that same domain is presented outward: as MCP tools, as Agent Skills, as REST resources, or all three from one declaration.

Here is the shape:

```yaml
naftiko: "1.0.0-alpha1"
info:
  title: Customer Orders
  description: "Read customer orders from the billing platform — exposed to copilots and REST clients"
capability:
  consumes:
    - namespace: billing
      type: http
      baseUri: "https://api.billing.internal"
      authentication:
        type: bearer
        token: "{{BILLING_API_TOKEN}}"
      resources:
        - name: orders
          path: "/v1/orders"
          operations:
            - name: list-open-orders
              method: GET
              inputParameters:
                - name: customerId
                  in: query
                  type: string
                  required: true
  exposes:
    - type: mcp
      namespace: customer-orders
      tools:
        - name: list-open-orders
          description: "List open orders for a given customer"
          hints:
            readOnly: true
            idempotent: true
          call: billing.list-open-orders
          inputParameters:
            - name: customerId
              type: string
              required: true
              mapping: "queryParameters.customerId"
          outputParameters:
            - type: array
              mapping: "$.data.orders"
              items:
                type: object
                properties:
                  - name: orderId
                    type: string
                    mapping: "$.id"
                  - name: amount
                    type: number
                    mapping: "$.totalAmount"
                  - name: status
                    type: string
                    mapping: "$.status"
    - type: rest
      basePath: "/api/orders"
      endpoints:
        - method: GET
          path: "/open"
          description: "List open orders for a given customer"
          call: billing.list-open-orders
```

That is one file. No Python, no TypeScript, no bespoke adapter. It describes the upstream contract and the exposed contract and the reshaping that happens between them.
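To make the reshaping step concrete, here is a minimal Python sketch of what the output mapping in the spec describes. This is not the Naftiko Engine's implementation. The upstream payload, the `shape_orders` function, and the tiny dotted-path resolver are all assumptions made for illustration, and the resolver handles only plain paths like `$.data.orders`, not full JSONPath.

```python
from typing import Any, Dict, List

# Hypothetical upstream response. The envelope shape is assumed from the
# "$.data.orders" mapping in the spec; the extra fields are invented noise.
UPSTREAM_RESPONSE: Dict[str, Any] = {
    "data": {
        "orders": [
            {"id": "ord-1", "totalAmount": 120.5, "status": "open", "internalFlags": ["x"]},
            {"id": "ord-2", "totalAmount": 42.0, "status": "open", "internalFlags": []},
        ]
    },
    "meta": {"requestId": "abc", "page": 1},
}

# Output property name -> field name on each upstream order item,
# mirroring orderId <- $.id, amount <- $.totalAmount, status <- $.status.
FIELD_MAPPING = {"orderId": "id", "amount": "totalAmount", "status": "status"}


def resolve_path(document: Any, path: str) -> Any:
    """Walk a plain dotted path such as '$.data.orders' (no filters, no wildcards)."""
    if path.startswith("$."):
        path = path[2:]
    node = document
    for key in path.split("."):
        node = node[key]
    return node


def shape_orders(response: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Keep only the mapped fields from each order, under their exposed names."""
    orders = resolve_path(response, "$.data.orders")
    return [
        {exposed: order[source] for exposed, source in FIELD_MAPPING.items()}
        for order in orders
    ]


if __name__ == "__main__":
    print(shape_orders(UPSTREAM_RESPONSE))
```

The envelope (`meta`) and the unmapped `internalFlags` field never reach the caller, which is the point of declaring the output shape rather than passing the provider payload through.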

Three dimensions, one spec

The thing that interests me about this pattern is how it maps onto the three-dimensional lens I use for every API topic — technology, business, and politics.

Technology. The capability is declarative. Auth lives in one place. The output shape is explicit, typed, and JSONPath-mapped, so the model receives clean agent-task-shaped data instead of raw provider envelopes. Tokens drop. Accuracy climbs. The MCP surface and the REST surface are two different listeners on the same underlying integration — not two different codebases.
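The "two listeners, one integration" idea can be pictured with a small Python sketch. Everything here is hypothetical: `list_open_orders` stands in for the single upstream integration with canned data, and the two dispatch tables only imitate a tool-style surface and a REST-style surface. It says nothing about how the Naftiko Engine is actually built.

```python
from typing import Any, Callable, Dict, List, Tuple

Order = Dict[str, Any]


def list_open_orders(customer_id: str) -> List[Order]:
    """Stand-in for the one underlying integration (canned, hypothetical data)."""
    orders_by_customer = {
        "cust-1": [{"orderId": "ord-1", "amount": 120.5, "status": "open"}],
    }
    return orders_by_customer.get(customer_id, [])


# Surface 1: tool-style dispatch, keyed by tool name, called with named arguments.
TOOLS: Dict[str, Callable[[Dict[str, str]], List[Order]]] = {
    "list-open-orders": lambda args: list_open_orders(args["customerId"]),
}

# Surface 2: REST-style dispatch, keyed by (method, path), called with query parameters.
ROUTES: Dict[Tuple[str, str], Callable[[Dict[str, str]], List[Order]]] = {
    ("GET", "/api/orders/open"): lambda query: list_open_orders(query["customerId"]),
}


def call_tool(name: str, arguments: Dict[str, str]) -> List[Order]:
    """Entry point a copilot-facing listener would use."""
    return TOOLS[name](arguments)


def handle_request(method: str, path: str, query: Dict[str, str]) -> List[Order]:
    """Entry point an HTTP-facing listener would use."""
    return ROUTES[(method, path)](query)
```

Both entry points end in the same function, so auth, error handling, and output shape are decided once instead of once per surface.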

Business. The integration lives in Git as a file, which means it can be reviewed, linted, versioned, and audited. That is what “governed capability” actually means in practice. A platform team can own the capability layer. Product teams can consume it. A security review happens once per capability, not once per tool in every agent repo.

Politics. This is where it gets interesting, and where I think most AI integration stories quietly fail. When the AI tooling is scattered across fifteen bespoke wrappers, the platform team has no surface to govern. Every product team gets to decide its own auth pattern, its own error handling, its own tool naming. The capability spec puts the integration surface back in the hands of the people who are accountable for it — which is usually not the team who just got excited about MCP last week.

What the framework gives you for free

The features I pulled from the wiki for this use case are the ones that matter most in real AI integration work:

- Declarative HTTP consumption with namespace-scoped adapters — bearer, API key, basic, digest auth all built in

- Templated baseUri and path parameters — one capability can serve multiple environments

- Polyglot exposure from one capability — Agent Skills, MCP tools, and REST resources side-by-side

- Output shaping with typed parameters and JSONPath mapping — you pick what the model sees, not the upstream API

- Nested object and array output parameters — the model never chews through a `$.data.results[0].envelope.payload` tree

- `const` values for computed fields — inject a constant or tag into the output without an upstream call

- Externalized secrets via `binds` — file, vault, or environment, never in the spec itself

Those features are what let a capability replace a folder full of glue functions.
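As a rough illustration of the templating and externalized-secrets items, here is a Python sketch that fills `{{NAME}}` placeholders from a mapping such as the process environment. It is an assumption-laden sketch, not how `binds` works: the `resolve_placeholders` and `resolve_from_environment` functions and the exact placeholder grammar are invented for this example.

```python
import os
import re
from typing import Mapping

# Matches {{NAME}} with optional inner whitespace. The grammar is an assumption
# for this sketch, not taken from the Naftiko specification.
PLACEHOLDER = re.compile(r"\{\{\s*([A-Za-z_][A-Za-z0-9_]*)\s*\}\}")


def resolve_placeholders(template: str, values: Mapping[str, str]) -> str:
    """Replace every {{NAME}} in template with values[NAME]; fail loudly if unbound."""

    def substitute(match: "re.Match[str]") -> str:
        name = match.group(1)
        if name not in values:
            raise KeyError(f"no value bound for placeholder {name!r}")
        return values[name]

    return PLACEHOLDER.sub(substitute, template)


def resolve_from_environment(template: str) -> str:
    """Convenience wrapper that reads values from environment variables."""
    return resolve_placeholders(template, os.environ)
```

Calling `resolve_placeholders("Bearer {{BILLING_API_TOKEN}}", {"BILLING_API_TOKEN": "s3cret"})` returns `"Bearer s3cret"`, while an unbound name raises `KeyError` instead of silently sending an empty credential. The spec file itself only ever contains the placeholder.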

Where I am using this right now

Every Naftiko capability I have written in the last month — partner capabilities, the internal manage-feeds capability, SAP CPI wrappers, sandboxes — follows this same shape. Consumes block on top. Exposes block below. Output parameters reshaping upstream payloads into something a model can actually use.

The interesting part is not the technology. The interesting part is that once you have a capability spec, the conversation with the security reviewer, the platform team, and the product owner changes. It is no longer “we wrote some glue code, please approve.” It is “here is the contract — what should we change?”

That is how I want AI integration to feel.

Next

The next post in this series is on Rightsize AI context. Same three-dimensional lens: what it looks like technically, what it changes for the business, and what it lets a platform team do that they could not do before.

- Wiki: Guide — Use Cases

- GitHub: github.com/naftiko/framework

- Fleet Community Edition: github.com/naftiko/fleet

I will keep walking the list.