Every enterprise platform team has a version of the same three problems.

Nobody can find the APIs. They are scattered across a GitHub estate that grew faster than any catalog, an AWS API Gateway account someone configured two reorgs ago, and a developer portal that has not been updated in eighteen months. Asking “what APIs do we have?” produces a different answer depending on who you ask.

Nobody can prove which APIs are reused. Reusability is the word every API platform pitch deck used in the last ten years, and almost none of those programs ever produced a real number for it. The data exists — every gateway and APM stack records it — but the report nobody asked for in 2018 is the report nobody runs in 2026.

Nobody can run governance against a runnable artifact. Reviews happen against slide decks or wiki pages that drift the moment they are saved. The reviewer signs off on the description; the runtime does whatever the code happens to do.

Meanwhile, leadership has been asking for an AI story for six quarters. The platform team usually answers with a Slack bot or an internal copilot demo. Neither closes the loop with the existing API platform — and so the AI program and the API program continue to be two separate budgets, each underfunded, each unable to make the other’s case.

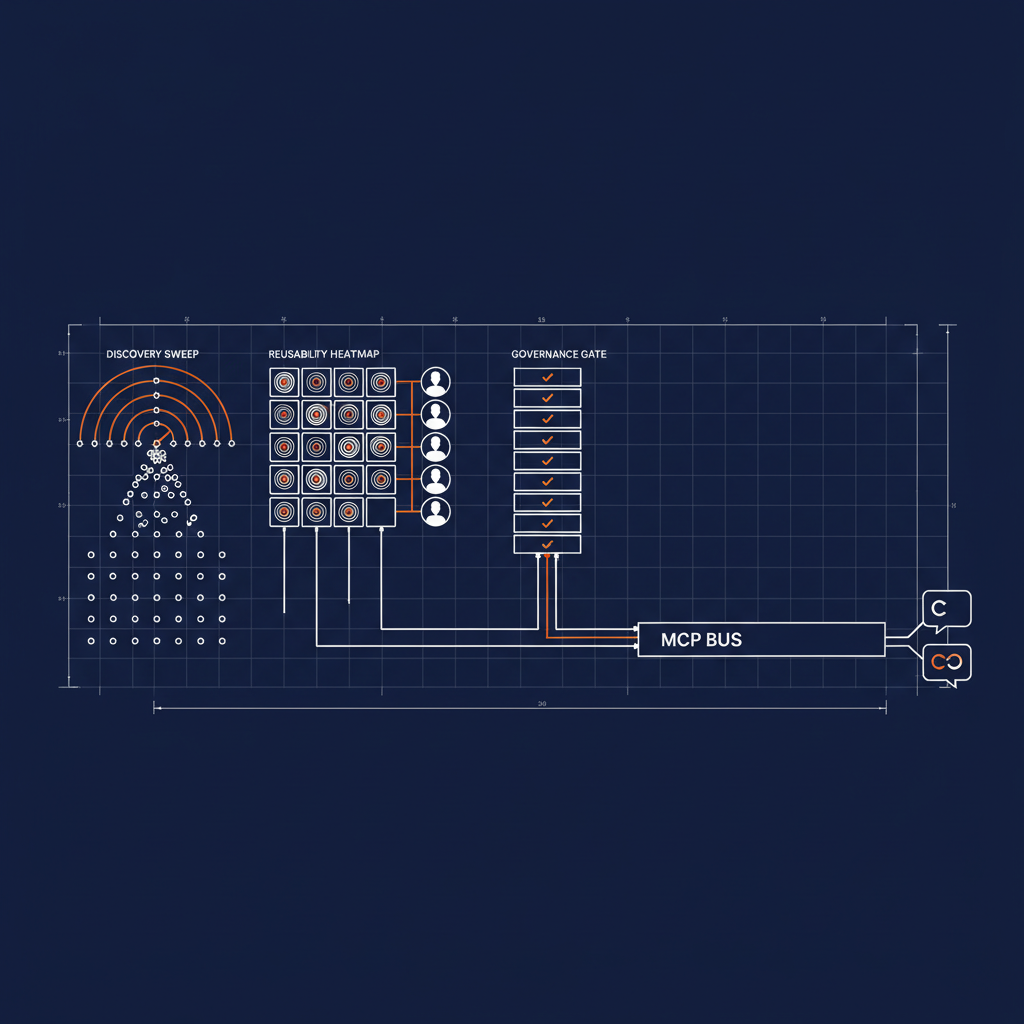

The fix is to stop treating them as separate things. The same capability layer that answers the API discovery, reusability, and governance questions is the layer that grounds the AI surface. Three capabilities, one runtime, one MCP namespace developers reach from inside the IDE.

Capability one — Discovery across every source where a spec might live

The first capability crawls every place an OpenAPI spec might live: every GitHub org and repo in the estate, every API gateway account, every internal portal that publishes machine-readable artifacts. It normalizes what it finds — a YAML in a repo, an export from a gateway, a JSON dropped in a CMS — and exposes the unified result as a single deterministic find-specs operation.

That operation answers the “what APIs do we have?” question the same way every time. Same input, same output. Same audit trail of where each spec came from, when it was last touched, and whether the gateway has it registered.

It is not a portal you have to maintain. It is a runtime capability that re-derives the answer on every call. The catalog is no longer a thing the platform team curates by hand and watches drift. It is a query.

naftiko: "1.0.0-alpha2"
info:
  title: API Spec Aggregation
  description: >-
    Crawls every place an OpenAPI spec might live — GitHub Search across the
    enterprise GitHub estate, plus AWS API Gateway — and exposes the unified
    result as REST + MCP + Agent Skills so any developer or agent can discover
    specs across the whole estate from one tool call.
  tags:
    - API Discovery
    - OpenAPI
    - GitHub
    - AWS API Gateway
binds:
  - namespace: github-env
    keys: { GITHUB_TOKEN: GITHUB_TOKEN }
  - namespace: aws-env
    keys:
      AWS_ACCESS_KEY_ID: AWS_ACCESS_KEY_ID
      AWS_SECRET_ACCESS_KEY: AWS_SECRET_ACCESS_KEY
      AWS_REGION: AWS_REGION
capability:
  consumes:
    - namespace: github
      type: http
      baseUri: "https://api.github.com"
      authentication: { type: bearer, token: "" }
      resources:
        - name: search-code
          path: "/search/code"
          operations:
            - name: search-openapi-specs
              method: GET
              inputParameters:
                - { name: q, in: query }
    - namespace: apigateway
      type: http
      baseUri: "https://apigateway..amazonaws.com"
      authentication:
        type: aws-sig-v4
        accessKeyId: ""
        secretKey: ""
        region: ""
        service: apigateway
      resources:
        - name: rest-apis
          path: "/restapis"
          operations:
            - { name: list-rest-apis, method: GET }
        - name: rest-api-export
          path: "/restapis//stages//exports/oas30"
          operations:
            - name: export-rest-api
              method: GET
              inputParameters:
                - { name: rest_api_id, in: path }
                - { name: stage_name, in: path }
  exposes:
    - type: rest
      port: 8080
      namespace: spec-aggregation-rest
      resources:
        - name: specs
          path: "/find-specs"
          operations:
            - method: GET
              name: find-specs
              inputParameters:
                - { name: org, in: query, type: string }
                - { name: source, in: query, type: string }
                - { name: query, in: query, type: string }
    - type: mcp
      port: 3010
      namespace: spec-aggregation-mcp
      tools:
        - name: find-specs
          description: "Discover OpenAPI specs across GitHub orgs and AWS API Gateway. Returns spec_url, source, repo, last_updated, and gateway_registered for each match."
          hints: { readOnly: true }
    - type: skill
      port: 3011
      namespace: spec-aggregation-skills
      skills:
        - name: spec-discovery
          description: "Discover OpenAPI specs across the whole estate."
          allowed-tools: "find-specs"
          tools:
            - name: find-specs
              from: { sourceNamespace: spec-aggregation-mcp, action: find-specs }
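
The normalization and "same input, same output" behavior described for find-specs can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the Naftiko runtime: `SpecRecord` and `merge_specs` are hypothetical names, the fields are borrowed from the find-specs tool description, and two records are assumed to describe the same spec when they share a `spec_url`.

```python
from dataclasses import dataclass, replace
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class SpecRecord:
    spec_url: str
    source: str               # e.g. "github" or "aws-api-gateway" (labels assumed)
    repo: str
    last_updated: str         # assumed ISO 8601 in UTC, so string order is time order
    gateway_registered: bool


def merge_specs(*sources: Iterable[SpecRecord]) -> List[SpecRecord]:
    """Merge per-source crawl results into one de-duplicated, ordered list."""
    by_url: Dict[str, SpecRecord] = {}
    for records in sources:
        for rec in records:
            seen = by_url.get(rec.spec_url)
            if seen is None:
                by_url[rec.spec_url] = rec
                continue
            # Keep the most recently touched copy; ties are broken on stable fields.
            newest = max(seen, rec, key=lambda r: (r.last_updated, r.source, r.repo))
            # A spec counts as registered if any copy says the gateway has it.
            by_url[rec.spec_url] = replace(
                newest,
                gateway_registered=seen.gateway_registered or rec.gateway_registered,
            )
    # Sorting on a fixed key makes the result independent of crawl order.
    return sorted(by_url.values(), key=lambda r: (r.source, r.repo, r.spec_url))
```

The fixed sort key is the design choice that matters here: whichever crawler finishes first, the caller sees the same list in the same order.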

Capability two — Reusability scored from telemetry, not asserted from intent

The second capability does the thing every platform team has been meaning to do for ten years: it consumes API-call telemetry from Splunk, New Relic, and Datadog, and produces a deterministic reusability summary.

Which endpoints have more than one consumer. Which consumers are calling shadow instances of an API another team already runs. Which endpoints have zero traffic and are candidates for retirement. Which teams are duplicating work because nobody connected them to the team that already shipped it.

The signal exists in the observability stack. The capability is the deterministic layer that reads it, shapes it, and reports the resulting summary back to the same observability stack — so the reusability number lands in the dashboard the platform team already watches every morning, instead of in a quarterly slide deck nobody trusts.

The output is the artifact governance has been asking for. Not “are we reusing APIs?” but “here are the eleven endpoints that account for sixty percent of redundant traffic, ranked by team.”
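
The report-back step could look roughly like the sketch below, which only builds the request body and sends nothing. It assumes the Datadog v2 metrics endpoint (`POST /api/v2/series`) and its payload shape, which should be checked against the Datadog API reference; the metric name `api.reusability.redundant_calls` and the `team` tag are hypothetical.

```python
import json
import time
from typing import Optional

# Assumed type code for a gauge in the v2 series payload; verify against Datadog's docs.
GAUGE = 3


def build_reusability_series(team: str, redundant_calls: int,
                             now: Optional[int] = None) -> dict:
    """Build the JSON body for one reusability data point."""
    timestamp = int(time.time()) if now is None else now
    return {
        "series": [
            {
                "metric": "api.reusability.redundant_calls",  # hypothetical metric name
                "type": GAUGE,
                "points": [{"timestamp": timestamp, "value": float(redundant_calls)}],
                "tags": [f"team:{team}"],
            }
        ]
    }


if __name__ == "__main__":
    print(json.dumps(build_reusability_series("payments", 120), indent=2))
```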

naftiko: "1.0.0-alpha2"
info:
  title: API Reusability Summary
  description: >-
    Consumes API-call telemetry from Splunk, New Relic, and Datadog and
    produces a deterministic API-reusability summary — endpoints with multiple
    consumers, shadow-instance duplication, zero-traffic candidates for
    retirement — and reports the summary back to the same observability stack.
  tags:
    - API Reusability
    - Splunk
    - New Relic
    - Datadog
binds:
  - namespace: splunk-env
    keys: { SPLUNK_TOKEN: SPLUNK_TOKEN, SPLUNK_HOST: SPLUNK_HOST }
  - namespace: newrelic-env
    keys: { NEWRELIC_API_KEY: NEWRELIC_API_KEY, NEWRELIC_ACCOUNT_ID: NEWRELIC_ACCOUNT_ID }
  - namespace: datadog-env
    keys: { DD_API_KEY: DD_API_KEY, DD_APP_KEY: DD_APP_KEY }
capability:
  consumes:
    - namespace: splunk
      type: http
      baseUri: "https:///services"
      authentication: { type: bearer, token: "" }
      resources:
        - name: search
          path: "/search/jobs/export"
          operations:
            - name: search-api-calls
              method: POST
              # The Splunk REST API expects the query to start with an explicit
              # `search` command, hence `search=search ...`.
              body: |
                search=search index=apigw earliest=-7d | stats count by api_name, consumer_team, endpoint
    - namespace: newrelic
      type: http
      baseUri: "https://api.newrelic.com/graphql"
      authentication: { type: api-key, header: "API-Key", value: "" }
      resources:
        - name: nrql
          path: ""
          operations:
            - { name: query-traffic, method: POST }
    - namespace: datadog
      type: http
      baseUri: "https://api.datadoghq.com/api/v2"
      authentication:
        type: headers
        headers: { "DD-API-KEY": "", "DD-APPLICATION-KEY": "" }
      resources:
        - name: logs
          path: "/logs/events/search"
          operations:
            - { name: search-logs, method: POST }
        # Reporting back: post the reusability summary as a custom metric
        # so the same dashboard the platform team already watches picks it up.
        - name: metrics
          path: "/series"
          operations:
            - { name: post-reusability-metric, method: POST }
  exposes:
    - type: rest
      port: 8080
      namespace: reusability-rest
      resources:
        - name: summary
          path: "/reusability-summary"
          operations:
            - method: GET
              name: get-reusability-summary
              inputParameters:
                - { name: window, in: query, type: string } # e.g. "7d"
                - { name: team, in: query, type: string }
    - type: mcp
      port: 3010
      namespace: reusability-mcp
      tools:
        - name: reusability-summary
          description: "Return endpoints ranked by redundant traffic, with consumer teams and shadow-instance flags. Same input → same output."
          hints: { readOnly: true }
        - name: report-reusability-to-observability
          description: "Post the latest reusability summary back to Splunk + New Relic + Datadog as a custom metric the platform dashboard already watches."
          hints: { readOnly: false, idempotent: true }
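
To make the summary concrete, here is a minimal sketch of how rows shaped like the output of the Splunk query (`count` grouped by `api_name`, `consumer_team`, `endpoint`) might be reduced to the two simplest signals: endpoints with more than one consumer, and endpoints with no traffic. The `summarize` function and the `inventory` set of known endpoints are hypothetical, and shadow-instance detection is left out because the source does not say how it is defined.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple

Endpoint = Tuple[str, str]  # (api_name, endpoint)


def summarize(rows: Iterable[dict], inventory: Set[Endpoint]) -> Dict[str, list]:
    """Reduce telemetry rows to shared endpoints and zero-traffic candidates."""
    consumers: Dict[Endpoint, Set[str]] = defaultdict(set)
    calls: Dict[Endpoint, int] = defaultdict(int)
    for row in rows:
        key = (row["api_name"], row["endpoint"])
        count = int(row["count"])  # exported counts often arrive as strings
        if count > 0:
            consumers[key].add(row["consumer_team"])
            calls[key] += count

    shared = [
        {"api_name": key[0], "endpoint": key[1],
         "consumers": sorted(teams), "calls": calls[key]}
        for key, teams in consumers.items()
        if len(teams) > 1
    ]
    # Rank by traffic, then by name, so equal inputs always give equal output.
    shared.sort(key=lambda e: (-e["calls"], e["api_name"], e["endpoint"]))

    # Anything in the inventory that never appeared in telemetry is a retirement candidate.
    zero_traffic = sorted(inventory - set(calls))
    return {"shared": shared, "zero_traffic": zero_traffic}
```

The inventory would plausibly come from the discovery capability, which is one reason the two capabilities belong in the same runtime.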

Capability three — Governance against a runnable spec, fed back into the platform

The third capability closes the loop. It pulls specs from every gateway in the estate, normalizes them, applies the governance ruleset against the runnable artifact, and republishes the enriched, governance-checked specs back to the developer portal and into an MCP surface that developers consume from inside the IDE.

The portal is no longer the thing developers ignore. The portal is the thing the runtime keeps current.

The MCP surface is what makes the loop matter to the AI program. A developer in Claude or Copilot asks “is there already an API that does X?” — and the answer is grounded in the live spec inventory, the live reusability data, and the live governance posture, because all three live in the same capability runtime. There is no intermediate prompt-engineering layer, no agent guessing at filenames, no hallucinated endpoint. The MCP tool returns the spec the gateway is actually running.

naftiko: "1.0.0-alpha2"
info:
  title: Gateway OpenAPI Crawl + Governance Feedback
  description: >-
    Pulls OpenAPI specs out of every API gateway in the estate, normalizes
    them, runs the governance ruleset against the runnable artifact, and
    republishes the enriched, governance-checked specs to the developer
    portal AND to an MCP surface IDE-attached agents call.
  tags:
    - API Governance
    - Gateway
    - Developer Portal
    - MCP
binds:
  - namespace: aws-env
    keys:
      AWS_ACCESS_KEY_ID: AWS_ACCESS_KEY_ID
      AWS_SECRET_ACCESS_KEY: AWS_SECRET_ACCESS_KEY
      AWS_REGION: AWS_REGION
  - namespace: portal-env
    keys: { PORTAL_TOKEN: PORTAL_TOKEN, PORTAL_BASE: PORTAL_BASE }
capability:
  consumes:
    - namespace: apigateway
      type: http
      baseUri: "https://apigateway..amazonaws.com"
      authentication:
        type: aws-sig-v4
        accessKeyId: ""
        secretKey: ""
        region: ""
        service: apigateway
      resources:
        - name: rest-apis
          path: "/restapis"
          operations:
            - { name: list-rest-apis, method: GET }
        - name: rest-api-export
          path: "/restapis//stages//exports/oas30"
          operations:
            - name: export-rest-api
              method: GET
              inputParameters:
                - { name: rest_api_id, in: path }
                - { name: stage_name, in: path }
    # Republish target — the developer portal becomes a thing the runtime
    # keeps current, not a thing the platform team curates by hand.
    - namespace: portal
      type: http
      baseUri: ""
      authentication: { type: bearer, token: "" }
      resources:
        - name: specs
          path: "/specs/"
          operations:
            - name: upsert-spec
              method: PUT
              inputParameters:
                - { name: spec_id, in: path }
  governance:
    rules:
      - id: requires-operation-summary
        severity: error
      - id: requires-tagged-owner
        severity: error
      - id: deprecated-operations-flagged
        severity: warning
  exposes:
    - type: rest
      port: 8080
      namespace: governance-rest
      resources:
        - name: crawl
          path: "/crawl-and-publish"
          operations:
            - { method: POST, name: crawl-and-publish }
        - name: posture
          path: "/posture/"
          operations:
            - method: GET
              name: get-governance-posture
              inputParameters:
                - { name: api_id, in: path }
    - type: mcp
      port: 3010
      namespace: governance-mcp
      tools:
        - name: get-current-spec
          description: "Return the spec the gateway is actually running for an API id — not the wiki version, not the slide-deck version."
          hints: { readOnly: true }
        - name: get-governance-posture
          description: "Return current governance findings against the live spec — error/warning counts, owner, deprecated operations."
          hints: { readOnly: true }
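
What "applying the ruleset against the runnable artifact" might mean in code can be sketched as below. The rule ids and severities come from the governance block above, but their semantics are assumptions made for illustration: ownership is read from a hypothetical `x-owner` extension under `info`, and `check_spec` is not part of any Naftiko API.

```python
from typing import Dict, List

HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


def check_spec(spec: dict) -> List[Dict[str, str]]:
    """Return governance findings for one parsed OpenAPI document."""
    findings: List[Dict[str, str]] = []

    # Assumed meaning of requires-tagged-owner: the spec names an owning team.
    if not spec.get("info", {}).get("x-owner"):
        findings.append(
            {"rule": "requires-tagged-owner", "severity": "error", "where": "info"}
        )

    for path, item in sorted(spec.get("paths", {}).items()):
        for method, operation in sorted(item.items()):
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters"
            where = f"{method.upper()} {path}"
            if not operation.get("summary"):
                findings.append(
                    {"rule": "requires-operation-summary", "severity": "error", "where": where}
                )
            if operation.get("deprecated"):
                findings.append(
                    {"rule": "deprecated-operations-flagged", "severity": "warning", "where": where}
                )
    return findings
```

Because the input is the spec exported from the gateway rather than a wiki page, the findings describe what is deployed, which is the point of reviewing a runnable artifact.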

The MCP surface is what makes this an AI story, not a chatbot story

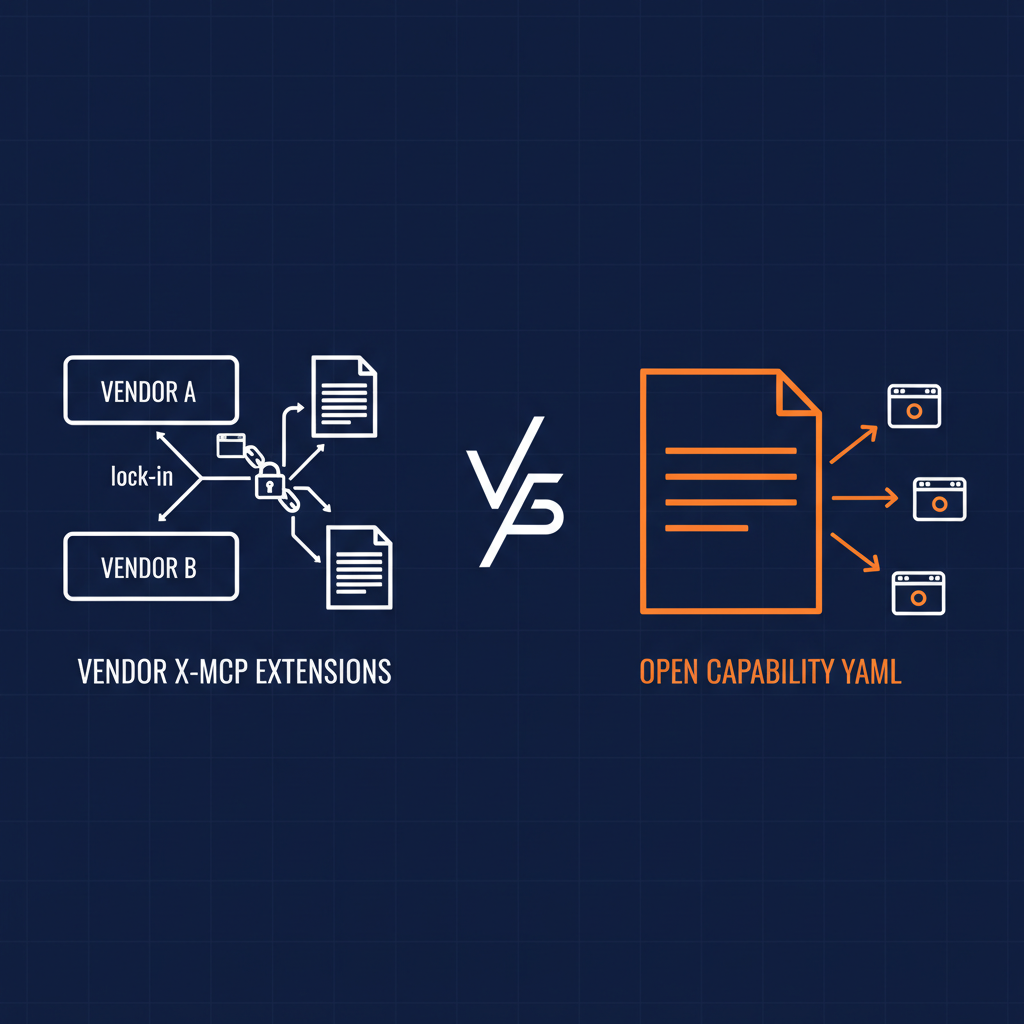

Every enterprise has been pitched some version of an internal AI assistant. Most of them are a model with a long tool list and a wiki crawl behind it. The wiki was already stale. The tool list was already unbounded. The agent hallucinates inside the same blast radius that every prior internal “search” tool had, but with a confidence those tools never had.

A capability with an MCP surface is the opposite shape. The agent is not reasoning over crawled prose; it is calling a deterministic operation that returns a typed, audited result. The reasoning surface stays where reasoning belongs — composing the answer for the developer’s intent — and the data surface stays in the runtime where governance, identity, and cost attribution already live.
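
For a sense of what "calling a deterministic operation" looks like on the wire, the sketch below builds the JSON-RPC 2.0 message an MCP client sends to invoke a tool. The `tools/call` method and the `name`/`arguments` parameters follow the MCP specification; the argument names (`org`, `source`, `query`) are an assumption that mirrors the REST parameters of find-specs, since the tool definition does not list its inputs.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests need an id unique within the session


def tools_call(name: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request for the given tool."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }
    return json.dumps(message, sort_keys=True)


if __name__ == "__main__":
    # Hypothetical arguments: an org name and a free-text query.
    print(tools_call("find-specs", {"org": "example-org", "query": "ledger postings"}))
```

The agent supplies only the tool name and arguments; everything about where the specs live and how they are fetched stays inside the capability.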

Three capabilities, one MCP namespace, and every developer’s IDE is suddenly grounded in the same authoritative API inventory the platform team is producing. “Is anyone else calling this endpoint?” returns the team behind the other consumer. “Is this spec still current?” returns the diff against what the gateway is actually running. “What APIs do we have for ledger postings?” returns a real list, with reusability scores and governance posture.
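
The "diff against what the gateway is actually running" can be as simple as comparing the set of operations in two parsed OpenAPI documents. The following is a sketch under that assumption; `operations` and `drift` are hypothetical helpers, and a real comparison would likely also look at parameters and schemas.

```python
from typing import Dict, List, Set, Tuple

HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}
Operation = Tuple[str, str]  # (METHOD, path)


def operations(spec: dict) -> Set[Operation]:
    """Collect every (METHOD, path) pair declared in an OpenAPI document."""
    return {
        (method.upper(), path)
        for path, item in spec.get("paths", {}).items()
        for method in item
        if method in HTTP_METHODS
    }


def drift(documented: dict, running: dict) -> Dict[str, List[Operation]]:
    """Compare the documented spec with the one exported from the gateway."""
    doc_ops, run_ops = operations(documented), operations(running)
    return {
        "missing_from_gateway": sorted(doc_ops - run_ops),
        "undocumented": sorted(run_ops - doc_ops),
    }
```

An empty result in both lists means the documented spec and the deployed one declare the same operations.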

That is the AI story. Not a chatbot bolted onto a wiki — a grounded, deterministic surface over the same data the platform team is already producing. The AI program and the API program become the same investment.

Why this maps cleanly onto an enterprise budget

Technology. Three capabilities, one runtime, one MCP surface. The deterministic substrate is the same one that audit, identity binding, and cost attribution already use. The AI surface is a thin facade over capabilities the platform team would have built anyway. There is no “AI infrastructure” line item separate from the API platform line item, because they are the same line item.

Business. The reusability number is finally a number. The discovery surface is finally a queryable inventory. The governance loop is finally tied to a runnable artifact. Each one of these is a thing the platform team has been losing budget arguments about for years. They are now answerable. The CFO can see redundant API spend by team. The portfolio review can see which APIs are candidates for retirement. The platform-as-product story has the metric it was always supposed to have.

Politics. The AI checkbox is no longer separate from the API platform checkbox. Leadership gets the AI story they were asking for. The platform team gets the funding for the work they were already going to do. The security team gets a deterministic, governed surface they can audit, instead of an unbounded agent they would have to reject. Nobody has to pick a side.

What this looks like in practice

A platform team adopts the three capabilities. The discovery capability is pointed at the GitHub orgs and the gateway accounts. The reusability capability is pointed at Splunk, New Relic, and Datadog. The governance-and-feedback capability is pointed at the gateways that feed the developer portal.

Every developer’s Claude Code, Copilot, or other MCP-capable IDE picks up the same MCP namespace. The platform team’s quarterly report writes itself, because the data is already running through the same observability stack the team uses for everything else. The AI demo writes itself too, because the MCP surface is already grounded in the deterministic capability layer.

There is no second migration. There is no “now we move it to the AI platform.” The API platform is the AI platform, because the same capabilities answer both sets of questions.

The rule

Three capabilities. One runtime. One MCP surface.

If your API platform program and your AI program are still two separate budgets, you have not yet noticed that the same capability layer answers both sets of questions. Discovery, reusability, and governance are not AI questions — but the MCP surface is what makes the answers reachable from inside the tools developers and leadership are already paying for.

The Naftiko Framework runs the capability layer. The Naftiko Fleet manages it at scale. The MCP surface is what makes the answer reachable from inside Claude, Copilot, and every other agent the enterprise is going to keep buying.

That is the API governance story. That is the API reusability story. That is the API discovery story. And, almost incidentally, that is the AI story the board has been asking for.