I am at APIDays NYC for the second year in a row. The conference is still very much about AI and agents — that is the center of gravity and probably will be for a while. What has changed in twelve months is the texture of the conversation.

In 2025 it felt like a hype cycle pitching itself at an audience that had not yet shipped any of it. Every keynote was an unbounded future. Every vendor booth was selling agents to enterprises that had not deployed agents. Every panel ended with a slide of bullet points about what would be possible next year. It was the kind of energy where the room agreed on the destination without anyone agreeing on what week the bus left.

In 2026 it sounds like operations.

The talks come from inside the building now

Look at who is actually on the schedule this year. Four speakers from Capital One. A governance-automation manager from Citi. A VP from U.S. Bank. An executive director from Morgan Stanley walking the room from UDDI to MCP. A senior director from Early Warning / Paze on AI Agent Skills. JPMorgan, Mastercard, Synchrony, Discover, Equifax, Navy Federal. Telco (Verizon, AT&T). Retail (Macy’s, Home Depot, BJ’s). Healthcare. Insurance. Hospitality (Mews, eviivo). This is the regulated, slow-moving, real-systems half of the economy, and they are not on stage to ask whether agents are going to happen. They are on stage to explain what they ran into when they shipped.

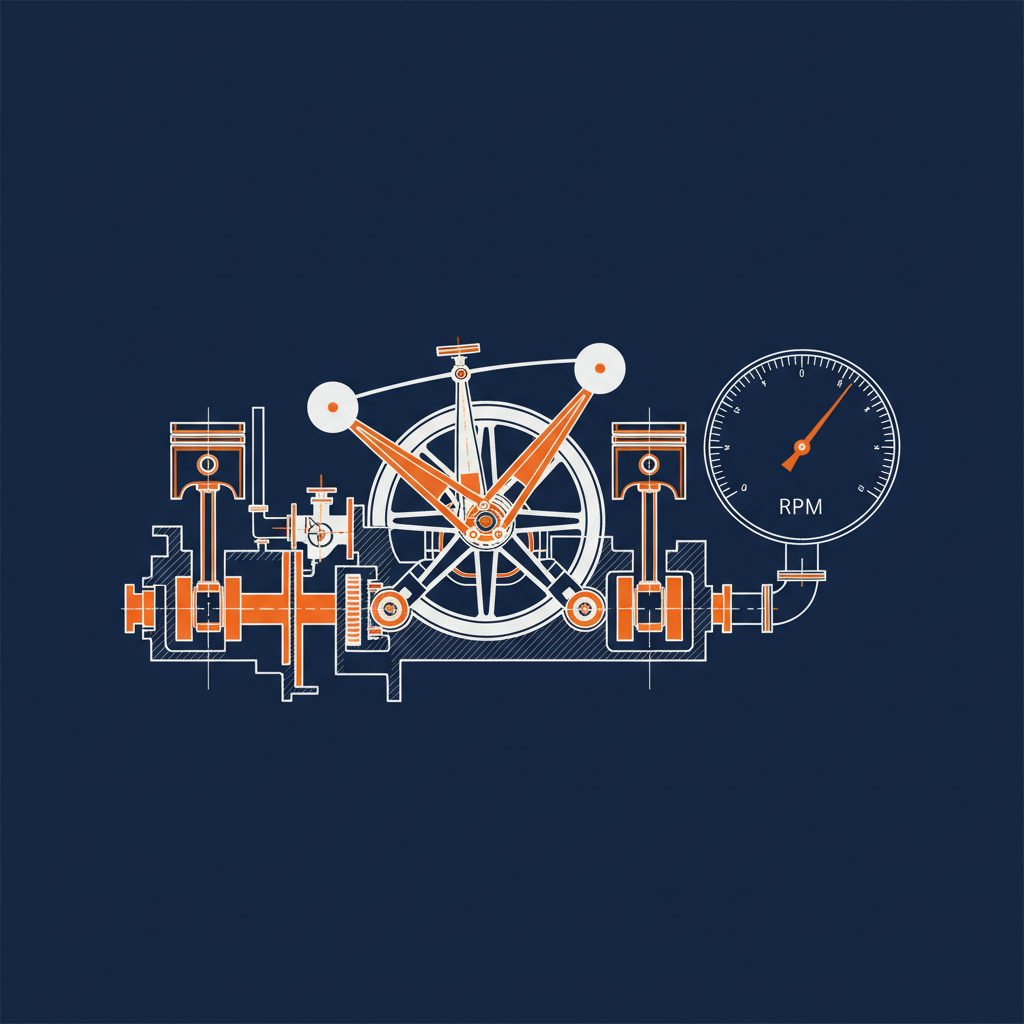

The vendor talks reflect the same shift. Twelve months ago “MCP” was a slide-deck word. This week MCP shows up in talk titles next to “in practice,” “context bloat,” “cost,” “governance,” and “scale.” Kong on multi-server MCP routing. WSO2 on governing agentic API costs in the enterprise — the talk title literally says “The $1.6M Weekend.” IBM on rethinking AI gateways for agentic architectures. Tetrate on platforms for agentic scale. These are operational talks. They land because the people in the audience have hit the problems they describe.

The hallway conversation matches the agenda

The threads on enterprise token budgeting and on the hundreds-of-models future that large enterprises are stumbling into are being quoted by people who have a quote in mind for their CFO, not for a panel. The Meta “Claudeonomics” leaderboard came up in conversations I was not part of, twice as a cautionary tale and once as something an engineer’s company was about to do anyway. The questions in those conversations are budgeting questions, attribution questions, governance questions. They are the same questions enterprises were asking about API spend a decade ago, applied to agent compute.

Twelve months ago I was in this same hallway listening to people imagine what would happen. This year I am listening to them describe what already did, and ask each other how they handled it. That is the shift.

The work is starting to look like API work

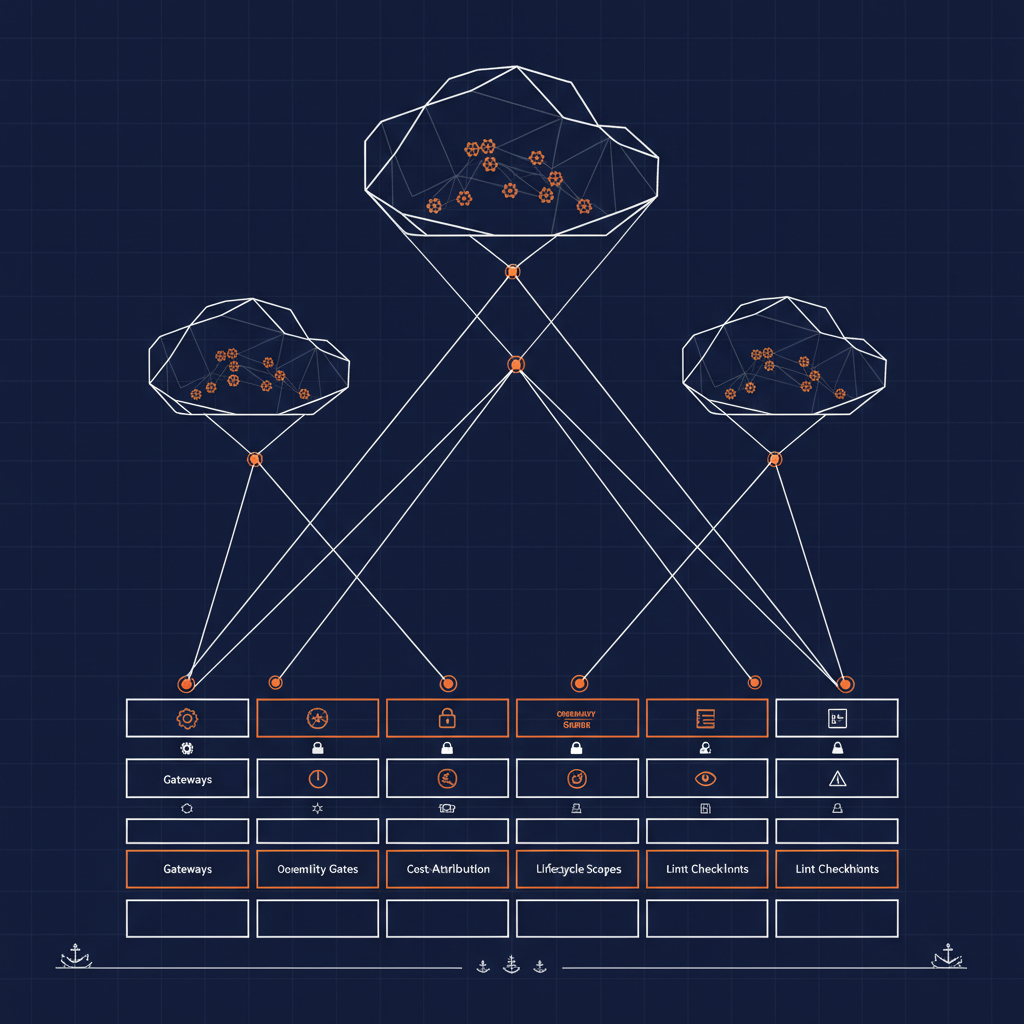

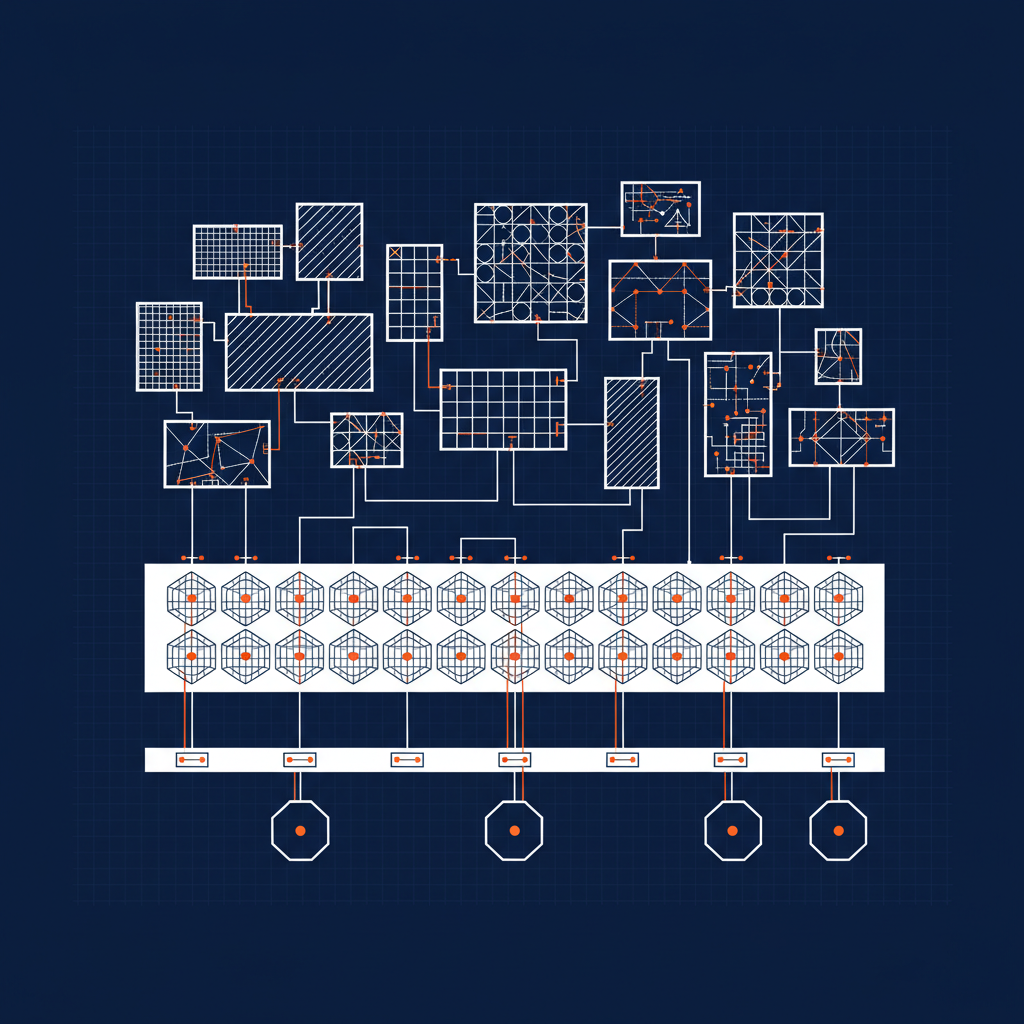

The most interesting consequence of this grounding is that the agentic conversation is converging back onto the operational disciplines that the API community spent the last fifteen years building. Discovery. Governance. Identity. Lifecycle. Cost attribution. Schema enforcement. Lint rules. Style guides. The same lifecycle that linted OpenAPI specs in CI is the lifecycle that is now linting MCP tool definitions, capability declarations, and agent skill metadata. The same governance language we used for gateways is the language enterprises are reaching for to govern model gateways and consumption layers. The same product-management instincts that mattered for treating an API as a product are mattering now for treating an agent capability as a product.
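To make the linting parallel concrete, here is a minimal sketch of what a CI lint pass over MCP tool definitions could look like. It assumes each tool is a plain dictionary with `name`, `description`, and `inputSchema` fields, which is the general shape MCP tools take. The rules themselves (snake_case names, a minimum description length, a description on every parameter) are a hypothetical house style guide, not anything a speaker presented, and `lint_tool` is an illustrative name rather than an existing library function.

```python
import re

# Hypothetical house rules, analogous to an OpenAPI style guide.
SNAKE_CASE = re.compile(r"^[a-z][a-z0-9]*(_[a-z0-9]+)*$")
MIN_DESCRIPTION_LENGTH = 20  # assumed threshold, tune to taste


def lint_tool(tool: dict) -> list[str]:
    """Return human-readable findings for one tool definition."""
    findings = []
    name = tool.get("name", "")

    # Rule 1: tool names follow one naming convention.
    if not SNAKE_CASE.match(name):
        findings.append(f"{name!r}: name should be snake_case")

    # Rule 2: the description is what the model reads, so it must exist.
    description = tool.get("description", "")
    if len(description.strip()) < MIN_DESCRIPTION_LENGTH:
        findings.append(f"{name!r}: description is missing or too short")

    # Rule 3: the input schema must be a JSON Schema object.
    schema = tool.get("inputSchema")
    if not isinstance(schema, dict) or schema.get("type") != "object":
        findings.append(f"{name!r}: inputSchema must be a JSON Schema object")
        return findings

    # Rule 4: every parameter is documented.
    properties = schema.get("properties", {})
    for prop, spec in properties.items():
        if not spec.get("description"):
            findings.append(f"{name!r}: parameter {prop!r} has no description")

    # Rule 5: every required parameter is actually declared.
    for required in schema.get("required", []):
        if required not in properties:
            findings.append(
                f"{name!r}: required parameter {required!r} is not declared"
            )
    return findings


# Two made-up tool definitions for illustration only.
GOOD_TOOL = {
    "name": "get_account_balance",
    "description": "Return the current balance for one customer account.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "account_id": {
                "type": "string",
                "description": "Internal account identifier.",
            }
        },
        "required": ["account_id"],
    },
}

BAD_TOOL = {
    "name": "GetBalance",
    "description": "Balance.",
    "inputSchema": {
        "type": "object",
        "properties": {"account_id": {"type": "string"}},
        "required": ["account_id", "currency"],
    },
}

if __name__ == "__main__":
    for tool in (GOOD_TOOL, BAD_TOOL):
        for finding in lint_tool(tool):
            print(finding)
```

Run in CI, a non-empty findings list would fail the build, the same way a failed OpenAPI lint rule does. The point of the sketch is only that the mechanics carry over nearly unchanged; the rule set is where each organization's governance decisions would live.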

This is not the conference rediscovering APIs. This is the conference acknowledging that the AI layer needs the same operational backbone the API layer needed — and that the people who built the API operational backbone are the same people in the room.

The frothier vendors got quieter

There is still hype. I am not going to pretend otherwise. There are still booths selling “the platform that solves all of it.” There are still keynotes that imagine an autonomous enterprise within eighteen months. But the proportion has changed. The center of mass moved from “imagine this” to “here is what happened when we ran it in production for six months and the bill came in.” A few of the loudest vendors from last year are noticeably quieter this year. Some of them did not come back. Some of them came back with a much narrower, much more honest pitch.

I think this is what a market growing up looks like, and I think it is the healthiest version of the AI conversation I have heard at any conference this year. The center of gravity is still AI and agents. But the conversation underneath it is now made of the same operational concerns that have always made enterprise software work — cost, risk, velocity, identity, governance, lifecycle, observability, change management.

Last year felt like a fever. This year feels like a Tuesday. Both are AI conferences. The Tuesday is the version that produces production systems.

What this means for the next twelve months

If 2025 was “will it happen” and 2026 is “here is how we are running it,” the conversation we should be having heading into 2027 is “here is what we wish we had built from the start.” The audiences in those banks-and-insurers rooms are going to tell that story whether the vendors are ready for it or not. The vendors who listen are going to be fine. The ones who keep selling the 2025 keynote are going to find themselves talking to an emptier room.

Heading back into Morgan Forum tomorrow for Thursday’s OpenAPI examples and tags talk. The conversation is grounded. That is the news.