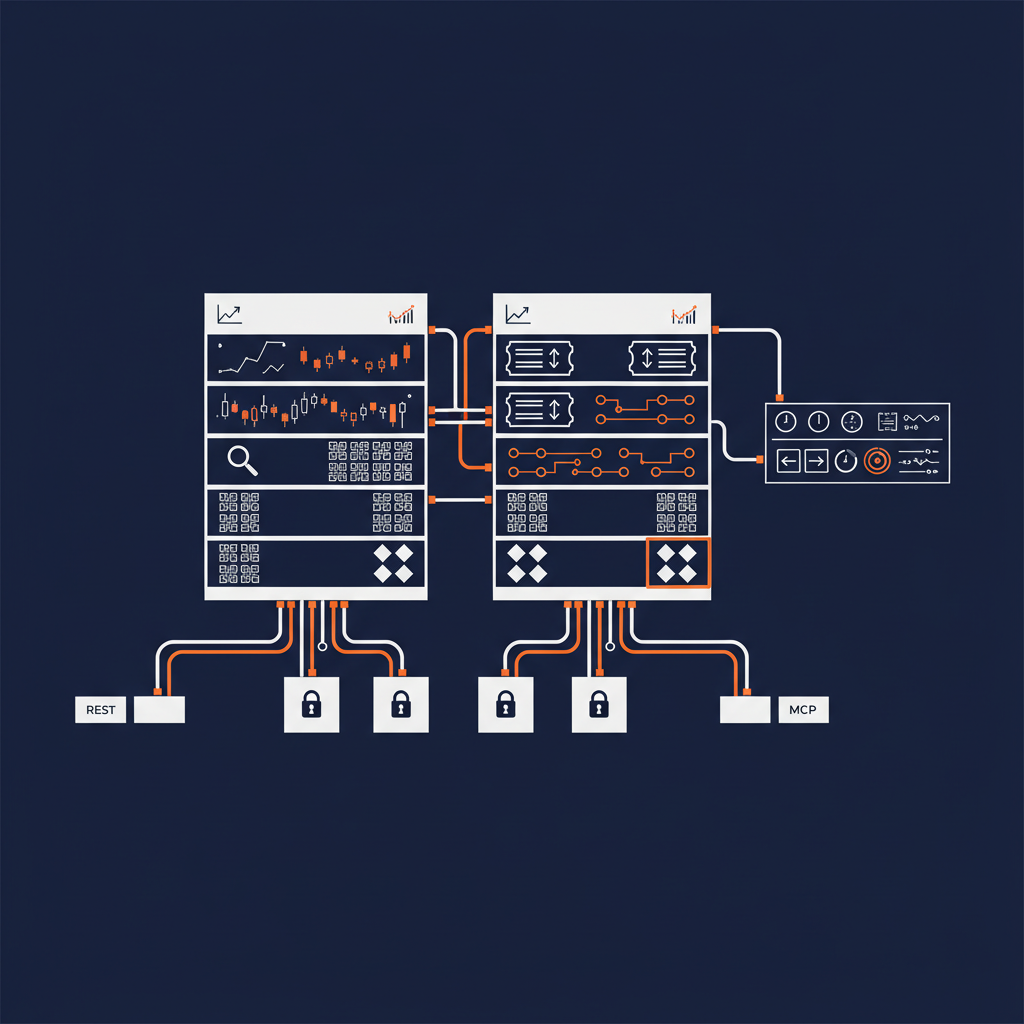

If you are a buy-side firm and your AI strategy involves Bloomberg AIM, you have probably already discovered the problem. Bloomberg’s developer surface is not one API. It is a set of interlocking APIs — the Data License HAPI for bulk financial, pricing, and reference data; the HTTP API for real-time reference, historical, and intraday data; EMSX for order, route, and fill management. Each one has its own auth, its own shape, its own latency profile, and its own regulatory weight.

The naive AI integration story for that surface is “stand up an MCP server per API and let the agent figure it out.” That works in a demo. It will not survive a compliance review at any firm that is regulated as a fiduciary.

The approach that does survive, and the one we have been quietly building partner artifacts for, is to wrap that surface in governed Naftiko capabilities, expose them through both REST and MCP, and run them under Naftiko Fleet so that every call has a context, a policy, and an audit trail.

This post walks through what that looks like with two real capability files from the open-source api-evangelist/bloomberg-aim repository.

The shape of the integration

Two capability files, each self-contained: the upstream adapters are inlined directly into the consumes: block, with no external imports.

```
capabilities/
├── market-data-and-analytics.yaml   # buy-side analyst / PM workflow
└── trading-and-execution.yaml       # algo trader / execution workflow
```

Each capability declares inline the upstreams it talks to (drawn from the HAPI, the HTTP API, and EMSX), the credentials it binds, and the surfaces it exposes. Each capability composes its upstreams into a business workflow: one for the analyst sitting in front of a portfolio, one for the trader sitting in front of a blotter. Both expose a REST surface for traditional service consumers and an MCP surface for AI agents and copilots.

Same spec. Two consumption models. One governance layer.
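To make "one governance layer" concrete, here is a minimal Python sketch, assuming a runtime in which both surfaces funnel into a single shared invoke() function. The handler names, the GET /catalogs route, and the in-memory audit list are hypothetical illustrations, not Naftiko Framework internals; only the JSON-RPC tools/call request and content-block result shapes come from the MCP specification.

```python
import json

# Hypothetical sketch: REST and MCP requests both land in invoke(), so policy
# and audit live in one place. Names and routes are illustrative only.

TOOLS = {"list-catalogs": "data-license.list-catalogs"}  # mirrors `call:`
REST_ROUTES = {("GET", "/catalogs"): "list-catalogs"}    # hypothetical route
AUDIT_LOG: list[dict] = []


def invoke(tool: str, arguments: dict, surface: str) -> dict:
    """The single governed path shared by both surfaces."""
    call = TOOLS[tool]
    AUDIT_LOG.append({"surface": surface, "tool": tool, "call": call})
    return {"call": call, "arguments": arguments}  # stub for the upstream call


def handle_rest(method: str, path: str) -> dict:
    return invoke(REST_ROUTES[(method, path)], {}, surface="rest")


def handle_mcp(request: dict) -> dict:
    # MCP tools/call is a JSON-RPC 2.0 request carrying params.name and
    # params.arguments; the result is returned as a list of content blocks.
    params = request["params"]
    result = invoke(params["name"], params.get("arguments", {}), surface="mcp")
    return {"jsonrpc": "2.0", "id": request["id"],
            "result": {"content": [{"type": "text", "text": json.dumps(result)}]}}


rest_result = handle_rest("GET", "/catalogs")
mcp_result = handle_mcp({"jsonrpc": "2.0", "id": 1, "method": "tools/call",
                         "params": {"name": "list-catalogs", "arguments": {}}})
```

The REST and MCP handlers carry no policy of their own, so a rule added to invoke() applies to both surfaces at once.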

Capability one — market data and analytics

Quantitative analysts and portfolio managers do not call APIs. They ask questions. What catalogs do I have access to? What’s in my universe? Get me reference data on these tickers. Pull historical end-of-day for the last three years.

The market-data capability turns those questions into a small, named surface. Here is the shape (excerpted from capabilities/market-data-and-analytics.yaml):

```yaml
naftiko: "1.0.0-alpha1"
info:
  label: "Bloomberg Market Data and Analytics"
  description: "Workflow for accessing Bloomberg market data combining the Data License HAPI for bulk data with the HTTP API for real-time reference and historical data."
binds:
  - namespace: env
    keys:
      BLOOMBERG_TOKEN: BLOOMBERG_TOKEN
capability:
  consumes:
    - type: http
      namespace: data-license
      baseUri: https://api.bloomberg.com/eap
      description: "Bloomberg Data License HAPI for financial data"
      authentication:
        type: bearer
        token: ""
      resources:
        - name: catalogs
          path: /catalogs
          operations:
            - name: list-catalogs
              method: GET
        - name: universes
          path: /universes
          operations:
            - name: list-universes
              method: GET
            - name: create-universe
              method: POST
              body:
                type: json
                data:
                  identifier: ""
                  title: ""
    - type: http
      namespace: http-api
      baseUri: https://api.bloomberg.com/hapi
      description: "Bloomberg HTTP API for reference and historical data"
      authentication:
        type: bearer
        token: ""
      resources:
        - name: reference-data
          path: /reference-data
          operations:
            - name: get-reference-data
              method: POST
        - name: historical-data
          path: /historical-data
          operations:
            - name: get-historical-data
              method: POST
  exposes:
    - type: rest
      port: 8080
      namespace: market-data-api
    - type: mcp
      port: 9090
      namespace: market-data-mcp
      transport: http
      tools:
        - name: list-catalogs
          hints: { readOnly: true }
          call: "data-license.list-catalogs"
        - name: create-universe
          hints: { readOnly: false }
          call: "data-license.create-universe"
        - name: get-historical-data
          hints: { readOnly: true }
          call: "http-api.get-historical-data"
        - name: search-instruments
          hints: { readOnly: true }
          call: "http-api.search-instruments"
```

Notice three things.

The credential is bound at the capability boundary. BLOOMBERG_TOKEN is declared once, under binds, and the consumed adapters send it upstream as a bearer token. It never reaches the agent: the agent calls a tool, and the capability handles the auth.
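A minimal Python sketch of that boundary, assuming the runtime reads the bound key from the process environment. The build_upstream_request function and the operation table are hypothetical; the base URIs, paths, and methods are taken from the excerpt above.

```python
import os
from typing import Mapping

# Base URIs and (method, path) pairs copied from the excerpt; the lookup
# table itself is a hypothetical stand-in for the runtime's adapter model.
BASE_URIS = {
    "data-license": "https://api.bloomberg.com/eap",
    "http-api": "https://api.bloomberg.com/hapi",
}
OPERATIONS = {
    "data-license.list-catalogs": ("GET", "/catalogs"),
    "http-api.get-historical-data": ("POST", "/historical-data"),
}


def build_upstream_request(call: str, env: Mapping[str, str] = os.environ) -> dict:
    """Turn a tool's `call` target into an authenticated upstream request.

    The agent's tool call carries only the tool name and arguments; the
    token is resolved here, inside the capability, from the env binding.
    """
    namespace = call.split(".", 1)[0]
    method, path = OPERATIONS[call]
    token = env["BLOOMBERG_TOKEN"]  # KeyError if the binding is missing
    return {
        "method": method,
        "url": BASE_URIS[namespace] + path,
        "headers": {"Authorization": f"Bearer {token}"},
    }


request = build_upstream_request(
    "http-api.get-historical-data", env={"BLOOMBERG_TOKEN": "example-token"}
)
```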

Read versus write is declared, not inferred. Every tool has a readOnly hint. Catalog browsing, universe listing, and reference data lookup are all readOnly: true. Universe creation is readOnly: false, because it creates server-side state. That hint flows into MCP, into observability, and into any allow-list or policy gate that wraps the capability.
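One plausible way the hint could flow into MCP, sketched in Python. The MCP specification defines readOnlyHint and destructiveHint tool annotations; this sketch assumes, without confirmation from the Naftiko spec, that a runtime maps readOnly and destructive onto them. The to_mcp_tool helper is hypothetical.

```python
# Hypothetical mapping from the spec's `hints` to MCP tool annotations.
SPEC_TOOLS = [
    {"name": "list-catalogs", "hints": {"readOnly": True}},
    {"name": "create-universe", "hints": {"readOnly": False}},
]


def to_mcp_tool(spec_tool: dict) -> dict:
    """Build a tools/list entry whose annotations mirror the declared hints."""
    hints = spec_tool.get("hints", {})
    annotations = {"readOnlyHint": hints.get("readOnly", False)}
    if "destructive" in hints:
        annotations["destructiveHint"] = hints["destructive"]
    return {
        "name": spec_tool["name"],
        "inputSchema": {"type": "object"},  # real input schemas omitted here
        "annotations": annotations,
    }


mcp_tools = [to_mcp_tool(t) for t in SPEC_TOOLS]
read_only = [t["name"] for t in mcp_tools if t["annotations"]["readOnlyHint"]]
```

Per the MCP specification, destructiveHint defaults to true when a tool is not read-only, so leaving the field out on a write tool is the conservative choice.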

Twelve tools, two upstreams, one composed surface. The full capability file declares twelve tools, and the agent does not need to know that some route to the HAPI and others to the HTTP API. From the agent’s point of view, this is one tool surface. From the platform team’s point of view, it is two upstream adapters with different rate limits and different SLAs, all reconciled inside the capability spec.

That is what “context-engineered” means here. The agent gets the right tool surface for the question being asked. The implementation of that surface, including which Bloomberg endpoint each tool actually calls, is a platform decision, not a prompt decision.
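A sketch of that reconciliation, assuming a runtime that keeps one rate limiter per upstream namespace while the agent sees a single flat tool list. The token-bucket algorithm is a standard technique swapped in for illustration; the capacities are placeholders, not Bloomberg's actual limits or Naftiko's rate-limit declaration format.

```python
from dataclasses import dataclass, field

# Flat tool surface the agent sees; each entry routes to one upstream.
TOOL_ROUTES = {
    "list-catalogs": "data-license.list-catalogs",
    "create-universe": "data-license.create-universe",
    "get-historical-data": "http-api.get-historical-data",
}


@dataclass
class TokenBucket:
    capacity: float
    refill_per_sec: float
    tokens: float = field(init=False)
    last: float = 0.0

    def __post_init__(self) -> None:
        self.tokens = self.capacity

    def allow(self, now: float) -> bool:
        # Refill in proportion to elapsed time, capped at capacity.
        self.tokens = min(self.capacity,
                          self.tokens + (now - self.last) * self.refill_per_sec)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Placeholder limits per upstream namespace (hypothetical values).
LIMITS = {
    "data-license": TokenBucket(capacity=2, refill_per_sec=1.0),
    "http-api": TokenBucket(capacity=5, refill_per_sec=5.0),
}


def route(tool: str, now: float) -> str:
    """Resolve a tool to its upstream call, applying that upstream's limit."""
    upstream = TOOL_ROUTES[tool].split(".", 1)[0]
    if not LIMITS[upstream].allow(now):
        return f"throttled:{upstream}"
    return TOOL_ROUTES[tool]


calls = [route("list-catalogs", now=0.0) for _ in range(3)]
```

The agent never chooses an upstream or sees a limit; throttling one upstream leaves tools on the other untouched.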

Capability two — trading and execution

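The trading capability is where governance starts to matter in a very different way. This surface creates, modifies, routes, and deletes orders. Reads are cheap; writes can be expensive in ways that matter to a regulator. Here is its MCP surface (excerpted from capabilities/trading-and-execution.yaml):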

```yaml
exposes:
  - type: mcp
    port: 9091
    namespace: trading-mcp
    transport: http
    tools:
      - name: create-order
        hints: { readOnly: false }
        call: "emsx.create-order"
      - name: modify-order
        hints: { readOnly: false }
        call: "emsx.modify-order"
      - name: delete-order
        hints:
          readOnly: false
          destructive: true
        call: "emsx.delete-order"
      - name: route-order
        hints: { readOnly: false }
        call: "emsx.route-order"
      - name: get-orders
        hints: { readOnly: true }
        call: "emsx.get-orders"
      - name: get-fills
        hints: { readOnly: true }
        call: "emsx.get-fills"
```

The destructive: true hint on delete-order is the key move. It says, in machine-readable form, that this tool makes changes that cannot be undone. An MCP client that honors the hint can require explicit confirmation before invoking it. A policy gate at the Fleet layer can enforce a stricter rule (destructive tools require a human in the loop, period) without modifying the capability or rewriting the agent prompt.

That is the difference between an MCP server that exists and an MCP server that you can defend. The defensibility is not in the prompt. It is in the spec.
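A minimal sketch of such a Fleet-layer gate in Python. The hint table mirrors the trading excerpt above; the gate function, the ApprovalRequired exception, and the approved_by parameter are hypothetical stand-ins for whatever approval workflow a firm actually runs.

```python
from typing import Optional

# Hints copied from the trading-mcp excerpt.
TRADING_HINTS = {
    "create-order": {"readOnly": False},
    "modify-order": {"readOnly": False},
    "delete-order": {"readOnly": False, "destructive": True},
    "route-order": {"readOnly": False},
    "get-orders": {"readOnly": True},
    "get-fills": {"readOnly": True},
}


class ApprovalRequired(Exception):
    """Raised when a destructive tool is invoked without human sign-off."""


def gate(tool: str, approved_by: Optional[str] = None) -> str:
    """Allow the call through, or block it if it is destructive and unapproved."""
    hints = TRADING_HINTS[tool]
    if hints.get("destructive", False) and not approved_by:
        raise ApprovalRequired(f"{tool} requires human-in-the-loop approval")
    return f"emsx.{tool}"
```

Because the gate keys off the destructive hint rather than a list of tool names, marking another tool destructive in the spec is enough to put it behind the same approval step.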

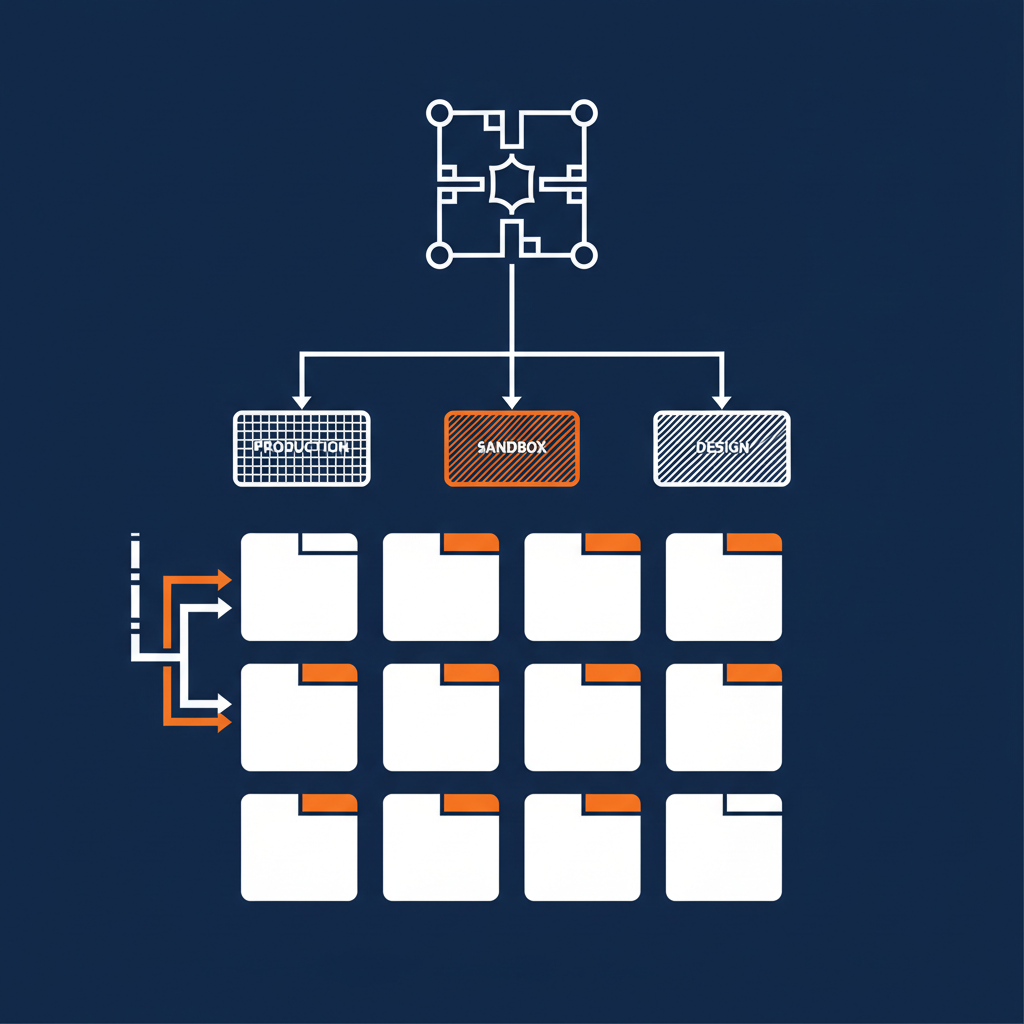

Why two capabilities, not one big one

A single mega-capability that wraps every Bloomberg endpoint at once would be easier to ship and harder to govern.

Splitting the surface into “market data and analytics” versus “trading and execution” produces a clean security boundary. The analyst-facing capability does not consume the EMSX adapter at all — its consumes: block declares only the data-license and HTTP API upstreams. The trading capability adds EMSX. There is no path from a market-data agent to an order-placement endpoint, because there is no consumed adapter exposing that path.

This is the capability-as-security-perimeter pattern. Two separate capabilities, two separate consumed-adapter bundles, two separate ports, two separate MCP namespaces. An agent with access to market-data-mcp is structurally incapable of placing a trade. Not because of a prompt instruction, but because of how the spec is wired.

You cannot get that property from a thin REST wrapper, and you cannot get it from a one-MCP-server-per-API approach. You get it because the capability is the unit of design, and the capability is what determines which upstreams are reachable.
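The property can also be checked mechanically. This Python sketch assumes a simplified in-memory view of the two specs (any other upstreams the trading capability consumes are omitted) and hypothetical reachable() and validate() helpers; a real check would more likely run as a lint rule over the YAML.

```python
# Simplified, hypothetical view of the two capabilities.
CAPABILITIES = {
    "market-data-mcp": {
        "consumes": {"data-license", "http-api"},
        "tools": {"list-catalogs": "data-license.list-catalogs",
                  "get-historical-data": "http-api.get-historical-data"},
    },
    "trading-mcp": {
        "consumes": {"emsx"},  # other upstreams, if any, omitted
        "tools": {"create-order": "emsx.create-order",
                  "get-fills": "emsx.get-fills"},
    },
}


def reachable(capability: str) -> set[str]:
    """Operations an agent bound to this capability could possibly invoke."""
    cap = CAPABILITIES[capability]
    return {call for call in cap["tools"].values()
            if call.split(".", 1)[0] in cap["consumes"]}


def validate(capability: str) -> list[str]:
    """Return tools whose call target lies outside the consumed namespaces."""
    cap = CAPABILITIES[capability]
    return [name for name, call in cap["tools"].items()
            if call.split(".", 1)[0] not in cap["consumes"]]
```

An empty list from validate() is the lint-time form of the guarantee: a spec that pointed a market-data tool at an emsx.* operation would be flagged before it ever ran.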

Running it under Naftiko Fleet

Two capability files alone are not the full story. The full story is what happens when Naftiko Fleet runs them.

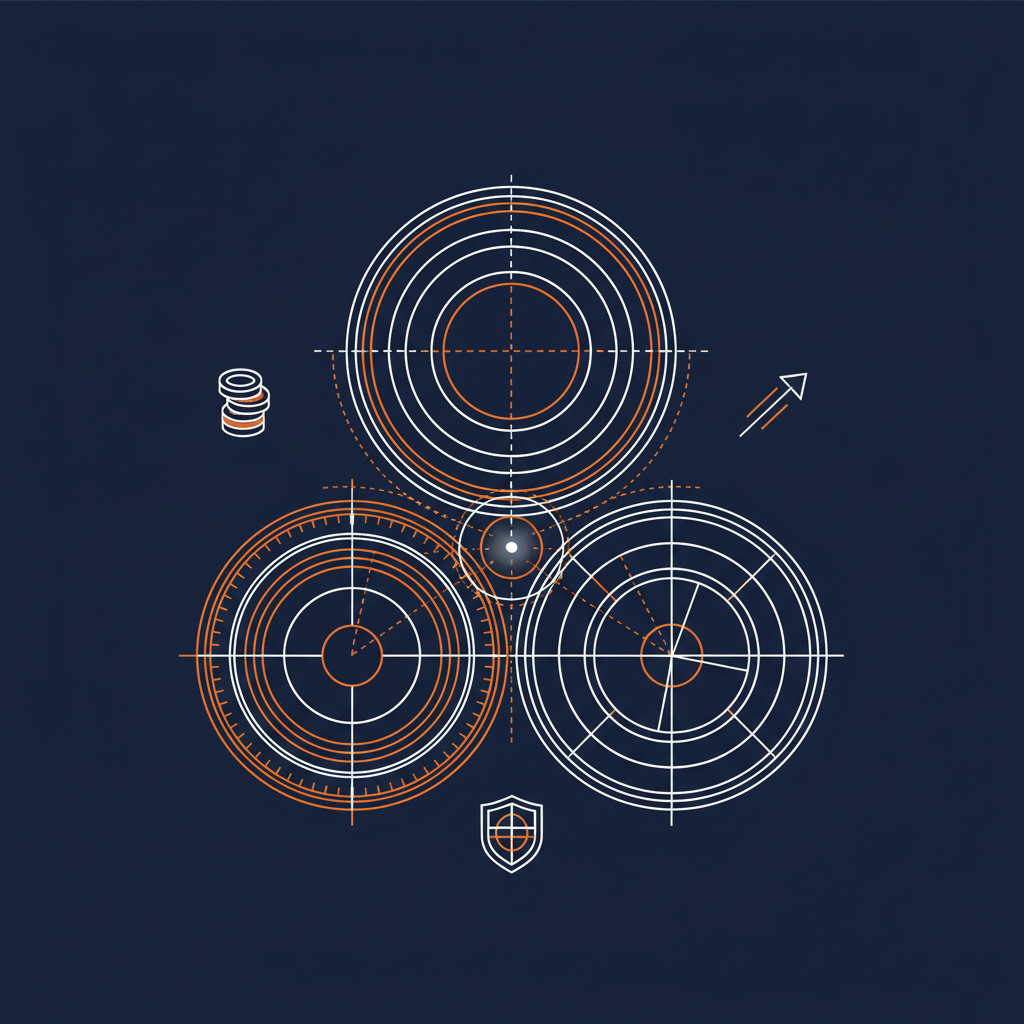

Observability. Each capability exposes a /metrics endpoint. Fleet aggregates across capabilities. You get per-call latency, per-tool invocation counts, error rates per upstream, and circuit-breaker state — all attributable to the capability, not to a generic Bloomberg traffic blob. When the historical-data tool starts timing out, you see it on a dashboard tied to market-data-mcp.get-historical-data, not buried inside a generic outbound HTTPS counter.
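A minimal sketch of per-tool attribution, assuming metrics are keyed by MCP namespace and tool name. The ToolMetrics class is hypothetical and the sample latencies are invented inputs; a production setup would export these series through /metrics rather than hold them in memory.

```python
from collections import defaultdict
from statistics import mean


class ToolMetrics:
    """Hypothetical in-memory recorder keyed by '<mcp-namespace>.<tool>'."""

    def __init__(self) -> None:
        self.latencies_ms: dict[str, list[float]] = defaultdict(list)
        self.errors: dict[str, int] = defaultdict(int)

    def record(self, namespace: str, tool: str, latency_ms: float, ok: bool) -> None:
        key = f"{namespace}.{tool}"
        self.latencies_ms[key].append(latency_ms)
        if not ok:
            self.errors[key] += 1

    def summary(self, key: str) -> dict:
        samples = self.latencies_ms[key]
        calls = len(samples)
        return {
            "calls": calls,
            "error_rate": self.errors[key] / calls if calls else 0.0,
            "mean_latency_ms": mean(samples) if calls else 0.0,
        }


metrics = ToolMetrics()
metrics.record("market-data-mcp", "get-historical-data", 120.0, ok=True)
metrics.record("market-data-mcp", "get-historical-data", 30000.0, ok=False)
metrics.record("market-data-mcp", "list-catalogs", 40.0, ok=True)
hist = metrics.summary("market-data-mcp.get-historical-data")
```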

Allow-listing. The MCP namespace, the tool name, and the tool's hints are the units of allow-listing. Production policy can declare “agents in the analyst tier may use only the readOnly: true tools in market-data-mcp,” and that policy is evaluated against the spec, not against a hand-curated string match. New tools added to the capability fall under the policy automatically, because the policy is keyed to the hint rather than to the tool name.
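A sketch of a tier policy evaluated against hints, assuming a hypothetical policy shape (a namespace plus a read-only flag) rather than Fleet's actual policy language. The allowed_tools helper and the tier name are illustrative.

```python
# Hypothetical tier policy: one namespace, read-only tools only.
POLICIES = {
    "analyst": {"namespace": "market-data-mcp", "read_only_only": True},
}


def allowed_tools(tier: str, namespace: str, tools: dict[str, dict]) -> set[str]:
    """Tools a tier may call, derived from hints rather than a name list."""
    policy = POLICIES.get(tier)
    if policy is None or policy["namespace"] != namespace:
        return set()
    return {name for name, hints in tools.items()
            if not policy["read_only_only"] or hints.get("readOnly", False)}


market_data_tools = {
    "list-catalogs": {"readOnly": True},
    "create-universe": {"readOnly": False},
    "get-historical-data": {"readOnly": True},
}
before = allowed_tools("analyst", "market-data-mcp", market_data_tools)

# A tool added to the capability later is covered without editing the policy.
market_data_tools["search-instruments"] = {"readOnly": True}
after = allowed_tools("analyst", "market-data-mcp", market_data_tools)
```

Tools with no readOnly hint are denied, so the policy fails closed if a spec author forgets to declare one.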

FinOps attribution. Bloomberg API consumption is a chargeable line item at most firms. The bloomberg-aim repository ships a FOCUS-aligned FinOps mapping for exactly this reason. When the capability’s metrics are tagged by team, environment, and tool, the FOCUS dimensions roll up cleanly. The CFO question — which capability is paying off, which one is a quiet cost sink — becomes a query against the Fleet metrics layer instead of a forensic exercise across vendor invoices.
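A minimal Python roll-up, assuming usage rows are already tagged by team, environment, and tool. The per-call rates and usage rows are invented placeholders (real Bloomberg pricing is contract-specific), and a FOCUS-conformant export would carry more columns than shown, such as BilledCost and ChargePeriodStart.

```python
from collections import defaultdict
from decimal import Decimal

# Placeholder per-call rates (hypothetical, for illustration only).
RATE_PER_CALL = {
    "get-historical-data": Decimal("0.05"),
    "list-catalogs": Decimal("0.00"),
}

# Hypothetical usage rows tagged by team, environment, and tool.
usage = [
    {"team": "quant-research", "env": "prod", "tool": "get-historical-data", "calls": 400},
    {"team": "quant-research", "env": "prod", "tool": "list-catalogs", "calls": 50},
    {"team": "pm-desk", "env": "prod", "tool": "get-historical-data", "calls": 20},
]


def billed_cost_by(dimension: str, rows: list[dict]) -> dict[str, Decimal]:
    """Sum priced usage along one tag dimension (team, env, or tool)."""
    totals: dict[str, Decimal] = defaultdict(Decimal)
    for row in rows:
        totals[row[dimension]] += RATE_PER_CALL[row["tool"]] * row["calls"]
    return dict(totals)


by_team = billed_cost_by("team", usage)
by_tool = billed_cost_by("tool", usage)
```

Decimal keeps the currency arithmetic exact, so team and tool totals reconcile to the same grand total.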

Audit trail. Every MCP invocation is logged with the bound identity, the tool name, the input parameters, the upstream operation, the latency, and the outcome. A regulator question — who placed this order, on whose behalf, with what context — has an answer that does not start with “let me grep some Slack threads.”
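A sketch of one audit line per invocation, using the fields listed above plus an on_behalf_of field for the "on whose behalf" question. The record layout, the JSON-lines format, the audited() wrapper, and the stand-in order function are assumptions, not Fleet's actual audit schema.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import Any, Callable


@dataclass
class AuditRecord:
    identity: str            # the bound identity making the call
    on_behalf_of: str        # the person or account the call is made for
    tool: str
    inputs: dict
    upstream_operation: str
    latency_ms: float
    outcome: str


AUDIT_LINES: list[str] = []


def audited(identity: str, on_behalf_of: str, tool: str, upstream_operation: str,
            inputs: dict, fn: Callable[..., Any]) -> Any:
    """Run fn(**inputs) and append one JSON audit line, success or failure."""
    start = time.perf_counter()
    outcome = "ok"
    try:
        return fn(**inputs)
    except Exception as exc:
        outcome = f"error: {type(exc).__name__}"
        raise
    finally:
        record = AuditRecord(identity, on_behalf_of, tool, inputs, upstream_operation,
                             (time.perf_counter() - start) * 1000.0, outcome)
        AUDIT_LINES.append(json.dumps(asdict(record), sort_keys=True))


def fake_create_order(ticker: str, side: str, amount: int) -> str:
    return "order-123"  # hypothetical stand-in for the EMSX call


order_id = audited("svc-agent-7", "trader:jdoe", "create-order", "emsx.create-order",
                   {"ticker": "IBM US Equity", "side": "BUY", "amount": 100},
                   fake_create_order)
entry = json.loads(AUDIT_LINES[-1])
```

Writing the record in a finally block means failed calls are logged with their error outcome, not just successful ones.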

The three-dimensional read

Technology. A buy-side firm can integrate the most sensitive parts of its data and execution stack into AI workflows without giving an agent direct access to a Bloomberg credential, a routing endpoint, or a destructive operation. The capability is the seam. The seam is reviewed, version-controlled, and lintable.

Business. The conversation with a CIO or CCO changes shape. They are no longer being asked to approve “an MCP server.” They are being asked to approve a capability — a named, scoped, declared surface with explicit auth boundaries, explicit destructive-operation flags, and explicit per-upstream cost attribution. That is something a regulated business can actually sign off on.

Politics. This is the part most platform teams under-budget for. Once the capability is the unit of delivery, ownership becomes clear. The market-data capability is owned by the data-platform team. The trading capability is owned by the execution-platform team. The Fleet layer is owned by the AI platform team. There is no contested middle ground where everybody owns nothing and nobody can ship.

What ships, what doesn’t

Two self-contained capability files. One repo. Inline upstream adapters with bearer-token auth. REST and MCP surfaces on every capability. FOCUS-aligned FinOps. Spectral rules for spec quality. Rate-limit declarations co-located with the API definitions.

What does not ship is the part most teams expect to write — the bespoke per-endpoint glue code, the per-tool credential-passing logic, the hand-rolled MCP server, the ad-hoc telemetry, the one-off allow-list. The framework gives you the substrate. The two capability files declare the workflow. Fleet runs the result.

The full open-source artifact is at github.com/api-evangelist/bloomberg-aim. The Naftiko Framework spec the capabilities are written against is at github.com/naftiko/framework. Fleet — the runtime that aggregates, observes, and governs — is at github.com/naftiko/fleet.

If you are a buy-side firm staring at the gap between “we have Bloomberg AIM” and “we have governed AI workflows over Bloomberg AIM,” this is the bridge. Two capability files, two REST endpoints, two MCP servers, one Fleet running it. Reviewable, observable, and defensible — in that order.