I sat down last week with Kate Holterhoff on the RedMonk MonkCast to talk about what happens to APIs and SDKs when AI shows up — co-pilots, MCP servers, agentic IDEs, the whole mess. Kate is a sharp interviewer, and she kept pulling the conversation toward a specific question I did not fully answer in the moment. Around minute 33 she asked it bluntly: “Where do you think this should be happening in the stack? Do we need a new abstraction layer to help this succeed?”

I muttered through something about Office 365 Copilot and GitHub Copilot and MCP bridging product and engineering. That answer was honest but incomplete. Watching the video back, I can hear myself working the problem out in real time and not quite landing it.

Here is the cleaner version I should have given.

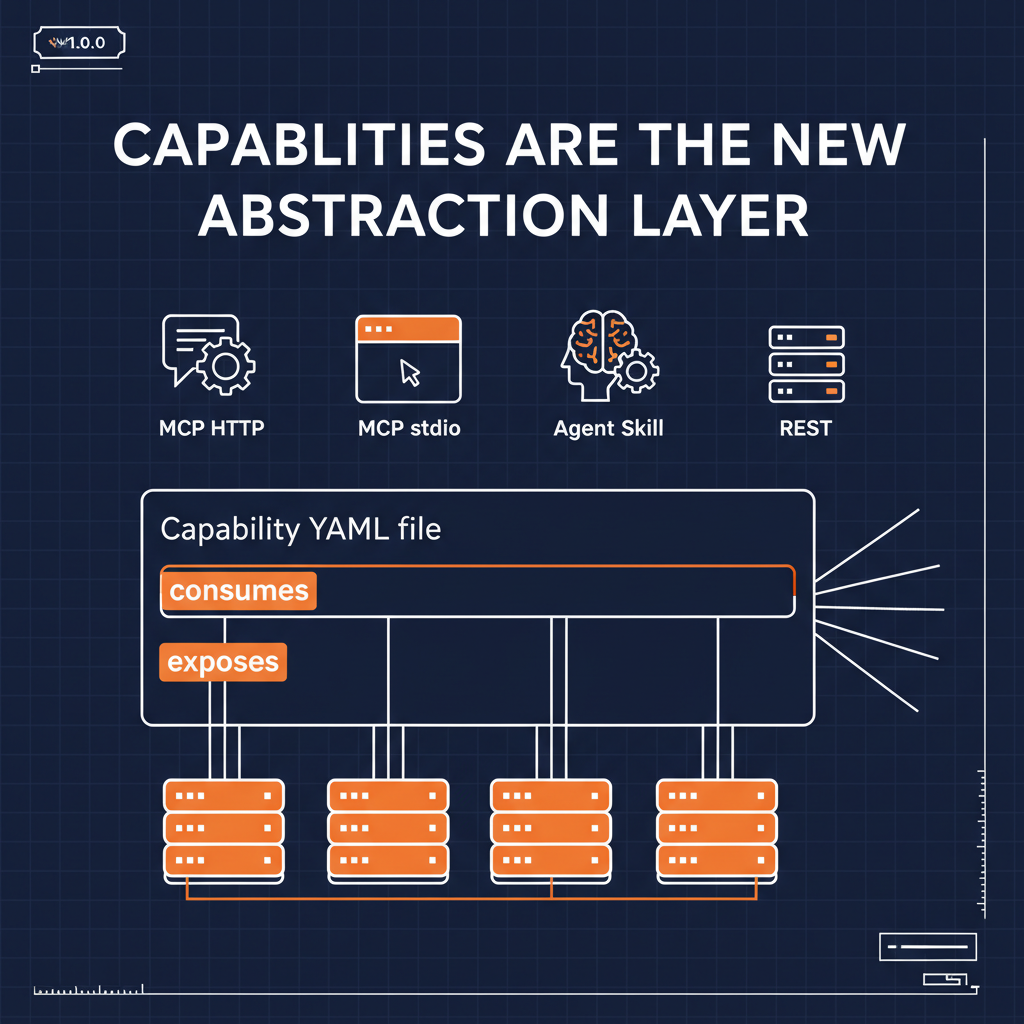

The abstraction layer already exists. It is not a new tool. It is not a new IDE. It is not a new client. It is a capability — a single declarative artifact that absorbs the work that used to be spread across the SDK, the docs, the MCP server, the agent skill, the governance rules, and the product requirements document, and then republishes it over every surface any consumer might want.

What the SDK used to do

Kate walked me through the SDK lifecycle carefully at the top of that episode, and the piece we kept circling is what an SDK actually is. An SDK is not code. An SDK is a contract, wrapped in code, bundled for a specific language. It carries the auth. It carries the endpoint surface. It carries the error handling. It carries the retry policy. And crucially it carries an opinion — shaped by whoever wrote it — about what the API is for.

That is the part most SDK conversations miss. The opinion is the abstraction. The code is the delivery.
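To make "a contract, wrapped in code" concrete, here is a toy SDK in Python. This is a minimal sketch, not any real vendor's SDK. `BillingClient`, the injected `transport` callable, the retry count and the "open orders only" filter are all hypothetical. The comments mark which part of the contract each line carries.

```python
import time
from typing import Callable

# Hypothetical transport signature: (method, url, headers) -> (status, json_body).
Transport = Callable[[str, str, dict], tuple[int, dict]]


class BillingClient:
    """Toy SDK: a contract (auth, endpoint, errors, retries) wrapped in code."""

    def __init__(self, token: str, transport: Transport,
                 base_uri: str = "https://api.billing.internal",
                 max_attempts: int = 3, backoff_s: float = 0.0) -> None:
        self._headers = {"Authorization": f"Bearer {token}"}  # carries the auth
        self._transport = transport
        self._base_uri = base_uri  # carries the endpoint surface
        self._max_attempts = max(1, max_attempts)  # carries the retry policy
        self._backoff_s = backoff_s

    def _get(self, path: str) -> dict:
        for attempt in range(self._max_attempts):
            status, body = self._transport("GET", self._base_uri + path, self._headers)
            if status < 500 or attempt == self._max_attempts - 1:
                break
            time.sleep(self._backoff_s * (attempt + 1))  # linear backoff on 5xx
        if status >= 400:  # carries the error handling
            raise RuntimeError(f"GET {path} failed with HTTP {status}")
        return body

    def list_open_orders(self) -> list[dict]:
        # Carries the opinion: this SDK decides callers want open orders only.
        orders = self._get("/v1/orders")["data"]["orders"]
        return [o for o in orders if o.get("status") == "open"]


# Usage with a fake transport that fails once with a 503, then succeeds.
calls: list[str] = []


def flaky_transport(method: str, url: str, headers: dict) -> tuple[int, dict]:
    calls.append(url)
    if len(calls) == 1:
        return 503, {}
    return 200, {"data": {"orders": [{"id": "o-1", "status": "open"},
                                     {"id": "o-2", "status": "closed"}]}}


client = BillingClient("example-token", flaky_transport)
print(client.list_open_orders())  # [{'id': 'o-1', 'status': 'open'}]
```

Swap the transport for a real HTTP library and almost nothing else changes. The `status == "open"` filter is the part a person had to decide.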

What breaks when AI shows up

The reason the “SDKs are dead” claim comes back every three to five years is that somebody notices the delivery layer is optional. In 2014 it was interactive docs and Postman. In 2019 it was CLIs. In 2025 it is OpenAPI-fed co-pilots. Every time, what is actually being replaced is the delivery: a JAR file, a pip package, an npm install. The opinion underneath never goes away. Someone always still has to decide what the API is for.

What is new with AI is that the delivery layer is fragmenting faster than it consolidates. Every enterprise team I talk to is shipping into four surfaces at once — an MCP server (HTTP) for remote copilots, an MCP server (stdio) for local IDE clients, an Agent Skill for agent frameworks, a REST API for traditional services. Pick any one of those and write a wrapper, and in six months half your consumers are using a surface you did not ship.

So “just ship an SDK” is a bad answer now. “Just ship an MCP server” is also a bad answer. Both of those are delivery layers. You need the opinion above them.

The capability as the opinion

Here is what a capability actually is:

```yaml
naftiko: "1.0.0-alpha1"
info:
  title: Customer Orders
  description: "Read open orders from the billing platform"
capability:
  consumes:
    - namespace: billing
      type: http
      baseUri: "https://api.billing.internal"
      authentication:
        type: bearer
        token: ""
      resources:
        - name: orders
          path: "/v1/orders"
          operations:
            - name: list-open-orders
              method: GET
  exposes:
    - type: mcp
      transport: http
      tools:
        - name: list-open-orders
          call: billing.list-open-orders
          hints:
            readOnly: true
            destructive: false
            idempotent: true
          outputParameters:
            - type: array
              mapping: "$.data.orders"
    - type: mcp
      transport: stdio
      tools: [...same set...]
    - type: skill
      skills: [...same set...]
    - type: rest
      endpoints: [...same set...]
```

One file. One opinion about what the integration is for. Four delivery surfaces generated from it. Auth lives in one place. Output shape lives in one place. The right-sized agent payload lives in one place. The governance rules live alongside it in Spectral and JSON Schema and are enforceable in CI.
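To show what "four delivery surfaces generated from it" means mechanically, here is a minimal Python sketch of the fan-out. It is not the Naftiko engine. The spec is hard-coded as a dict instead of parsed from YAML. The tool list is defined once, mirroring `[...same set...]`. The skill and REST descriptor shapes and the "read-only becomes GET" convention are hypothetical. The `readOnlyHint`/`destructiveHint`/`idempotentHint` names follow the tool annotations in the MCP specification.

```python
from typing import Any

# Hypothetical in-memory form of the YAML above (a real engine would parse the file).
CAPABILITY: dict[str, Any] = {
    "capability": {
        "consumes": [{
            "namespace": "billing",
            "baseUri": "https://api.billing.internal",
            "resources": [{
                "name": "orders",
                "path": "/v1/orders",
                "operations": [{"name": "list-open-orders", "method": "GET"}],
            }],
        }],
        "exposes": [{
            "type": "mcp",
            "transport": "http",
            "tools": [{
                "name": "list-open-orders",
                "call": "billing.list-open-orders",
                "hints": {"readOnly": True, "destructive": False, "idempotent": True},
            }],
        }],
    }
}


def resolve_call(cap: dict[str, Any], call: str) -> tuple[str, str]:
    """Turn 'namespace.operation' into the upstream HTTP method and URL."""
    namespace, op_name = call.split(".", 1)
    for source in cap["capability"]["consumes"]:
        if source["namespace"] != namespace:
            continue
        for resource in source["resources"]:
            for op in resource["operations"]:
                if op["name"] == op_name:
                    return op["method"], source["baseUri"] + resource["path"]
    raise KeyError(f"unknown call: {call}")


def fan_out(cap: dict[str, Any]) -> dict[str, list[dict[str, Any]]]:
    """Derive every delivery surface from the single canonical tool list."""
    tools = cap["capability"]["exposes"][0]["tools"]
    surfaces: dict[str, list[dict[str, Any]]] = {
        "mcp-http": [], "mcp-stdio": [], "skill": [], "rest": []}
    for tool in tools:
        method, url = resolve_call(cap, tool["call"])
        hints = tool["hints"]
        mcp_tool = {"name": tool["name"], "annotations": {
            "readOnlyHint": hints["readOnly"],
            "destructiveHint": hints["destructive"],
            "idempotentHint": hints["idempotent"]}}
        surfaces["mcp-http"].append(mcp_tool)
        surfaces["mcp-stdio"].append(mcp_tool)  # same descriptor, different transport
        surfaces["skill"].append({"name": tool["name"], "upstream": f"{method} {url}"})
        # Hypothetical REST convention: read-only tools become GET routes.
        surfaces["rest"].append({
            "method": "GET" if hints["readOnly"] else "POST",
            "path": f"/{tool['name']}"})
    return surfaces


for surface, entries in fan_out(CAPABILITY).items():
    print(surface, entries)
```

The design point is that no surface has its own copy of the auth, the URL, or the hints. Change one field in the spec and every surface changes with it.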

That is the abstraction Kate was asking about.

Why it carries more than an SDK ever did

This is the part worth sitting with. A capability carries things the SDK never could.

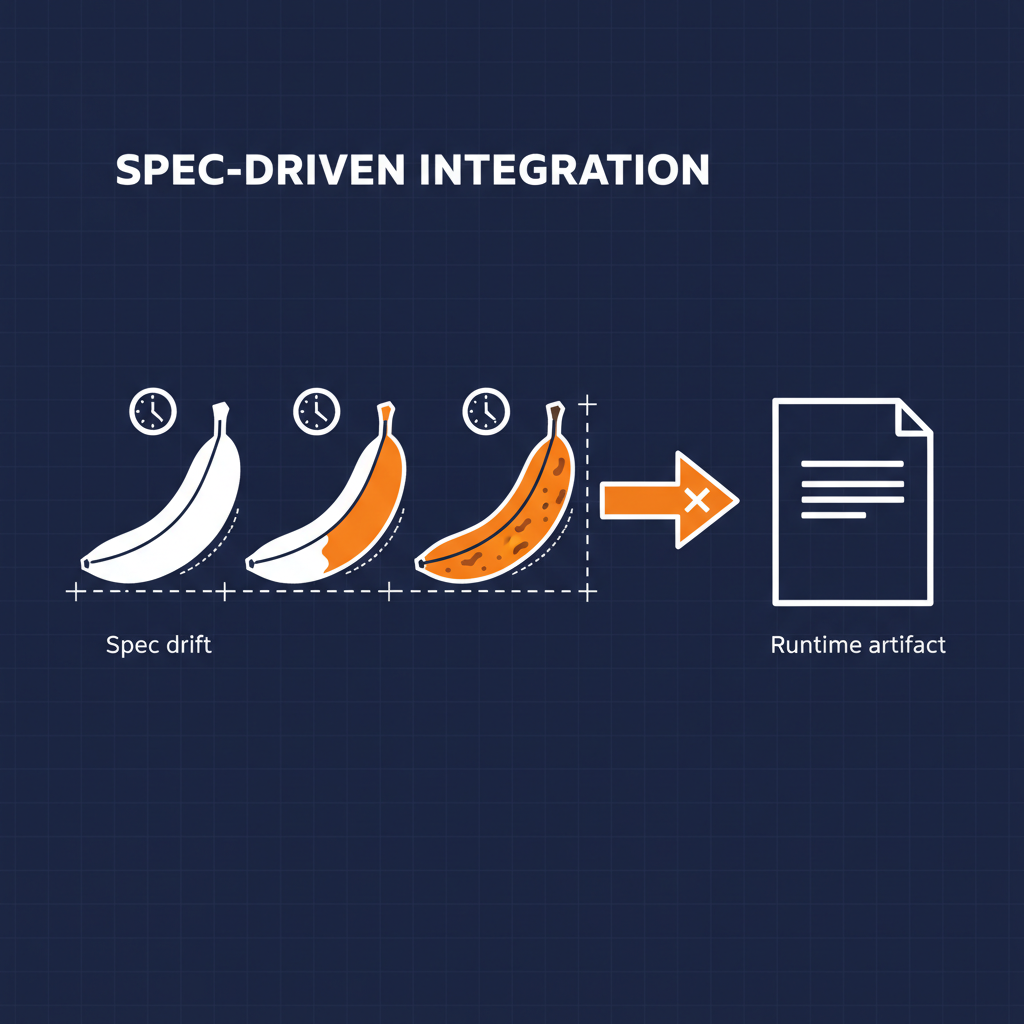

It carries deterministic and non-deterministic side-by-side. Kate was spot-on when she pointed out the tension between AI and determinism. The capability spec is deterministic — JSON Schema validates it, Spectral lints it, the engine runs it the same way every time. What the model does with the exposed tool surface is non-deterministic. The capability is where those two worlds are clamped together by design, not by accident.

It carries the product opinion, not just the engineering opinion. I talked with Kate about drift — how business intent evaporates between the PRD and the generated code. The capability spec is a file the PM can read. It is a file where the PM can see which upstream fields the model sees and which it does not. When the spec is the contract, product has somewhere to stand.
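In the spec above, that decision sits in one line: `mapping: "$.data.orders"`. Here is a minimal sketch, assuming the engine evaluates the mapping as a JSONPath expression against the upstream response. Only the plain dotted subset (`$.a.b`) is handled here. The upstream payload and field names such as `internalRiskScore` and `traceId` are hypothetical.

```python
from typing import Any


def apply_mapping(payload: Any, mapping: str) -> Any:
    """Resolve a simple dotted JSONPath such as '$.data.orders'.

    Only the '$.key.key' subset is supported; filters, wildcards and
    array indexes are deliberately out of scope for this sketch.
    """
    if not mapping.startswith("$"):
        raise ValueError(f"mapping must start with '$': {mapping}")
    node = payload
    for key in mapping.lstrip("$").strip(".").split("."):
        if key:
            node = node[key]
    return node


# Hypothetical upstream response from the billing API.
upstream_response = {
    "data": {
        "orders": [{"id": "o-1", "status": "open", "total": 42.0}],
        "internalRiskScore": 0.91,  # never reaches the model
    },
    "meta": {"page": 1, "traceId": "abc123"},
}

model_sees = apply_mapping(upstream_response, "$.data.orders")
print(model_sees)  # [{'id': 'o-1', 'status': 'open', 'total': 42.0}]
```

A PM reviewing the spec does not need to read this code. The one `mapping` line tells them that `internalRiskScore` and `meta` stay upstream.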

It carries the governance, not a compliance report. This is the piece I spent 13 months at Bloomberg wrestling with. Governance as enforcement is a losing game. Governance as guidance — built into IDE autocomplete, Spectral rules, and the schema itself — shifts left by default. Every capability YAML I write is governed the moment I save the file.
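In the post, these rules are Spectral rulesets run by Spectral itself. The Python below does not use Spectral's API. It is a sketch that imitates what two such rules might check on every save. The rule names `tool-hints-read-only` and `tool-call-known-namespace` are hypothetical. So is the second, non-compliant tool in the example spec.

```python
from typing import Any


def lint_capability(cap: dict[str, Any]) -> list[str]:
    """Return Spectral-style findings; an empty list means the file passes."""
    findings: list[str] = []
    body = cap.get("capability", {})
    namespaces = {c["namespace"] for c in body.get("consumes", [])}
    for i, exposure in enumerate(body.get("exposes", [])):
        for j, tool in enumerate(exposure.get("tools", [])):
            where = f"capability.exposes[{i}].tools[{j}]"
            # Rule 1: every tool must say whether it is read-only.
            if "readOnly" not in tool.get("hints", {}):
                findings.append(f"{where}: tool-hints-read-only: declare hints.readOnly")
            # Rule 2: every tool must call a namespace the capability consumes.
            namespace = tool.get("call", "").split(".", 1)[0]
            if namespace not in namespaces:
                findings.append(
                    f"{where}: tool-call-known-namespace: '{namespace}' is not consumed")
    return findings


spec = {
    "capability": {
        "consumes": [{"namespace": "billing"}],
        "exposes": [{"type": "mcp", "tools": [
            {"name": "list-open-orders", "call": "billing.list-open-orders",
             "hints": {"readOnly": True}},
            {"name": "delete-order", "call": "crm.delete-order"},  # hypothetical bad tool
        ]}],
    }
}

for finding in lint_capability(spec):
    print(finding)
# capability.exposes[0].tools[1]: tool-hints-read-only: declare hints.readOnly
# capability.exposes[0].tools[1]: tool-call-known-namespace: 'crm' is not consumed
```

Because the findings point at a path in the file, the same check can show up as an editor squiggle or fail a CI job. That is the guidance-over-enforcement point.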

It carries the composition. The SAP CPI iflow, the Snowflake REST API, the Google Sheet, the internal microservice — all of them can live as shared upstream capabilities and get composed into one agent-task-shaped tool. That is not something any SDK format has ever pulled off.
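In a capability, this composition is declared in the spec rather than hand-written. The Python below only sketches what the composed tool does at runtime. It assumes two hypothetical shared upstream operations, `billing_list_open_orders` and `crm_get_customer`, stubbed with in-memory data so it runs offline. The composed tool `customer_open_orders` is also hypothetical, standing in for one agent-task-shaped tool.

```python
# Hypothetical shared upstream capabilities, stubbed so the sketch runs offline.
def billing_list_open_orders() -> list[dict]:
    return [
        {"id": "o-1", "customerId": "c-7", "total": 42.0},
        {"id": "o-2", "customerId": "c-9", "total": 10.0},
        {"id": "o-3", "customerId": "c-7", "total": 8.5},
    ]


def crm_get_customer(customer_id: str) -> dict:
    customers = {"c-7": {"id": "c-7", "name": "Acme"},
                 "c-9": {"id": "c-9", "name": "Globex"}}
    return customers[customer_id]


def customer_open_orders(customer_id: str) -> dict:
    """One agent-task-shaped tool composed from two upstream operations."""
    customer = crm_get_customer(customer_id)
    orders = [o for o in billing_list_open_orders() if o["customerId"] == customer_id]
    return {
        "customer": customer["name"],
        "openOrders": [o["id"] for o in orders],
        "openTotal": sum(o["total"] for o in orders),
    }


print(customer_open_orders("c-7"))
# {'customer': 'Acme', 'openOrders': ['o-1', 'o-3'], 'openTotal': 50.5}
```

The agent sees one tool that answers one question. It never sees two APIs it has to join on its own.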

The political version

Kate caught me repeating the line I keep using in interviews: I am an interface expert, and I do not care about AI. That is true, but there is a corollary I did not say out loud on the podcast. The reason the SDK debate keeps coming back is not technical. It is political. Who owns the opinion about what the integration is for?

In 2010 the answer was the platform engineer on the producer side. In 2020 it had started to drift toward the consumer side — Postman, Bruno, internal dev platform teams. In 2026 the AI companies want that opinion to live in the model. I do not trust any of those answers on their own.

The capability spec puts the opinion back in Git, back in a file the platform team, the product team, the security team, and the agent all have to read the same way. That is the politics of it. The capability is not a neutral technical artifact. It is a statement that the opinion belongs to the organization, not to whichever tool vendor happens to be winning this quarter.

Where this is going

I have spent the last month stress-testing this pattern against real provider surfaces. Snowflake: 38 OpenAPI definitions compressed into 6 workflow capabilities exposing 104 MCP tools, 34 Agent Skills, and 52 REST endpoints. SAP CPI: iflows wrapped as governed capabilities without rewriting a single one. The Dex personal CRM: 11,680 contacts wrapped into a capability that surfaces the API’s contact shape as a schema.org Person. Naftiko’s own internal feeds: 26 company blog RSS feeds wrapped as one capability exposing both MCP and REST surfaces.

Every one of those would have been a Python adapter and a bespoke wrapper a year ago. None of them are, now.

That is what I should have told Kate.

Where Naftiko Lives

- RedMonk MonkCast episode — SDKs in the AI Era with Kin Lane — the conversation this post is a continuation of.

- Naftiko Framework — the Apache 2.0 framework. Capability spec, HTTP source adapters, MCP/Skill/REST exposure types, output reshaping, Spectral ruleset.

- Naftiko Fleet Community Edition — the free edition. Framework + VS Code extension + Backstage templates.

- Naftiko Capabilities Schema — the JSON Schema and related examples that define what a valid Naftiko capability looks like. This is where the abstraction actually lives.

I will keep reporting on what I am seeing.