I keep running into the same conversation. A team wants to put an agent in front of “the data,” and somebody finally has to admit that a meaningful slice of “the data” lives in CSV files. A payroll export from ADP. A vendor price list a partner emails on the first of the month. A nightly inventory drop the warehouse system writes to an SFTP bucket that somebody put behind nginx so it could be curled.

None of those are JSON. None of them are MCP. All of them are real, in production, and quietly load-bearing. And every consumer — the BI tool, the agent prototype, the script some director wrote in 2019 — is parsing them differently.

This post is about the Naftiko capability pattern for that exact mess: converting CSV to JSON from multiple sources, in one capability, with no upstream rewrite.

The CSV-as-API problem

The honest shape of CSV in the enterprise is not “spreadsheet.” It is “the cheapest contract two systems could agree on.” That contract has known problems.

- No types. Every column is a string until somebody coerces it.

- No schema discovery. Column order in row 1 is the only thing standing between you and chaos.

- No versioning. Adding a column at the end is fine. Adding one in the middle breaks everyone.

- No discoverability. The consumer learns about the file from a Confluence page that was last edited three years ago.
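The column-order problem is easy to reproduce. Here is a minimal Python sketch using only the standard library; the sample price list and the two helper functions are made up for illustration. It reads the same file by position and by header name, before and after a producer inserts a column in the middle.

```python
import csv
import io

# Hypothetical vendor price list, before and after the producer
# inserts a "currency" column in the middle of the file.
BEFORE = "sku,unit_price\nA-100,9.50\n"
AFTER = "sku,currency,unit_price\nA-100,USD,9.50\n"


def price_by_position(text: str) -> str:
    """Read the price the fragile way: second column of the first data row."""
    rows = list(csv.reader(io.StringIO(text)))
    return rows[1][1]


def price_by_header(text: str) -> str:
    """Read the price by column name, treating row 1 as the header."""
    rows = list(csv.DictReader(io.StringIO(text)))
    return rows[0]["unit_price"]


print(price_by_position(BEFORE), price_by_header(BEFORE))  # 9.50 9.50
print(price_by_position(AFTER), price_by_header(AFTER))    # USD 9.50
```

The positional reader does not fail. It quietly returns the wrong column, which is why this class of breakage tends to surface in a report rather than in a stack trace.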

The reflex is to “modernize” — stand up a new service, rewrite the producer, get the data into a real API. That is a multi-quarter project before anyone touches an agent. The capability-first answer is the opposite: declare what is already there, exactly as it is, and put a JSON-shaped contract on top.

One capability, three CSV drops

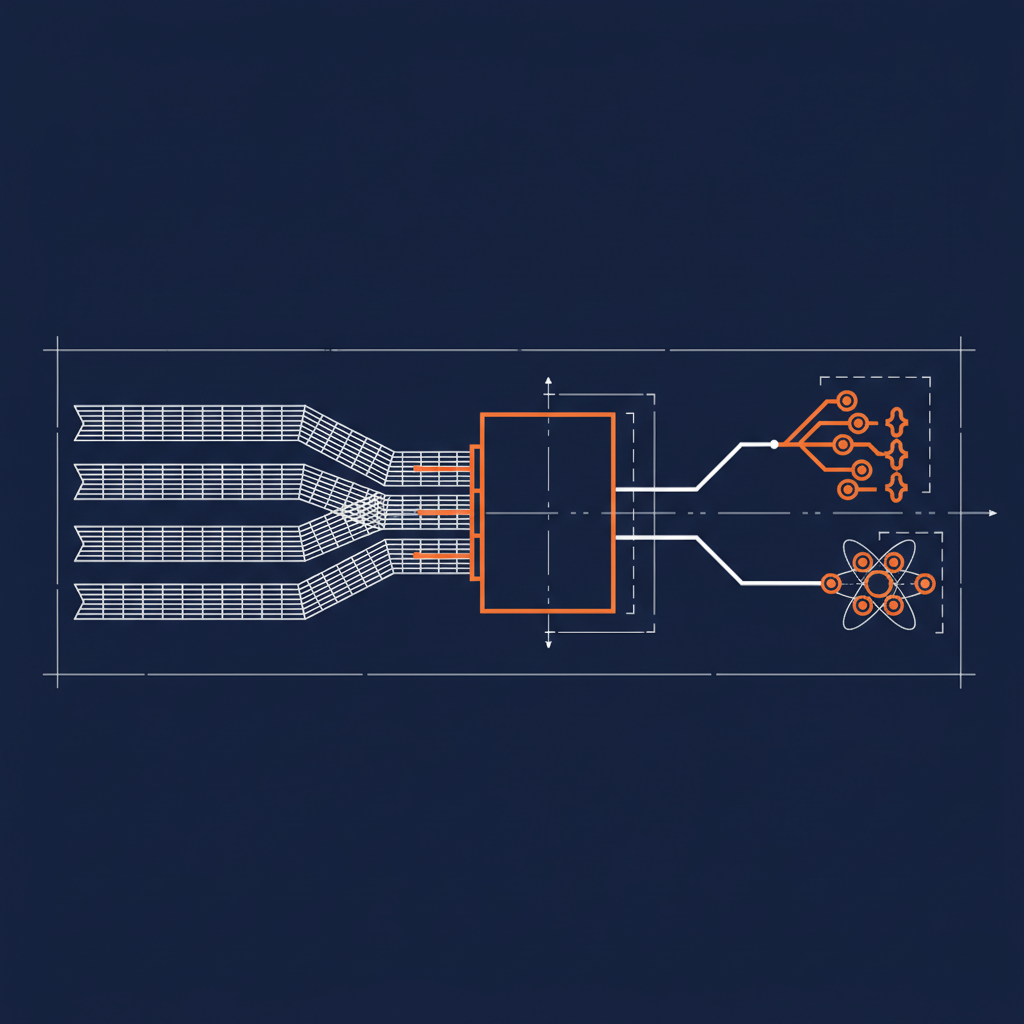

The Naftiko spec already has the primitive that makes this work — outputRawFormat: "csv" on a consumed operation. The framework decodes the response body, treats row 1 as headers, and gives every downstream outputParameters mapping a JSON-shaped view rooted at $.

That single field is the difference between “we need a CSV ingestion service” and “we need a YAML file.”

Here is the shape of a single capability that consumes three different CSV drops — payroll headcount, vendor pricing, warehouse inventory — and exposes all three as JSON via REST and MCP.

naftiko: "1.0.0-alpha1"

info:
  title: Operational Snapshots From CSV
  description: "Three legacy CSV drops normalized into one governed JSON capability"

capability:
  consumes:
    - namespace: payroll
      type: http
      baseUri: "https://exports.payroll.internal"
      authentication:
        type: bearer
        token: ""
      resources:
        - name: headcount
          path: "/exports/headcount.csv"
          operations:
            - name: get-headcount
              method: GET
              outputRawFormat: csv

    - namespace: vendor
      type: http
      baseUri: "https://files.vendor-portal.com"
      authentication:
        type: apiKey
        in: header
        name: X-Vendor-Key
        value: ""
      resources:
        - name: pricing
          path: "/monthly/pricing.csv"
          operations:
            - name: get-pricing
              method: GET
              outputRawFormat: csv

    - namespace: warehouse
      type: http
      baseUri: "https://drops.warehouse.internal"
      authentication:
        type: basic
        username: ""
        password: ""
      resources:
        - name: inventory
          path: "/nightly/inventory.csv"
          operations:
            - name: get-inventory
              method: GET
              outputRawFormat: csv

  exposes:
    - type: rest
      namespace: snapshots
      resources:
        - path: /headcount
          name: headcount
          operations:
            - method: GET
              call: payroll.get-headcount
              outputParameters:
                - type: array
                  mapping: "$"
                  items:
                    type: object
                    properties:
                      employeeId:
                        type: string
                        mapping: "$.employee_id"
                      department:
                        type: string
                        mapping: "$.department"
                      costCenter:
                        type: string
                        mapping: "$.cost_center"
                      employmentType:
                        type: string
                        mapping: "$.employment_type"

        - path: /pricing
          name: pricing
          operations:
            - method: GET
              call: vendor.get-pricing
              outputParameters:
                - type: array
                  mapping: "$"
                  items:
                    type: object
                    properties:
                      sku:
                        type: string
                        mapping: "$.sku"
                      vendor:
                        type: string
                        mapping: "$.vendor"
                      unitPrice:
                        type: number
                        mapping: "$.unit_price"
                      effectiveDate:
                        type: string
                        mapping: "$.effective_date"

        - path: /inventory
          name: inventory
          operations:
            - method: GET
              call: warehouse.get-inventory
              outputParameters:
                - type: array
                  mapping: "$"
                  items:
                    type: object
                    properties:
                      sku:
                        type: string
                        mapping: "$.sku"
                      warehouseId:
                        type: string
                        mapping: "$.warehouse_id"
                      onHand:
                        type: integer
                        mapping: "$.on_hand"
                      reorderPoint:
                        type: integer
                        mapping: "$.reorder_point"

    - type: mcp
      namespace: snapshots
      tools:
        - name: list-headcount
          description: "Current headcount snapshot from the payroll CSV export"
          call: payroll.get-headcount
          outputParameters:
            - type: array
              mapping: "$"
              items:
                type: object
                properties:
                  employeeId:
                    type: string
                    mapping: "$.employee_id"
                  department:
                    type: string
                    mapping: "$.department"

        - name: list-pricing
          description: "Latest vendor pricing snapshot from the monthly CSV"
          call: vendor.get-pricing
          outputParameters:
            - type: array
              mapping: "$"
              items:
                type: object
                properties:
                  sku:
                    type: string
                    mapping: "$.sku"
                  unitPrice:
                    type: number
                    mapping: "$.unit_price"

        - name: list-inventory
          description: "Nightly warehouse inventory snapshot"
          call: warehouse.get-inventory
          outputParameters:
            - type: array
              mapping: "$"
              items:
                type: object
                properties:
                  sku:
                    type: string
                    mapping: "$.sku"
                  onHand:
                    type: integer
                    mapping: "$.on_hand"

Three sources. One capability. Six exposed surfaces — three REST resources for traditional clients, three MCP tools for agents. Zero upstream rewrites.

What the framework is doing for you

The piece that earns its keep is small but specific. When you set outputRawFormat: csv on a consumed operation, the engine:

- Fetches the CSV exactly as the upstream system serves it — bytes on the wire, no transformation.

- Decodes the CSV using the first row as headers.

- Hands you a JSON-shaped view at the root $: an array of row-objects keyed by header name.

- Lets your outputParameters use ordinary JSONPath ($.sku, $.unit_price) to map to your contract.
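As a rough mental model of those four steps, here is a Python sketch. It is not the Naftiko engine's code: the RAW_BODY bytes stand in for the HTTP fetch, PRICING_CONTRACT is a hypothetical in-memory form of an outputParameters block, and resolve handles only the $.field paths used in this post rather than full JSONPath.

```python
import csv
import io
import json

# Step 1 stand-in: the upstream response body, exactly as served.
RAW_BODY = b"sku,vendor,unit_price,effective_date\nA-100,Acme,9.50,2024-06-01\n"

# Hypothetical in-memory form of an outputParameters block:
# exposed name -> (declared type, path into each row).
PRICING_CONTRACT = {
    "sku": ("string", "$.sku"),
    "unitPrice": ("number", "$.unit_price"),
}

# Casts for the three scalar types that appear in the capability above.
CASTS = {"string": str, "number": float, "integer": int}


def decode_csv(body: bytes) -> list[dict[str, str]]:
    """Steps 2 and 3: row 1 becomes the headers; the result is the array at $."""
    return list(csv.DictReader(io.StringIO(body.decode("utf-8"))))


def resolve(row: dict[str, str], path: str) -> str:
    """Look up a '$.field' path in one row (assumption: no nested paths)."""
    if not path.startswith("$."):
        raise ValueError(f"unsupported path: {path}")
    return row[path[2:]]


def apply_contract(rows, contract):
    """Step 4: rename and cast each row according to the contract."""
    return [
        {name: CASTS[kind](resolve(row, path)) for name, (kind, path) in contract.items()}
        for row in rows
    ]


result = apply_contract(decode_csv(RAW_BODY), PRICING_CONTRACT)
print(json.dumps(result))  # [{"sku": "A-100", "unitPrice": 9.5}]
```

Columns the contract does not mention, here vendor and effective_date, simply never reach the consumer.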

The same primitive works for tsv, psv, yaml, xml, avro, protobuf, html, and markdown. The capability spec does not care that the upstream is a CSV drop. It cares that you declared the contract you want to expose.

The CSV is not your API. The capability is your API.

Three dimensions

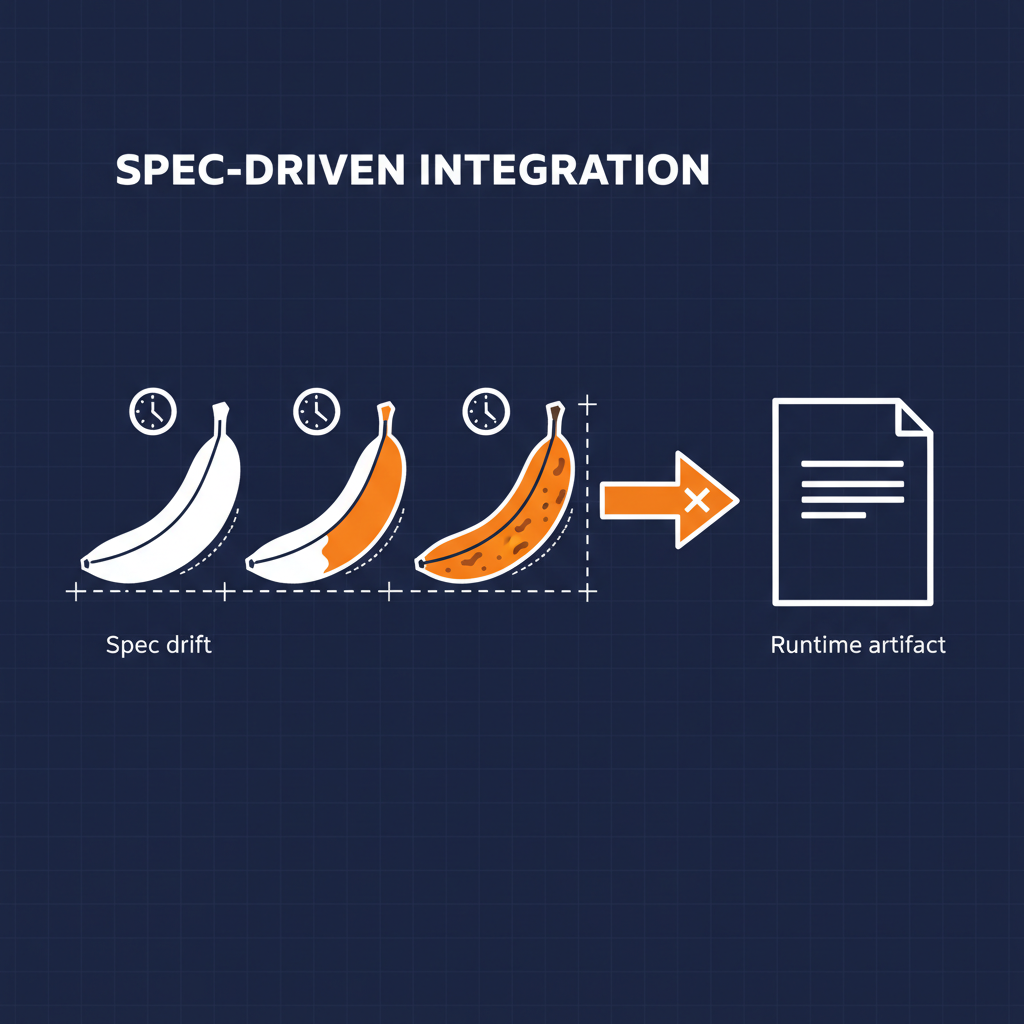

Technology. The hidden cost of CSV-as-an-API is not parsing. Every language has a CSV parser. The cost is that every consumer reimplements the type coercion, the column-name handling, the missing-row defaults, and the “what happens when the vendor adds a column” logic in its own way. Centralizing that into a capability spec makes the contract a file, not a tribal practice. When the vendor adds a new column, you change one mapping in Git — not five different downstream consumers.
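What "the contract is a file" buys you can be sketched in Python. This illustrates the idea, not how Naftiko implements it: the DROP sample, the CONTRACT table, and the choice to turn an empty cell into None are all assumptions made for the example.

```python
import csv
import io

# Hypothetical inventory drop: one row has an empty on_hand cell, and the
# producer has appended a "bin" column that the contract does not know about.
DROP = (
    "sku,warehouse_id,on_hand,reorder_point,bin\n"
    "A-100,W1,42,10,B7\n"
    "B-200,W1,,5,C2\n"
)

# One place that decides names, types, and empty-cell defaults.
# exposed name -> (source column, cast, default when the cell is empty)
CONTRACT = {
    "sku": ("sku", str, None),
    "warehouseId": ("warehouse_id", str, None),
    "onHand": ("on_hand", int, None),
    "reorderPoint": ("reorder_point", int, None),
}


def coerce(row: dict) -> dict:
    """Apply the contract to one decoded CSV row."""
    out = {}
    for name, (column, cast, default) in CONTRACT.items():
        cell = (row.get(column) or "").strip()
        out[name] = cast(cell) if cell else default
    return out


rows = [coerce(r) for r in csv.DictReader(io.StringIO(DROP))]
for row in rows:
    print(row)
```

Every consumer now sees the same answer for the empty on_hand cell, and the extra bin column is ignored until somebody adds it to the contract on purpose.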

Business. CSV drops tend to be where the real money lives — payroll exports, pricing files, inventory feeds, regulatory filings. They are the integrations leadership cannot afford to break. Declaring them as capabilities means they keep working exactly as they do today, while the team finally has a JSON-shaped contract to point at when somebody asks “can the agent see headcount?” The answer becomes “yes, here is the MCP tool” instead of “let me schedule a call with the payroll team.”

Politics. This is the quiet win. The owner of the payroll CSV does not have to change a thing. The owner of the warehouse drop does not have to change a thing. The vendor portal stays the way the vendor wrote it. The capability lives next to the consumers, not the producers. Nobody’s roadmap moves. Nobody’s on-call rotation expands. The CSV producers keep doing what they have always done, and the AI conversation moves forward anyway.

Where this fits

This is the same shape as Use Case 4 — Elevate Google Sheets API and Use Case 3 — Elevate existing APIs. The Sheets case takes a positional JSON array and gives it column names. The CSV case takes an actual CSV file and does the same thing. Multi-source is just the same pattern with more consumes blocks and more namespaces.
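For comparison, the Sheets half of that sentence can be sketched in a few lines of Python. The SHEET_VALUES payload is invented and only assumes an array of rows whose first row holds the column names; name_columns is a hypothetical helper, not part of any Naftiko or Google API.

```python
from itertools import zip_longest

# Invented positional payload: first row is the header, the rest are data.
SHEET_VALUES = [
    ["sku", "warehouse_id", "on_hand"],
    ["A-100", "W1", "42"],
    ["B-200", "W2"],  # short row: the trailing cell is missing
]


def name_columns(values: list[list[str]]) -> list[dict[str, str]]:
    """Turn positional rows into objects keyed by the header row.

    Assumes no data row is longer than the header; short rows are
    padded with empty strings.
    """
    header, *data = values
    return [dict(zip_longest(header, row, fillvalue="")) for row in data]


for record in name_columns(SHEET_VALUES):
    print(record)
```

Once the rows are keyed by name, the rest of the pattern is identical to the CSV case.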

If your enterprise has more CSV drops than it has modern APIs — and most do — this is the shortest path from “we have data” to “we have an API our agents can use.”

- Wiki: Guide — Use Cases

- Spec: Specification — Schema (outputRawFormat)

- GitHub: github.com/naftiko/framework

- Fleet Community Edition: github.com/naftiko/fleet

The CSVs are not going anywhere. Stop pretending they will. Wrap them.