An OpenAPI spec tells your agent how to call the API. It does not tell the agent what the call costs.

That is a gap nobody noticed when the only consumer of OpenAPI was a developer building a one-off integration. The developer reads the docs, signs up for the service, and notices the bill at the end of the month. The cost discovery loop is human-paced and human-bounded.

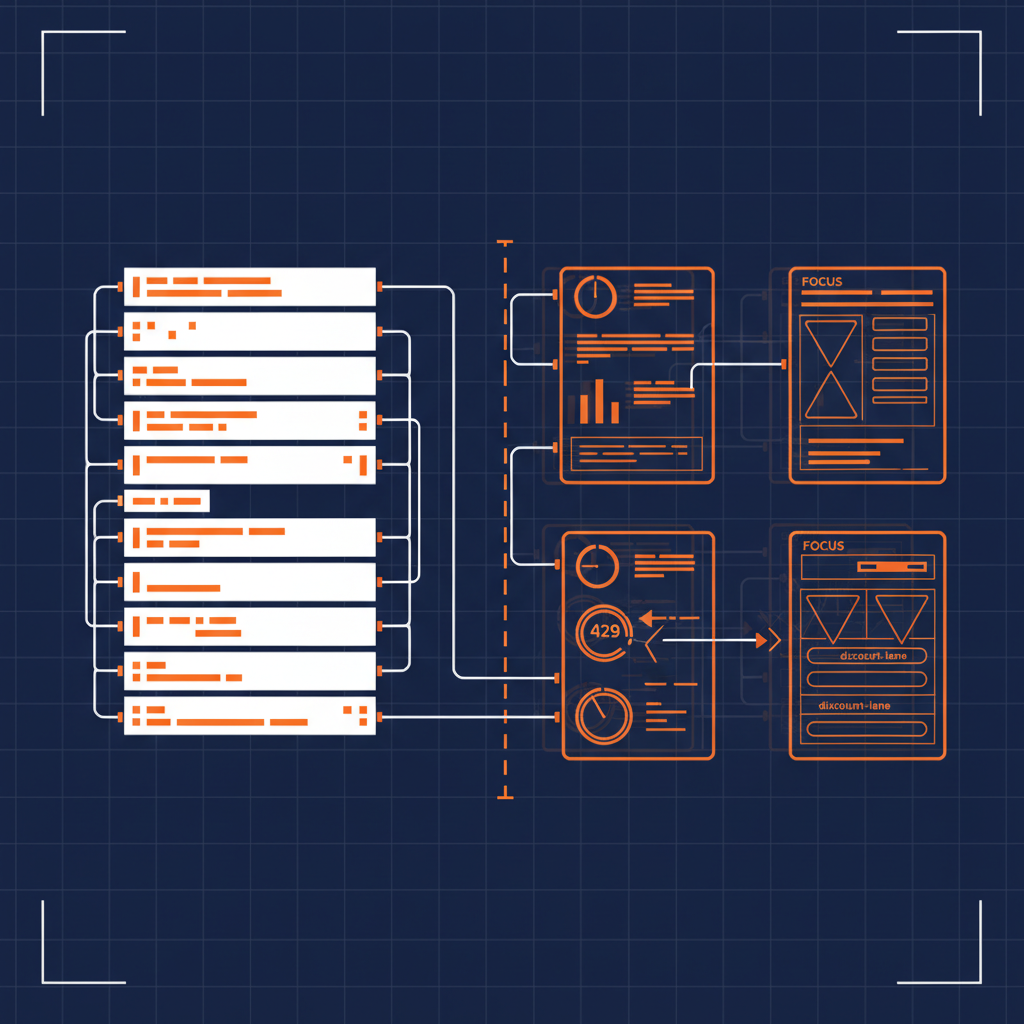

That gap becomes load-bearing the moment the consumer is an agent. The agent fetches the spec, picks a tool, and calls it — at machine speed, in a loop, with whatever cardinality the prompt produces. It has no way to know whether each call is a tenth of a cent or fifty dollars. It has no way to know whether the call counts against a free tier, an included quota, or an overage rate. It has no way to know whether calling the same endpoint a hundred times in a minute is well below a steady-state limit or thirty seconds away from a 429.

Every OpenAPI spec on the public internet is missing the financial half of the contract. Until that gets fixed, every “AI agent for FinOps” demo is reading prose pricing pages and hallucinating the answer.

The three artifacts the OpenAPI spec assumed someone else would publish

The financial half of an API contract has three parts. None of them belong in the OpenAPI spec itself — they describe the consumer’s commercial relationship with the provider, not the wire protocol. But they need to live next to the spec, in the same git repository, served from the same apis.json index, with the same machine-readable rigor.

Plans and Pricing. The catalog of tiers the provider offers, what each tier costs, what it includes, and what every charged unit (token, request, transaction, segment, MAU, container-hour) costs at each tier. Free, Pro, Enterprise — and the actual numbers, not “contact sales” prose.

Rate Limits. The throughput contract. Per-account, per-token, per-endpoint, per-tier. The headers the provider returns. The HTTP status code the provider sends when you exceed the limit. The recovery behavior. Burst allowance vs steady-state. The specific 429 the agent should expect to get back if it calls in a tight loop.

FinOps Framework. The unit-economics contract. Which dimensions the provider bills on. Which dimensions the consumer can use to allocate cost back to a team, an environment, a feature, a customer. The FOCUS columns the provider’s billing export populates. The discount lanes (caching, batch, reserved, committed-use). The meters the agent will see show up on the invoice.

These three artifacts are the difference between an agent that can call an API and an agent that can responsibly call an API. The first one is a 2024 demo. The second one is a 2026 production system.

A schema for each

API Commons already defines Plans and Rate Limits. The FinOps Foundation already defines FOCUS. What is missing is the convention that every API provider publishes one of each in its apis.json repo, alongside the OpenAPI spec, linked through the same properties references that make the OpenAPI spec itself discoverable.
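As a rough sketch of what that discovery could look like, the snippet below walks an apis.json-style index and reports which of the four artifacts each API links to. The `type` values (`Plans`, `RateLimits`, `FinOps`) and the file paths are assumptions made for illustration, not confirmed names from the apis.json or API Commons vocabularies.

```python
# Hypothetical apis.json fragment. The "type" values and URLs are
# illustrative assumptions, not confirmed vocabulary.
APIS_JSON = {
    "name": "Example Provider",
    "apis": [
        {
            "name": "Example API",
            "properties": [
                {"type": "OpenAPI", "url": "openapi/example-openapi.yml"},
                {"type": "Plans", "url": "plans/example-plans.yml"},
                {"type": "RateLimits", "url": "rate-limits/example-rate-limits.yml"},
                {"type": "FinOps", "url": "finops/example-finops.yml"},
            ],
        }
    ],
}

REQUIRED_TYPES = ("OpenAPI", "Plans", "RateLimits", "FinOps")


def find_artifacts(index: dict) -> dict:
    """Map each API name to {property type: url} for the required types."""
    found = {}
    for api in index.get("apis", []):
        found[api["name"]] = {
            prop["type"]: prop["url"]
            for prop in api.get("properties", [])
            if prop.get("type") in REQUIRED_TYPES
        }
    return found


def missing_artifacts(index: dict) -> dict:
    """List, per API, the required artifact types the index does not link."""
    return {
        name: [t for t in REQUIRED_TYPES if t not in props]
        for name, props in find_artifacts(index).items()
    }
```

A consumer could run `missing_artifacts` against a provider's index and treat any non-empty list as "cost contract not published".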

A minimal plans/ artifact for an API provider:

    specification: API Commons Plans
    specificationVersion: '0.1'
    provider: Anthropic
    plans:
      - id: anthropic-claude-sonnet-4-6
        name: Claude Sonnet 4.6
        type: usage-based
        entries:
          - label: Input tokens
            type: metered
            metric: tokens
            unit: 1000000
            price: '3.00'
          - label: Output tokens
            type: metered
            metric: tokens
            unit: 1000000
            price: '15.00'
          - label: Cache read
            type: metered
            metric: tokens
            unit: 1000000
            price: '0.30'
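To show what an agent or capability could do with this file, here is a minimal cost estimator. It assumes the plan entries have already been parsed into Python dictionaries, that `price` is the charge per `unit` of the metric, and that prices are in USD (the artifact above does not state a currency). The function name `estimate_cost` is hypothetical.

```python
from decimal import Decimal

# Entries copied from the plans artifact above, already parsed.
PLAN_ENTRIES = [
    {"label": "Input tokens", "unit": 1_000_000, "price": "3.00"},
    {"label": "Output tokens", "unit": 1_000_000, "price": "15.00"},
    {"label": "Cache read", "unit": 1_000_000, "price": "0.30"},
]


def estimate_cost(entries, usage):
    """Estimate the charge for `usage`, a {label: quantity} mapping.

    Assumes `price` is charged per `unit` of the metric. Decimal avoids
    floating-point drift on fractions of a cent.
    """
    by_label = {entry["label"]: entry for entry in entries}
    total = Decimal("0")
    for label, quantity in usage.items():
        entry = by_label[label]  # KeyError means the plan has no such meter
        total += Decimal(entry["price"]) * Decimal(quantity) / Decimal(entry["unit"])
    return total


# One call with 2,000 input tokens and 500 output tokens.
cost = estimate_cost(PLAN_ENTRIES, {"Input tokens": 2000, "Output tokens": 500})
```

Under these assumptions the call works out to 0.006 for input plus 0.0075 for output, or 0.0135 in total.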

A minimal rate-limits/ artifact:

    specification: API Commons Rate Limits
    provider: Anthropic
    algorithm: token-bucket
    headers:
      limit: anthropic-ratelimit-requests-limit
      remaining: anthropic-ratelimit-requests-remaining
      reset: anthropic-ratelimit-requests-reset
      retryAfter: retry-after
    responseCodes:
      throttled: 429
    limits:
      - tier: Tier 1
        model: Claude Sonnet 4.x
        rpm: 50
        itpm: 30000
        otpm: 8000
      - tier: Tier 2
        model: Claude Sonnet 4.x
        rpm: 1000
        itpm: 450000
        otpm: 90000
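A consumer can use the header names and the throttled status code from this file without hard-coding anything provider-specific. The sketch below assumes the response headers arrive as a plain dictionary and that `retry-after` carries a number of seconds; the HTTP-date form of that header is not handled. The helper names are hypothetical.

```python
# Values copied from the rate-limits artifact above.
HEADERS = {
    "limit": "anthropic-ratelimit-requests-limit",
    "remaining": "anthropic-ratelimit-requests-remaining",
    "reset": "anthropic-ratelimit-requests-reset",
    "retryAfter": "retry-after",
}
THROTTLED = 429


def _lowercase_keys(headers):
    """HTTP header names are case-insensitive, so normalise before lookup."""
    return {key.lower(): value for key, value in headers.items()}


def seconds_to_wait(status, headers, default_backoff=1.0):
    """Return how long to pause before retrying, or 0.0 if not throttled."""
    if status != THROTTLED:
        return 0.0
    raw = _lowercase_keys(headers).get(HEADERS["retryAfter"])
    try:
        return float(raw)
    except (TypeError, ValueError):
        # Header missing or not a plain number of seconds: fall back.
        return default_backoff


def remaining_requests(headers):
    """Return the advertised request headroom, or None if not reported."""
    raw = _lowercase_keys(headers).get(HEADERS["remaining"])
    if raw is None or not raw.isdigit():
        return None
    return int(raw)
```

Calling `remaining_requests` after each successful response lets the caller slow down before the provider has to send a 429.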

A minimal finops/ artifact:

    specification: FinOps Framework
    provider: Anthropic
    alignedWith:
      framework: FinOps Foundation Framework
      dataSpec: FOCUS
      dataSpecVersion: '1.3'
    focusColumns:
      ServiceName: Anthropic API
      ServiceCategory: AI and Machine Learning
      PricingCategory: Usage-Based
      PricingUnit: MTok
      BillingCurrency: USD
    meters:
      - name: input_tokens
        unit: token
        aggregation: sum
        dimensions: [model, workspace, service_tier]
      - name: cache_read_input_tokens
        unit: token
        aggregation: sum
    discountModels:
      - name: Prompt caching
        discount: Cache reads at 0.1x base input price
      - name: Batch API
        discount: 50% off both input and output tokens
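The meters and dimensions are what make allocation possible. As an illustration, the sketch below sums input-side spend per workspace using the two meters above, the 3.00 per MTok input price from the plans artifact, and the 0.1x cache-read discount. The usage records are invented sample data, and the sketch assumes the two meters are disjoint, meaning cache-read tokens are not also counted in `input_tokens`.

```python
from collections import defaultdict
from decimal import Decimal

MTOK = Decimal(1_000_000)
INPUT_PRICE_PER_MTOK = Decimal("3.00")   # from the plans artifact
CACHE_READ_MULTIPLIER = Decimal("0.1")   # from discountModels above

# Invented sample usage records, tagged with the meter dimensions.
USAGE = [
    {"workspace": "search", "model": "sonnet",
     "input_tokens": 1_000_000, "cache_read_input_tokens": 0},
    {"workspace": "search", "model": "sonnet",
     "input_tokens": 500_000, "cache_read_input_tokens": 2_000_000},
    {"workspace": "support", "model": "sonnet",
     "input_tokens": 250_000, "cache_read_input_tokens": 0},
]


def allocate(records, dimension):
    """Sum input-side cost per value of `dimension` (e.g. "workspace")."""
    totals = defaultdict(Decimal)  # Decimal() starts each bucket at 0
    for record in records:
        full_price = Decimal(record["input_tokens"]) * INPUT_PRICE_PER_MTOK / MTOK
        cached = (Decimal(record["cache_read_input_tokens"])
                  * INPUT_PRICE_PER_MTOK * CACHE_READ_MULTIPLIER / MTOK)
        totals[record[dimension]] += full_price + cached
    return dict(totals)


by_workspace = allocate(USAGE, "workspace")
```

With this sample data, `search` is allocated 5.10 and `support` 0.75. The same function groups by `model` or any other dimension the records carry.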

Three files. None of them belong in the OpenAPI spec. All of them belong next to it.

Capability runtime, not capability bolt-on

The reason this matters for capability-based architectures is the loop. A capability runtime is the deterministic substrate the agent calls into. The agent reasons; the capability returns a typed, audited result. That is the contract that keeps the agent inside an auditable blast radius.

Every capability that proxies a paid third-party API needs to know the cost of the underlying call to do its job. Cost is part of the contract the capability honors back to the runtime. Without machine-readable plans, rate limits, and FinOps metadata, the capability is operating with the same blindness as the agent above it. With them, the capability can:

- Reject obviously bad calls before they hit the wire. A loop calling a $0.50/call endpoint a thousand times is a $500 bill. The capability should refuse, log it, and bubble up an exception the agent can read.

- Pick the right model or tier per call. Claude Sonnet for the easy work, Opus for the hard work, Haiku for the bulk work, with the choice driven by the FOCUS-aligned cost meter the capability already knows about.

- Honor the rate limit before the upstream sends back the 429. Token-bucket math is deterministic; the capability should know it has 30 requests of headroom left in this minute and queue the rest.

- Attribute the spend back to a workspace, project, or feature. The dimensions the capability already tags every call with line up with the FOCUS columns the provider’s billing export populates. Chargeback writes itself.
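The first and third behaviors in the list above can be sketched together as a small gate that a capability consults before every upstream call. This is a local approximation under stated assumptions, not any provider's actual algorithm: the class names `TokenBucket`, `CostGate`, and `BudgetExceeded` are hypothetical, and the per-call cost and refill rate would come from the plans and rate-limits artifacts.

```python
import time
from decimal import Decimal


class BudgetExceeded(Exception):
    """Raised when the next call would cost more than the budget left."""


class TokenBucket:
    """Local token bucket: `capacity` burst, refilled continuously."""

    def __init__(self, capacity, refill_per_second, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def try_acquire(self):
        """Take one token if available; never blocks."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class CostGate:
    """Checks budget first, then rate headroom, before a call goes out."""

    def __init__(self, budget, cost_per_call, bucket):
        self.remaining_budget = Decimal(budget)
        self.cost_per_call = Decimal(cost_per_call)
        self.bucket = bucket

    def authorize(self):
        """Return True to send now, False to queue; raise if over budget."""
        if self.cost_per_call > self.remaining_budget:
            raise BudgetExceeded(
                f"call costs {self.cost_per_call}, only {self.remaining_budget} left"
            )
        if not self.bucket.try_acquire():
            return False  # no rate headroom: caller should queue and retry
        self.remaining_budget -= self.cost_per_call
        return True
```

For a 50 requests-per-minute tier, the bucket would be built as `TokenBucket(capacity=50, refill_per_second=50 / 60)`. Raising an exception on budget exhaustion, rather than returning a flag, gives the agent a readable reason it can act on.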

A capability runtime that does not consume cost metadata is a runtime that can do everything except the one job a finance team would actually pay for: predict the bill before it is incurred.

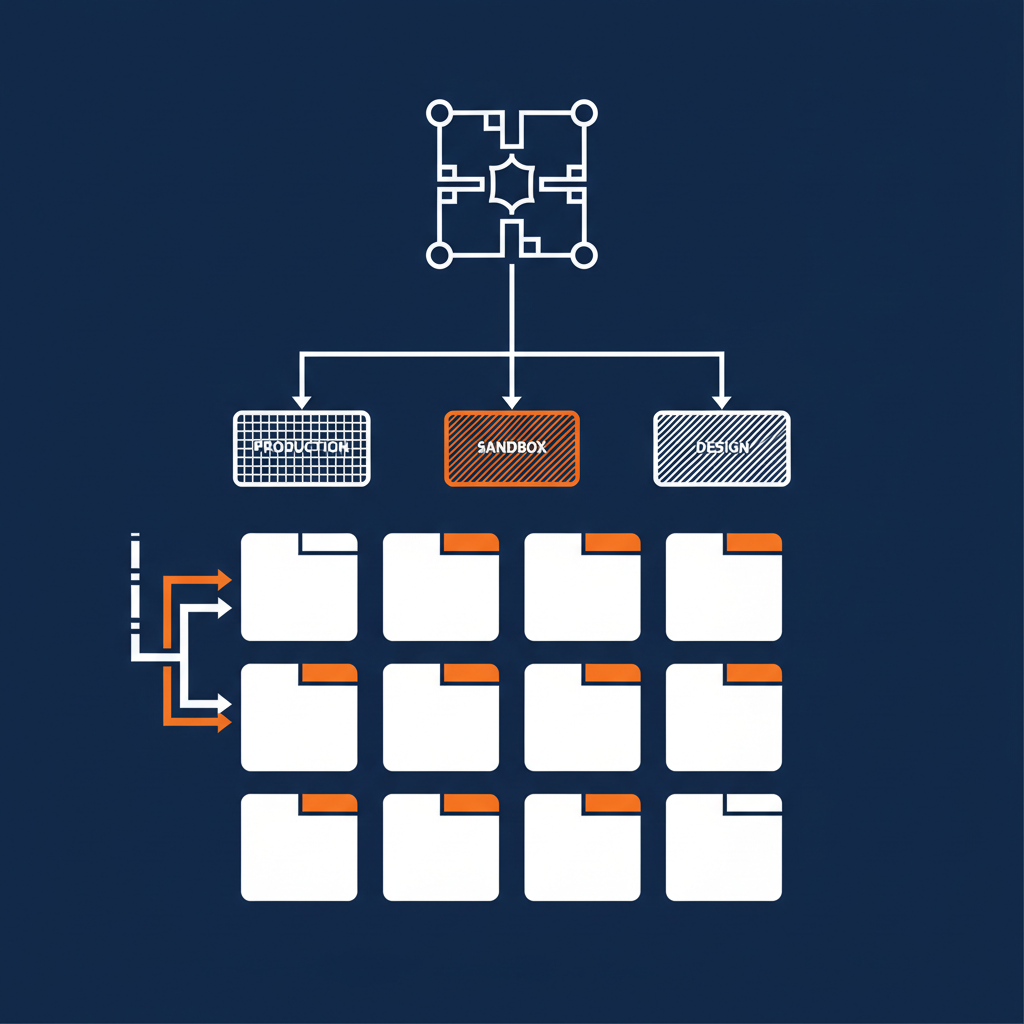

What this looks like at scale

We just generated 11,511 of these artifacts — three each for 3,837 API provider repositories — as scaffold defaults. We then went back and reconciled 184 well-known providers (Anthropic, OpenAI, Stripe, Twilio, GitHub, AWS, Datadog, Snowflake, MongoDB, Salesforce, Cloudflare, Vercel, Supabase, all the AI labs, all the comms platforms, all the major databases) with researched values from each vendor’s actual pricing page.

The reconciled artifacts cite the source URLs they came from. They carry a reconciled: true flag so a downstream consumer can distinguish “we know this is right” from “this is a templated guess.” The plans match the actual tier prices on each provider’s pricing page. The rate limits match the actual throttling headers each provider documents. The FinOps meters match the actual FOCUS columns each provider exports.
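A downstream consumer could branch on that flag along these lines. This is a minimal sketch that assumes the flag sits at the top level of the parsed artifact as `reconciled`; the function name and the two labels it returns are hypothetical.

```python
def trust_level(artifact: dict) -> str:
    """Classify a parsed artifact by its `reconciled` flag.

    Only an explicit boolean True counts as reconciled; a missing flag or
    any other value is treated as a templated scaffold default.
    """
    return "reconciled" if artifact.get("reconciled") is True else "scaffold"


def prices_for_enforcement(artifact: dict):
    """Return plan data only when it is safe to enforce budgets against it."""
    if trust_level(artifact) == "reconciled":
        return artifact.get("plans", [])
    return None  # scaffold values are guesses: do not gate spend on them
```

Treating anything other than an explicit `true` as a scaffold keeps a templated guess from being enforced as if it were a researched price.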

That is the substrate. It is what an agent in a Claude Code session, a Copilot in an IDE, a FinOps platform aggregating spend across vendors, or a Naftiko capability gating a costly call needs to be reading instead of guessing.

The rule

Every API provider publishes three more files alongside the OpenAPI spec.

Plans. Rate limits. FinOps. Same git repository. Same apis.json index. Same properties link the OpenAPI spec itself uses. Same machine-readable rigor.

If the provider sells access — and almost every API provider does — the financial half of the contract is part of the contract. Publishing the OpenAPI spec without the cost contract is publishing half a spec.

Capability runtimes can read all three. Agents grounded on the capability runtime stop guessing about cost. FinOps platforms stop scraping HTML pricing pages. The CFO finally gets the API spend report nobody could produce from a wiki crawl. And the AI program and the API platform program become the same investment, because the same machine-readable substrate answers both sets of questions.

Three files. The OpenAPI spec assumed someone else would publish them. Nobody did. That is the gap. That is the fix.