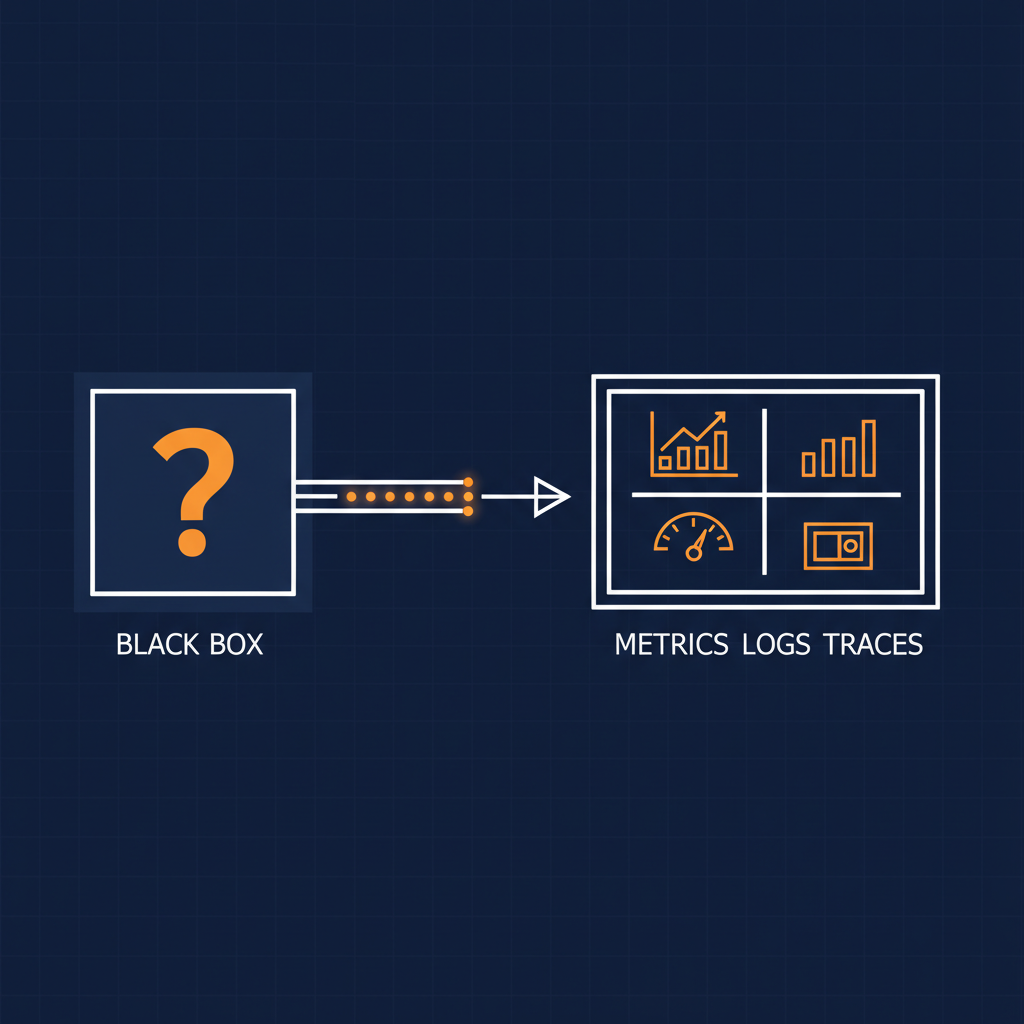

Most MCP servers in production today are observability dead zones.

You can call them. You cannot see what they did. You cannot see how long it took. You cannot see whose token paid for it. And when one of them silently starts returning malformed responses, you find out from a developer’s frustrated Slack message — not from a dashboard.

The reason is structural. The Model Context Protocol specifies how a tool gets discovered and called. It specifies nothing about how that call should be measured. So the people implementing MCP servers do what is in front of them: ship the protocol, ship the tool, and assume someone else will figure out the metrics later.

Someone else is rarely going to figure out the metrics later. Not at enterprise scale. Not when an MCP server quietly absorbs ten thousand agent calls a day across five thousand developers, each one paying a token bill with no per-call attribution.

The question heads of AI, platform leads, and security teams should be asking right now: does your MCP runtime give you observability for free, or do you have to bolt it on per server?

Here is what Naftiko gives you today, and what is coming next.

What Ships Today in the Naftiko Framework

Every Naftiko capability — the unit of API + MCP + Agent Skills exposure — comes with telemetry baked in at the runtime. Not as an opt-in. Not as a sidecar. As the default shape.

Prometheus-compatible metrics endpoint per capability. GET /metrics exposes request counts by operation and status code, latency histograms over durationMs, error rates, circuit-breaker state, and the rest of the resilience-pattern surface. Every capability you deploy is automatically scrapeable by any Prometheus-compatible system, which covers most monitoring stacks.
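To make the scrape target concrete, here is a minimal sketch of what a consumer could do with a response from that endpoint: parse a few lines of Prometheus text exposition format and compute an error rate per operation. The metric name capability_requests_total, its label names, and the sample values are assumptions made for this example, not names confirmed by Naftiko. Check the real /metrics output before relying on them.

```python
import re
from collections import defaultdict

# Hypothetical sample of a /metrics response in Prometheus text format.
# The metric name and label names are assumptions for illustration only.
SAMPLE = """\
# HELP capability_requests_total Requests by operation and status code.
# TYPE capability_requests_total counter
capability_requests_total{operation="getOrder",statusCode="200"} 980
capability_requests_total{operation="getOrder",statusCode="500"} 20
capability_requests_total{operation="listOrders",statusCode="200"} 500
"""

# Matches lines of the form: name{label="value",...} number
LINE = re.compile(r'^(\w+)\{([^}]*)\}\s+([0-9.eE+-]+)$')
LABEL = re.compile(r'(\w+)="([^"]*)"')


def parse_samples(text):
    """Yield (metric_name, labels, value) for each labelled sample line."""
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip HELP/TYPE comments and blank lines
        match = LINE.match(line)
        if match:
            name, raw_labels, value = match.groups()
            yield name, dict(LABEL.findall(raw_labels)), float(value)


def error_rate_by_operation(text, metric="capability_requests_total"):
    """Return {operation: share of requests that ended with a 5xx status}."""
    total = defaultdict(float)
    errors = defaultdict(float)
    for name, labels, value in parse_samples(text):
        if name != metric:
            continue
        operation = labels.get("operation", "unknown")
        total[operation] += value
        if labels.get("statusCode", "").startswith("5"):
            errors[operation] += value
    return {op: errors[op] / total[op] for op in total if total[op] > 0}


if __name__ == "__main__":
    print(error_rate_by_operation(SAMPLE))
```

In practice a Prometheus server does this parsing and aggregation for you. The point of the sketch is only that the endpoint's output is plain, labelled text that any standard tool can consume.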

Structured JSON logs with consistent fields. Every log entry carries capability, namespace, operation, statusCode, and durationMs — the same five dimensions whether the call came in as REST, MCP, or an Agent Skill. Aggregate them in Splunk, Datadog, New Relic, Loki, or whatever your security operations team already runs. No glue code per capability.
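As an illustration of why consistent fields matter, the sketch below aggregates log lines by capability and operation with nothing but the standard library. Only the five field names come from the description above. The sample entries, their values, and the choice to count status codes of 500 and above as errors are assumptions for this example.

```python
import json
from collections import defaultdict

# Hypothetical log lines. Only the five field names are taken from the text;
# the capabilities, operations, and values are made up for illustration.
LOG_LINES = [
    '{"capability": "orders", "namespace": "sales", "operation": "getOrder", "statusCode": 200, "durationMs": 40}',
    '{"capability": "orders", "namespace": "sales", "operation": "getOrder", "statusCode": 502, "durationMs": 160}',
    '{"capability": "billing", "namespace": "finance", "operation": "createInvoice", "statusCode": 201, "durationMs": 30}',
    'not a json line',
]


def summarize(lines):
    """Group entries by (capability, operation): count, errors, mean latency."""
    stats = defaultdict(lambda: {"count": 0, "errors": 0, "total_ms": 0.0})
    for line in lines:
        try:
            entry = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip lines that are not valid JSON
        if not isinstance(entry, dict):
            continue  # skip JSON values that are not log objects
        bucket = stats[(entry.get("capability"), entry.get("operation"))]
        bucket["count"] += 1
        bucket["total_ms"] += entry.get("durationMs", 0)
        if entry.get("statusCode", 0) >= 500:  # assumption: 5xx counts as an error
            bucket["errors"] += 1
    return {
        key: {
            "count": b["count"],
            "errors": b["errors"],
            "meanMs": b["total_ms"] / b["count"],
        }
        for key, b in stats.items()
    }


if __name__ == "__main__":
    for key, summary in summarize(LOG_LINES).items():
        print(key, summary)
```

Because every entry carries the same fields regardless of how the call arrived, the same grouping works across REST, MCP, and Agent Skill traffic without per-capability parsing rules.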

Liveness and readiness probes. GET /health is wired for Kubernetes from day one. Container orchestrators get the signals they need to decide whether to route traffic to a capability or restart it.

Resilience-pattern metrics. When a circuit breaker trips, the metrics endpoint says so. When the bulkhead queue fills, the metrics endpoint says so. When a rate limiter exhausts its tokens, the metrics endpoint says so. The patterns themselves are filling in across the alpha and beta milestones, but the telemetry surface for them is already in place.

That is what an MCP server should look like. Not a black box that responds when called. A measurable, scrapeable, debuggable runtime artifact.

What the Naftiko Framework Wiki Says About the Discipline

The framework wiki is where the discipline behind these features lives. The Spec-Driven Integration page makes the argument that observability is a spec concern, not a deployment concern — if you cannot describe how a capability should be measured in the same artifact that describes what it does, you have already lost.

The wiki also documents the structured-log and metrics conventions so that anyone instrumenting their own capability follows the same shape. This is the difference between “we have logs” and “we have logs an SRE can search across the whole fleet without renaming fields.”

If you are evaluating Naftiko for an MCP runtime decision, the wiki is the document to send to the security team and the platform team at the same time. Both audiences are asking the same question (can we trust what this thing tells us in production?), and the wiki gives them a written answer instead of a sales-deck assertion.

What Ships Today and Is Coming in the Naftiko Fleet

A single capability with /metrics is one thing. A fleet of hundreds of capabilities, all reporting cleanly into the same observability stack, is what an enterprise actually needs.

Cross-Engine Observability Dashboards ship in the Enterprise Edition today. Aggregate metrics and logs across every capability in a fleet. Cross-capability latency dashboards. Dependency health visualization. These are the kind of dashboards that take a quarter to build in-house on top of a Grafana plugin and a half-finished metrics convention. Naftiko Fleet ships them.

Fleet-Wide Dependency & Impact Tracing also ships in the Enterprise Edition today. When an upstream API goes down, the dependency graph tells you which capabilities are now degraded. Blast-radius assessment without a hand-maintained service map. The same primitive that makes the dashboards useful also makes the on-call rotation defensible.
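The idea behind blast-radius assessment can be sketched as a breadth-first walk over a reversed dependency graph. This is a generic illustration of the technique, not Naftiko Fleet's implementation. The capability names, the upstream API names, and the shape of the DEPENDS_ON map are all hypothetical.

```python
from collections import defaultdict, deque

# Hypothetical dependency map: each capability -> the upstreams it consumes.
DEPENDS_ON = {
    "orders": ["payments-api", "inventory-api"],
    "invoicing": ["payments-api"],
    "reporting": ["orders", "invoicing"],
    "catalog": ["inventory-api"],
    "search": ["catalog"],
}


def impacted_by(failed, depends_on):
    """Return every capability that directly or transitively depends on `failed`."""
    # Invert the map so we can walk from an upstream to its consumers.
    dependents = defaultdict(set)
    for consumer, upstreams in depends_on.items():
        for upstream in upstreams:
            dependents[upstream].add(consumer)

    impacted = set()
    queue = deque([failed])
    while queue:
        node = queue.popleft()
        for consumer in dependents[node]:
            if consumer not in impacted:  # visit each capability once
                impacted.add(consumer)
                queue.append(consumer)
    return impacted


if __name__ == "__main__":
    print(sorted(impacted_by("payments-api", DEPENDS_ON)))
```

With this sample map, an outage of the hypothetical payments-api degrades orders and invoicing directly and reporting transitively, while catalog and search are unaffected. The value of having the graph generated by the fleet is that nobody has to keep a map like DEPENDS_ON up to date by hand.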

OpenTelemetry integration with Prometheus or Datadog is on the Fleet roadmap for the Second Alpha (end of April 2026). Once that lands, the per-capability metrics endpoint becomes one input into the broader OpenTelemetry pipeline you already run. No separate scraping config. No bespoke exporter. Standard OTLP traces and metrics flowing into your existing observability stack.

Backstage Kubernetes plugin integration is on the Fleet roadmap for the Third Alpha (end of May 2026) — developer-centric monitoring inside the Backstage capability page. The point is not that developers want yet another dashboard. The point is that when a developer is debugging a capability they own, the metrics for that capability should be one click from where they live.

Where the Naftiko Fleet Is Going

Naftiko Fleet is where the operational story sits — how a capability moves from a developer’s IDE to a production cluster, with the telemetry intact at every step.

The Naftiko Templates for Backstage page already documents the scaffolding pattern. The Second Alpha is adding TechDocs integration, so the operational documentation for a capability lives next to the capability itself in the catalog. The Third Alpha brings the Tech Radar plugin, focused on capabilities registered in the catalog.

For telemetry specifically, the direction is clear: the same fleet that runs the capability should be the fleet that exposes the metrics, the logs, the traces, the dependency graph, and the dashboards — without anyone wiring it up by hand. Not because hand-wiring is impossible, but because hand-wiring is what every other MCP runtime asks of you, and that is exactly the cost Naftiko Fleet is built to remove.

The Real Question

The real question is not whether MCP needs telemetry. Anyone running MCP servers in production for more than a few weeks already knows the answer.

The real question is whether your MCP runtime gives you telemetry for free, or whether you have to bolt it on per server, every time, forever.

If the answer is “we have to bolt it on”, the cost compounds with every new MCP server you ship — and at enterprise scale, that cost is not measured in engineering hours. It is measured in the agent calls you cannot account for, the regressions you do not see, and the security incidents that take six weeks to investigate because the audit trail was a wishful Confluence page.

A capability is not done when it returns 200 OK. A capability is done when you can answer “what did it do and how did it perform” without writing custom integration code per server.

Naftiko ships that answer in the runtime. The framework ships the per-capability surface. The framework wiki documents the discipline behind it. The fleet ships the cross-fleet dashboards and the operational story for running them at scale. And the roadmap pulls in OpenTelemetry, Backstage Kubernetes, and Tech Radar to make the integration into existing stacks invisible.

If your MCP servers do not have telemetry today, that is not an MCP problem. That is a runtime choice you can change.