Context engineering is becoming a real job. Not a buzzword, not a rebranding of prompt engineering, but a distinct discipline with its own set of responsibilities: optimizing context windows for agents, translating upstream APIs and MCP servers into efficient tool interfaces, evaluating which techniques work for which models, and building evaluation frameworks for agent-API interaction quality.

We’ve been tracking this role through our persona work at Naftiko, and the problems context engineers face today are specific, structural, and largely unsolved. Here are five of them.

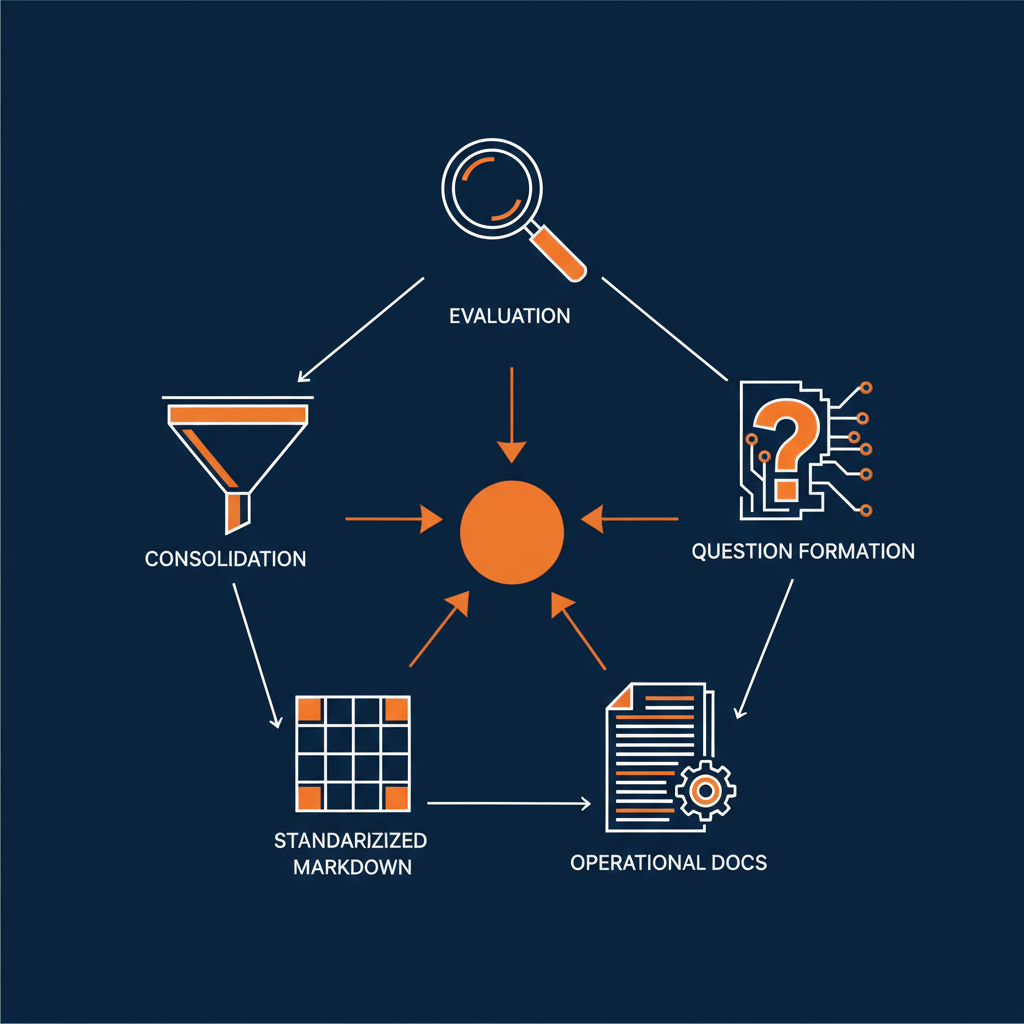

1. There Is No Agent Evaluation Framework

Context engineers need to evaluate whether AI agents call APIs correctly, with the right parameters and in the right order, not just whether the final output looks correct. Today, most evaluation stops at the surface: did the agent produce a reasonable-looking response? That is not the same as verifying that the agent made the correct API call with the correct payload to the correct endpoint in the correct sequence.

This matters because agents that produce plausible outputs while making incorrect API calls are worse than agents that fail visibly. They create a false sense of reliability. Building evaluation frameworks that inspect the actual state of upstream services after an agent acts — not just the text it returns — is one of the most important unsolved problems in the space.
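To make the distinction concrete, here is a minimal sketch of trace-level evaluation in Python. It assumes the agent runtime can record every outbound call; the `ApiCall` type, the `evaluate_trace` helper, and the endpoints are hypothetical names chosen for illustration, not part of any existing framework. A fuller evaluator would also query the upstream service afterwards and compare its state.

```python
from dataclasses import dataclass, field


@dataclass
class ApiCall:
    """One recorded call from an agent run (hypothetical record shape)."""
    method: str
    endpoint: str
    payload: dict = field(default_factory=dict)


def evaluate_trace(expected: list[ApiCall], actual: list[ApiCall]) -> list[str]:
    """Compare calls position by position; an empty list means the trace passed."""
    problems = []
    if len(expected) != len(actual):
        problems.append(f"expected {len(expected)} calls, got {len(actual)}")
    for index, (want, got) in enumerate(zip(expected, actual)):
        if (want.method, want.endpoint) != (got.method, got.endpoint):
            problems.append(
                f"call {index}: expected {want.method} {want.endpoint}, "
                f"got {got.method} {got.endpoint}"
            )
        elif want.payload != got.payload:
            problems.append(f"call {index}: payload mismatch on {want.endpoint}")
    return problems


# Illustrative traces: the agent's final text could look fine either way.
expected = [
    ApiCall("POST", "/customers", {"name": "Ada"}),
    ApiCall("POST", "/invoices", {"customer": "Ada", "amount": 100}),
]
actual = [
    ApiCall("POST", "/customers", {"name": "Ada"}),
    ApiCall("POST", "/invoices", {"customer": "Ada", "amount": 10}),
]

print(evaluate_trace(expected, actual))
# ['call 1: payload mismatch on /invoices']
```

The agent in this example could still return "Invoice created for Ada," which is exactly the plausible-but-wrong case that output-only evaluation misses.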

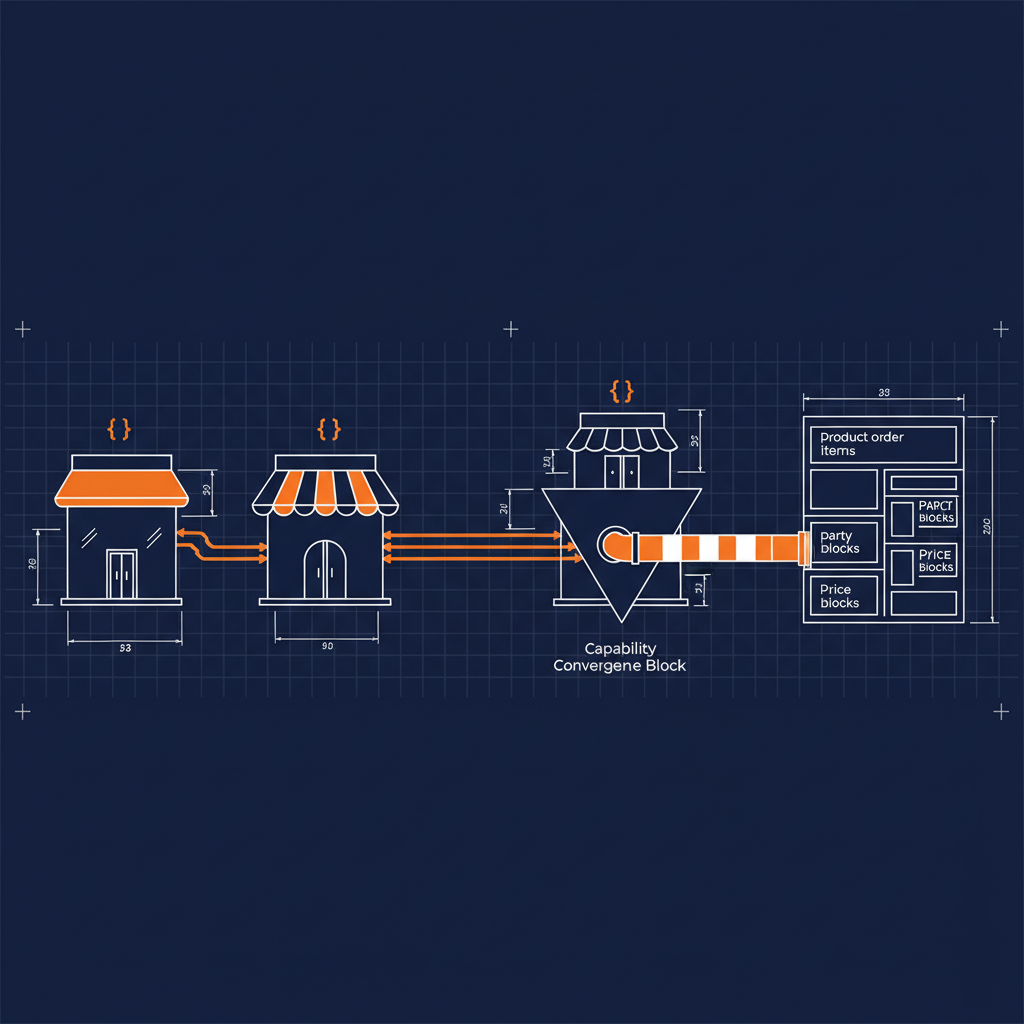

2. Too Many MCP Tools, Not Enough Consolidation

Context engineers need to consolidate many upstream APIs and MCP servers into a smaller set of efficient tools that agents can use effectively. MCP servers and tool definitions are proliferating faster than anyone can reason about them. An agent with access to forty tools tends to perform worse than one with access to four well-designed tools, because the context window fills up with tool descriptions and the model spends tokens deciding which tool to use rather than doing the work.

The discipline of translating a sprawling API surface into a minimal, precise tool interface is where context engineering becomes genuinely hard. It requires understanding both the upstream API semantics and the downstream model behavior — and the translation is different for different models.
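As a rough illustration, the sketch below folds four fine-grained tools into one tool with an `action` parameter and compares how much text each version puts into the context window. Everything here is an assumption made for the example: the tool definitions, the stubbed `invoice` handlers, and the use of serialized character count as a crude stand-in for tokens.

```python
import json

# Hypothetical fine-grained tools, one per upstream endpoint.
FINE_GRAINED_TOOLS = [
    {
        "name": f"{verb}_invoice",
        "description": f"{verb.capitalize()} an invoice via the billing API.",
        "parameters": {"invoice_id": "string"},
    }
    for verb in ("get", "void", "send", "refund")
]

# One consolidated tool covering the same operations.
CONSOLIDATED_TOOL = {
    "name": "invoice",
    "description": "Act on an invoice via the billing API.",
    "parameters": {
        "invoice_id": "string",
        "action": ["get", "void", "send", "refund"],
    },
}


def description_cost(tools: list[dict]) -> int:
    """Crude proxy for context cost: characters of serialized definitions."""
    return len(json.dumps(tools))


def invoice(invoice_id: str, action: str) -> dict:
    """Single entry point that routes to stubbed upstream operations."""
    resulting_status = {
        "get": "open",
        "void": "voided",
        "send": "sent",
        "refund": "refunded",
    }
    if action not in resulting_status:
        raise ValueError(f"unknown action: {action!r}")
    return {"invoice_id": invoice_id, "status": resulting_status[action]}


print(description_cost(FINE_GRAINED_TOOLS) > description_cost([CONSOLIDATED_TOOL]))
# True
```

Whether a single `action` parameter or a handful of intent-level tools works better is the kind of model-specific question that has to be measured rather than assumed.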

3. MCP Workflows Need Embedded Question Formation

MCP-enabled documentation should accelerate implementation work inside IDEs and copilots, but “ask anything” interfaces fail when users don’t know the right prompts. Context engineers are discovering that the documentation layer needs to do more than expose information — it needs to embed question formation into the workflow itself.

This means structuring MCP-served content so that it suggests the next question, not just the current answer. It means building documentation that understands where a developer is in a workflow and surfaces the context they need before they know to ask for it. The “ask anything” paradigm assumes the user already knows what they don’t know, and that assumption breaks down fast in complex integration scenarios.
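One way to picture this is a documentation node that carries its own follow-up questions. The sketch below is plain Python and deliberately not tied to any MCP SDK; `DocNode`, `DOCS`, `serve`, and the sample content are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class DocNode:
    """A documentation answer plus the questions that usually come next."""
    answer: str
    next_questions: list[str] = field(default_factory=list)


# Hypothetical content for an integration workflow.
DOCS = {
    "authenticate": DocNode(
        answer="Create an API key and send it with every request.",
        next_questions=["How do I make my first request?", "How do I rotate a key?"],
    ),
    "first_request": DocNode(
        answer="Call the list endpoint with your key to confirm access.",
        next_questions=["How do I paginate results?"],
    ),
}


def serve(topic: str) -> dict:
    """Return the answer together with suggested next questions.

    Unknown topics return the available entry points instead of an error,
    so a user who does not know what to ask still gets a starting place.
    """
    node = DOCS.get(topic)
    if node is None:
        return {"answer": None, "suggested_next": sorted(DOCS)}
    return {"answer": node.answer, "suggested_next": node.next_questions}


print(serve("authenticate")["suggested_next"])
# ['How do I make my first request?', 'How do I rotate a key?']
```

The design choice worth noting is the fallback: a request for a topic that does not exist comes back with a list of places to start, not a dead end.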

4. Repositories Need Minimum Agent Operational Documentation

Repositories can look documented but still fail basic agent tasks because the docs were written for humans, not for agent execution. A README that says “run the setup script” is fine for a developer. An agent needs to know which script, what arguments, what environment variables, what the expected output looks like, and what to do when the output doesn’t match.

Context engineers are starting to define what “minimum agent operational documentation” looks like — the baseline set of structured, machine-readable documentation that a repository needs before an agent can reliably operate within it. This is not about replacing human documentation. It is about establishing a parallel layer that agents can consume without ambiguity.
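No standard for this layer exists yet, so the following is only a sketch of what a baseline check might look like. The field names mirror the questions an agent needs answered (which script, what arguments, what environment variables, what output to expect, what to do on a mismatch); the names, the `missing_fields` helper, and the example values are assumptions for illustration.

```python
# Hypothetical minimum fields for one agent-executable task.
REQUIRED_FIELDS = (
    "command",
    "arguments",
    "environment",
    "expected_output",
    "on_mismatch",
)


def missing_fields(task: dict) -> list[str]:
    """List the required fields that a task entry does not define."""
    return [name for name in REQUIRED_FIELDS if name not in task]


# What a human-oriented README effectively provides.
human_readme_task = {"command": "run the setup script"}

# What an agent needs before it can act without guessing (example values).
agent_ready_task = {
    "command": "./scripts/setup.sh",
    "arguments": ["--env", "dev"],
    "environment": {"DATABASE_URL": "required"},
    "expected_output": "Setup complete",
    "on_mismatch": "stop and report the last 20 lines of output",
}

print(missing_fields(human_readme_task))
# ['arguments', 'environment', 'expected_output', 'on_mismatch']
print(missing_fields(agent_ready_task))
# []
```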

5. Markdown Needs Standardized Structures for Agent Consumption

Markdown docs must follow predictable, machine-reliable structures so agents can consistently locate authoritative answers. Today, most markdown documentation is written for human readability — which means inconsistent heading hierarchies, ambiguous section boundaries, and context that depends on the reader having read previous sections.

Context engineers need markdown that agents can parse deterministically. That means consistent heading structures, explicit section boundaries, self-contained sections that don’t depend on prior context, and metadata that tells an agent what kind of information each section contains. The gap between “human-readable markdown” and “agent-consumable markdown” is wider than most teams realize, and closing it is foundational work for anyone building agent-powered developer tools.
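A small linter can enforce the first of these requirements. The sketch below checks only one rule, that heading levels never skip (for example from `#` straight to `###`), and it handles only ATX-style headings outside fenced code blocks. The `heading_problems` function name and the rule itself are illustrative assumptions, not an established standard.

```python
import re

# ATX heading: one to six '#' characters, whitespace, then the title.
HEADING = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")
FENCE = "`" * 3  # built this way so the literal fence never appears in the source


def heading_problems(markdown: str) -> list[str]:
    """Report headings that skip a level, ignoring fenced code blocks."""
    problems = []
    previous_level = 0
    in_fence = False
    for number, line in enumerate(markdown.splitlines(), start=1):
        if line.lstrip().startswith(FENCE):
            in_fence = not in_fence
            continue
        if in_fence:
            continue
        match = HEADING.match(line)
        if match is None:
            continue
        level = len(match.group(1))
        if level > previous_level + 1:
            problems.append(f"line {number}: jumps from h{previous_level} to h{level}")
        previous_level = level
    return problems


sample = "# Title\n\n### Deep\n\n## Ok\n"
print(heading_problems(sample))
# ['line 3: jumps from h1 to h3']
```

Checks for self-contained sections or per-section metadata would follow the same pattern: one explicit rule, one deterministic test.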

Where This Is Heading

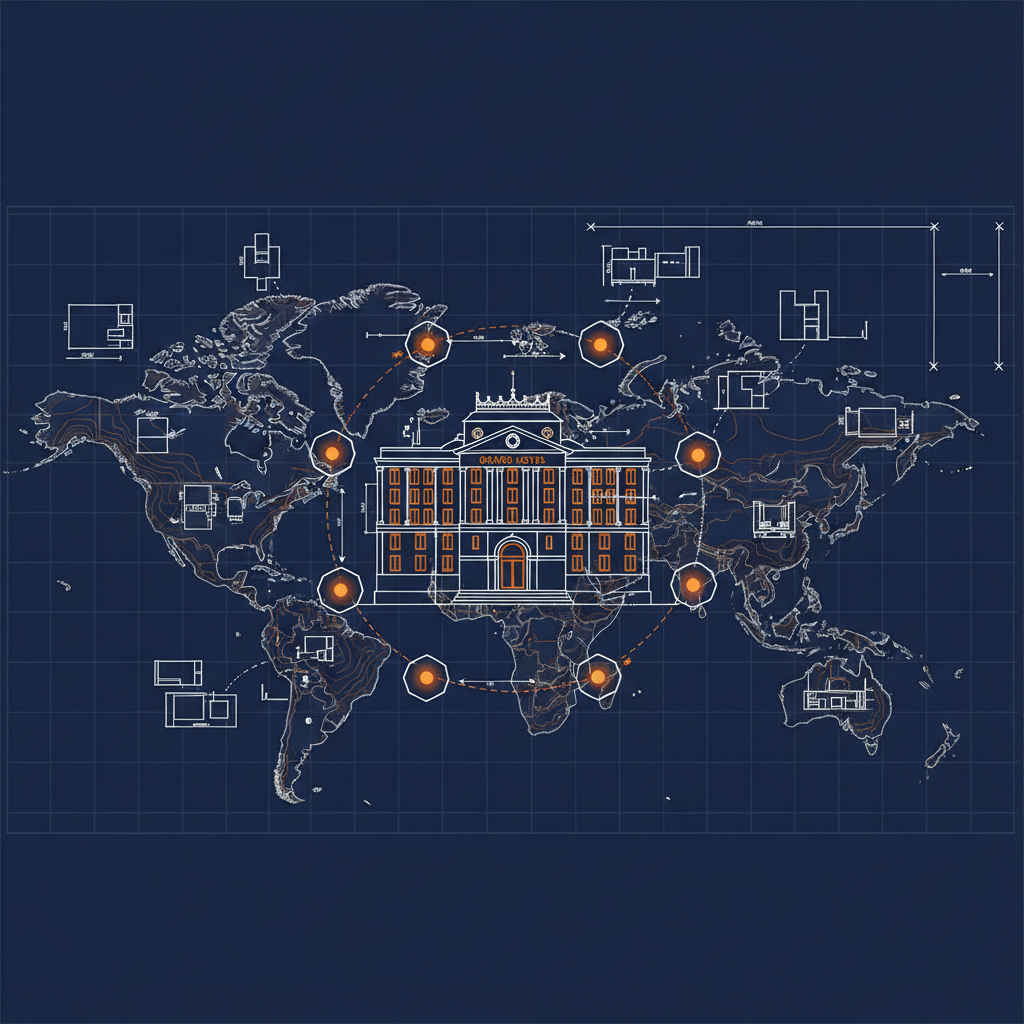

These five problems share a common thread: the infrastructure and conventions that worked for human-only workflows do not transfer cleanly to agent-augmented workflows. Context engineering as a discipline exists precisely because that translation layer is missing and someone needs to build it.

At Naftiko, we are building the capability layer that sits between enterprise APIs and AI agents — governed, spec-driven, and designed for exactly the kind of structured context delivery that these problems demand. If you are working on any of these problems, we would like to hear from you.