Clemens Vasters has spent decades at Microsoft building the infrastructure that moves data at scale — messaging platforms, event systems, schema registries, real-time data pipelines. He has been in the standards trenches with AMQP, MQTT, and CloudEvents. He knows what a schema language needs to do, and he knows when one isn’t doing it.

JSON Schema wasn’t doing it.

I sat down with Clemens twice — once in February and again last week — to dig into JSON Structure, the schema language he built to replace JSON Schema for the work he does at Microsoft Fabric. What I heard was one of the most honest and technically grounded indictments of a foundational web specification I’ve come across, followed by a clear explanation of what he built instead and why.

The problem with JSON Schema

JSON Schema started as a validation language. It answers the question: does this document conform to this shape? That is a legitimate and important question, but it is not the same question as: what is the canonical structure of this data type?

Clemens articulated this distinction with precision when we talked. JSON Schema is good at defining the shape of a JSON document, but it’s lousy as a data definition language (DDL). If you try to use it as a DDL, to describe types that you then translate into database columns, generate code from, or share across systems, you immediately run into a wall.

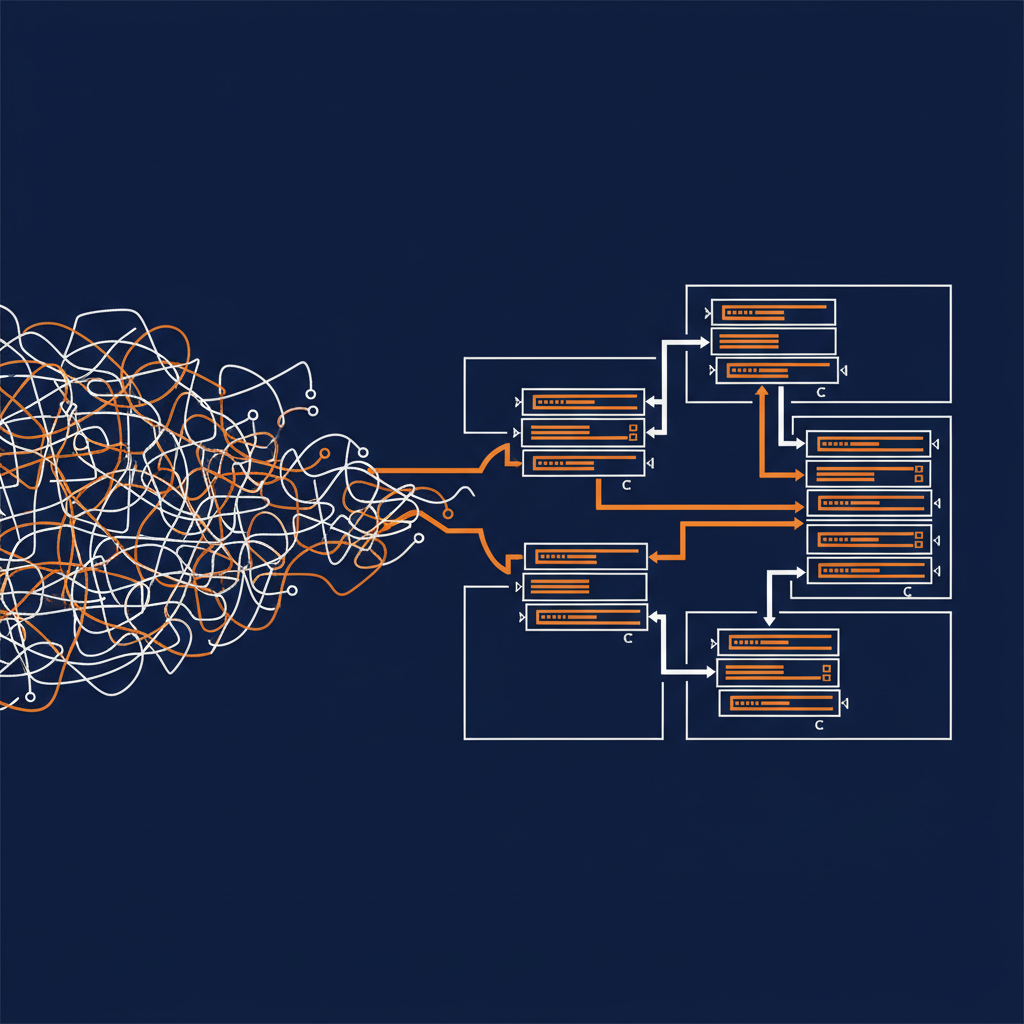

The wall has a specific texture. JSON Schema’s compositional constructs — anyOf, allOf, oneOf — are expressive enough that you can use them to model inheritance, type unions, and complex relationships. But they carry no semantic guarantees that tooling is required to honor. The convention that you represent inheritance as an allOf with a base type is exactly that: a convention. There is no rule. There is no standard. There is no requirement that code generators or validators interpret it consistently.
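To make the ambiguity concrete, here is a minimal Python sketch. The schema and the two "generator" functions (`as_inheritance`, `as_flattened`) are hypothetical and not taken from any real tool. They only show that both readings of the same allOf are defensible, and that nothing in the schema says which one is intended.

```python
# Hypothetical schema: "Dog" modeled as allOf [reference to Animal, extra properties].
schema = {
    "$defs": {
        "Animal": {
            "type": "object",
            "properties": {"name": {"type": "string"}},
        },
        "Dog": {
            "allOf": [
                {"$ref": "#/$defs/Animal"},
                {"type": "object", "properties": {"breed": {"type": "string"}}},
            ]
        },
    }
}


def as_inheritance(defs, name):
    """Reading 1 (illustrative): the first $ref in allOf is a base class."""
    base = None
    fields = []
    for part in defs[name].get("allOf", []):
        if "$ref" in part and base is None:
            base = part["$ref"].rsplit("/", 1)[-1]
        else:
            fields.extend(part.get("properties", {}))
    return {"class": name, "base": base, "fields": fields}


def as_flattened(defs, name):
    """Reading 2 (illustrative): allOf is a plain conjunction, so merge everything."""
    fields = []
    for part in defs[name].get("allOf", []):
        if "$ref" in part:
            part = defs[part["$ref"].rsplit("/", 1)[-1]]
        fields.extend(part.get("properties", {}))
    return {"class": name, "base": None, "fields": fields}


inherit_view = as_inheritance(schema["$defs"], "Dog")
flat_view = as_flattened(schema["$defs"], "Dog")
```

The first reading yields a `Dog` class that extends `Animal` and adds one field; the second yields a standalone `Dog` with both fields and no base type. Both are valid interpretations of the same document, which is exactly the problem.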

The result is that everyone who has tried to do serious code generation from JSON Schema — and that includes every team that has built an OpenAPI code generator — hits the same wall at a different place. The tools work until they don’t, and where they stop working is undefined by the spec. Clemens put it directly: you will not find any implementation of OpenAPI code generation that doesn’t give up at some point.

He also pointed to the specification itself as part of the problem. In his view, very few people actually learn JSON Schema from the specifications, because the specifications are not very good. You can start reading and be halfway in without understanding what you’re looking at. That is a failure mode that compounds over time: a spec that practitioners can’t read is a spec that practitioners can’t implement consistently.

Why Avro wasn’t the answer either

Before building JSON Structure, Clemens considered Avro. Avro has real strengths: it’s well-scoped, self-contained, has namespacing, and supports extensibility annotations that tooling can safely ignore. It was designed by people who thought carefully about schema as a first-class concern.

But Avro’s type system is bounded by its binary serializer, which limits what it can express. More critically, Avro’s JSON serialization model is — his word — abominable. If you want to use Avro to govern JSON documents, the way the spec defines that mapping is not something you can work with seriously.

He tried to take the question of alternate JSON serialization to the Avro project. The project is essentially in maintenance mode. People are doing great work keeping it stable, but there’s no appetite for changes that would force the whole ecosystem to move. That’s a legitimate choice for a mature project, but it meant Avro wasn’t going to become what Clemens needed.

What JSON Structure is

JSON Structure is designed to look familiar. If you already know the basic structure of a JSON Schema object (a type field, a properties block, a map of property names to type definitions), JSON Structure will read naturally. Clemens kept that shape on purpose. The goal was not to build something that required practitioners to unlearn everything, but to fix the specific problems that JSON Schema left unaddressed.

The core of the fix is a proper type system. JSON Schema’s type system is anchored to JSON’s native types, which gives you strings, numbers, booleans, null, arrays, and objects. That is not enough for real data modeling. If you need to express a datetime, a decimal, a duration, or any of the dozens of domain-specific types that enterprise systems use daily, you’re working around the type system rather than with it. JSON Structure builds a richer type system that can represent these types truthfully and defines how they map to JSON representations, without pretending JSON’s native types are sufficient.
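A small Python sketch of why this matters, using only the standard library. It assumes a hypothetical convention in which a schema declares `amount` as a decimal carried as a JSON string and `created` as a datetime in ISO 8601 form. The field names and the `FIELD_TYPES` table are illustrative and are not JSON Structure syntax.

```python
import json
from datetime import datetime
from decimal import Decimal

# With only JSON's native types, a monetary amount travels as a binary float.
native = json.loads('{"amount": 0.1}')
float_total = native["amount"] * 3  # picks up binary rounding error

# Hypothetical type table a richer schema could supply: field name -> parser.
FIELD_TYPES = {
    "amount": Decimal,                  # decimal carried as a JSON string
    "created": datetime.fromisoformat,  # ISO 8601 datetime with UTC offset
}


def decode(document: str) -> dict:
    """Parse JSON, then convert each declared field to its real type."""
    raw = json.loads(document)
    return {key: FIELD_TYPES.get(key, lambda v: v)(value) for key, value in raw.items()}


typed = decode('{"amount": "0.10", "created": "2025-01-15T09:30:00+00:00"}')
decimal_total = typed["amount"] * 3  # exact decimal arithmetic
```

The float path cannot represent three times 0.1 exactly, while the string-encoded decimal keeps both its value and its scale. The same idea applies to the timestamp: once the schema says "datetime" rather than "string", a consumer no longer has to guess how to parse it.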

The second major fix is references. In JSON Schema, $ref can appear anywhere in a document and can point to arbitrary locations in arbitrary external files. That sounds flexible until you try to implement it. Authentication, access control, caching, relative versus absolute paths, what happens when a network call fails: none of that is defined. The spec hands you the construct and leaves every operational concern to you.

JSON Structure makes $ref illegal except within a defined reusable-types section. If you want external references, you use the import extension: you explicitly import a whole document, optionally namespace it to avoid collisions, and then reference types from that import. Two steps. No ambiguity about what is being loaded or where it lives. The operational implications are well-defined.
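A restriction like this is easy to enforce mechanically. The following Python sketch assumes a hypothetical document layout with a `definitions` section and references written as `#/definitions/Name`. The `resolve_local_ref` helper and `RefError` class are illustrative and are not the SDK's API. The point is only that a resolver confined to the document's own reusable-types section never has to touch the network.

```python
class RefError(ValueError):
    """Raised when a reference points outside the reusable-types section."""


PREFIX = "#/definitions/"  # assumed location of reusable types


def resolve_local_ref(document: dict, ref: str) -> dict:
    """Resolve a reference against the document's own definitions, or fail loudly."""
    if not ref.startswith(PREFIX):
        # External URLs and arbitrary JSON pointers are rejected outright.
        raise RefError(f"only {PREFIX}<name> references are allowed: {ref!r}")
    name = ref[len(PREFIX):]
    try:
        return document["definitions"][name]
    except KeyError:
        raise RefError(f"unknown type: {name!r}") from None


doc = {
    "definitions": {
        "Address": {"type": "object", "properties": {"city": {"type": "string"}}},
    },
}

address = resolve_local_ref(doc, "#/definitions/Address")

try:
    resolve_local_ref(doc, "https://example.com/schemas/address.json")
    external_allowed = True
except RefError:
    external_allowed = False
```

Everything the resolver can reach is already in memory, so questions about authentication, caching, and network failure simply do not arise at this layer. Loading another document becomes a separate, explicit step.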

The third fix is extensibility. JSON Schema’s vocabulary mechanism exists, but it is entirely foreign to the schema language itself — an external, unusual thing that isn’t expressed using the same primitives the rest of the spec uses. JSON Structure’s extension mechanism works via a $uses clause that declares exactly which extension specs a document relies on, expressed using the same language as everything else. Clear, inspectable, and self-documenting.
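One practical consequence of declaring extensions up front is that a tool can decide whether it understands a document before processing it. A minimal Python sketch, assuming `$uses` holds a list of extension names. The names in `SUPPORTED` and in the sample document are placeholders, not the spec's actual extension identifiers.

```python
SUPPORTED = {"ExtensionA", "ExtensionB"}  # placeholder names for this sketch


def unsupported_extensions(document: dict) -> list:
    """Return declared extensions that this (hypothetical) tool cannot handle."""
    declared = document.get("$uses", [])
    return sorted(set(declared) - SUPPORTED)


doc = {"$uses": ["ExtensionA", "ExtensionC"], "type": "object"}
missing = unsupported_extensions(doc)
```

A tool that finds a non-empty `missing` list can refuse the document or warn, instead of silently ignoring keywords it does not recognize.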

The tooling story

Specs without tooling are academic. Clemens built the tooling.

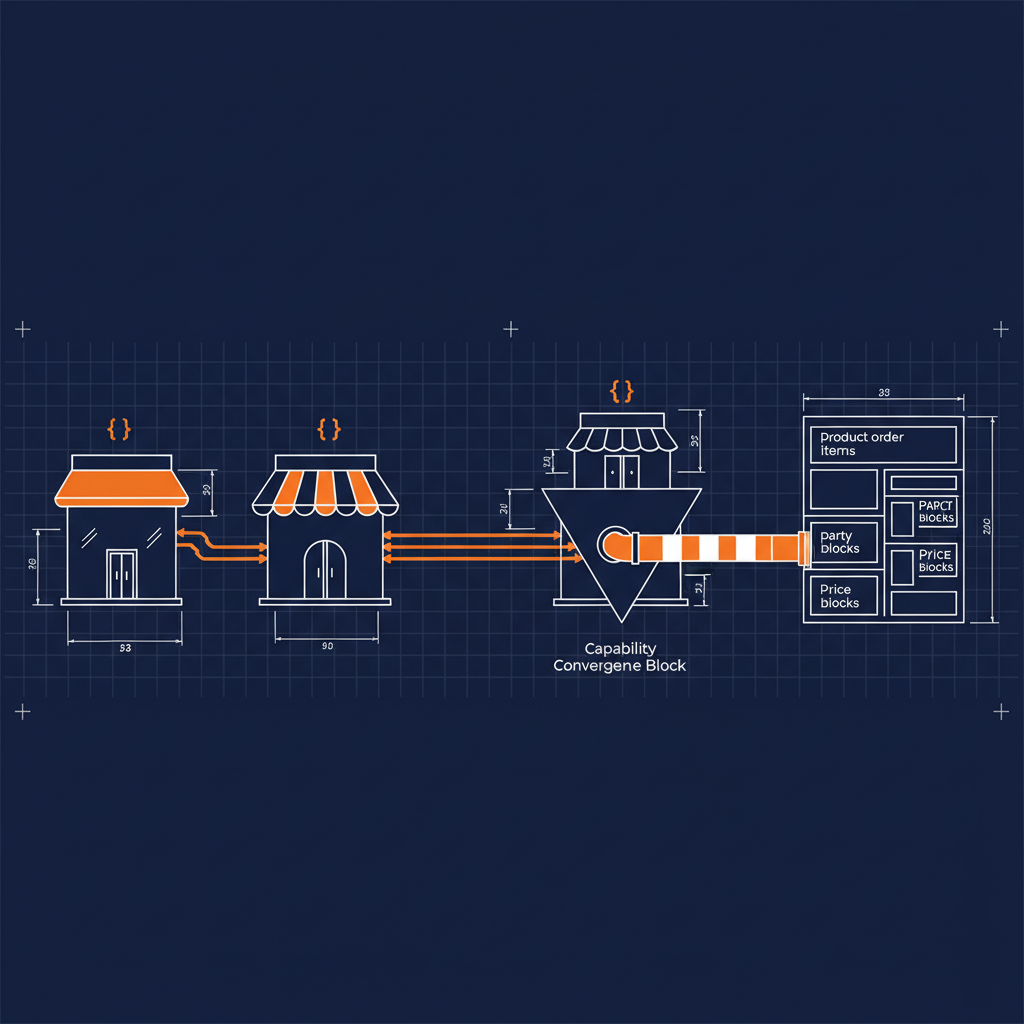

The SDK is available in eight languages, including C#, C++, Rust, Python, Perl, TypeScript, and JavaScript. It validates schemas and validates documents against schemas. The foundation is complete enough to build on. All packages are distributed through the standard package managers for their respective ecosystems.

The code generator lives in a separate project called Avrotize. If you have a JSON Structure schema, Avrotize can generate C#, Java, Rust, Go, C++, JavaScript, TypeScript, and other language implementations of your types. What it generates isn’t a wall of undifferentiated code — it’s organized projects that compile out of the box, with documentation generated from the descriptions on your fields and self-serializing objects that understand how to read and write conformant JSON documents. The C# implementation can also handle CBOR, MessagePack, and XML, making each generated class a translation hinge between serialization formats.

The spec is in the IETF as a draft. Clemens was deliberate about this. He built CloudEvents, he’s been through the standardization process before, and he knows that adoption matters more than process. The IETF placement is a commitment: no rug pull, no lock-in, no surprise licensing. The spec is neutral and it’s going to stay there.

Why this matters now, and why it matters for AI

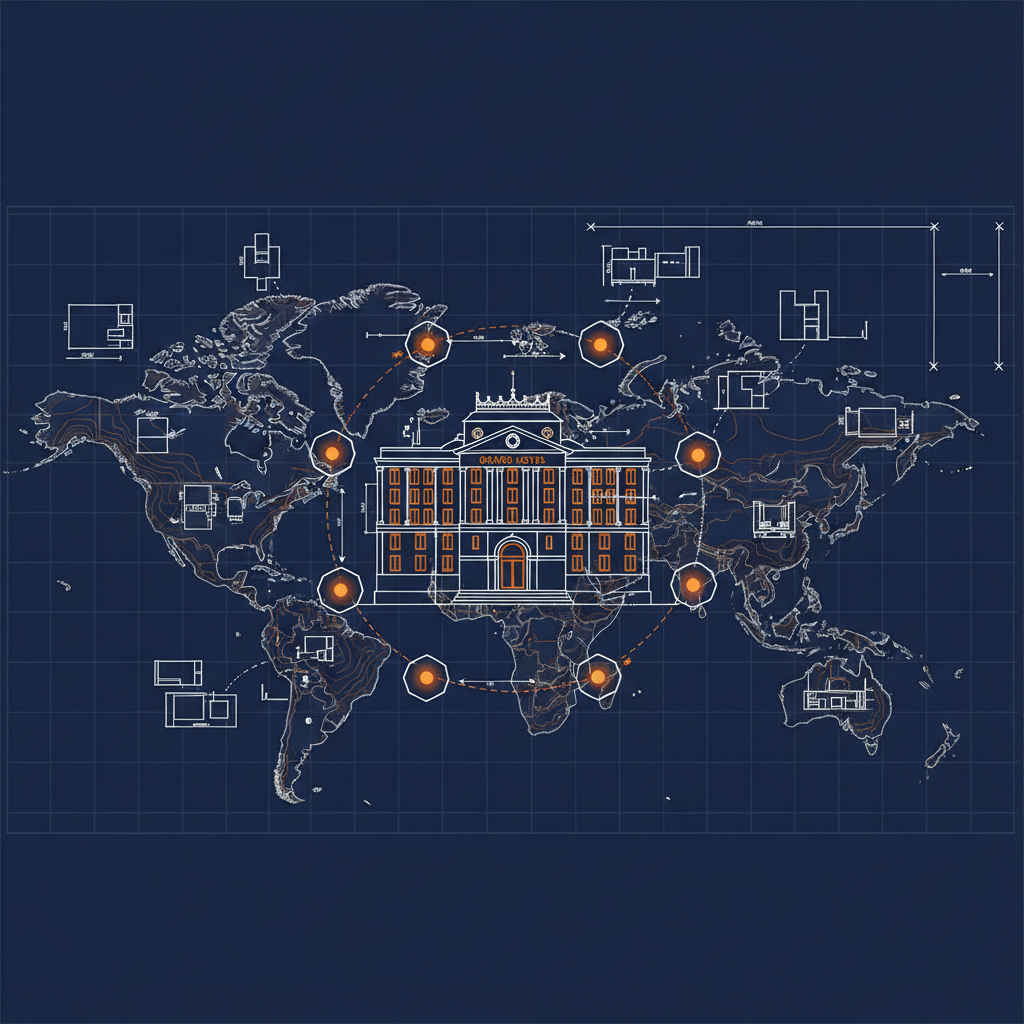

Clemens made a point in our second conversation that I keep returning to. The reason most Kafka systems work today is that the people building the publisher are also the people building the consumer, or they’re all in the same room. There’s so much being done by convention and out-of-band communication that the formal schema is almost incidental.

That model does not scale to AI. When an agent is consuming a data stream, it’s not in the room. It doesn’t have access to the Git history where someone commented on what a field actually means. It doesn’t know the unwritten convention that timestamps in this system are always UTC even though the schema says string. The agent gets the schema and nothing else. The quality of the schema determines the quality of what the agent can do with the data.

A richer, more precise schema language isn’t a nice-to-have in the age of AI — it’s essential infrastructure. The more faithfully a schema describes what data actually is, the more the agent can do with it correctly. Vague type systems and undefined compositional semantics are noise in the signal. JSON Structure is designed to reduce that noise.

Where it fits in the landscape

Clemens is clear about the intended relationship with existing specifications. The baseline case for JSON Structure is identical to the baseline case for JSON Schema: define the shape of a request body or response object in an HTTP API. The shape of a JSON Structure schema looks like the shape of a JSON Schema because Clemens wanted OpenAPI teams to be able to pick it up without a cliff.

If OpenAPI adopted JSON Structure, it would get a better type system and better-defined compositional semantics immediately. If AsyncAPI did the same, stream definitions would become genuinely portable in a way they aren’t today. These are invitations, not demands. The spec is available. The tooling exists. The rest is adoption.

At Naftiko we’re working through how JSON Schema and JSON Structure sit alongside each other in the capabilities framework. They solve different problems with different precision, and there’s real work to be done in understanding where you want each. I’ll be writing more about that as we figure it out.

For now, the thing I want to leave you with is this: Clemens has been thinking carefully about metadata, data fidelity, and schema quality for a long time, and he built something because what existed wasn’t good enough for the work he needed to do. That kind of motivated engineering — built to solve a real problem in production at scale — tends to produce specifications worth paying attention to.

json-structure.org is the starting point. The SDKs are there, the spec is there, and the community is still small enough that if you have questions, you can get answers from the person who wrote it.