Something interesting is happening in the AI tooling space that hasn’t gotten the attention it deserves. The npx command, the package runner bundled with npm that developers have used for years to run Node packages without installing them globally, has become the primary distribution mechanism for AI agent skills. If you’ve been watching the agent ecosystem evolve and wondering how all of these capabilities were going to get distributed at scale, the answer is already here. It’s npm. The infrastructure was sitting right in front of us the whole time.

What Are Agent Skills

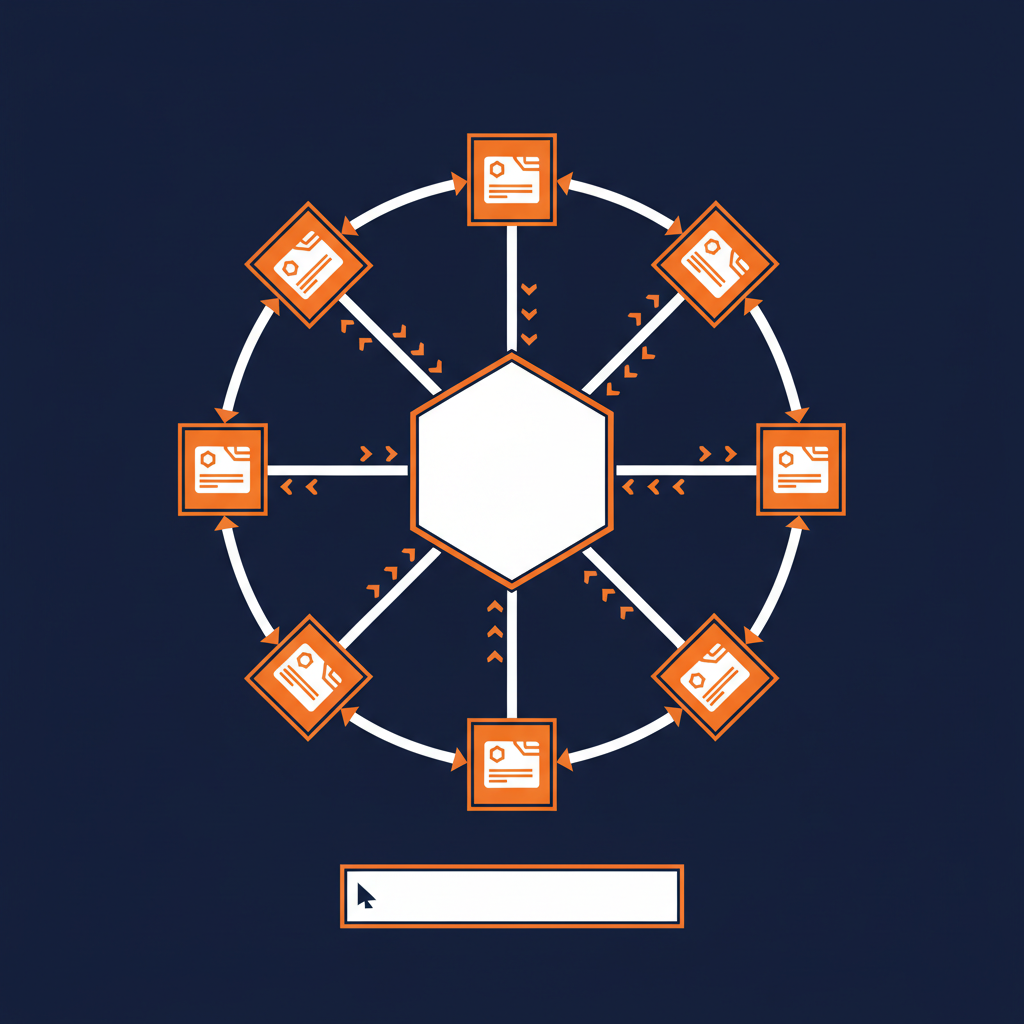

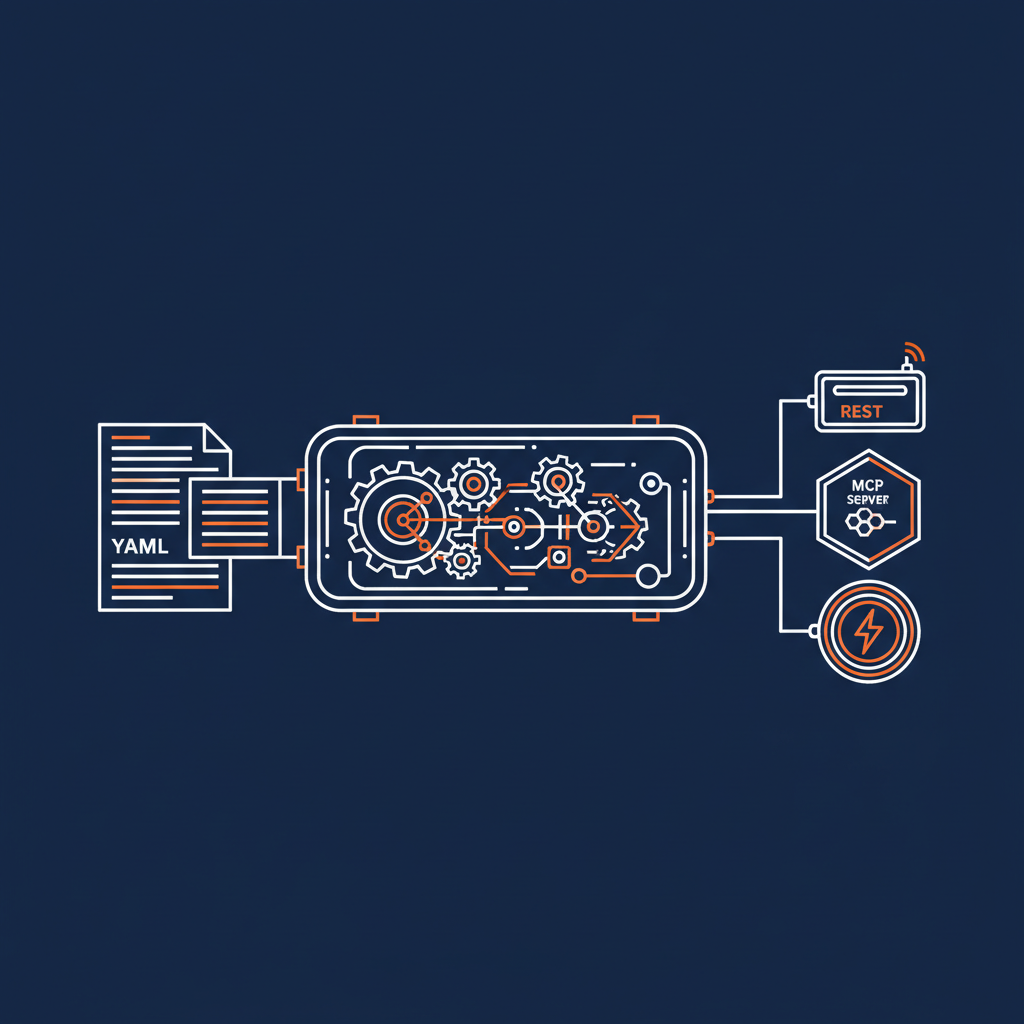

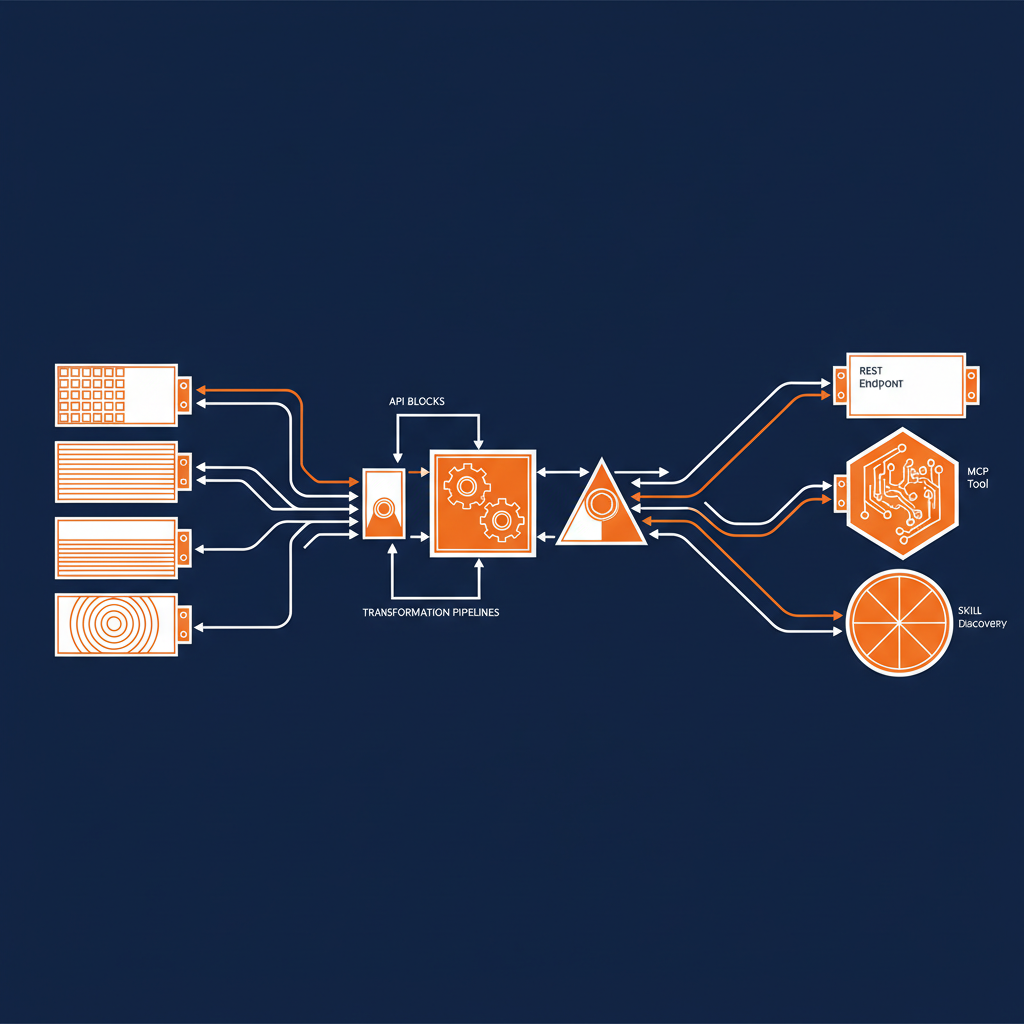

Before getting into the distribution story, it’s worth being precise about what we’re talking about. Agent skills are structured context packages — SKILL.md files and supporting scripts — that teach AI coding agents how to work with specific libraries, frameworks, APIs, and best practices. They’re not plugins in the traditional sense. They don’t extend the agent’s runtime. They extend the agent’s knowledge. They tell an agent like Claude Code, Cursor, or Windsurf how to correctly use a library, what patterns to follow, what mistakes to avoid, and how to interact with specific APIs.

That’s a meaningful distinction. We’re not distributing code that runs inside the agent. We’re distributing context that shapes how the agent writes code for you.
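To make "context, not code" concrete, here is a minimal sketch of reading a SKILL.md file. It assumes the file opens with a small block of `key: value` metadata fenced by `---` lines, followed by the instructions the agent reads. That layout, the `name` and `description` fields, and the `acme-auth` skill are assumptions for illustration, not something this post defines.

```python
from typing import Dict, Tuple


def split_skill_md(text: str) -> Tuple[Dict[str, str], str]:
    """Split a SKILL.md document into (metadata, body).

    Assumes the file opens with simple `key: value` lines fenced by
    `---` lines. This is an assumed layout, not a full YAML parser.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text  # no metadata block: everything is body

    metadata: Dict[str, str] = {}
    for index, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":
            # Closing fence found: the rest of the file is the body.
            body = "\n".join(lines[index + 1:]).strip()
            return metadata, body
        key, sep, value = line.partition(":")
        if sep:
            metadata[key.strip()] = value.strip()

    return {}, text  # opening fence never closed: treat as plain body


# Hypothetical skill content, for illustration only.
EXAMPLE = """---
name: acme-auth
description: How to call the (hypothetical) Acme auth service correctly.
---
# Acme auth

Always refresh tokens through the client helper rather than by hand.
"""

metadata, body = split_skill_md(EXAMPLE)
print(metadata["name"])
print(body.splitlines()[0])
```

Nothing here executes inside the agent. The metadata says when the skill is relevant, and the body is plain prose the agent reads before it writes code for you.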

The NPX Distribution Model

The distribution model is straightforward. You run npx skills add <owner/repo> and the skill gets pulled from a GitHub repository or a specialized registry like skills.sh, then placed into a local folder like ~/.claude/skills. From that point forward, your coding agent has access to that skill’s context whenever you’re working. No manual configuration. No copy-pasting documentation. No training the agent yourself on how a library works.
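If you want to see what actually landed on disk after an install, a short script is enough. This is a rough sketch that assumes each installed skill is its own subfolder with a SKILL.md at the top level; the exact layout the skills CLI produces may differ, and `list_installed_skills` is a hypothetical helper, not part of any CLI.

```python
from pathlib import Path
from typing import List


def list_installed_skills(skills_root: Path) -> List[str]:
    """Return names of subfolders of skills_root that contain a SKILL.md.

    Assumes one folder per skill; this layout is an assumption.
    """
    if not skills_root.is_dir():
        return []
    return sorted(
        entry.name
        for entry in skills_root.iterdir()
        if entry.is_dir() and (entry / "SKILL.md").is_file()
    )


if __name__ == "__main__":
    # ~/.claude/skills is the example location mentioned above.
    root = Path.home() / ".claude" / "skills"
    for name in list_installed_skills(root):
        print(name)
```

The point of the exercise is that an installed skill is just files in a folder, which is why it is easy to inspect, diff, and version.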

Vercel and Vercel Labs launched the skills.sh registry and the skills CLI that made this possible. The command npx skills add [owner/repo] gives you a one-line installation path for any skill published to the registry or hosted on GitHub. That’s a developer experience that people already understand. It’s the same mental model as npm install. The barrier to adoption is essentially zero for anyone who has ever worked with Node.

Who Is Using This

The list of organizations publishing skills through npx is growing quickly, and it tells you something about where this is heading.

Better Auth publishes skills that teach agents how to work with their authentication library using npx skills add better-auth/skills. Neon Database offers npx skills add neondatabase/agent-skills to help agents understand serverless Postgres best practices. Nx ships skills for managing monorepo complexities and package linking. Google Workspace released a CLI that lets you run npx skills add github:googleworkspace/cli to integrate Google service interactions directly into your agent’s context.

Mintlify auto-generates skills from documentation sites, which means any API with decent docs can automatically become a skill that agents consume directly. Individual developers are publishing specialized skills for everything from newspaper-style content formatting to framework-specific coding patterns.

The pattern is clear. Library maintainers, platform providers, and individual developers are all converging on the same distribution mechanism. That’s how you know it’s working.

Why This Matters for the Enterprise

Here’s where my perspective on this differs from most of what I’ve been reading. The npx skills model isn’t just a convenience for individual developers. It’s an enterprise distribution strategy that solves real problems organizations are struggling with right now.

Every enterprise I talk to is dealing with the same challenge: how do you ensure that AI coding agents across your organization follow your internal standards, use your APIs correctly, and don’t generate code that violates your architectural patterns? The answer isn’t a training program. The answer isn’t a wiki that developers are supposed to read before they prompt. The answer is distributing that knowledge as skills that get installed once and applied automatically.

Think about what this means in practice. Your platform team publishes an internal skill for your authentication service. Every developer who installs it gets an agent that knows your auth patterns, your token handling, your error codes — without ever reading the internal documentation. Your API team publishes a skill for each production API. Agents consuming those skills generate correct client code on the first try because they have the context they need.
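One way a platform team might verify that rollout is a small check that reports which required skills a developer machine is missing. The sketch below is an illustration under assumptions: the skill names are invented, the `~/.claude/skills` location is the example folder used earlier in this post, and the one-folder-per-skill layout is assumed rather than guaranteed.

```python
from pathlib import Path
from typing import Iterable, List


def missing_skills(required: Iterable[str], skills_root: Path) -> List[str]:
    """Return required skill names with no <skills_root>/<name>/SKILL.md."""
    return sorted(
        name
        for name in set(required)
        if not (skills_root / name / "SKILL.md").is_file()
    )


if __name__ == "__main__":
    # Hypothetical internal skill names, for illustration only.
    required = ["acme-auth", "acme-billing-api"]
    root = Path.home() / ".claude" / "skills"
    for name in missing_skills(required, root):
        print(f"missing skill: {name}")
```

A check like this could run in onboarding scripts or CI images, so the standard is enforced by tooling rather than by asking people to read a wiki.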

This is context engineering at the distribution layer. You’re not just engineering the context for one conversation or one developer. You’re packaging it and shipping it to every agent in the organization through the same infrastructure you already use for code dependencies.

The Registry Question

Right now, skills.sh serves as the primary public registry, and GitHub repositories serve as the decentralized alternative. For enterprises, the question becomes whether you need a private registry — and the answer is almost certainly yes. The same way organizations run private npm registries for internal packages, they’ll need private skills registries for internal agent context.

The good news is that this infrastructure already exists. If you’re running Artifactory, Verdaccio, or GitHub Packages for your npm dependencies, extending that to skills distribution is not a significant lift. The packaging format is simple. The distribution mechanism is proven. The authentication and access control patterns are well-understood.

NPX as the Package Manager for AI

What we’re witnessing is npx, strictly speaking a package runner, taking on the role of package manager for AI agent capabilities. Not for model weights. Not for inference infrastructure. For the context and knowledge that determines whether an agent produces useful output or generic noise. That’s a different layer than what most people think about when they think about AI infrastructure, but it might be the most important one for day-to-day development.

The agents themselves are commoditizing. Claude Code, Cursor, Windsurf, GitHub Copilot, Gemini CLI, Roo Code, Trae — they all work with this model. The differentiator isn’t which agent you use. It’s what skills that agent has access to. And the organizations that figure out how to package and distribute their institutional knowledge as skills are going to have agents that are dramatically more useful than the ones running on generic context alone.

What This Means for Naftiko

From where I sit, the npx skills distribution model validates a lot of what we’ve been building toward at Naftiko. Our capability specification is designed to make API integrations discoverable, testable, and consumable by agents. The skills ecosystem is the distribution layer that gets those capabilities into the hands of developers — or more precisely, into the context windows of their agents.

The intersection of structured API capabilities and npx-distributed skills is where enterprise AI integration actually becomes practical. Not theoretical. Not a demo. Practical, repeatable, and scalable through infrastructure that development teams already know how to operate.

We’re past the point of wondering whether agents will become a primary development interface. They already are. The question now is how you distribute the knowledge they need to be useful in your specific context. The npx skills model has answered that question with something developers have been comfortable with for years: the npm toolchain and a one-line install command.