Accenture is dismantling silos. EY is embedding AI across its assurance and tax practices. Across the professional services industry, the conversation has shifted from “how do we use AI?” to “how do we restructure everything around it?” The ambition is real – integrated reinvention teams, AI-embedded business units, flattened pyramids, platform-based delivery. It sounds like the future.

But there’s a problem that none of these transformation narratives address directly: the integration layer underneath all of it is still held together with duct tape.

What the Signals Tell Us

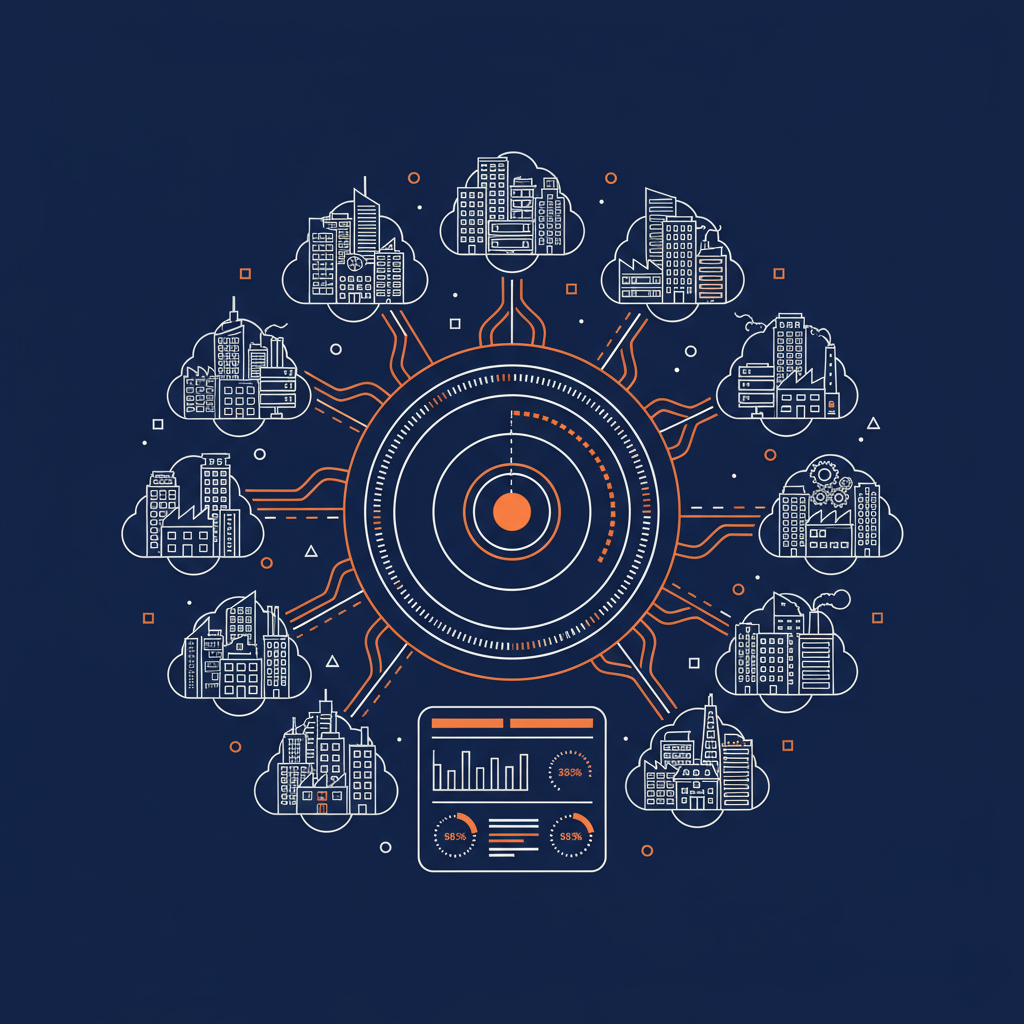

Naftiko’s signal-based analysis of 10 professional services companies – spanning 2,561 technology areas, 435 services, 260 tools, and 239 standards – paints a picture that is both impressive and revealing.

The strengths are where you’d expect them. Services investment leads at 1,762, followed by Data at 905 and Cloud at 896. Security and Operations round out the top five. These firms have deep technology footprints. They are not under-invested in the raw materials of AI transformation.

But look at where the gaps are, and the structural challenge comes into focus.

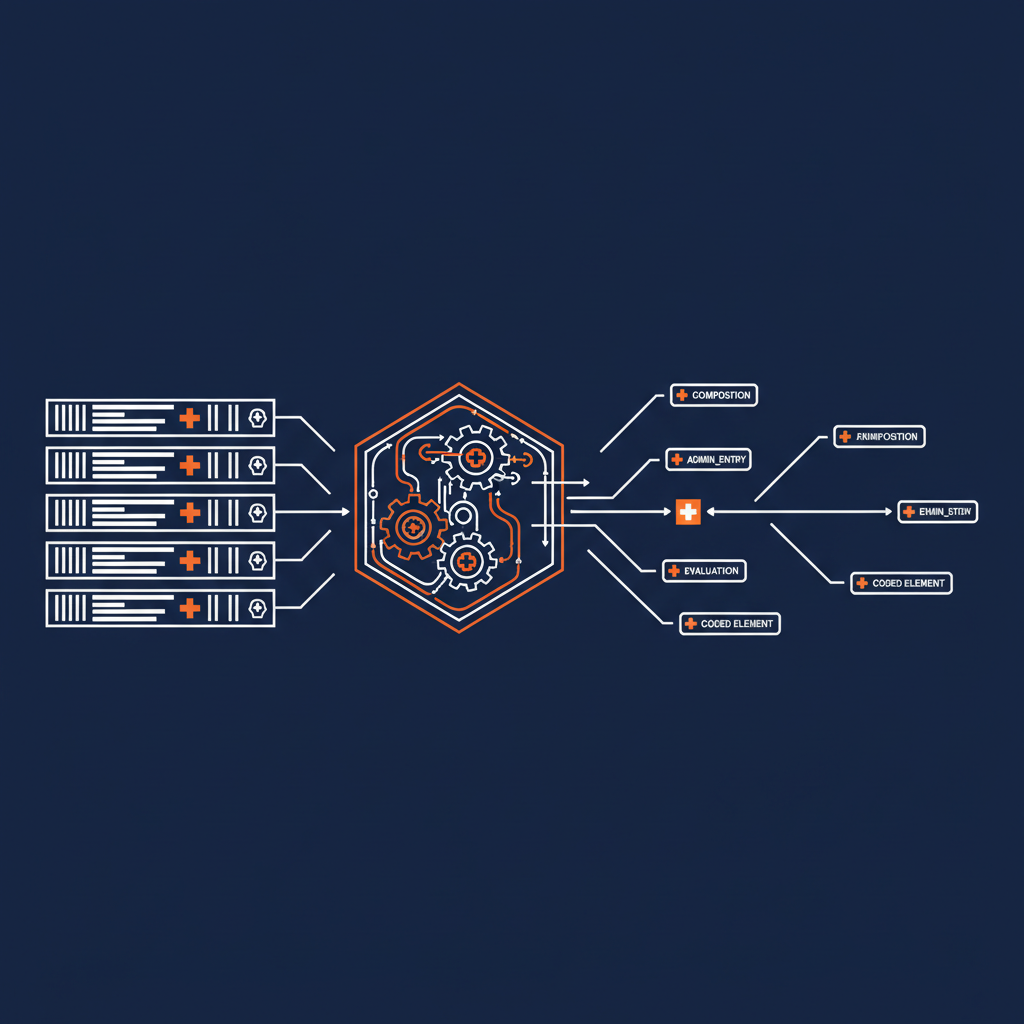

Specifications scored 50. In an industry that is trying to shift from bespoke projects to reusable platform models, the investment in the standards that make reuse possible – OpenAPI, AsyncAPI, JSON Schema – is barely registering. You cannot build a governed, discoverable capability inventory on top of undocumented, unspecified APIs.
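To make the point concrete, here is a minimal sketch of what "unspecified" looks like in practice. It assumes a hypothetical, trimmed-down OpenAPI 3 document held as a Python dict (the `Engagement API`, its paths, and the `undocumented_operations` helper are all illustrative, not drawn from any firm's systems) and flags operations that lack an `operationId` or `summary`, the two fields a catalog or agent would most plausibly rely on to find them.

```python
# Hypothetical, trimmed-down OpenAPI 3 document expressed as a Python dict.
SPEC = {
    "openapi": "3.0.3",
    "info": {"title": "Engagement API", "version": "1.0.0"},
    "paths": {
        "/engagements": {
            "get": {
                "operationId": "listEngagements",
                "summary": "List client engagements",
                "responses": {"200": {"description": "OK"}},
            },
            "post": {
                # No operationId or summary: hard for a person or an agent to discover.
                "responses": {"201": {"description": "Created"}},
            },
        }
    },
}

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def undocumented_operations(spec: dict) -> list[str]:
    """Return 'METHOD path' for operations lacking an operationId or a summary."""
    missing = []
    for path, item in spec.get("paths", {}).items():
        for method, operation in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters"
            if not operation.get("operationId") or not operation.get("summary"):
                missing.append(f"{method.upper()} {path}")
    return sorted(missing)


print(undocumented_operations(SPEC))
```

A check like this is deliberately crude. The useful part is that it can only run at all once a specification exists to inspect.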

Context Engineering scored 0. Zero. The very discipline of delivering the right context to AI systems – the thing that determines whether copilots and agents produce useful outputs or hallucinated garbage – has no measurable investment signal at any of the 10 firms analyzed.

Domain Specialization scored 13. Despite every firm talking about industry-specific AI solutions, the actual investment in domain-specialized infrastructure is negligible.

SaaS scored 28. Privacy & Data Rights scored 34. AI FinOps scored 43. The economic and governance layers that would make AI-native operations sustainable at scale are all in the bottom five.

The Operating Model Problem

The research coming out of the professional services world right now tells a compelling story about structural reinvention. Accenture is moving from siloed business units to integrated, outcome-focused teams. EY is weaving AI into its core service lines – using machine learning to automate audit procedures, accelerate tax compliance workflows, and deliver real-time advisory insights across its global practice. Both firms are redistributing technology ownership from central IT into business teams. Work is being redesigned around the assumption that AI handles execution while humans focus on orchestration and judgment.

These are the right instincts. But they run headlong into a practical reality: if your 14,000+ internal APIs are undocumented, ungoverned, and invisible to the AI systems that are supposed to orchestrate them, your reinvention stalls at the integration layer.

Consider what Accenture achieved in finance – freeing up 20% of idle cash with machine learning, saving 57,000 hours annually with AI-generated narratives, tripling receivables automation. Or look at EY, which has invested over $1.4 billion in AI and has been rolling AI-driven automation into audit, tax, and consulting engagements – reducing cycle times and enabling teams to focus on higher-value judgment work. These are genuine, measurable outcomes. But each one required custom integration work against specific systems, specific data models, specific APIs. They are victories of craftsmanship, not of a repeatable capability architecture.

The question isn’t whether Accenture or EY can build impressive AI-powered solutions one at a time. They clearly can. The question is whether their operating models can scale that approach across thousands of use cases without the integration layer collapsing under its own weight.

Adoption vs. Outcomes – and the Infrastructure Between Them

One of the sharpest critiques in the current discourse is the distinction between measuring AI adoption and measuring AI outcomes. Making AI a promotion requirement, as Accenture has done, drives compliance. But as several observers have pointed out, adoption of a technology doesn’t necessarily lead to value creation from that technology.

What’s missing from this debate is the infrastructure gap that sits between adoption and outcomes. An AI agent cannot automate a workflow that has never been defined. It cannot generate reliable insights without access to high-quality data. It cannot scale expertise if that expertise is not captured in a structured way. These are real constraints, and they are precisely what shows up as the zero and low double-digit scores in our signal analysis.

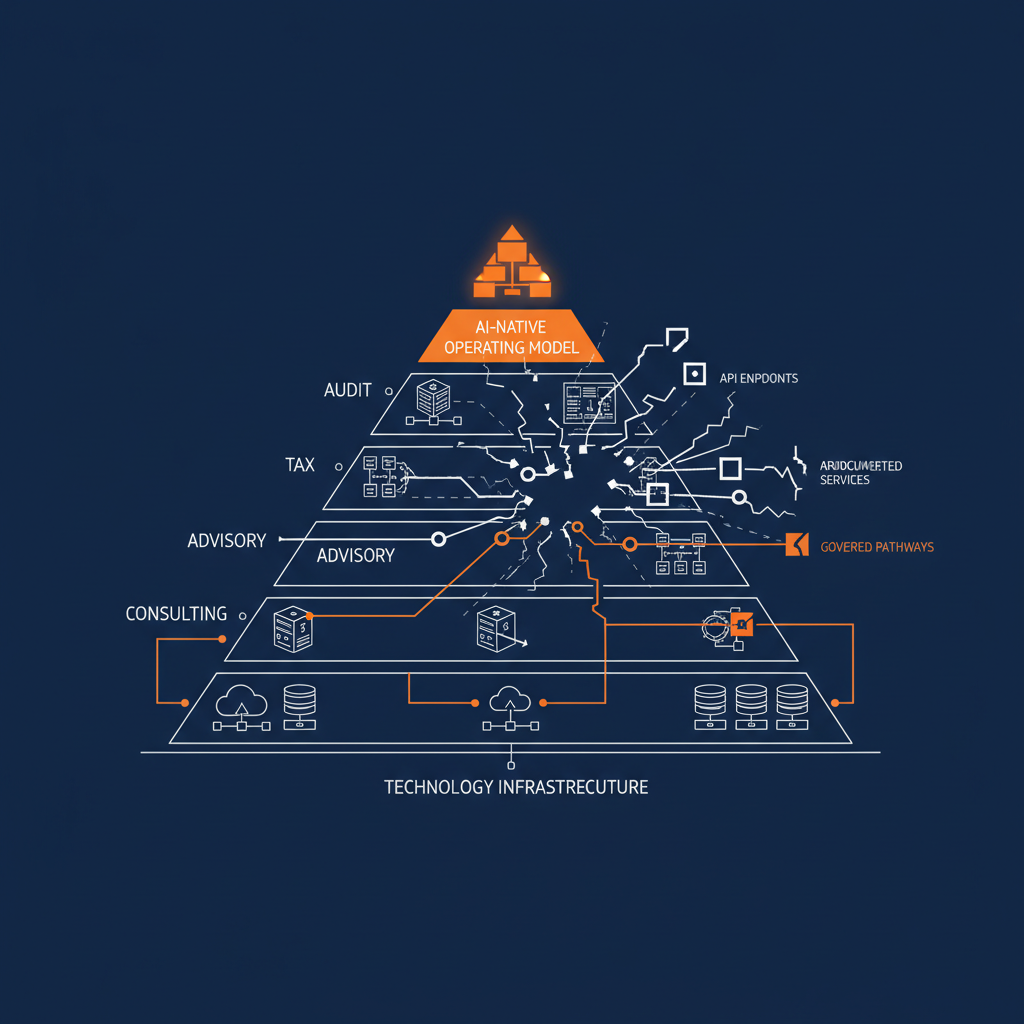

When firms like Accenture and EY talk about shifting from projects to platforms, from bespoke delivery to reusable assets, they are describing a world that requires:

- Discoverable APIs – not just documented, but findable and evaluable by both humans and AI systems.

- Governed integration patterns – not shadow MCP servers and ad-hoc agent connections, but policy-driven, auditable capability boundaries.

- Specification-first infrastructure – where what a service consumes, exposes, and governs is declared explicitly, not reverse-engineered from production traffic.

- Context engineering – delivering right-sized context to AI rather than dumping raw API complexity into ever-growing tool lists.

This is the capability layer. Not another tool or platform, but the structural pattern that turns existing technology investments into something AI can actually work with at scale.
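The four requirements above can be sketched in a few lines, under loud assumptions: the `Capability` record, the `CATALOG` entries, the policy names, and the word-overlap ranking are all hypothetical stand-ins chosen for illustration, not a description of any vendor's product. Each capability declares what it consumes, exposes, and is governed by, and `select_context` hands an agent only the few permitted capabilities relevant to the task rather than the whole tool list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capability:
    name: str
    description: str
    consumes: tuple[str, ...]  # upstream APIs the capability calls
    exposes: tuple[str, ...]   # operations offered to humans and agents
    policy: str                # identifier of the governing access policy


# Hypothetical catalog entries; a real inventory would be loaded from specifications.
CATALOG = [
    Capability("receivables-aging", "Summarize overdue receivables by client",
               ("erp-invoices",), ("get_aging_summary",), "finance-read"),
    Capability("tax-filing-status", "Report tax filing status by jurisdiction",
               ("tax-engine",), ("get_filing_status",), "tax-read"),
    Capability("audit-sampling", "Select audit samples from a ledger",
               ("erp-ledger",), ("draw_sample",), "audit-read"),
]


def select_context(task: str, allowed_policies: set[str], limit: int = 2) -> list[Capability]:
    """Return at most `limit` permitted capabilities, ranked by word overlap with the task."""
    task_words = set(task.lower().split())
    scored = []
    for cap in CATALOG:
        if cap.policy not in allowed_policies:
            continue  # governance: capabilities outside policy are never offered
        overlap = len(task_words & set(cap.description.lower().split()))
        if overlap:
            scored.append((overlap, cap))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [cap for _, cap in scored[:limit]]


chosen = select_context(
    "summarize overdue receivables for client X",
    {"finance-read", "tax-read"},
)
print([cap.name for cap in chosen])
```

Word overlap is a placeholder for whatever retrieval method a real system would use. The design choice that matters is the order of operations: filter by policy first, then rank, then cap the size of what reaches the model.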

The Pyramid Is Flattening – Into What?

The disruption of the traditional consulting pyramid is one of the most discussed consequences of AI in professional services. When AI automates many junior-level tasks, the economics of scaling human effort as a growth mechanism break down.

But the firms that navigate this transition successfully won’t be the ones that simply cut headcount. They’ll be the ones that replace the pyramid’s function – structured knowledge transfer from senior to junior staff through supervised project work – with something better: a governed inventory of capabilities that encode institutional knowledge in a form that both humans and AI systems can discover, compose, and build on.

This is fundamentally a specification and governance problem, not a hiring problem. And it’s exactly where the professional services industry is currently under-invested.

What Comes Next

The professional services industry is at an inflection point. The firms leading the conversation about AI-native operating models are asking the right strategic questions. But the signal data suggests a meaningful gap between the ambition and the infrastructure needed to realize it.

The firms that close that gap – that invest in specifications, context engineering, governed integration, and capability architecture – will be the ones that actually deliver on the promise of platform-based, AI-native service delivery. The ones that don’t will continue to achieve impressive results one project at a time, but they won’t compound.

The professional services industry’s aggregate technology score of 11,425 reflects serious investment. The question is whether that investment is structured in a way that AI can actually leverage – or whether it remains an archipelago of capabilities that no agent can navigate.