I keep watching teams copy-paste an entire JSON response into a prompt and then wonder why the model is slow, expensive, and distracted. This is the second post in a nine-part walk-through of the Naftiko Framework use cases, and the one I probably care about the most personally — because it is the one that separates people who understand context engineering from people who think context engineering is prompt engineering with a different hat on.

Use case 2 in the framework wiki is Rightsize AI context. Expose only the context an AI task actually needs, reducing noise, improving relevance, and keeping prompts efficient. The wiki version is here: Rightsize AI context.

Upstream APIs were never designed for a model

Here is the part of this that nobody wants to say out loud. The REST APIs inside our enterprises were designed by humans for humans, on product timelines that had nothing to do with LLMs. They carry pagination cursors, audit fields, soft-delete flags, _embedded wrappers, and HATEOAS links to things the model will never follow. They wrap everything in envelope fields like success and meta.requestId, and the payload sits at paths like data.results[0].orders, three layers deep before you reach anything real.

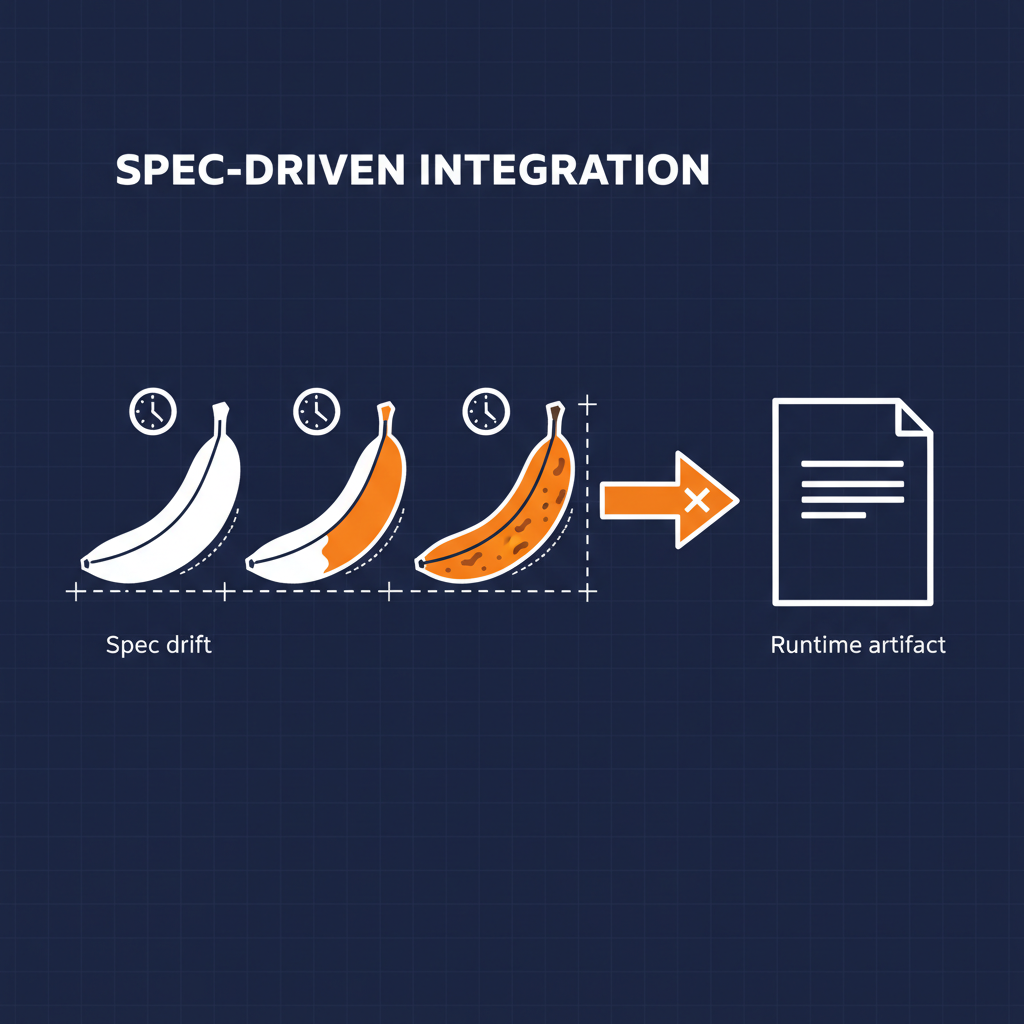

None of that was a mistake at the time. It was the envelope the product team needed. But when you pipe that response straight into a tool-call result and then into a prompt, you are asking the model to pay tokens for every audit field, every null, every internal flag that is irrelevant to the task. And then you are asking it to pick the important thing out of the noise. Token bills climb. Accuracy drops. Latency gets worse. The team blames the model.

The model is not the problem. The pipe is the problem.

The capability spec takes this seriously

The Naftiko capability spec treats this as a first-class concern. The same outputParameters block that makes AI integration feel declarative is where rightsizing lives. You describe the upstream operation in a consumes block. You describe the exposed tool or resource in an exposes block. And between the two, you use typed outputParameters with JSONPath mapping to pick exactly which fields the agent sees — and what shape they arrive in.

Here is the pattern I keep reaching for.

    naftiko: "1.0.0-alpha1"
    info:
      title: Customer Orders (AI Surface)
      description: "Trimmed order context for AI assistants — only the fields a task needs"
    capability:
      consumes:
        - namespace: billing
          type: http
          baseUri: "https://api.billing.internal"
          authentication:
            type: bearer
            token: ""
          resources:
            - name: orders
              path: "/v1/orders"
              operations:
                - name: list-open-orders
                  method: GET
                  inputParameters:
                    - name: customerId
                      in: query
                      type: string
                      required: true
      exposes:
        - type: mcp
          namespace: customer-orders-ai
          description: "Customer orders, rightsized for AI tool calls"
          tools:
            - name: list-open-orders
              description: "List only the fields an AI task needs about a customer's open orders"
              hints:
                readOnly: true
                idempotent: true
              call: billing.list-open-orders
              inputParameters:
                - name: customerId
                  type: string
                  description: "The customer whose open orders we need"
                  required: true
                  mapping: "queryParameters.customerId"
              outputParameters:
                - type: array
                  mapping: "$.data.results[0].orders"
                  items:
                    type: object
                    properties:
                      - name: orderId
                        type: string
                        mapping: "$.id"
                      - name: amount
                        type: number
                        mapping: "$.totalAmount"
                      - name: status
                        type: string
                        mapping: "$.status"
                      - name: source
                        type: string
                        const: "billing-platform"

The upstream response to GET /v1/orders?customerId=... in real life probably looks like this:

    {
      "success": true,
      "meta": { "requestId": "abc123", "latencyMs": 42, "version": "2019.3" },
      "data": {
        "results": [
          {
            "orders": [
              {
                "id": "ord_001",
                "customerId": "cus_abc",
                "totalAmount": 149.99,
                "status": "open",
                "lineItems": [ /* twenty nested objects */ ],
                "_embedded": { /* a tree of related resources */ },
                "_links": { /* HATEOAS */ },
                "createdAt": "...",
                "updatedAt": "...",
                "deletedAt": null,
                "softDelete": false,
                "auditFields": { /* who changed what when */ }
              }
            ]
          }
        ]
      }
    }

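What the model actually needs to answer “what open orders does this customer have?” is the small array the outputParameters block describes: three fields mapped from each upstream order (orderId, amount, status) plus the constant source tag. The rest is noise. The capability spec, not the prompt, decides that.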
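To make the effect of that mapping concrete, here is a minimal Python sketch of the same projection done by hand. It is not how the framework implements outputParameters; it only mimics the result. The sample envelope is an abbreviated, hypothetical stand-in for the upstream response, and the names FIELD_MAP, CONSTANTS, and rightsize are made up for illustration.

```python
from typing import Any

# Hypothetical, abbreviated stand-in for the upstream envelope shown above.
UPSTREAM: dict[str, Any] = {
    "success": True,
    "meta": {"requestId": "abc123", "latencyMs": 42, "version": "2019.3"},
    "data": {
        "results": [
            {
                "orders": [
                    {
                        "id": "ord_001",
                        "customerId": "cus_abc",
                        "totalAmount": 149.99,
                        "status": "open",
                        "_embedded": {},  # placeholder for related resources
                        "_links": {},  # placeholder for HATEOAS links
                        "deletedAt": None,
                        "softDelete": False,
                    }
                ]
            }
        ]
    },
}

# Exposed field name -> upstream key, mirroring the property mappings in the spec.
FIELD_MAP = {"orderId": "id", "amount": "totalAmount", "status": "status"}
# Static fields, mirroring the const property in the spec.
CONSTANTS = {"source": "billing-platform"}


def rightsize(envelope: dict[str, Any]) -> list[dict[str, Any]]:
    """Walk to data.results[0].orders and keep only the mapped fields."""
    orders = envelope["data"]["results"][0]["orders"]
    return [
        {**{out: order[src] for out, src in FIELD_MAP.items()}, **CONSTANTS}
        for order in orders
    ]


trimmed = rightsize(UPSTREAM)
print(trimmed)
```

In this sketch a missing upstream key raises KeyError instead of silently producing a partial object. That is a choice made for the example, not a statement about how the framework handles missing fields.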

Three dimensions again

Same lens I apply to every API topic — technology, business, politics.

Technology. Token count per tool call drops by an order of magnitude in most cases I have measured. The model is not re-parsing _embedded links it cannot follow. The typed schema on the output means the tool description surfaced to the agent is tight and self-explanatory. Discovery improves because the MCP tool schema is clean, not a pass-through of a sprawling upstream contract.
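One rough way to check a claim like this against your own payloads is to compare serialized sizes before and after trimming. The sketch below uses the character length of compact JSON as a crude proxy for tokens; a real tokenizer will give different numbers. The envelope is hypothetical, modeled on the example response earlier in this post, and the sku and qty line-item fields are invented filler.

```python
import json

# Hypothetical upstream envelope, modeled on the example response in this post.
# The sku/qty line-item fields and the empty nested blocks are placeholders.
envelope = {
    "success": True,
    "meta": {"requestId": "abc123", "latencyMs": 42, "version": "2019.3"},
    "data": {
        "results": [
            {
                "orders": [
                    {
                        "id": "ord_001",
                        "customerId": "cus_abc",
                        "totalAmount": 149.99,
                        "status": "open",
                        "lineItems": [
                            {"sku": f"sku_{i}", "qty": 1} for i in range(20)
                        ],
                        "_embedded": {},
                        "_links": {},
                        "deletedAt": None,
                        "softDelete": False,
                        "auditFields": {},
                    }
                ]
            }
        ]
    },
}

# What the rightsized tool result would carry for the same order.
trimmed = [
    {
        "orderId": order["id"],
        "amount": order["totalAmount"],
        "status": order["status"],
        "source": "billing-platform",
    }
    for order in envelope["data"]["results"][0]["orders"]
]


def payload_chars(obj: object) -> int:
    """Length of the compact JSON encoding, used as a rough size proxy."""
    return len(json.dumps(obj, separators=(",", ":")))


full_size = payload_chars(envelope)
trimmed_size = payload_chars(trimmed)
print(
    f"full={full_size} chars, trimmed={trimmed_size} chars, "
    f"ratio={full_size / trimmed_size:.1f}x"
)
```

The ratio you see depends entirely on how heavy the upstream record is, so treat the number from this toy payload as a shape check, not a benchmark.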

Business. Somebody pays for every token. Rightsizing is cost engineering. The capability owner decides what the model sees, which means the cost and accuracy of every AI tool call can be reviewed as a code diff, not discovered six weeks in when the bill arrives.

Politics. This is the one that matters to me most. When you let the upstream envelope reach the model, you have ceded context design to whichever team shipped the upstream API, whenever they shipped it, for whatever reason. Rightsizing in the capability spec moves that decision back to the team accountable for the AI surface. That is a governance shift, not a performance tweak.

What the framework wiki calls out for this use case

These are the features the wiki highlights specifically for rightsize AI context — and every one of them earns its place:

- Declarative applied capability exposing MCP — the exposed surface is a declaration, not code

- Applied capabilities with reused source capabilities — one upstream, many rightsized exposures for different tasks

- MCP over Streamable HTTP and Standard IO — remote and local agent clients both work

- Output shaping with typed parameters and JSONPath mapping — the core primitive that makes rightsizing real

- Fine-grained field selection and nested object mapping — pull exactly the fields a task needs, no more

- const values to inject static context — add provenance or tag fields without a separate call

- Typed MCP tool input parameters with descriptions — discovery is semantic, not guesswork

- Required/optional parameter declarations for agent discovery — the model learns the contract from the spec

Each one is a knob you would otherwise be building inside your tool code.
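As a rough illustration of what building those knobs by hand looks like, here is a small Python sketch of "one upstream, many rightsized exposures": the same upstream records projected into two different task-specific shapes. The exposure names order-status and order-totals, the EXPOSURES table, and the project helper are all hypothetical; in the framework this would live in the declarative spec, not in code like this.

```python
from typing import Any

# Hypothetical upstream records, already unwrapped from their envelope.
upstream_orders: list[dict[str, Any]] = [
    {
        "id": "ord_001",
        "customerId": "cus_abc",
        "totalAmount": 149.99,
        "status": "open",
        "deletedAt": None,
        "softDelete": False,
    },
]

# Two hypothetical exposures over the same source, each with its own
# field selection (exposed name -> upstream key) and static const values.
EXPOSURES: dict[str, dict[str, dict[str, Any]]] = {
    "order-status": {
        "fields": {"orderId": "id", "status": "status"},
        "const": {},
    },
    "order-totals": {
        "fields": {"orderId": "id", "amount": "totalAmount"},
        "const": {"source": "billing-platform"},
    },
}


def project(
    records: list[dict[str, Any]], exposure: dict[str, dict[str, Any]]
) -> list[dict[str, Any]]:
    """Keep only the selected fields, renamed, then add the const values."""
    return [
        {
            **{out: rec[src] for out, src in exposure["fields"].items()},
            **exposure["const"],
        }
        for rec in records
    ]


views = {name: project(upstream_orders, spec) for name, spec in EXPOSURES.items()}
for name, rows in views.items():
    print(name, rows)
```

Even this toy version needs a lookup table, a projection function, and a decision about missing keys, which is the hand-written tool code the declarative features are meant to replace.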

Where I keep landing on this

I think context engineering is going to be the area where the difference between teams that ship quietly and teams that keep missing quarterly targets becomes the most visible. Not model selection. Not prompt templates. Not agent frameworks. The humble work of deciding what the model actually needs to see, and making that decision in a place where somebody else can review it.

The capability spec is where that decision belongs. outputParameters with JSONPath mapping is the primitive. Everything else is commentary.

Next

Next post in the series is Elevate an existing API — wrapping a legacy or cranky internal API as a capability so it becomes easier to reuse across teams and channels. Same three-dimensional lens.

- Wiki: Guide — Use Cases

- GitHub: github.com/naftiko/framework

- Fleet Community Edition: github.com/naftiko/fleet

Walking the list.