Snowflake publishes 38 OpenAPI specs. I have been staring at them for a week. They cover everything — Account, Database, Warehouse, Cortex, Dynamic Tables, Iceberg Tables, Notebooks, and on and on. If you are writing code against Snowflake, that catalog is what you want.

It is not what you want if you are wiring a copilot, an agent, or a Claude-Desktop-style IDE client into Snowflake.

38 OpenAPIs means 38 surfaces, 38 auth patterns to reason about, 38 sets of request envelopes a model has to understand. That is not an integration layer. That is raw material.

So I spent today turning the raw material into a capability surface. Everything I am going to describe is in Git, and the pattern is the same one the Naftiko framework wiki has in its Guide — Use Cases.

The shape

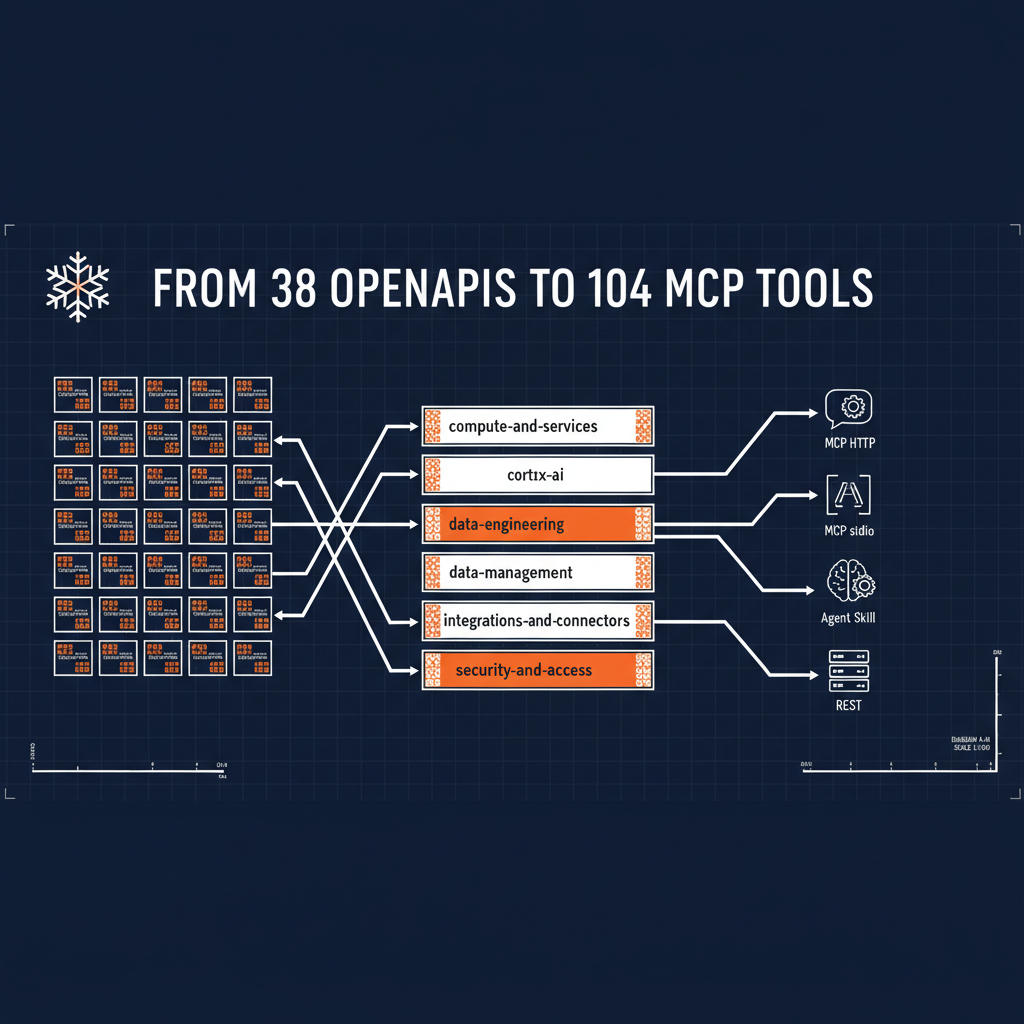

Six workflow capabilities wrap the 38 Snowflake APIs, each built for a specific audience:

- compute-and-services — for platform engineers and DevOps: warehouses, compute pools, Snowpark Container Services, image repositories, alerts

- cortex-ai — for ML engineers and data scientists: LLM inference, natural language analytics (Cortex Analyst), Cortex Search, notebooks

- data-engineering — for data engineers: SQL execution, tasks, streams, pipes, stages, functions

- data-management — for DBAs and data engineers: databases, schemas, tables, views, dynamic tables, iceberg tables, event tables

- integrations-and-connectors — for platform engineers and data architects: API integrations, catalog integrations, notification integrations

- security-and-access — for security and platform admins: users, roles, grants, database roles, network policies, account administration

Each one is a single Naftiko capability YAML. Each one imports the matching OpenAPI-derived shared capabilities in its consumes block, and exposes the operations across four surfaces in its exposes block — MCP over HTTP, MCP over stdio, Agent Skills, and REST.

The totals across all six:

- 104 MCP tools (same set exposed over both HTTP and stdio transports)

- 34 Agent Skill tools

- 52 REST operations

- Every tool and every REST operation has typed inputParameters, typed outputParameters with JSONPath mapping, and explicit readOnly, destructive, idempotent hints
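To make the hints concrete, here is a minimal Python sketch of how an agent host could act on them. `ToolHints` and `decide` are hypothetical names, not part of Naftiko or Snowflake, and the policy itself (auto-approve read-only tools, confirm destructive ones, retry only idempotent ones) is an assumption about what a host might do.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolHints:
    """Hypothetical mirror of the readOnly / destructive / idempotent hints."""
    read_only: bool
    destructive: bool
    idempotent: bool


def decide(hints):
    """Return an assumed host-side policy for calling a tool with these hints."""
    return {
        # Ask a human first only when the tool can destroy something.
        "needs_confirmation": hints.destructive,
        # Retrying is safe only when repeating the call has no extra effect.
        "safe_to_retry": hints.idempotent,
        # Read-only, non-destructive tools can run without an approval step.
        "auto_approve": hints.read_only and not hints.destructive,
    }


# Hint values for a read-only listing tool, and for an invented destructive one.
list_warehouses = ToolHints(read_only=True, destructive=False, idempotent=True)
drop_something = ToolHints(read_only=False, destructive=True, idempotent=True)

print(decide(list_warehouses))
print(decide(drop_something))
```

The point of explicit hints is that this decision can be made from the spec alone, before the model ever calls the tool.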

What the output-shaping buys you

This is the part most teams skip and then regret. A Snowflake API returns everything it feels like returning — timestamps, metadata envelopes, pagination junk, internal trace IDs. If you forward that straight into a prompt, the model pays for every byte and has to filter through three levels of nesting to find the field it actually needs.

The capability spec pins down what the agent sees. A list-warehouses tool looks like this, shortened:

```yaml
- name: list-warehouses
  description: "List all warehouses in the account"
  hints:
    readOnly: true
    destructive: false
    idempotent: true
  call: snowflake-warehouse.list-warehouses
  outputParameters:
    - type: array
      mapping: "$.data"
      items:
        type: object
        properties:
          - name: name
            type: string
            mapping: "$.name"
          - name: size
            type: string
            mapping: "$.size"
          - name: state
            type: string
            mapping: "$.state"
```

The model never sees whatever verbose envelope Snowflake returns. It sees an array of three fields. That is what “Rightsize AI context” means in practice — and it is the framework wiki’s use case 2.
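As a rough illustration of what that mapping does, here is a Python sketch that applies the same three-field projection to a response. The `raw` envelope is invented for the example (it is not Snowflake's real response shape), and `pick` and `shape` are hypothetical helpers that handle only plain `$.key` paths, not full JSONPath or the framework's actual engine.

```python
import json


def pick(node, path):
    """Resolve a plain dotted path such as "$.data" (no filters, no wildcards)."""
    if path == "$":
        return node
    if not path.startswith("$."):
        raise ValueError(f"unsupported path: {path}")
    for key in path[2:].split("."):
        node = node[key]
    return node


def shape(response, spec):
    """Project each row under spec['mapping'] down to the declared properties."""
    rows = pick(response, spec["mapping"])
    props = spec["items"]["properties"]
    return [{p["name"]: pick(row, p["mapping"]) for p in props} for row in rows]


# Same structure as the outputParameters entry in the YAML above.
OUTPUT_SPEC = {
    "type": "array",
    "mapping": "$.data",
    "items": {
        "type": "object",
        "properties": [
            {"name": "name", "type": "string", "mapping": "$.name"},
            {"name": "size", "type": "string", "mapping": "$.size"},
            {"name": "state", "type": "string", "mapping": "$.state"},
        ],
    },
}

# Invented verbose envelope, for illustration only.
raw = {
    "request_id": "hypothetical-trace-id",
    "next_page_token": None,
    "data": [
        {"name": "ANALYTICS_WH", "size": "XSMALL", "state": "SUSPENDED",
         "created_on": "2024-01-01T00:00:00Z", "owner": "SYSADMIN"},
        {"name": "LOAD_WH", "size": "MEDIUM", "state": "STARTED",
         "created_on": "2024-02-01T00:00:00Z", "owner": "SYSADMIN"},
    ],
}

shaped = shape(raw, OUTPUT_SPEC)
print(json.dumps(shaped, indent=2))
print(len(json.dumps(raw)), "->", len(json.dumps(shaped)), "characters")
```

Anything the spec does not name never reaches the prompt, so adding a field for the model is a reviewed change to the YAML rather than a side effect of what the upstream API happens to return.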

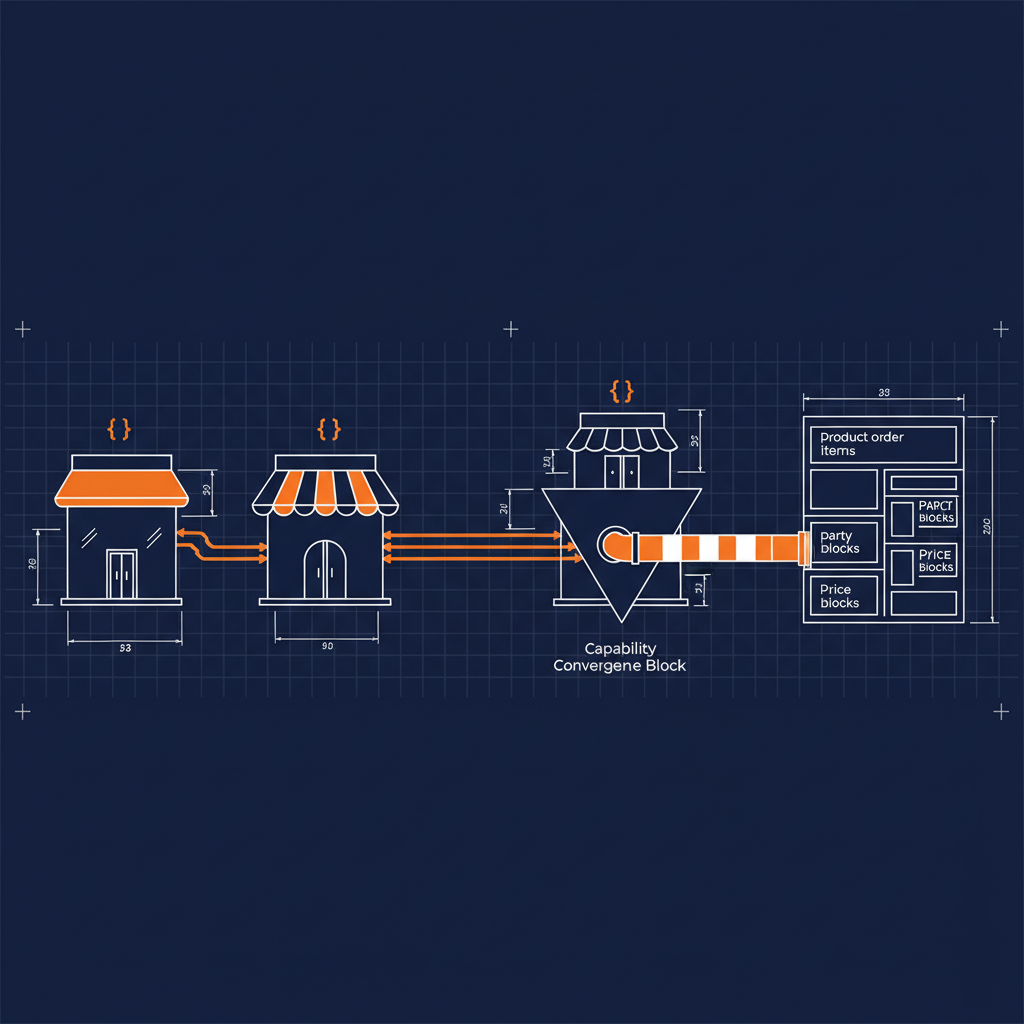

Why four surfaces, not one

Every capability exposes four listeners over the same underlying integration. That is not a flex. It is how you stop rewriting the same wrapper four times.

- MCP over HTTP for remote MCP clients — hosted copilot products, web agents

- MCP over stdio for local IDE/agent clients — Claude Desktop, Cursor, your own CLI

- Agent Skills for agent frameworks that consume the agentskills.io shape

- REST for everything else — internal services, traditional apps, scripts

Same consumes block, same output shaping, same auth, same governance rules. You pick the transport at startup. That is the use case 1 “polyglot exposure” pattern, made concrete across a real provider surface.
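The "one integration, several listeners" idea can be sketched in Python. Everything here is a stand-in: `list_warehouses`, the adapter functions, `SURFACES`, and `start` are hypothetical names, the data is hard-coded, and a real runtime would speak the actual MCP, Agent Skills, and HTTP protocols instead of returning dicts.

```python
import json


def list_warehouses():
    """Stand-in for the shared, already-shaped integration (hard-coded data)."""
    return [{"name": "ANALYTICS_WH", "size": "XSMALL", "state": "SUSPENDED"}]


def mcp_adapter(op):
    # Loosely modeled on an MCP tool result: a list of text content blocks.
    return lambda: {"content": [{"type": "text", "text": json.dumps(op())}]}


def skill_adapter(op):
    # Placeholder wrapper; the real Agent Skills shape is not reproduced here.
    return lambda: {"result": op()}


def rest_adapter(op):
    # Placeholder for an HTTP handler returning a status and a JSON body.
    return lambda: {"status": 200, "body": op()}


# Four surfaces over one operation; both MCP transports share one adapter.
SURFACES = {
    "mcp-http": mcp_adapter,
    "mcp-stdio": mcp_adapter,
    "skill": skill_adapter,
    "rest": rest_adapter,
}


def start(surface):
    """Pick the surface at startup; the wrapped operation never changes."""
    return SURFACES[surface](list_warehouses)


for name in SURFACES:
    print(name, start(name)())
```

Only the thin adapter differs per surface, which is why the output shaping and governance rules need to be written once.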

What I did not do

I did not rename a single tool. Every call: target I touched today already existed in the shared capabilities. I am not reshaping Snowflake — I am reshaping what the model sees of Snowflake.

I also did not add anything Snowflake does not already expose. The 104 MCP tools correspond to real Snowflake REST operations. The governance, the typing, and the rightsizing happen in the capability layer, not in a fork of Snowflake’s API.

Where to find it

- Capability YAMLs: github.com/api-evangelist/snowflake/tree/main/capabilities

The YAMLs in api-evangelist/snowflake provide the canonical spec. The capabilities are derived mirrors for the apis.io index. The Snowflake page lists all 47 Snowflake APIs, all 6 workflow capabilities, and the tool-count totals.

Why this matters past Snowflake

Snowflake is the proof. The pattern is the point.

Every large enterprise provider I look at — Salesforce, ServiceNow, SAP, Workday, Databricks — ships the same raw material. A large OpenAPI catalog, a lot of auth complexity, a lot of envelope noise, no governed agent surface. The capability layer is what turns “we have APIs” into “we have an agent-ready product.”

That is the work. Six workflow capabilities per major provider. Four surfaces per capability. Typed inputs, rightsized outputs, explicit hints, one spec in Git.

Snowflake was today. Next one is coming.

- Naftiko Framework (Apache 2.0): github.com/naftiko/framework

- Fleet Community Edition: github.com/naftiko/fleet

- Wiki — Guide — Use Cases: github.com/naftiko/framework/wiki/Guide-%E2%80%90-Use-Cases