These are observations I’ve been collecting over the past several months — from building with AI tools, watching the space evolve, and talking to engineers at every stage of adoption. A few of them genuinely changed how I’m thinking about the industry. I’ve tried to do more than just list them — I want to explain why they matter, and what they mean taken together.

The best prototype wins. Not the finished product.

There’s a quiet revolution happening in how software gets shipped, and it doesn’t look like what anyone predicted. The best teams aren’t spending weeks polishing a single build — they’re racing to create many rough prototypes simultaneously and shipping whichever one wins. Products that once took months to ship are launching in days.

There’s even a name for the underlying method: slot machine programming. Build twenty implementations. Pick the best. Throw the rest away. It sounds wasteful, but it’s not — because the cost of generating a working prototype has collapsed. The build-then-discard cycle isn’t waste anymore; it’s strategy.
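To make the pick-the-best step concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `pick_best` helper, the three hand-written `clamp` candidates standing in for generated implementations, and the pass-rate scoring against a tiny table of cases. In practice the selection step usually leans on human judgment as much as on automated checks.

```python
from typing import Callable, Sequence, Tuple, TypeVar

T = TypeVar("T")


def pick_best(candidates: Sequence[T], score: Callable[[T], float]) -> Tuple[T, float]:
    """Score every candidate once and return the top one with its score."""
    if not candidates:
        raise ValueError("need at least one candidate")
    scores = [score(c) for c in candidates]
    # max() returns the first maximal index, so ties go to the earliest candidate.
    best_index = max(range(len(scores)), key=scores.__getitem__)
    return candidates[best_index], scores[best_index]


# Hypothetical candidates: three attempts at clamp(x, lo, hi).
def clamp_a(x, lo, hi):
    return max(lo, min(x, hi))  # correct


def clamp_b(x, lo, hi):
    return min(lo, max(x, hi))  # min and max swapped


def clamp_c(x, lo, hi):
    return x if x > lo else lo  # forgets the upper bound


# Hypothetical acceptance cases: (arguments, expected result).
CASES = [((5, 0, 10), 5), ((-1, 0, 10), 0), ((11, 0, 10), 10)]


def pass_rate(fn) -> float:
    """Fraction of CASES the candidate gets right."""
    passed = sum(1 for args, expected in CASES if fn(*args) == expected)
    return passed / len(CASES)


winner, winner_score = pick_best([clamp_b, clamp_c, clamp_a], pass_rate)
print(winner.__name__, winner_score)
```

The scoring function is the part that encodes taste. Swapping `pass_rate` for a benchmark, a lint score, or a reviewer's rating changes which prototype wins without touching the selection loop.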

What this means for teams is a shift in competitive advantage. The premium is no longer on who can build something slowly and carefully — it’s on who can iterate fastest and judge quality most accurately. Taste, judgment, and the ability to spot a winner quickly are becoming the scarcest resources on an engineering team.

The IDE is evolving. So is the person sitting in front of it.

We tend to assume that our tools are fixed and our habits adapt. But the trajectory flips this: the next generation of IDEs won’t be built around editing code at all. They’ll be built around managing agent workflows, having conversations with AI, and monitoring what those agents are doing.

The current popularity of tools like Claude Code — which our latest AI tooling survey confirms — is just the opening act. The real transformation is that the IDE is becoming a conversation and monitoring interface rather than a text editor. Code, in this framing, is an output of decisions — not the surface where decisions get made.

And yet there’s a bottleneck nobody’s talking about: reading. Five paragraphs of terminal output is already too much for many developers. The direction of travel is clear: most programming will soon happen through voice and visual interfaces — talking to an avatar rather than parsing walls of monospace text. The tools that win won’t just be technically capable. They’ll be legible. Comprehensible. Human.

The constraint on AI-assisted development isn’t intelligence — it’s interface. What developers can’t quickly understand, they can’t effectively direct.

Most engineers are still at the bottom of the curve.

Think of AI adoption as a spectrum of eight levels, running from “no AI” at the bottom to full multi-agent orchestration at the top. The vast majority of working engineers today sit at levels one or two: accepting IDE suggestions, carefully reviewing whatever the model spits out, occasionally asking it to write a function.

That’s not nothing, but it’s also not close to the ceiling. Those who stay at the bottom of the curve will be left behind — not because AI will take their jobs directly, but because the engineers who move up will become so much more capable that the gap becomes unrecoverable.

The good news is that most of the rungs on the ladder are accessible right now, with existing tools. The barrier is mostly mindset and habit, not access.

Legacy architecture is now an AI readiness problem.

Here’s something that didn’t fully land for me until I sat with it: AI agents work effectively only up to roughly half a million to a few million lines of code. If your codebase is a monolith that doesn’t fit inside a context window, agents simply can’t navigate it well. They lose the thread. They hallucinate. They get lost.
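A rough way to see where a codebase sits relative to that range is simply to count its lines. The sketch below is an assumption-laden illustration, not a measurement of what any agent can handle: the `count_source_lines` and `fits_agent_budget` helpers, the file extensions, and the 500,000-line default (taken from the low end of the range above) are all hypothetical choices.

```python
from pathlib import Path
from typing import Iterable

# Assumed set of source extensions; adjust for the languages in your repo.
DEFAULT_EXTENSIONS = (".py", ".js", ".ts", ".go", ".java")


def count_source_lines(root, extensions: Iterable[str] = DEFAULT_EXTENSIONS) -> int:
    """Count lines in every file under root whose suffix is in extensions."""
    wanted = set(extensions)
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in wanted:
            # errors="ignore" keeps the count going past oddly encoded files.
            with path.open("r", encoding="utf-8", errors="ignore") as handle:
                total += sum(1 for _ in handle)
    return total


def fits_agent_budget(lines_of_code: int, budget: int = 500_000) -> bool:
    """True if the line count is within the assumed budget (not a measured limit)."""
    return lines_of_code <= budget
```

Calling `fits_agent_budget(count_source_lines("path/to/repo"))` gives a first-pass answer. Running the same count per service or per package shows which pieces of a monolith would fall under the budget if they were split out.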

This reframes technical debt in an entirely new way. It was always a burden — slowing releases, complicating onboarding, accumulating interest across every sprint. But now a sprawling monolith is also a structural barrier to an entire category of productivity tooling. It’s not just that refactoring is hard; it’s that not refactoring now has compounding costs that weren’t on the balance sheet two years ago.

Enterprise AI adoption will stall — or never happen — at companies whose architecture can’t accommodate agents. That’s a strategic problem, not just a code quality problem.

The knowledge floor has always shifted. This time is not different.

In the 1990s, many serious developers still knew assembly. Today, almost none do, and nobody considers that a crisis. Each generation of tooling has raised the level of abstraction you work at and shrunk what you have to manage by hand. AI is doing the same thing to another layer of the craft.

We will grumble about it. We always do. The grumbling won’t slow it down. What matters is recognizing the pattern early enough to position yourself on the right side of the transition — learning to work at the new level of abstraction rather than defending the old one.

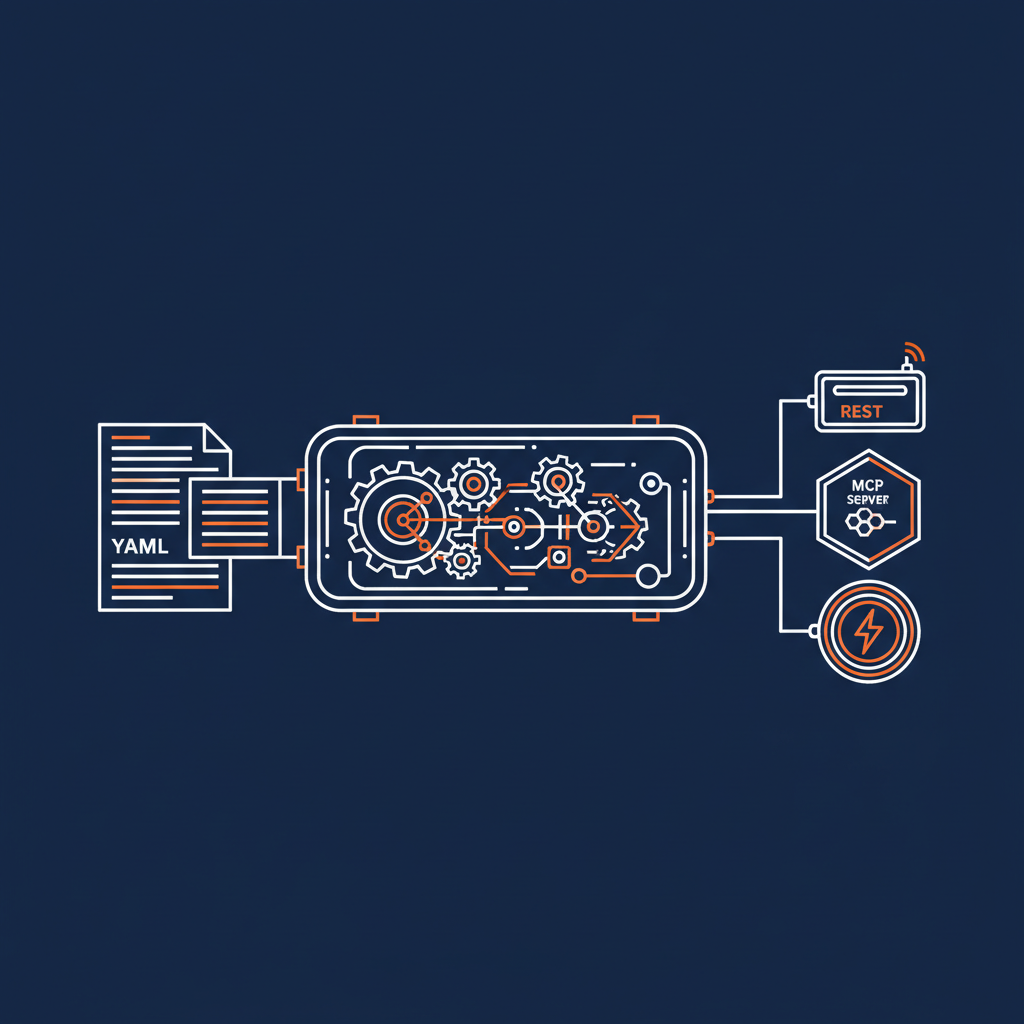

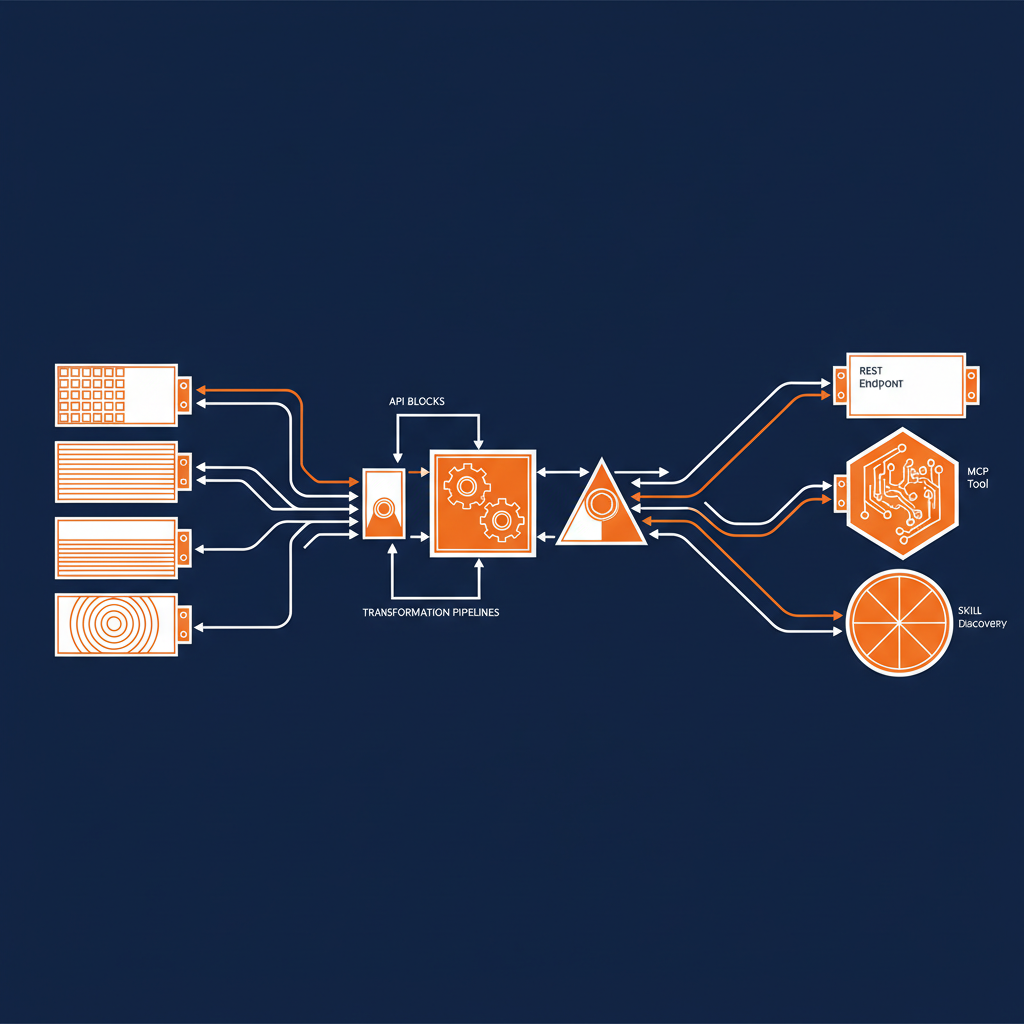

SaaS without an API is a liability, not a product.

The logic is simple: if an incumbent SaaS product doesn’t make itself a platform, AI-native companies will simply build bespoke replacements. Any SaaS product that doesn’t expose APIs is essentially asking customers to route around it.

The mechanism is worth spelling out. AI agents need to interface with software programmatically. If your product doesn’t permit that, an agent can’t help a user work with it. And if an agent can’t work with it, a leaner competitor, one that exposes everything through a clean API from day one, will eat your customer base from below.

The moat is no longer the product. It’s the platform. Interoperability is becoming a survival trait.

The Dracula Effect: productivity gains aren’t free.

This one is the most honest thing I’ve heard said about AI-assisted work in a long time. When AI handles the easy tasks, what’s left? The hard ones. Every day, all day, nothing but hard problems.

I think of it as the **Dracula Effect**: AI drains the easy work from your day, leaving only the cognitively intense tasks behind. You might get three genuinely productive hours out of a session — but in those three hours, you might produce what previously would have taken a week.

That’s powerful. It’s also exhausting in a way that’s different from ordinary exhaustion. The work is denser. The cognitive load is higher per hour. And that changes how you need to think about stamina, focus, and sustainable pace — not just for individuals, but for teams.

A week’s worth of output. Three hours of maximum intensity. Both things can be true simultaneously, and both matter.

Even if the models stop improving, learning this is worth it.

This is the argument I find most useful for anyone still on the fence. We already have models capable enough that better orchestration matters more than smarter models. The marginal gain from learning to work well with agents exceeds the marginal gain from the next benchmark improvement.

And here’s the floor case — the worst-case scenario for investing in AI skills right now: the models plateau. Progress stalls. The hype deflates. What do you have? A durable, transferable skill set for working with systems that already exist and already work. That’s not a bad outcome. That’s a solid career move regardless of what happens next.

The upside scenario, of course, is that progress continues — in which case you’re positioned well for a world that will reward exactly what you’ve been building.

The field is moving fast, but the underlying shift is actually simple: AI isn’t replacing engineers — it’s changing which parts of engineering actually matter. Taste, judgment, architecture, and the ability to direct systems you don’t fully control are moving up the value stack. The engineers who understand this early will have an enormous head start.