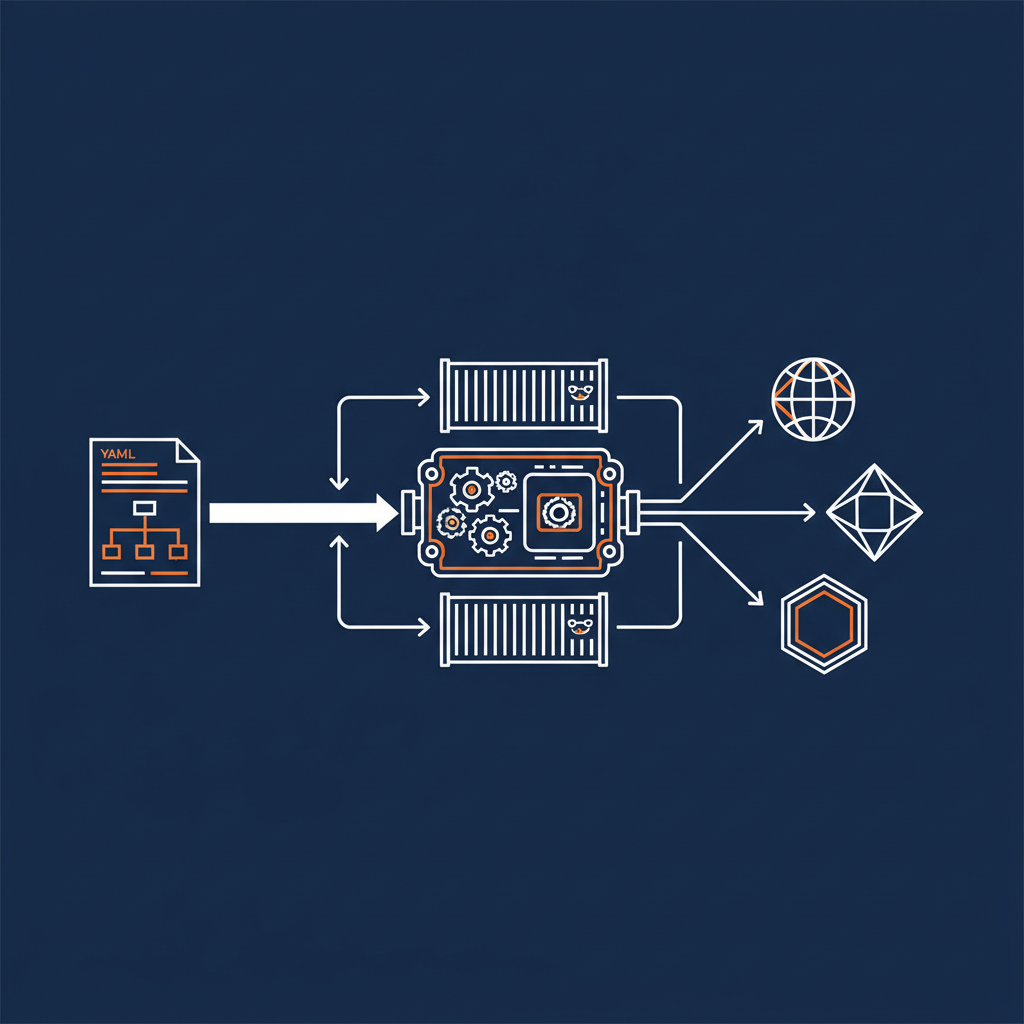

The Naftiko Framework is the open-source runtime engine that powers Spec-Driven Integration. It reads a declarative YAML capability file, runs it inside a Docker container, and immediately exposes live integrations over REST, MCP, or Agent Skills. There is no code generation step, no build pipeline, and no language runtime to manage. The specification is the integration.

The Problem It Solves

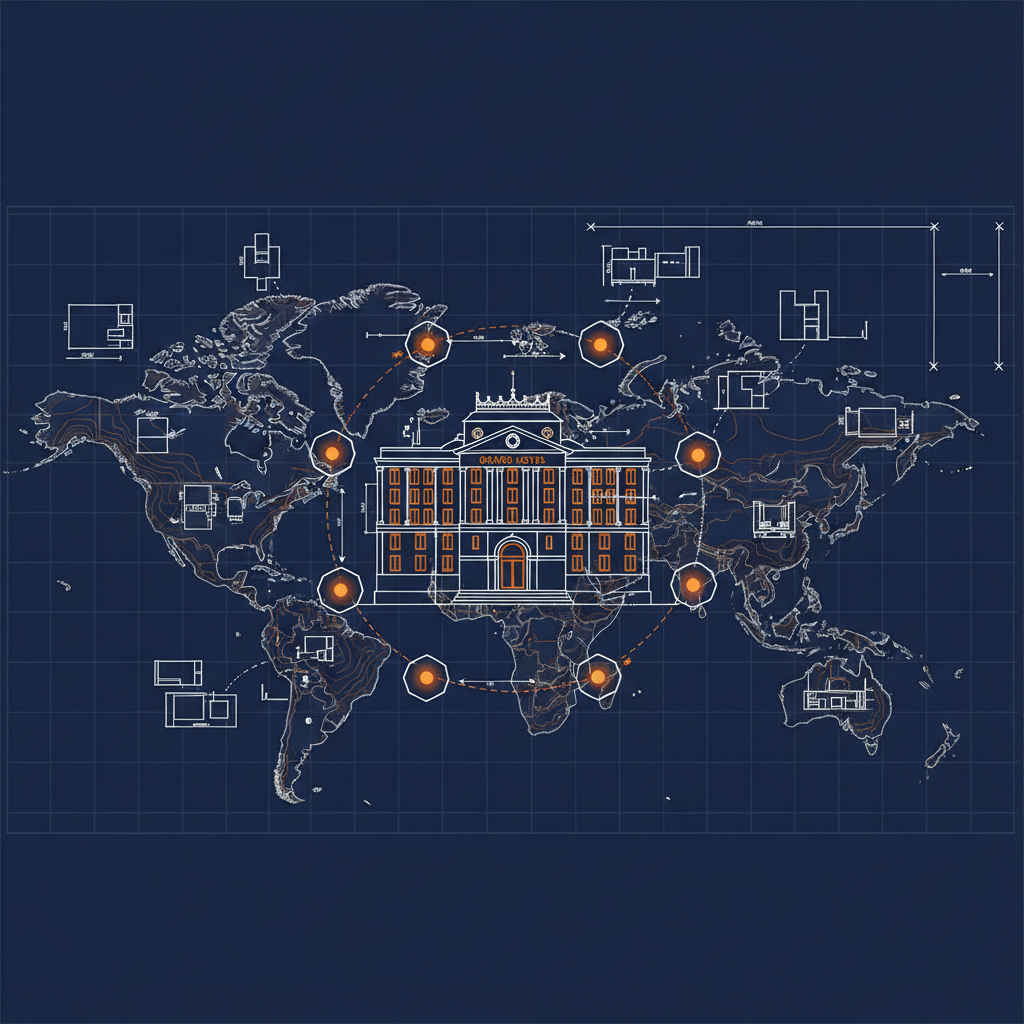

Modern enterprises are drowning in API sprawl. Internal services, third-party SaaS, AI model endpoints, and agent orchestration surfaces multiply faster than teams can document or govern them. The result is fragmented context, duplicated work, and integration logic scattered across code, configuration, and institutional knowledge that drifts out of sync with reality.

The Naftiko Framework treats this as a specification problem. Instead of writing bespoke glue code for every integration, you declare what an integration consumes and what it exposes in a single YAML file. The framework engine handles everything else at runtime.

How It Works

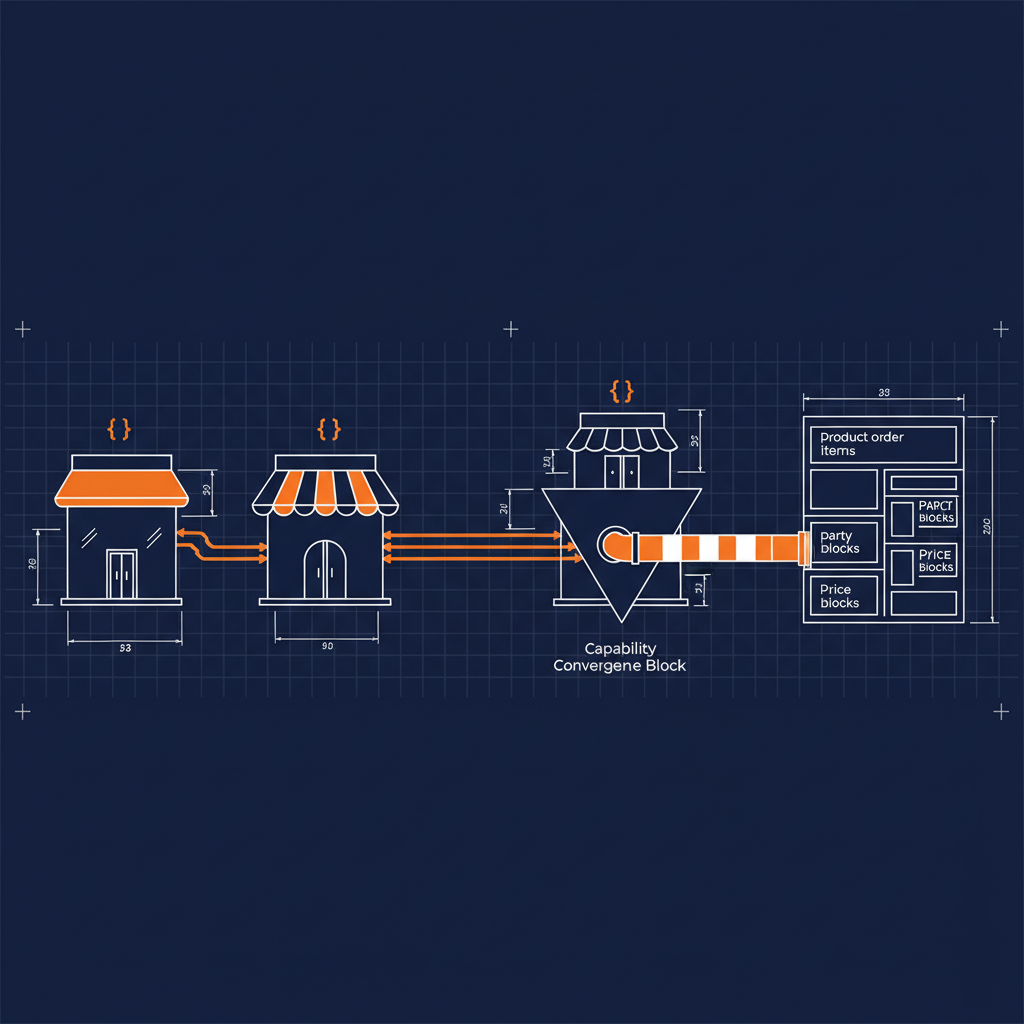

A Naftiko capability file has two sides. The `consumes` section declares the upstream HTTP APIs the capability connects to: base URIs, authentication, endpoints, parameters, and output extraction via JSONPath. The `exposes` section declares how the capability is made available to downstream consumers: as REST resources, MCP tools, or Agent Skills.

Between those two sides, the engine handles authentication (bearer, API key, basic, digest), data format conversion (JSON, XML, Protobuf, Avro, CSV, HTML, Markdown, and more), Mustache-based templating, and multi-step orchestration that lets you call multiple APIs in sequence and compose their results.
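To make the data-shaping step concrete, here is a minimal Python sketch of two of the ideas named above: pulling a value out of an upstream JSON response by path, then feeding it into a Mustache-style template for a second call. It is an illustration only, not the engine's implementation. The `extract` helper handles just a small dotted subset of JSONPath, `render` handles only plain `{{name}}` tags (no escaping, no sections), and the sample payload and field names are hypothetical.

```python
import re

def extract(document, path):
    """Resolve a tiny JSONPath subset such as '$.user.emails[0]'."""
    if not path.startswith("$"):
        raise ValueError("path must start with '$'")
    current = document
    # Each token is either a .name step or an [index] step.
    for name, index in re.findall(r"\.([A-Za-z_]\w*)|\[(\d+)\]", path[1:]):
        current = current[name] if name else current[int(index)]
    return current

def render(template, values):
    """Substitute {{name}} tags; unknown names render as empty strings."""
    return re.sub(
        r"\{\{\s*(\w+)\s*\}\}",
        lambda match: str(values.get(match.group(1), "")),
        template,
    )

# Hypothetical response from a consumed upstream API.
upstream = {"user": {"id": 42, "emails": ["ada@example.com", "ada@work.example"]}}

# Step 1: extract values from the first call's output.
user_id = extract(upstream, "$.user.id")
email = extract(upstream, "$.user.emails[0]")

# Step 2: template them into the request path of a second call.
next_path = render(
    "/users/{{user_id}}/orders?notify={{email}}",
    {"user_id": user_id, "email": email},
)
print(next_path)
```

In a capability file, the same extraction and templating would be declared in YAML rather than written by hand; the sketch only shows what those declarations ask the engine to do.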

Here is the simplest version of the workflow:

- Write a YAML capability file that declares what you consume and what you expose.

- Pull the Naftiko Docker image from GitHub Packages.

- Run the container with your capability file mounted as a volume.

- Your integration is live — query it over REST or connect it to an AI agent via MCP.

    docker pull ghcr.io/naftiko/framework:latest

    docker run -p 8081:8081 \
      -v /path/to/capability.yaml:/app/capability.yaml \
      ghcr.io/naftiko/framework:latest /app/capability.yaml

That is it. No compilation, no deployment pipeline, no application code.
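If you would rather launch the container from a script (for example in CI), the same `docker run` invocation can be assembled programmatically. The sketch below is an optional convenience and not part of the framework: the helper name `docker_run_args` is hypothetical, and the image name, port, and container path are copied from the commands above. It resolves the capability file to an absolute path, which tends to be the most predictable form for a `-v` bind mount.

```python
import os
import shlex

IMAGE = "ghcr.io/naftiko/framework:latest"  # image name taken from the commands above

def docker_run_args(capability_path, host_port=8081, container_port=8081):
    """Build the argv for `docker run`, mirroring the command shown above."""
    # Resolve to an absolute host path so the bind mount is unambiguous.
    host_path = os.path.abspath(capability_path)
    container_path = "/app/capability.yaml"
    return [
        "docker", "run",
        "-p", f"{host_port}:{container_port}",
        "-v", f"{host_path}:{container_path}",
        IMAGE,
        container_path,
    ]

args = docker_run_args("/path/to/capability.yaml")
print(shlex.join(args))
# To actually start the container: subprocess.run(args, check=True)
```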

Multi-Protocol Exposure

A single capability file can expose the same integration over multiple protocols simultaneously. You can serve a REST API for traditional clients and an MCP server for AI agents from the same YAML: same consumed sources, same data shaping, different access patterns.

This matters because the integration surface is fragmenting. Developers need REST endpoints. AI agents need MCP tools. Context engineers need right-sized data. The framework lets you serve all of them from one governed specification rather than maintaining parallel integration stacks.
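The idea of one integration behind several surfaces can be sketched in a few lines of Python. This is a conceptual illustration, not how the framework is built: `get_weather`, the `/weather` path, and the payload are hypothetical stand-ins. The MCP side follows the JSON-RPC 2.0 shape of a `tools/call` request, returning the result as a text content item.

```python
import json

def get_weather(city):
    """Stand-in for the shared capability logic (consume, then shape)."""
    # Hypothetical shaped result; a real capability would call an upstream API.
    return {"city": city, "temperature_c": 21}

def rest_handler(method, path, query):
    """REST surface: GET /weather?city=... returns (status, JSON body)."""
    if method == "GET" and path == "/weather" and "city" in query:
        return 200, json.dumps(get_weather(query["city"]))
    return 404, json.dumps({"error": "not found"})

def mcp_handler(request):
    """MCP surface: answer a JSON-RPC 2.0 `tools/call` request."""
    def error(code, message):
        return {"jsonrpc": "2.0", "id": request.get("id"),
                "error": {"code": code, "message": message}}

    if request.get("method") != "tools/call":
        return error(-32601, "Method not found")
    params = request.get("params", {})
    if params.get("name") != "get_weather":
        return error(-32602, "Unknown tool")
    result = get_weather(params["arguments"]["city"])
    return {
        "jsonrpc": "2.0",
        "id": request.get("id"),
        "result": {"content": [{"type": "text", "text": json.dumps(result)}]},
    }

# Both surfaces are thin adapters over the same shared logic.
status, body = rest_handler("GET", "/weather", {"city": "Paris"})
reply = mcp_handler({
    "jsonrpc": "2.0", "id": 1, "method": "tools/call",
    "params": {"name": "get_weather", "arguments": {"city": "Paris"}},
})
same_payload = json.loads(body) == json.loads(reply["result"]["content"][0]["text"])
print(status, same_payload)
```

With the framework, neither adapter is written by hand; the point of the sketch is only that both access patterns resolve to one definition of what is consumed and how it is shaped.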

The CLI

The framework ships with a CLI tool that simplifies the authoring and validation workflow. You can scaffold a new capability file with `naftiko create capability` and validate any file against the specification schema with `naftiko validate`. The CLI catches structural errors, missing fields, and schema violations before you ever start the engine, the same way a linter catches code issues before you run your application.
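To give a feel for what a structural check looks like, here is a deliberately tiny Python sketch. It is not the CLI's logic, which validates against the full specification schema. It assumes only that a parsed capability file is a mapping with `consumes` and `exposes` sections, and it further assumes (hypothetically) that each section is a non-empty list.

```python
def validate_capability(spec):
    """Return a list of structural problems; an empty list means the checks passed."""
    if not isinstance(spec, dict):
        return ["capability file must be a mapping at the top level"]
    problems = []
    # The two sections every capability is described as having.
    for section in ("consumes", "exposes"):
        entries = spec.get(section)
        if entries is None:
            problems.append(f"missing required section: {section}")
        elif not isinstance(entries, list) or not entries:
            # Assumption for this sketch: sections are non-empty lists.
            problems.append(f"section '{section}' must be a non-empty list")
    return problems

# Hypothetical parsed file that forgot its exposes section.
draft = {"consumes": [{"baseUri": "https://api.example.com"}]}
for problem in validate_capability(draft):
    print(problem)
```

Running a check like this before starting the engine is the same fail-early habit the CLI encourages.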

What Spec-Driven Integration Means in Practice

Spec-Driven Integration is the methodology behind the framework. The core idea is that the specification is the primary integration artifact — not documentation written after the fact, not generated code that drifts from the original intent, but the executable definition itself.

When the specification is the integration, several things follow naturally:

- Governance becomes inspectable. You can lint, diff, and review a YAML file the same way you review code. Changes to an integration show up in version control as changes to the spec.

- AI agents can reason about integrations. A structured, declarative specification is something an AI can parse, validate, and even propose refinements to. Opaque runtime behavior is not.

- Drift disappears. There is no gap between what is documented and what is deployed, because they are the same artifact.

- Reuse becomes measurable. When every integration is a capability file with a clear namespace, consumed sources, and exposed surfaces, you can actually inventory what exists and what is duplicated.
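The inventory point above lends itself to a short sketch. Assuming capability files have already been parsed into dictionaries, a few lines of Python can report which upstream sources are consumed by more than one capability. The key names `namespace` and `baseUri` are assumptions made for illustration and may not match the actual specification schema; the sample data is hypothetical.

```python
from collections import defaultdict

def duplicated_sources(capabilities):
    """Map each consumed base URI to the capabilities using it, keeping only shared ones."""
    users = defaultdict(set)
    for capability in capabilities:
        for source in capability.get("consumes", []):
            users[source["baseUri"]].add(capability["namespace"])
    return {uri: sorted(names) for uri, names in users.items() if len(names) > 1}

# Hypothetical parsed capability files.
inventory = [
    {"namespace": "billing",
     "consumes": [{"baseUri": "https://api.example.com/invoices"}]},
    {"namespace": "support",
     "consumes": [{"baseUri": "https://api.example.com/invoices"},
                  {"baseUri": "https://api.example.com/tickets"}]},
]

print(duplicated_sources(inventory))
# {'https://api.example.com/invoices': ['billing', 'support']}
```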

Use Cases

The framework supports a range of integration patterns out of the box:

- AI integration — Connect AI assistants to business systems through capabilities that expose MCP tools and Agent Skills.

- Context engineering — Shape and filter API responses into right-sized payloads for AI consumption, reducing noise and improving relevance.

- API elevation — Wrap existing APIs as governed capabilities to make them easier to discover, reuse, and consume across teams.

- Microservice composition — Create a simpler capability layer over many microservices to reduce client complexity.

- Monolith rightsizing — Extract focused capabilities from a broad API so consumers get only what they need.
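As a small illustration of the context engineering pattern in the list above, the sketch below trims a verbose API response down to the fields and row count an AI consumer actually needs. The function name `right_size` and the sample records are hypothetical; in the framework, this kind of shaping would be declared in the capability file rather than coded.

```python
def right_size(records, keep, limit):
    """Keep only the named fields from the first `limit` records."""
    return [
        {key: record[key] for key in keep if key in record}
        for record in records[:limit]
    ]

# Hypothetical verbose upstream payload.
tickets = [
    {"id": 1, "title": "Login fails", "internal_notes": "...", "audit_log": ["..."]},
    {"id": 2, "title": "Slow export", "internal_notes": "...", "audit_log": ["..."]},
    {"id": 3, "title": "Typo in email", "internal_notes": "...", "audit_log": ["..."]},
]

compact = right_size(tickets, keep=("id", "title"), limit=2)
print(compact)
```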

Getting Started

The framework is open source and available on GitHub. The wiki includes a two-part tutorial that walks you through building your first capability, a use case guide, the full specification schema, and an FAQ. The engine is packaged as a Docker image, so if you have Docker installed, you can have a running integration in under a minute.

- Naftiko Framework on GitHub

- Installation Guide

- Tutorial Part 1

- Tutorial Part 2

- Specification Schema

- Community Discussions

The Naftiko Framework is the engine. The YAML capability file is the integration. Everything else is derived.