The conversation around enterprise AI has shifted. A year ago, the dominant question was “how do we get started?” Today, the question keeping senior executives up at night is “how do we stay in control?”

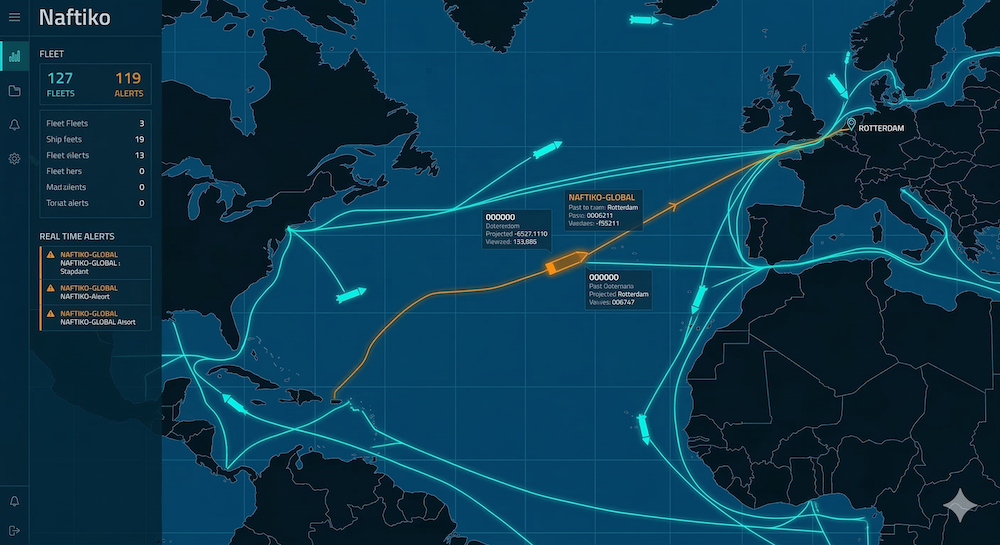

At Naftiko, we spend a lot of time talking to people who sit at the intersection of business and technology: advisors, operators, and architects who are helping large organizations navigate the leap into AI-powered workflows. A recent conversation with a consultant who has spent more than 30 years in professional services, focused on financial services clients across Europe and Asia, crystallized something we’ve been seeing in our own market. The enterprises that get AI right aren’t starting with the technology. They’re starting with governance.

Governance isn’t a checkbox. It’s the center of gravity.

For enterprise clients, particularly those in regulated industries like banking and wealth management, governance isn’t one item on a long requirements list. It’s the organizing principle around which everything else is structured.

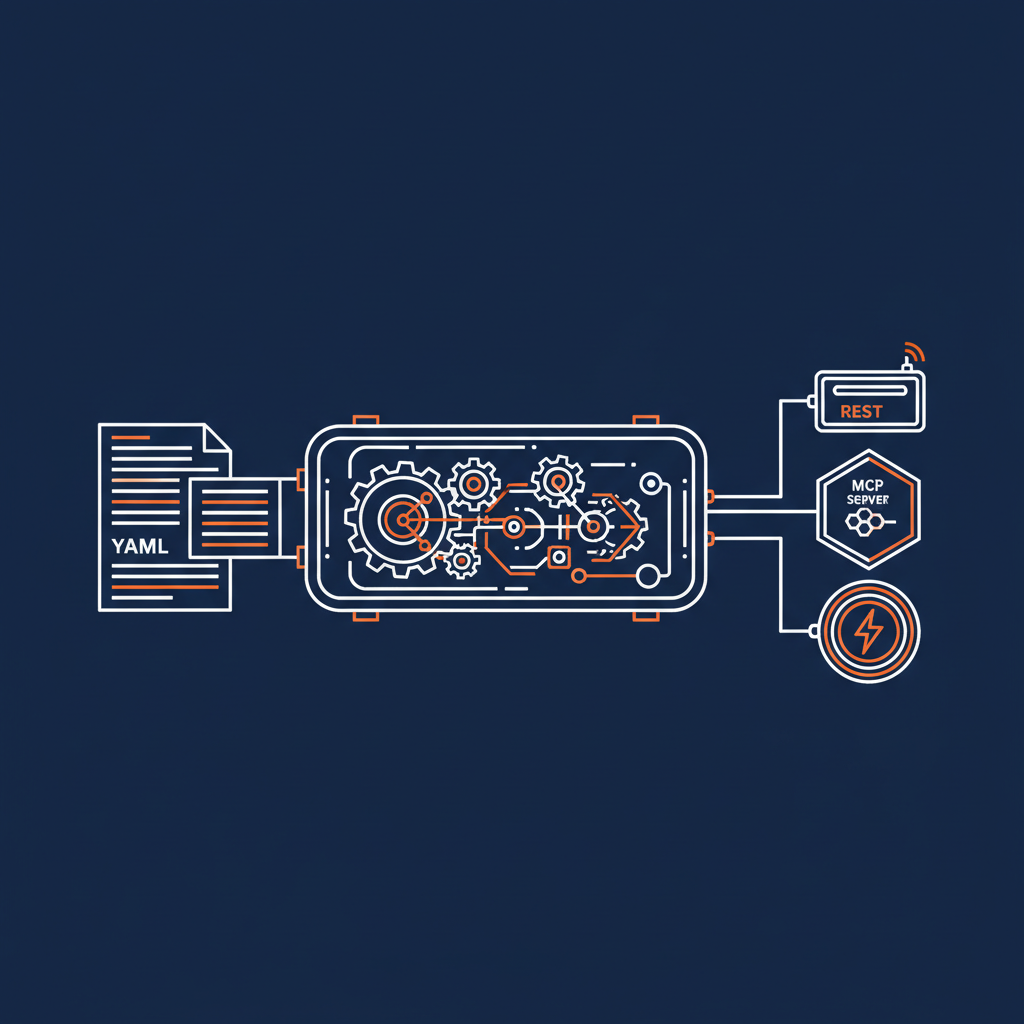

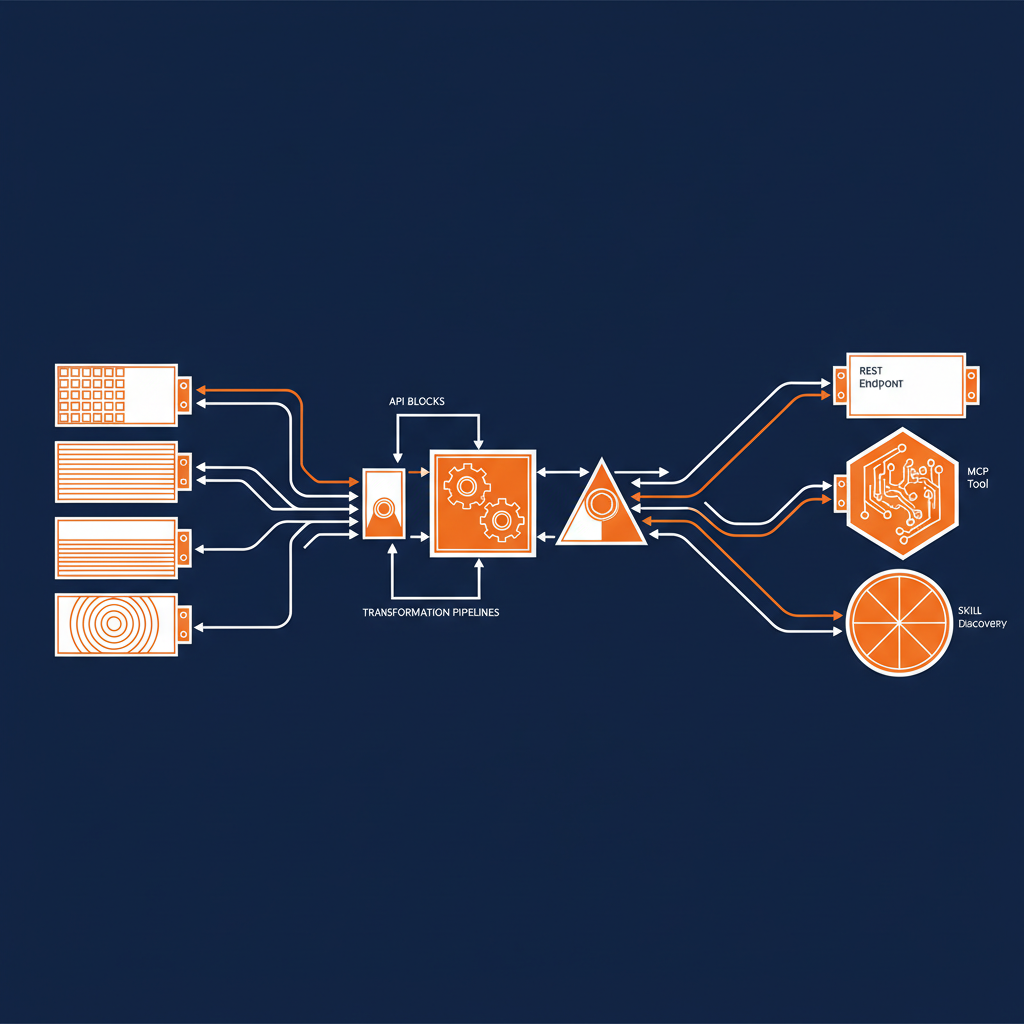

The most effective frameworks we’ve seen treat governance in layers. The inner layer covers technical guardrails — things like agent compute limits, spend caps, and resource constraints that keep autonomous systems operating within defined boundaries. The outer layer addresses the higher-stakes concerns: client data confidentiality, internet access policies, and regulatory compliance. Both layers have to work together, and both have to be visible to the people responsible for them.
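To make the two layers concrete, here is a minimal sketch in Python. Every class, field, and threshold below is a hypothetical name chosen for illustration, not part of any particular product. The inner layer is modeled as numeric limits, the outer layer as policy rules, and a single check reports every violation so the result can be shown to the people responsible.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TechnicalLimits:
    """Inner layer: resource boundaries for one agent run (hypothetical fields)."""
    max_compute_seconds: float
    max_spend_usd: float


@dataclass(frozen=True)
class PolicyRules:
    """Outer layer: data and access policy (hypothetical fields)."""
    allow_internet: bool
    allowed_data_classes: frozenset


@dataclass(frozen=True)
class AgentRequest:
    """What an agent is asking to do in a single run."""
    compute_seconds: float
    spend_usd: float
    needs_internet: bool
    data_class: str


def evaluate(request, limits, rules):
    """Return a list of violations; an empty list means the request is allowed."""
    violations = []
    # Inner layer: technical guardrails.
    if request.compute_seconds > limits.max_compute_seconds:
        violations.append("compute limit exceeded")
    if request.spend_usd > limits.max_spend_usd:
        violations.append("spend cap exceeded")
    # Outer layer: confidentiality and access policy.
    if request.needs_internet and not rules.allow_internet:
        violations.append("internet access not permitted")
    if request.data_class not in rules.allowed_data_classes:
        violations.append(f"data class '{request.data_class}' not permitted")
    return violations


if __name__ == "__main__":
    limits = TechnicalLimits(max_compute_seconds=60, max_spend_usd=5.0)
    rules = PolicyRules(allow_internet=False,
                        allowed_data_classes=frozenset({"public", "internal"}))
    request = AgentRequest(compute_seconds=90, spend_usd=1.0,
                           needs_internet=True, data_class="client-confidential")
    for violation in evaluate(request, limits, rules):
        print(violation)
```

Returning the full list of violations, rather than stopping at the first one, is a deliberate choice here: it keeps both layers visible in a single report.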

What this means for any team building AI products for enterprise: don’t treat governance as an afterthought or a sales objection to be managed. Come to the conversation having already thought it through. Enterprises aren’t looking for one-size-fits-all solutions here — they want to see that you’ve considered the problem seriously and can adapt your approach to their specific context.

Monitoring matters as much as building

Knowing you have guardrails in place is one thing. Being able to demonstrate that they’re working — in real time, in the client’s environment — is another.

The most compelling governance demonstrations involve rules-based dashboards with client-configurable inputs, deployed in sandbox environments (ideally on-premise or within the client’s own infrastructure). A practical example: a three-agent workflow handling something as sensitive as tax filing — one agent for data collection, one for processing, one for validation, all overseen by an agent manager, all operating within predefined guardrails. The power isn’t just in the automation. It’s in the auditability.
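The workflow described above can be sketched in a few lines of Python. This is an illustration under stated assumptions: the three agent functions are toy stand-ins (the calculation is not real tax logic), and `run_workflow`, `max_steps`, and the audit-entry fields are hypothetical names. The point is the shape: a manager runs each agent in order, enforces a guardrail, and records an audit entry for every step.

```python
from datetime import datetime, timezone


def collect(payload):
    # Stand-in for the data-collection agent.
    return {"income": payload["income"], "deductions": payload["deductions"]}


def process(data):
    # Stand-in for the processing agent: a toy calculation, not real tax logic.
    return {**data, "taxable": max(data["income"] - data["deductions"], 0)}


def validate(result):
    # Stand-in for the validation agent.
    if result["taxable"] > result["income"]:
        raise ValueError("taxable amount exceeds income")
    return result


def run_workflow(payload, max_steps=3):
    """Agent manager: run each agent in order and record an audit entry per step."""
    audit_log = []
    state = payload
    for step_number, agent in enumerate((collect, process, validate), start=1):
        timestamp = datetime.now(timezone.utc).isoformat()
        if step_number > max_steps:  # guardrail: hard cap on the number of steps
            audit_log.append({"agent": agent.__name__, "status": "blocked", "at": timestamp})
            break
        state = agent(state)
        audit_log.append({"agent": agent.__name__, "status": "ok", "at": timestamp})
    return state, audit_log


if __name__ == "__main__":
    result, log = run_workflow({"income": 1000, "deductions": 200})
    print(result)
    for entry in log:
        print(entry)
```

In a real deployment the audit log would feed the dashboard rather than being printed, but the principle is the same: every step leaves a record that someone can inspect later.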

Cloud-first is no longer the default

This trend would have surprised us more a couple of years ago, but it is accelerating faster than we expected: the geopolitical climate is fundamentally reshaping where enterprises want their data to live.

European organizations in particular are driving a significant trend toward data sovereignty — on-premise storage, in-region cloud deployments, and hard limits on where data can flow. Senior executives who previously would have accepted a standard cloud deployment without much pushback are now asking detailed questions about jurisdiction, vendor nationality, and what happens to their data under adverse regulatory scenarios.
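A hard limit on where data can flow can be expressed as a deny-by-default rule. The sketch below is a minimal illustration; the dataset names, region labels, and the `can_transfer` helper are all hypothetical.

```python
# Hypothetical residency policy: each dataset lists the only regions it may live in.
RESIDENCY_POLICY = {
    "client-records": {"eu-central", "on-prem-frankfurt"},
    "marketing-content": {"eu-central", "us-east"},
}


def can_transfer(dataset, destination_region, policy=RESIDENCY_POLICY):
    """Deny by default: a dataset with no policy entry may not move anywhere."""
    return destination_region in policy.get(dataset, set())


if __name__ == "__main__":
    print(can_transfer("client-records", "eu-central"))
    print(can_transfer("client-records", "us-east"))
```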

The practical implication is simple: if your go-to-market messaging is still built around cloud-first infrastructure, it’s not landing the same way it did 12 months ago. Flexibility isn’t a differentiator anymore — it’s a baseline requirement.

And underneath all of this sits a challenge that no amount of AI sophistication makes easy: legacy system integration. The time, cost, and organizational complexity of connecting modern AI workflows to decades-old infrastructure remain among the biggest friction points in enterprise adoption. It’s where a lot of well-intentioned AI projects stall.

What “AI-ready” actually means

At Naftiko, we’ve developed a 25+ point AI readiness scoring framework to help us think about which organizations are positioned to move quickly and which ones have foundational work to do first. The dimensions that matter most: data pipeline maturity, API coverage and quality, integration architecture, and operational instrumentation.
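As a rough illustration of how a scoring framework like this can work, the sketch below computes a weighted score over the four dimensions named above. It is not the actual framework: the 0 to 5 rating scale, the equal default weights, and the `readiness_score` function are assumptions made for the example.

```python
# The four dimensions named in the text; identifiers are illustrative.
DIMENSIONS = (
    "data_pipeline_maturity",
    "api_coverage_and_quality",
    "integration_architecture",
    "operational_instrumentation",
)


def readiness_score(ratings, weights=None):
    """Weighted average of 0-5 ratings per dimension, scaled to 0-100."""
    # Assumption: equal weights unless the caller supplies their own.
    weights = weights or {name: 1.0 for name in DIMENSIONS}
    missing = [name for name in DIMENSIONS if name not in ratings]
    if missing:
        raise ValueError(f"missing ratings: {missing}")
    total_weight = sum(weights[name] for name in DIMENSIONS)
    weighted = sum(ratings[name] * weights[name] for name in DIMENSIONS)
    return round(100 * weighted / (5 * total_weight), 1)


if __name__ == "__main__":
    example = {
        "data_pipeline_maturity": 4,
        "api_coverage_and_quality": 3,
        "integration_architecture": 2,
        "operational_instrumentation": 3,
    }
    print(readiness_score(example))  # prints 60.0
```

Custom weights let a team emphasize the dimension that matters most in a given engagement, for example weighting API coverage more heavily for an integration-heavy client.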

The organizations that score highest on this framework share a common trait: they invested in the plumbing before the AI conversation started. Internal connectivity — a unified data fabric, domain-specific data structures, clean API surfaces — meant that when AI capabilities became available, they didn’t need to rebuild. They just needed to add a secure layer on top of infrastructure that was already in place.

The lesson isn’t “you should have started three years ago” (though that helps). It’s that the technical foundations for AI readiness are largely the same foundations that good integration architecture has always required: clean data, well-governed APIs, and systems that can actually talk to each other. If you’re targeting enterprises with complex integration needs, that’s where the real conversation starts.

For the teams building AI products for enterprise

The window for “we’re figuring out governance as we go” has closed. Enterprise clients — especially in financial services, but increasingly across all regulated industries — are now sophisticated enough to probe your framework, test your sandbox, and push hard on data residency questions.

The good news: if you’ve built your product with governance, deployment flexibility, and integration complexity in mind, you’re not just checking boxes. You’re speaking the language your buyers have already started to use among themselves.

That’s where we’re focused at Naftiko. We’d love to hear how you’re thinking about these challenges — whether you’re building, buying, or somewhere in between.