Executive Summary

Enterprise organizations have made substantial investments in API gateway infrastructure. Apigee, MuleSoft Anypoint, Azure API Management, Kong, Tyk, NGINX, Ambassador, Apache APISIX — these platforms govern API traffic, enforce policy, manage developer access, and produce the authoritative record of what APIs exist and how they are used.

These investments are load-bearing. They are also increasingly insufficient on their own.

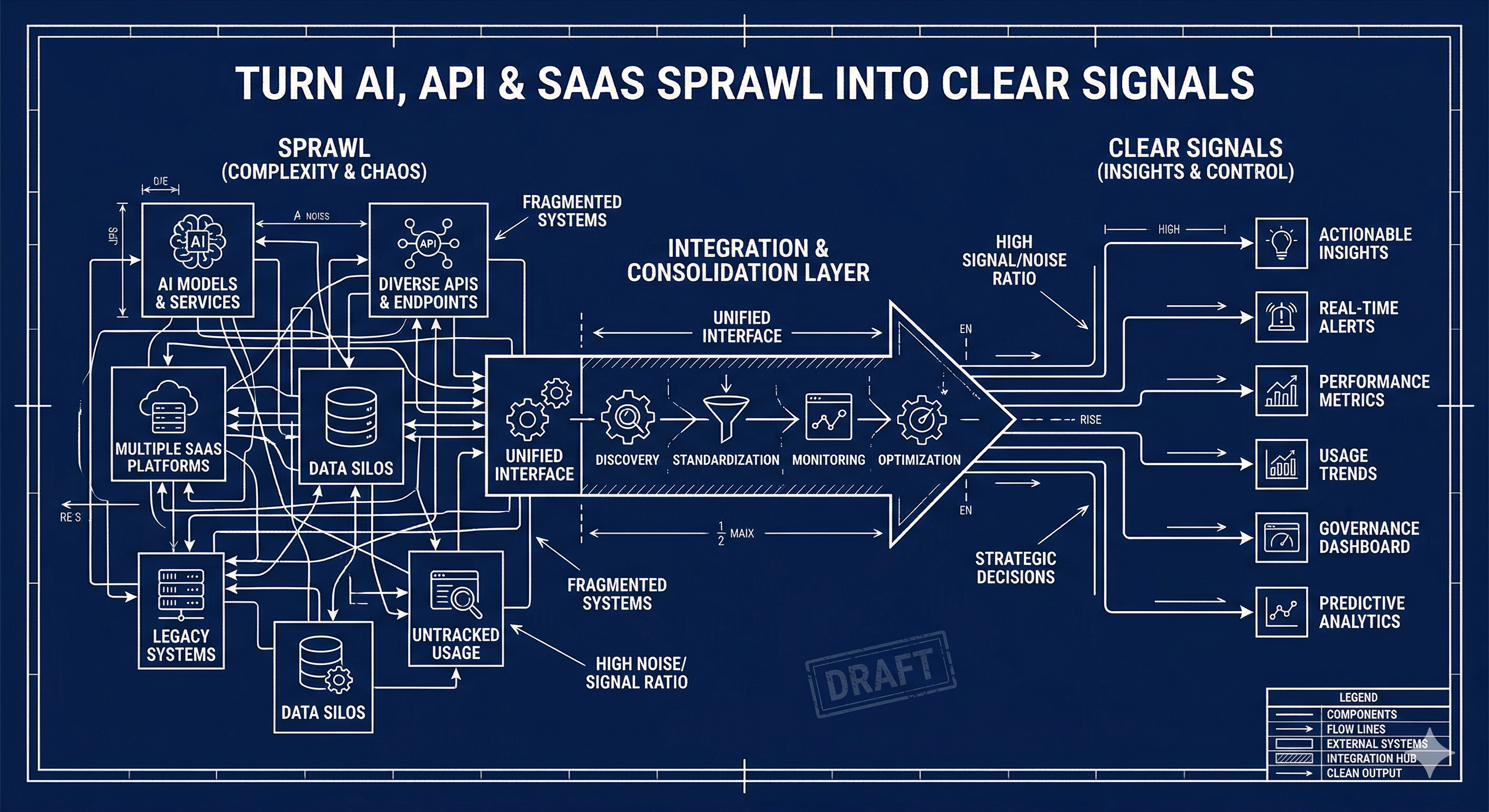

The primary new consumer of enterprise APIs is not a developer writing an integration. It is an AI agent — an autonomous reasoning system that needs to call APIs to produce business outcomes. AI agents do not read developer portals. They call tools. And the tools they need are not the same as the endpoints your gateway exposes.

The gap between your gateway investment and your AI strategy is the capability layer.

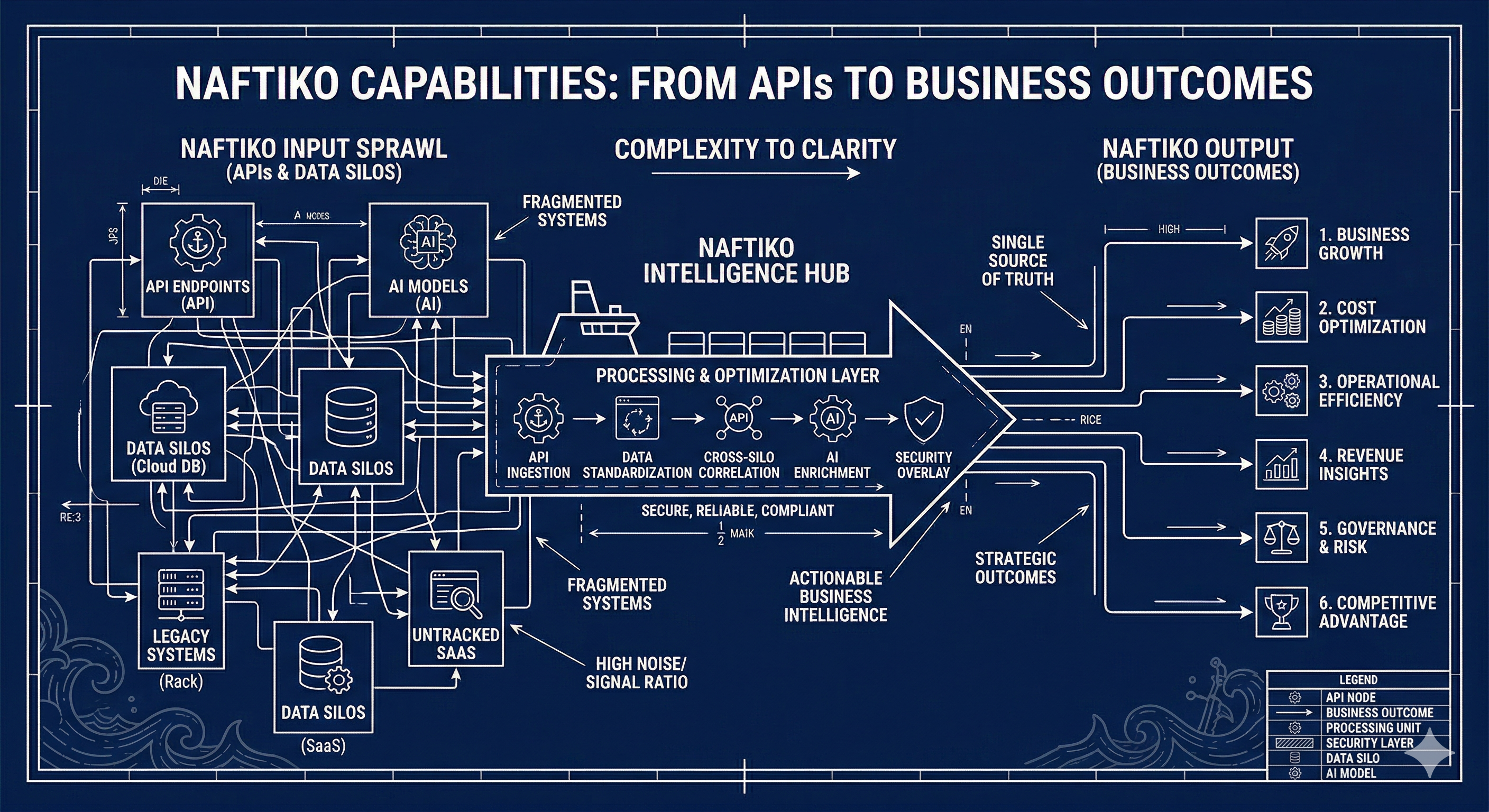

Naftiko capabilities bridge this gap. They are YAML specification files that declare what an integration consumes from upstream gateway management APIs and what it exposes to downstream AI agents as structured MCP tools and governed REST endpoints. The specification is the integration — no code generation, no custom server to maintain, no drift between documentation and behavior.

This white paper explains why the gateway layer alone is no longer sufficient, what the capability layer is and how it works, and how Naftiko’s open-source capability set for 15+ API gateways makes your existing infrastructure immediately AI-consumable.

The Problem: Gateways Were Built for Developers, Not for Agents

The Gateway’s Original Contract

API gateways were designed for a specific consumption model: a developer reads the documentation, writes code that calls the API, ships the integration, and the gateway governs the traffic. Developer portals, interactive testing consoles, and API catalogs were all built to serve the human developer in this loop.

This model worked. For a decade, the API gateway was the right answer for enterprise API infrastructure. The gateway made APIs governable — it enforced authentication, applied rate limits, logged traffic, and gave platform teams a single control plane for everything touching their APIs. The value was real and it remains real.

The Consumption Model Has Changed

AI agents do not follow this model. An agent begins with a goal and discovers available tools through structured, machine-readable declarations. When it needs to call a capability, it invokes a named tool with typed input parameters and expects a structured output it can reason about. The agent is not a developer — it does not browse, it does not experiment, it does not read prose documentation.

The requirements of an AI agent interacting with your API infrastructure are fundamentally different from those of a developer:

1. Discovery through tool definitions, not documentation pages.

2. Typed, described parameters, not informal documentation.

3. Structured, projected output, not raw API responses.

4. Authentication handled by the integration layer, not by the agent.

5. Governance and inspectability, not convention.

Your gateway is excellent at enforcing (3), (4), and (5) for developer traffic. It provides neither (1) nor (2) in a form AI agents can consume directly. That gap is not a criticism of the gateway; it is a statement about a new consumption context the gateway was not designed for.

What Agents Actually Need

When an AI agent discovers a tool, it reads a structured definition: the tool’s name, a natural-language description, the typed input parameters it accepts, and the shape of the output it returns. This is what MCP (Model Context Protocol) tool definitions provide. The agent reasons about which tool to call based on these declarations, constructs a well-typed call, and incorporates the structured response into its reasoning chain.
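As a rough illustration of the structured definition described above, the sketch below builds an MCP-style tool definition as a plain Python dictionary. The `name`, `description`, `inputSchema`, and `annotations` fields follow the general shape of MCP tool definitions; the `size` parameter and the `describe` helper are hypothetical and exist only for this example.

```python
import json

# Hypothetical tool definition in the shape an MCP client receives when it
# lists tools. The "size" parameter is illustrative, not a documented input.
list_services_tool = {
    "name": "list-services",
    "description": "List all upstream services configured in Kong.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "size": {
                "type": "integer",
                "description": "Maximum number of services to return.",
            }
        },
        "required": [],
    },
    "annotations": {"readOnlyHint": True},
}


def describe(tool: dict) -> str:
    """Render a one-line summary an agent could use for tool selection."""
    params = ", ".join(
        f"{name}: {schema['type']}"
        for name, schema in tool["inputSchema"]["properties"].items()
    )
    return f"{tool['name']}({params}) - {tool['description']}"


print(describe(list_services_tool))
print(json.dumps(list_services_tool["inputSchema"], indent=2))
```

Everything the agent needs to select and call the tool is in this one structure: a name, a description, and a typed input schema.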

None of that comes from your gateway’s management API as-is. The Apigee Management API, the Kong Admin API, the Tyk Dashboard API — these are comprehensive, well-designed REST APIs. But they return raw JSON envelopes with pagination wrappers, internal IDs, and fields no agent needs in its context window. They have no tool descriptions. They have no typed parameter schemas surfaced in a form an agent’s tool-use system can read directly.

The capability layer transforms these raw management APIs into governed, agent-ready tool surfaces.

The Glue Code Problem

When enterprise teams start building AI workflows on top of their gateway infrastructure, the typical response is to write custom MCP server code. These servers are written by whoever had the most time, documented in READMEs nobody reads, and completely invisible to governance teams.

Six months later, the organization has a dozen of these servers. Authentication is scattered — some servers have credentials hardcoded, some read from environment variables in undocumented ways, some require the agent to pass tokens directly. Output schemas are inconsistent between servers. There is no specification, no version history, and no way to audit what any of these servers actually do without reading the source.

This is the glue code problem reproduced at the AI layer. It is the same pattern that motivated API governance in the first place — undisciplined integrations multiplying until nobody understands the full surface. Naftiko extends the governed posture of your API program to AI tool consumption.

What the Capability Layer Is

Specification as Integration

A Naftiko capability is a YAML file that declares two things: what the integration consumes from upstream APIs and what it exposes to downstream consumers. The Naftiko engine reads this specification at runtime and serves live integrations — no code generation, no compiled artifact, no drift between specification and behavior.

This is Spec-Driven Integration: the specification is the integration.

    naftiko: "1.0.0-alpha1"
    info:
      name: Kong API Gateway Management
      description: Configure services, routes, plugins, and consumers on Kong Gateway
    binds:
      - namespace: env
        keys:
          KONG_ADMIN_URL: KONG_ADMIN_URL
    capability:
      consumes:
        - import: kong-admin
          location: ./shared/kong-admin.yaml
      exposes:
        - type: mcp
          port: 9080
          namespace: kong-gateway-mcp
          description: "MCP tools for Kong Gateway management"
          tools:
            - name: list-services
              description: "List all upstream services configured in Kong."
              hints:
                readOnly: true
              call: "kong-admin.list-services"
              outputParameters:
                - type: object
                  mapping: "$."

The consumes block declares the upstream API surface the capability draws from — in this case, a shared Kong Admin API definition that describes available operations. The exposes block declares what downstream consumers see: an MCP server on port 9080 with a named tool, a description the agent uses for tool selection, operation hints for governance enforcement, and an output projection controlling what crosses into the agent’s context.

There is no server to write. There is no build step. The YAML is deployed to the Naftiko engine and immediately serves live traffic.

The Shared Definition Pattern

For gateways with complex management surfaces, capabilities are organized into two layers. Shared definitions declare the upstream API surface — the base URL, authentication mechanism, available resources, and operations. Workflow capabilities import one or more shared definitions and compose targeted tool sets for specific personas and use cases.

This pattern means the gateway surface is declared once and composed flexibly. A platform engineering team might build a read-only observability capability that imports the shared Kong definition and exposes only list-services, list-routes, and list-plugins. A deployment automation workflow might import the same shared definition and expose full CRUD operations. The shared definition is the authoritative source; the workflow capability is the access policy.
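The "declared once, composed flexibly" idea can be sketched in a few lines of Python. This is not how the Naftiko engine is implemented; it only models a shared definition as a catalog of operations and a workflow capability as a named subset of it. The catalog contents and the `compose` helper are assumptions made for illustration.

```python
# Hypothetical operation catalog standing in for a shared Kong definition.
SHARED_KONG_OPERATIONS = {
    "list-services": {"method": "GET", "path": "/services"},
    "list-routes": {"method": "GET", "path": "/routes"},
    "list-plugins": {"method": "GET", "path": "/plugins"},
    "create-service": {"method": "POST", "path": "/services"},
    "delete-service": {"method": "DELETE", "path": "/services/{id}"},
}


def compose(shared: dict, exposed: list[str]) -> dict:
    """Return only the operations a workflow capability chooses to expose."""
    unknown = [name for name in exposed if name not in shared]
    if unknown:
        # A workflow capability may only reference declared operations.
        raise KeyError(f"not declared in the shared definition: {unknown}")
    return {name: shared[name] for name in exposed}


# Read-only observability persona: three list operations only.
observability = compose(
    SHARED_KONG_OPERATIONS, ["list-services", "list-routes", "list-plugins"]
)

# Deployment automation persona: the full catalog.
automation = compose(SHARED_KONG_OPERATIONS, list(SHARED_KONG_OPERATIONS))

print(sorted(observability))
print(sorted(automation))
```

Both personas draw from the same catalog, so a change to the upstream surface is made in one place while each tool subset stays an explicit, reviewable list.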

Output Projection

Every MCP tool declares an outputParameters block controlling what crosses the boundary into the agent’s context. This projection is the last structural control point before data reaches an LLM.

Raw gateway management API responses are designed for programmatic consumption, not LLM context windows. A Kong GET /services response returns pagination envelopes, internal UUIDs, millisecond timestamps, and dozens of fields most agents have no use for. Passing that full response into an agent’s context wastes tokens, introduces noise, and increases the risk of the agent reasoning about irrelevant fields.

The outputParameters mapping uses JSONPath to select and reshape what the tool returns. A mapping: "$.data[*].name" projection returns only service names. A mapping: "$." passes the full response for cases where the agent genuinely needs it. The capability author, not the gateway, controls the agent’s view of the data.
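To make the effect of a projection concrete, here is a minimal Python sketch that applies the two mappings mentioned above to a Kong-like response. The `project` function is a hand-written stand-in that handles only a small subset of JSONPath (`$.`, dotted keys, and `[*]`); it is not the engine's evaluator, and the sample response is invented for the example.

```python
def project(document, mapping: str):
    """Apply a tiny subset of JSONPath: "$.", "$.a.b", and "$.a[*].b"."""
    if mapping in ("$", "$."):
        return document
    if not mapping.startswith("$."):
        raise ValueError(f"unsupported mapping: {mapping}")
    nodes = [document]
    fanned_out = False
    for segment in mapping[2:].split("."):
        wildcard = segment.endswith("[*]")
        key = segment[:-3] if wildcard else segment
        nodes = [node[key] for node in nodes]
        if wildcard:
            # Flatten each selected array into individual elements.
            nodes = [item for node in nodes for item in node]
            fanned_out = True
    return nodes if fanned_out else nodes[0]


# Illustrative response in the general shape of a paginated list call.
raw_response = {
    "data": [
        {"id": "a1", "name": "billing-api", "host": "billing.internal",
         "port": 8080, "protocol": "http", "created_at": 1700000000},
        {"id": "b2", "name": "orders-api", "host": "orders.internal",
         "port": 8080, "protocol": "http", "created_at": 1700000500},
    ],
    "next": None,
}

print(project(raw_response, "$.data[*].name"))  # ['billing-api', 'orders-api']
print(project(raw_response, "$.") is raw_response)  # True
```

The first projection hands the agent two short strings instead of the full envelope, which is the token and noise reduction the paragraph above describes.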

Gateway Capabilities in the Naftiko Fleet

The Naftiko Fleet covers 15 platforms across every major category of API gateway deployment.

Enterprise API Management

Google Apigee — Five shared definitions covering the full Apigee surface (API Management, API Hub, APIM, Registry, Integrations) and three workflow capabilities for specification management, developer portal administration, and analytics/traffic observability. 25+ MCP tools. The Apigee capability set is the largest in the fleet, reflecting the breadth of the Apigee platform surface.

MuleSoft Anypoint Platform — Organizational governance and CloudHub application lifecycle. Deploy, monitor, update, and undeploy applications. The get-application-status tool is particularly useful in AI workflows that monitor deployment pipelines — an agent can detect a failed deployment and trigger a redeployment without human coordination.

Azure API Management — Four workflow capabilities including AI Gateway Management for Azure OpenAI integration, semantic caching, and token-based rate limiting alongside full API lifecycle, developer onboarding, and gateway operations. The AI Gateway capability makes Azure API Management’s OpenAI routing features accessible to AI agents managing AI workloads — a capability-on-capability deployment pattern.

APIIDA — Federated management across multiple gateways from a single control plane. Validate specifications, deploy to multiple gateway targets simultaneously, retrieve aggregated cross-gateway metrics. Naftiko capabilities over APIIDA enable AI-assisted multi-gateway governance.

Axway API Management — Four capability files covering application analytics, identity and access, SAML/OIDC identity provider management, and organization management. The most comprehensive identity federation capability set in the fleet, making Axway’s strong identity governance features available to AI agents.

Open-Source API Gateways

Kong Gateway — Full CRUD for services, routes, consumers, plugins, and upstreams. 26 MCP tools. The list-plugins + list-services combination enables AI agents to audit full plugin coverage across services — useful for compliance workflows that need to verify rate limiting or authentication plugins are applied everywhere.

Tyk — API Management and Platform Administration capabilities. 27 tools including export-system / import-system for environment migration automation. An AI agent can export a full Tyk configuration, validate it, and import it to a new environment as part of a governed promotion pipeline. The most complete open-source gateway capability set in the fleet.

Ambassador Edge Stack — 26 MCP tools covering all Ambassador configuration resources: mappings, hosts, TLS contexts, rate limits, and modules. Complete Kubernetes ingress management through MCP tooling, enabling AI agents to manage Kubernetes-native API routing configuration.

Apache APISIX — Route, upstream, and consumer management for the APISIX Admin API. The APISIX capability covers the core configuration surface for the high-performance open-source gateway.
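The plugin-coverage audit mentioned in the Kong entry above can be sketched as follows. The function assumes the outputs of list-services and list-plugins keep Kong's `id`, `name`, `enabled`, `service`, `route`, and `consumer` fields after projection; the sample data and the `services_missing_plugin` helper are hypothetical.

```python
def services_missing_plugin(services, plugins, plugin_name):
    """Return the names of services not covered by an enabled plugin."""
    active = [
        p for p in plugins
        if p["name"] == plugin_name and p.get("enabled", True)
    ]
    # A plugin with no service, route, or consumer scope applies globally.
    if any(
        not (p.get("service") or p.get("route") or p.get("consumer"))
        for p in active
    ):
        return []
    # Route- or consumer-scoped plugins are treated as not covering a
    # whole service, which is the conservative reading for an audit.
    covered_ids = {
        p["service"]["id"]
        for p in active
        if p.get("service") and not p.get("route") and not p.get("consumer")
    }
    return sorted(s["name"] for s in services if s["id"] not in covered_ids)


services = [
    {"id": "s1", "name": "billing-api"},
    {"id": "s2", "name": "orders-api"},
    {"id": "s3", "name": "search-api"},
]
plugins = [
    {"name": "rate-limiting", "enabled": True,
     "service": {"id": "s1"}, "route": None, "consumer": None},
    {"name": "key-auth", "enabled": True,
     "service": {"id": "s2"}, "route": None, "consumer": None},
    {"name": "rate-limiting", "enabled": False,
     "service": {"id": "s3"}, "route": None, "consumer": None},
]

print(services_missing_plugin(services, plugins, "rate-limiting"))
# ['orders-api', 'search-api']
```

An agent running this kind of check needs only two read-only tools, so the audit can run under a capability that exposes no write operations at all.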

Infrastructure Gateways

NGINX — Monitoring and Observability + Traffic Management. Two complementary capabilities: real-time metrics across connections, requests, server zones, caches, and upstreams; and dynamic upstream server manipulation without a configuration reload. An AI agent can poll upstream health, identify overloaded servers, and add capacity dynamically.

Amazon API Gateway — REST API discovery and creation for the AWS-managed gateway service. The Amazon capability covers the core REST API lifecycle for teams managing AWS-native API infrastructure.

AI-Native Gateways

Bifrost — Chat completion routing, streaming, and health tools for the multi-provider AI gateway. Naftiko capabilities over Bifrost create a fully governed AI capability surface — an AI agent can call Bifrost tools to route requests to different model providers, check provider health, and manage routing configuration, all through the same governed capability pattern.

AIMLAPI — 400+ model access gateway. Chat completions, image generation, embeddings, and model catalog tools with governed, credential-isolated access. The AIMLAPI capability makes a large model catalog accessible to AI workflows without exposing API keys directly to agents.

Policy and Monitoring Gateways

Apinizer — Gateway, endpoint, policy, and metrics management. The list-endpoints + list-policies + get-metrics combination enables AI-driven policy compliance auditing: an agent can identify endpoints that lack required policies and surface compliance gaps without human review of every endpoint.

APIPark — Open-source AI developer platform. Service management, AI model catalog, team management, and subscriber management. The APIPark capability set covers the full platform surface for teams using APIPark to govern AI service consumption.

The Governance Case for Capabilities

Specification as the Governance Artifact

When the capability YAML is the integration, the same governance practices that apply to API specifications apply to integrations. A capability lives in version control. It has a pull request history. Changes are reviewed before deployment. CI pipelines can validate the YAML against the Naftiko schema before any integration reaches production.

This is fundamentally different from the governance posture of a custom MCP server. A Node.js server in a repo with a single contributor and no review history is invisible to governance teams. A YAML file in a governed repository — with the same review gates, the same catalog registration, the same version control discipline as your OpenAPI specifications — is auditable.

Capability specifications can be registered in APIs.json catalogs, making the full integration surface discoverable by tooling. They can be forked and composed: a base capability for a gateway’s management API, specialized variants for different teams with different tool subsets and different output projections. The fork history is governance history.

The Hints System

Every MCP tool declares operation hints — readOnly, destructive, idempotent. These are machine-readable policy signals embedded in the specification.

    - name: delete-application
      hints:
        readOnly: false
        destructive: true
        idempotent: true

Orchestration layers can enforce policies against these hints without reading tool implementations. An orchestrator can restrict a class of agents to tools where readOnly: true. It can require human approval before invoking any tool where destructive: true. It can log all invocations of non-idempotent operations for audit trails.

These policies are enforced structurally — by reading the hints in the specification — not conventionally, by trusting that tool authors followed naming patterns or documentation conventions. The hint is the machine-readable contract.
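A minimal sketch of how an orchestration layer might act on these hints is shown below. The three rules mirror the ones described above; the `Decision` enum, the function names, and the conservative defaults for missing hints are assumptions of this example, not part of the Naftiko specification.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


def decide(hints: dict, agent_is_read_only: bool) -> Decision:
    """Map a tool's declared hints to an orchestration decision."""
    read_only = hints.get("readOnly", False)
    # Assumption: a tool that does not declare "destructive" is treated
    # as destructive unless it is explicitly read-only.
    destructive = hints.get("destructive", not read_only)
    if agent_is_read_only and not read_only:
        return Decision.DENY
    if destructive:
        return Decision.REQUIRE_APPROVAL
    return Decision.ALLOW


def needs_audit_log(hints: dict) -> bool:
    """Log every invocation that is not declared idempotent."""
    return not hints.get("idempotent", False)


delete_application = {"readOnly": False, "destructive": True, "idempotent": True}
list_services = {"readOnly": True}

print(decide(list_services, agent_is_read_only=True))        # Decision.ALLOW
print(decide(delete_application, agent_is_read_only=True))   # Decision.DENY
print(decide(delete_application, agent_is_read_only=False))  # Decision.REQUIRE_APPROVAL
```

The policy code never inspects what a tool does. It reads only the declared hints, which is what makes the enforcement structural rather than conventional.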

Authentication Isolation

Gateway management credentials (Google access tokens, Kong Admin tokens, MuleSoft OAuth tokens, Tyk Dashboard API keys) are declared in the binds block and injected from environment variables at runtime.

    binds:
      - namespace: env
        keys:
          KONG_ADMIN_TOKEN: KONG_ADMIN_TOKEN

The agent never sees the credential. It calls a tool by name; the Naftiko engine injects the credential into the upstream request. The separation is structural. There is no way for an agent to extract the credential from the tool call — the credential is never in the agent’s context.

This matters for enterprise deployments where gateway management credentials have broad privilege. A credential that can create or delete APIs on your production Apigee organization should never be accessible to the agent reasoning about which API to manage. The binds pattern enforces that boundary at the specification level.
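The boundary can be pictured with a short Python sketch. It is a conceptual model rather than the engine's actual code: the agent's tool call carries only a name and arguments, and the credential is attached on the engine side when the upstream request is built. The route table, the `Kong-Admin-Token` header choice, and the example URL and token are assumptions for illustration.

```python
import json

# Hypothetical mapping from tool names to upstream operations.
UPSTREAM_ROUTES = {"list-services": ("GET", "/services")}


def build_upstream_request(tool_call: dict, env: dict) -> dict:
    """Build the upstream request, injecting the credential engine-side."""
    method, path = UPSTREAM_ROUTES[tool_call["name"]]
    return {
        "method": method,
        "url": env["KONG_ADMIN_URL"].rstrip("/") + path,
        # The header name is an assumption; it depends on the deployment.
        "headers": {"Kong-Admin-Token": env["KONG_ADMIN_TOKEN"]},
    }


# In a real deployment these values would come from os.environ.
env = {
    "KONG_ADMIN_URL": "http://kong.example.internal:8001/",
    "KONG_ADMIN_TOKEN": "example-token",
}

# What the agent sends: a tool name and arguments, nothing else.
tool_call = {"name": "list-services", "arguments": {}}

request = build_upstream_request(tool_call, env)
print(request["method"], request["url"])
print("example-token" in json.dumps(tool_call))  # False
```

The token appears only in the upstream request. Nothing in the agent's tool call, and nothing returned to the agent through a projected output, needs to contain it.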

Deployment

Every gateway capability in the fleet runs the same way:

    docker pull ghcr.io/naftiko/framework:latest

    docker run -p 9080:9080 \
      -v ./capabilities/api-gateway-management.yaml:/app/capability.yaml \
      --env-file .env \
      ghcr.io/naftiko/framework:latest /app/capability.yaml

Set gateway credentials in .env, point the volume mount at the capability file, and run the container. MCP tools are available immediately on the port declared in the capability, which the -p flag must publish. The REST endpoint (if the capability exposes one) is available on its own declared port.

Multiple capabilities can run in a single Docker Compose stack, each on its own port:

    services:
      kong-management:
        image: ghcr.io/naftiko/framework:latest
        command: /app/capability.yaml
        ports:
          - "9080:9080"
        volumes:
          - ./capabilities/kong/api-gateway-management.yaml:/app/capability.yaml
        env_file: .env
      tyk-management:
        image: ghcr.io/naftiko/framework:latest
        command: /app/capability.yaml
        ports:
          - "9081:9081"
        volumes:
          - ./capabilities/tyk/api-management.yaml:/app/capability.yaml
        env_file: .env

Each capability is isolated: separate container, separate port, separate credential scope. An AI agent connected to port 9080 has Kong tools; an agent connected to port 9081 has Tyk tools. The gateway-to-capability mapping is explicit and auditable in the Compose file.

Conclusion

The API gateway investment your organization has made is the foundation your AI strategy should build on. The capability layer does not replace the gateway — it extends the gateway’s reach into the AI consumption model. Where the gateway governs what developers can access, the capability layer governs what AI agents can call.

The capability layer answers the same questions that motivated the gateway investment in the first place: who can call what, under what authentication, with what output, subject to what governance. The gateway answered those questions for developer traffic. The capability layer answers them for agent traffic. Together, they cover the full consumption surface of your API program.

Organizations that build this governed capability layer now will have a structural advantage over those that let it grow as undocumented integration code. The glue code problem is predictable — it happened with integration middleware, it happened with microservices, and it is happening now at the AI tool layer. The organizations that governed their integration surfaces early came out ahead. The same pattern holds here.

The gateway is not enough. The capability layer is what makes it AI-ready.