Executive Summary

Enterprise AI adoption is no longer a question of if but how deeply and how strategically. Yet most organizations lack visibility into how their peers are investing, what technology bets are compounding, and which patterns separate leaders from followers.

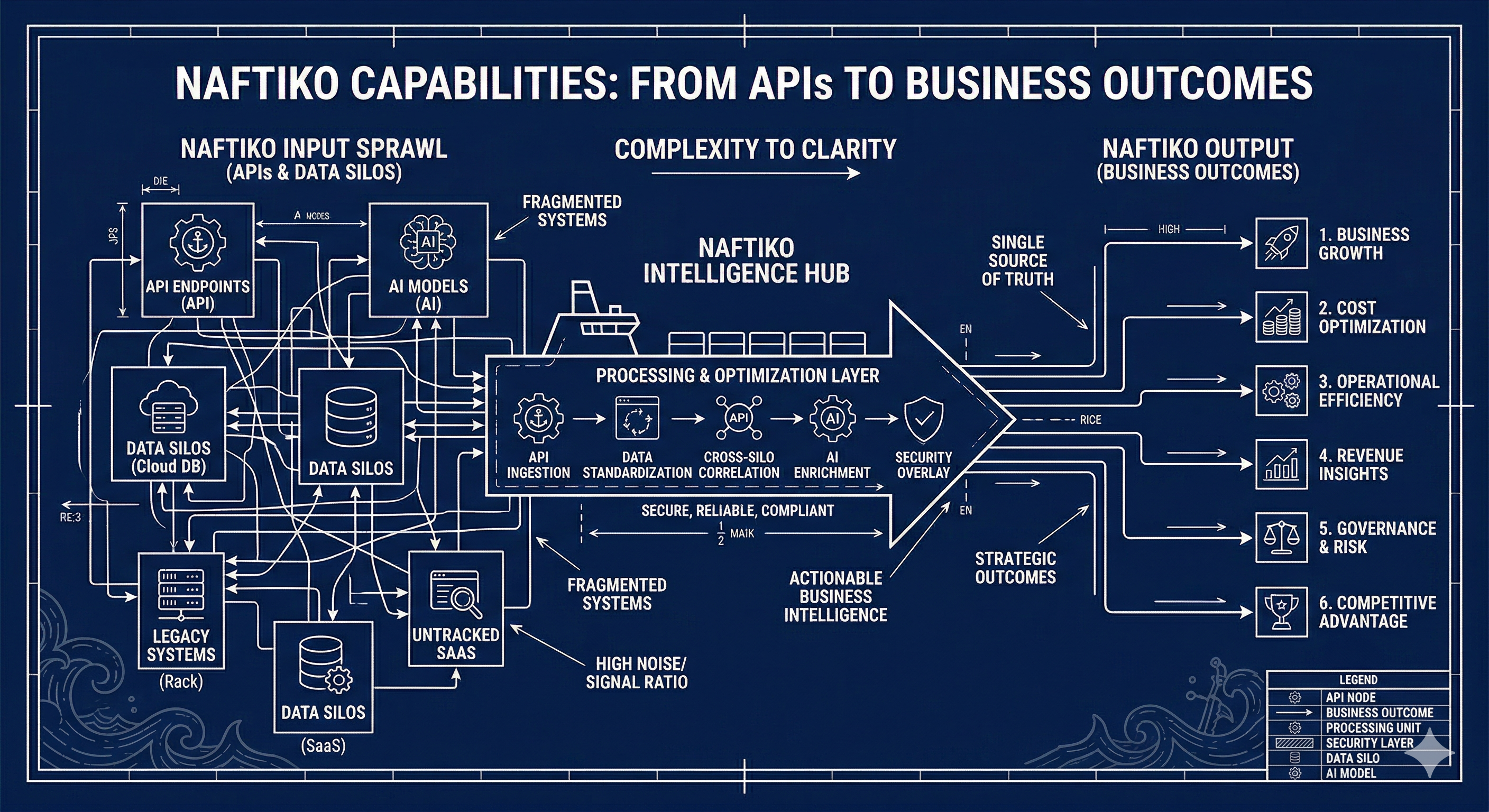

Naftiko Signals is an enterprise technology intelligence platform that tracks investment patterns across 30+ industries and 315+ companies to surface early signals about where AI and technology strategy is actually heading. By measuring 37 distinct signal dimensions and mapping them against recurring waves of AI adoption, Naftiko Signals provides the intelligence enterprises need to manage cost, velocity, and risk while enabling AI innovation.

Manage: Cost + Velocity + Risk = Enable: AI Innovation

This whitepaper explains what Naftiko Signals tracks, how the scoring and analysis system works, what patterns have emerged across enterprise technology portfolios, and how organizations can use these signals to inform their own strategic decisions.

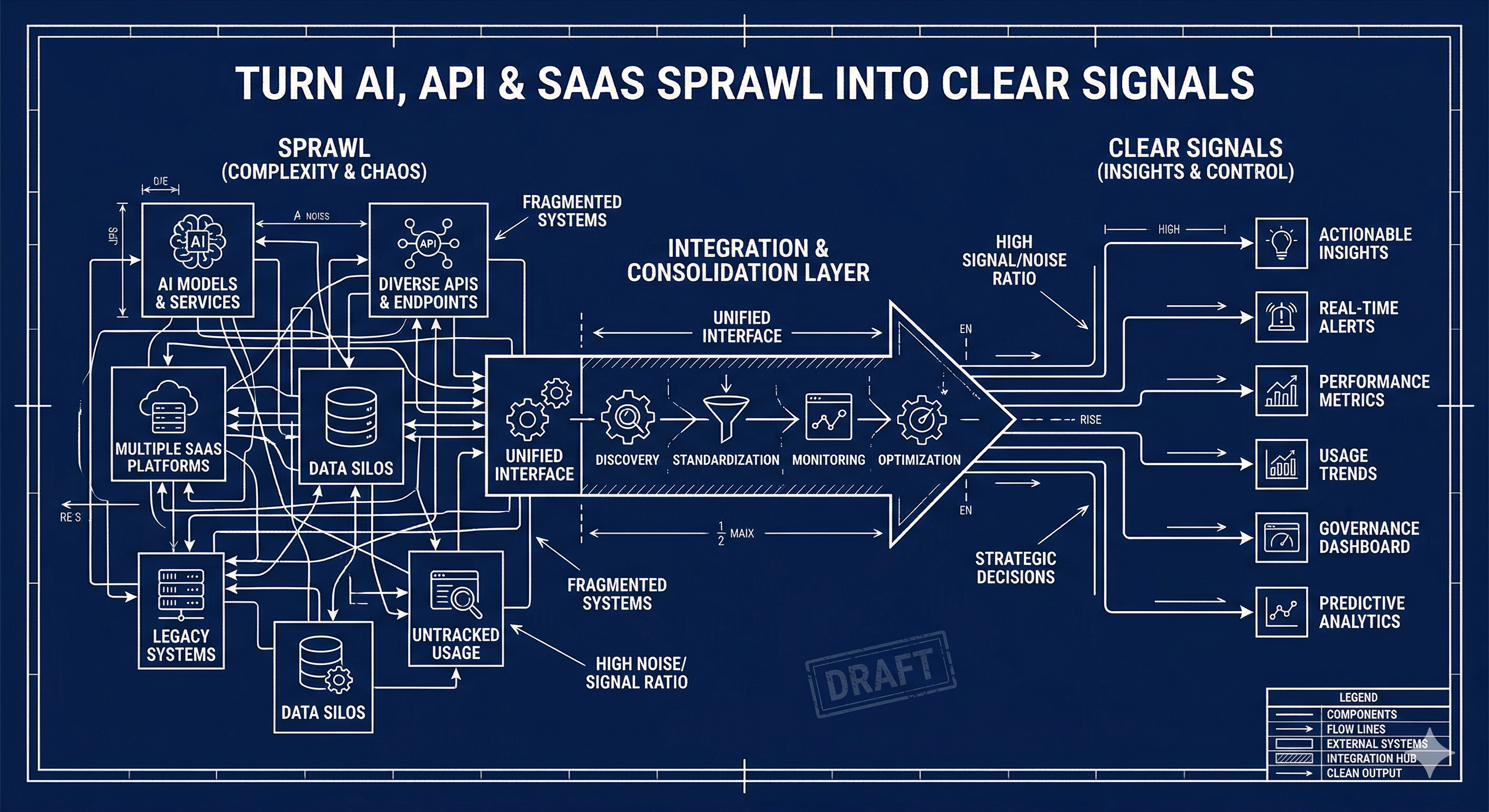

The Problem: Blind Spots in Enterprise AI Strategy

Enterprises face a compounding challenge. AI technology is evolving faster than organizational strategy can adapt. New model architectures, infrastructure patterns, governance frameworks, and integration protocols emerge monthly. Meanwhile, technology leaders must make investment decisions that will compound over years.

The result is a set of persistent blind spots:

- What are peers actually investing in? Conference keynotes and vendor marketing obscure what enterprises are doing in practice. Job postings, open-source contributions, SaaS adoption, and standards participation tell a different story than press releases.

- Which technology bets are compounding? Some investments build on each other. Cloud infrastructure enables containerization, which enables platform engineering, which enables AI deployment at scale. Understanding these dependencies changes how you sequence investments.

- Where is the industry converging? When 200+ enterprises independently adopt the same tools, standards, and patterns, that convergence is a signal. It reveals where interoperability will matter and where proprietary bets carry increasing risk.

- How do you benchmark readiness? Without a common framework for measuring AI maturity across dimensions like governance, FinOps, provider strategy, and developer experience, organizations cannot assess where they lead and where they lag.

Naftiko Signals was built to close these gaps.
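The dependency chain in the second blind spot (cloud infrastructure, then containerization, then platform engineering, then AI deployment at scale) can be treated as a small dependency graph. The following is a minimal sketch, not part of Naftiko Signals itself: the `DEPENDS_ON` mapping and the `investment_sequence` helper are hypothetical names, and the edges are only the ones named in the text.

```python
from graphlib import TopologicalSorter

# Each key depends on the investments in its set. The edges mirror the
# cloud -> containers -> platform engineering -> AI example in the text.
DEPENDS_ON = {
    "containerization": {"cloud infrastructure"},
    "platform engineering": {"containerization"},
    "AI deployment at scale": {"platform engineering"},
}


def investment_sequence(depends_on):
    """Return investments in an order where prerequisites come first."""
    return list(TopologicalSorter(depends_on).static_order())


if __name__ == "__main__":
    for step, name in enumerate(investment_sequence(DEPENDS_ON), start=1):
        print(step, name)
```

A real portfolio would have branching dependencies rather than a single chain, in which case several valid orderings exist and the sorter returns one of them.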

What We Track

For each company, Naftiko Signals profiles investment across four primary dimensions to understand the depth and direction of their technology strategy.

Top Areas of Technology

The technical domains a company actively invests in, from AI/ML and APIs to cloud infrastructure and security. We track 2,000+ technology areas and skills, measuring adoption counts across companies to identify where investment is concentrating and where it is diversifying.
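As an illustration of what "adoption counts across companies" could look like in practice, here is a minimal sketch. The company names and area assignments in `PROFILES` are made up for the example, and `adoption_counts` is a hypothetical helper, not a documented Naftiko function.

```python
from collections import Counter

# Hypothetical company -> technology areas mapping, for illustration only.
PROFILES = {
    "company_a": {"AI/ML", "APIs", "cloud infrastructure"},
    "company_b": {"AI/ML", "security"},
    "company_c": {"AI/ML", "APIs"},
}


def adoption_counts(profiles):
    """Count how many companies list each technology area."""
    counts = Counter()
    for areas in profiles.values():
        counts.update(areas)  # sets, so each area counts once per company
    return counts


counts = adoption_counts(PROFILES)
print(counts.most_common(2))
```

Areas with high counts point to where investment is concentrating; a long tail of low counts points to where it is diversifying.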

Top SaaS Portfolio

The services and platforms a company buys, builds, or integrates, revealing where they rely on the ecosystem versus building in-house. We catalog 500+ platforms across segments including API gateways, iPaaS, embedded iPaaS, SaaS management, service mesh, unified APIs, SDKs, and API clients.

Top Standards Participation

Engagement with open standards and specifications, including OpenAPI, AsyncAPI, JSON Schema, GraphQL, gRPC, and emerging AI-era specifications like MCP (Model Context Protocol) and A2A. Standards participation is a strong signal of interoperability commitment and long-term platform thinking.

Top Tooling

The developer tools, frameworks, and open-source projects a company adopts or contributes to, spanning 600+ tools across programming languages, databases, CI/CD, observability, and AI/ML frameworks. Tooling choices reveal engineering culture, build philosophy, and operational maturity.

The 37 Signals

Naftiko Signals measures enterprise investment across 37 distinct dimensions, organized into seven categories. Each signal is scored per company based on observable indicators including job postings, technology adoption, open-source activity, standards participation, and SaaS portfolio composition.

Infrastructure and Foundation

| Signal | What It Measures |
|---|---|
| Artificial Intelligence | AI investment depth from ChatGPT usage to MCP to agentic automation |
| Cloud | Cloud investment including multi-cloud strategy and business-side management |
| Open-Source | Open-source usage, contribution, and inner source investment |
| Languages | Programming language diversity and relationship to services and tooling |
| Code | Libraries, frameworks, and SDKs in use across engineering teams |
| Data | Data team strength and focus areas, from access and quality to governance |
| Databases | Database platforms and tooling providing data access across teams |
| Virtualization | API mocking, synthetic data, and resource virtualization approaches |
| Containers | Docker and Kubernetes adoption and cloud-native platform maturity |

Architecture and Integration

| Signal | What It Measures |
|---|---|
| Specifications | Standards in use including OpenAPI, AsyncAPI, JSON Schema, MCP, A2A |
| APIs | Overall API investment from API-first design to full lifecycle management |
| Integrations | iPaaS, embedded iPaaS, ETL, batch, and other integration approaches |
| Event-Driven | Event-driven architecture investment and async API adoption |
| Services | Full SaaS portfolio breadth including infrastructure, platform, and business tiers |
| Patterns | Diversity of API and integration patterns in use |

Data and AI Specialization

| Signal | What It Measures |
|---|---|
| Context Engineering | Context window optimization, prioritization frameworks, and summarization pipelines |
| Data Pipelines | Training and fine-tuning data curation, labeling, versioning, and governance |
| Model Registry & Versioning | Model tracking, version lineage, performance baselines, and rollback capabilities |
| Multimodal Infrastructure | Document extraction, image/video analysis, audio transcription pipelines |
| Domain Specialization | Domain-specific versus general-purpose model strategy and compliance drivers |

Operations and Platform

| Signal | What It Measures |
|---|---|
| Automation | Sophistication and breadth of automation across operations |
| Platform | Platform journey maturity including common services, guardrails, and roles |
| Operations | Strategic operational investment and continuous improvement posture |
| Observability | Monitoring, testing, tracing, reporting, and dashboard maturity |
| Governance | API governance alignment with security, compliance, and organizational policy |
| Security | Security investment evolution from application-focused to API-centered and AI-aware |
| Testing & Quality | Eval frameworks, hallucination detection, RAG accuracy scoring, agent task completion |

Business Alignment

| Signal | What It Measures |
|---|---|
| Alignment | Bridging engineering with business and API productization investment |
| Standardization | Intentional and strategic approach to standards adoption |
| Mergers & Acquisitions | M&A activity and how operations are shaped by acquisition-driven innovation |
| Experimentation & Prototyping | Hackathons, bot experimentation, and prototype-to-production pipelines |

Enterprise AI Governance

| Signal | What It Measures |
|---|---|
| AI Review & Approval | Review boards, use case approval workflows, and model risk tiering |
| Regulatory Posture | EU AI Act classification, risk assessments, and proactive versus reactive compliance |
| Privacy & Data Rights | Consent management, right-to-deletion compliance, and cross-border data flow |
| Developer Experience | Coding assistant adoption, productivity baselines, and AI platform feedback loops |
| ROI & Business Metrics | Connection between AI system performance and business outcomes |

Ecosystem and Economics

| Signal | What It Measures |
|---|---|
| AI FinOps | Token-level cost tracking, inference forecasting, GPU utilization, chargeback models |
| Provider Strategy | Single versus multi-provider strategy, switching costs, and open-source balance |
| Partnerships & Ecosystem | Cloud AI partnerships and how they shape or constrain architectural choices |
| Talent & Organizational Design | New AI roles, team structures, and skills gaps revealed by hiring patterns |
| Data Centers | Owned versus leased versus cloud GPU capacity and infrastructure constraints |

AI Waves: Mapping the Adoption Curve

Beyond static signals, Naftiko tracks 30+ recurring waves of AI adoption, patterns that emerge across companies and industries as technology matures and spreads. Each wave reshapes roles, workflows, and the cost/velocity/risk balance inside enterprises.

Foundation Waves

- Large Language Models (LLMs) — The foundational reasoning and generation layer for modern AI applications. LLMs serve as the base upon which all other waves build.

- Generative Pre-trained Transformer (GPT) — General-purpose AI engines pre-trained on broad datasets and fine-tuned for specific tasks.

- Open-Source LLMs — Self-hosting alternatives like Llama and Mistral that enable customization, transparency, and ecosystem innovation outside proprietary platforms.

Retrieval and Grounding

- Vector Databases — Datastores optimized for semantic search and similarity matching via high-dimensional embeddings.

- Retrieval-Augmented Generation (RAG) — Combining external data retrieval with language model generation to ground responses in domain-specific information.

Control and Customization

- Prompt Engineering — Designing inputs and instructions to guide model behavior without changing model weights.

- Context Engineering — The discipline of managing everything a model sees before generating a response, from system prompts and retrieved documents to tool outputs and memory.

- Fine-Tuning & Model Customization — Further training pre-trained models on proprietary data for deeper behavioral customization.

Capability and Modality

- Multimodal AI — Processing and generating across text, images, audio, video, and documents.

- Coding Assistants — AI tools embedded in development environments for writing, refactoring, and debugging code.

- Copilots — AI assistants designed to work alongside humans within specific business workflows.

Efficiency and Routing

- Small Language Models (SLMs) — Compact models optimized for edge deployment, trading scale for controllability and cost.

- Reasoning Models — Models optimized for multi-step reasoning, planning, and problem decomposition.

- Model Routing / Orchestration — Dynamically selecting and combining multiple models and tools to optimize for cost, latency, and accuracy.

Integration and Autonomy

- MCP (Model Context Protocol) — A protocol for exposing tools, APIs, and data sources to models in a structured, machine-readable way.

- Agents — Autonomous systems that use models, tools, and memory to pursue goals over time.

- Skills — Discrete, reusable capabilities exposed to models or agents as callable functions.

- Memory Systems — Mechanisms for storing and retrieving information across interactions, enabling personalization and continuity.

Measurement and Governance

- Evaluation & Benchmarking — Systematically measuring model and system performance across accuracy, reliability, safety, and task completion.

- Governance & Compliance — Organizational and regulatory frameworks for managing AI risk, accountability, and transparency.

- Cost Economics & FinOps — Understanding and optimizing the financial cost of AI operations.

- Supply Chain & Dependency Risk — Managing dependencies on model providers, chip manufacturers, and API pricing stability.

Physical Infrastructure

- Data Centers — The GPU clusters, power capacity, and geographic placement underpinning AI capabilities. The material foundation every other wave depends on.

How Scoring Works

Each company receives a numeric score across all 37 signal dimensions based on observable indicators. Scores are not self-reported; they are derived from analysis of job postings, technology stacks, open-source contributions, SaaS usage, standards adoption, and public disclosures.
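The whitepaper does not publish its scoring formula, so the following is only a sketch of one plausible shape: a weighted sum of observable indicator counts, scaled so the top company in the cohort scores 100. The indicator names, the `WEIGHTS` values, the company data, and both helper functions are assumptions made for illustration.

```python
# Hypothetical indicator weights; the actual weighting is not published.
WEIGHTS = {"job_postings": 4, "oss_contributions": 3, "standards": 3}


def raw_score(indicators, weights=WEIGHTS):
    """Weighted sum of indicator counts for one company and one signal."""
    return sum(weights[name] * indicators.get(name, 0) for name in weights)


def scale_to_100(raw_by_company):
    """Scale raw scores so the top company gets 100 (assumed normalization)."""
    top = max(raw_by_company.values(), default=0)
    if top == 0:
        return {name: 0 for name in raw_by_company}
    return {name: round(100 * raw / top) for name, raw in raw_by_company.items()}


# Made-up indicator counts for two placeholder companies.
observed = {
    "company_a": {"job_postings": 50, "oss_contributions": 20, "standards": 10},
    "company_b": {"job_postings": 20, "oss_contributions": 10, "standards": 5},
}
scores = scale_to_100({name: raw_score(ind) for name, ind in observed.items()})
print(scores)
```

Scaling against the cohort maximum makes scores relative rather than absolute: a company's score can change when a peer's indicators change, even if its own do not.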

Company Profiles

Every company profile includes:

- Scoring — Numeric scores across all 37 dimensions

- Areas — 200+ technology areas with adoption depth

- Services — 100-400 SaaS services mapped to the company’s portfolio

- Standards — Standards and specifications in active use

- Tools — Developer tools, frameworks, and languages

- Impact — Layer-based impact assessment across foundational, operational, and strategic tiers

- Jobs — Job postings as talent and capability signals

- Languages — Programming language distribution

- Open-Source Ecosystem — Apache and CNCF project participation

Impact Layers

Signals are organized into impact layers that reflect how investments compound:

Foundational Layer — Core capabilities including AI, cloud, open-source, and languages. Grounded in the waves of LLMs, GPT, and open-source LLMs, this layer captures how enterprises invest in their base infrastructure. Leading organizations like EY, NVIDIA, and Qualcomm demonstrate integrated strategies that separate leaders from followers.

Operational Layer — How foundational investments translate into platform maturity, automation, observability, and governance.

Strategic Layer — How operational capabilities enable business alignment, standardization, ecosystem partnerships, and AI-driven innovation.
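One way to picture the three layers is as a roll-up of per-signal scores. The sketch below groups a handful of signals by the layer descriptions above and averages them. The exact signal-to-layer assignment, the use of a simple mean, and the example scores are assumptions, not the documented Naftiko method.

```python
from statistics import mean

# Assumed signal-to-layer mapping, based on the layer descriptions in the text.
LAYERS = {
    "Foundational": ["Artificial Intelligence", "Cloud", "Open-Source", "Languages"],
    "Operational": ["Platform", "Automation", "Observability", "Governance"],
    "Strategic": ["Alignment", "Standardization", "Partnerships & Ecosystem"],
}


def layer_scores(signal_scores, layers=LAYERS):
    """Average the available signal scores within each impact layer."""
    result = {}
    for layer, signals in layers.items():
        present = [signal_scores[s] for s in signals if s in signal_scores]
        result[layer] = mean(present) if present else None
    return result


# Made-up scores for a placeholder company; missing signals are skipped.
example = {
    "Artificial Intelligence": 80, "Cloud": 70, "Open-Source": 60, "Languages": 50,
    "Platform": 40, "Automation": 60,
    "Alignment": 30,
}
print(layer_scores(example))
```

A profile with a strong foundational average and a weak strategic one would suggest that base investments have not yet compounded into business alignment.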

Who Is Leading

Based on current scoring, the top enterprises by AI investment depth include:

| Rank | Company | AI Score |
|---|---|---|
| 1 | EY | 100 |
| 2 | NVIDIA | 95 |
| 3 | Qualcomm | 94 |
| 4 | Accenture | 91 |
| 5 | Cisco | 89 |
| 6 | Citi | 87 |
| 7 | UnitedHealth Group | 86 |
| 8 | Adobe | 84 |
| 9 | Visa | 83 |
| 10 | AMD | 83 |

What distinguishes leaders from the field is not investment in any single dimension but integrated strategies across multiple signals. Top scorers treat AI, cloud, open-source, and platform engineering as interconnected investments rather than isolated initiatives.

Industry Coverage

Naftiko Signals tracks companies across 30+ industry verticals, providing cross-industry pattern analysis that reveals how AI adoption differs by sector.

Industries include aerospace, agriculture, automotive, cannabis, consumer goods, energy, financial services, healthcare, hospitality, insurance, logistics, manufacturing, media and entertainment, pharmaceuticals, retail, semiconductors, technology, telecommunications, and more.

Cross-industry analysis reveals that:

- Financial services and healthcare lead in governance and compliance signal strength, driven by regulatory pressure.

- Technology and semiconductors lead in AI and infrastructure investment depth.

- Professional services (EY, Accenture) show the broadest signal coverage across all 37 dimensions, reflecting their role as cross-industry advisors.

- Automotive and manufacturing show concentrated investment in automation, IoT, and domain specialization.

The Service Ecosystem

Naftiko Signals catalogs the SaaS landscape that enterprises consume, organized into functional segments:

| Segment | Platforms Tracked | Purpose |
|---|---|---|
| Gateways | 53 | API management, rate limiting, security |
| iPaaS | 17 | Integration Platform as a Service |
| Embedded iPaaS | 19 | Embeddable integration within SaaS products |
| SaaS Management | 15 | License and cost governance |
| Service Mesh | 10 | Microservices communication |
| Unified API | 15 | Multi-API aggregation |
| ProCode API Composition | 11 | Developer-focused orchestration |
| SDKs | 13 | Code generation and distribution |
| Clients | 13 | API testing and exploration tools |

The most widely adopted services across tracked companies include Dynatrace (242 companies), Azure Machine Learning (227), Bloomberg AIM (222), Hugging Face (201), and Google Cloud Platform (171), reflecting the convergence of observability, AI infrastructure, and cloud platform investment.
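To put those adoption counts in proportion, the sketch below converts them to an approximate share of tracked companies. It assumes a denominator of exactly 315, taken from the "315+" figure; because the true count may be higher, the percentages are approximate upper bounds. `adoption_share` is a hypothetical helper.

```python
TRACKED_COMPANIES = 315  # assumption: the "315+" figure used as the denominator

# Adoption counts as reported in the paragraph above.
ADOPTION = {
    "Dynatrace": 242,
    "Azure Machine Learning": 227,
    "Bloomberg AIM": 222,
    "Hugging Face": 201,
    "Google Cloud Platform": 171,
}


def adoption_share(count, total=TRACKED_COMPANIES):
    """Percentage of tracked companies using a service, to one decimal."""
    return round(100 * count / total, 1)


for service, count in sorted(ADOPTION.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{service}: {count} companies (~{adoption_share(count)}%)")
```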

The Technology Radar

Naftiko Signals maps technologies, services, tools, and standards across four adoption rings, providing a single view of where the industry is now and where it is heading:

- Adopting — Technologies with broad enterprise adoption and proven production deployment patterns.

- Optimizing — Technologies in active use where enterprises are refining implementation and maximizing ROI.

- Evaluating — Technologies under active enterprise evaluation with pilot deployments and proof-of-concept work.

- Watching — Emerging technologies on the enterprise radar but not yet in active evaluation cycles.

The radar is continuously updated as signal data shifts, providing a living view of technology maturity across the enterprise landscape.

Key Findings

AI Investment Is Broadening, Not Just Deepening

Early enterprise AI investment concentrated on a narrow set of capabilities: LLMs, chatbots, and coding assistants. Current signals show investment broadening into governance, FinOps, provider strategy, context engineering, and organizational design. The enterprises scoring highest are those investing across the full 37-signal spectrum rather than concentrating on a few high-visibility areas.

The Integration Layer Is the Next Battleground

Signals around MCP, agents, skills, and event-driven architecture are accelerating. Enterprises are moving from using AI as a standalone tool to integrating AI into their existing technology infrastructure. This integration wave will determine which organizations can compound AI capabilities across their operations and which remain stuck with isolated experiments.

Governance Is Becoming a Competitive Advantage

Companies with strong governance, compliance, and regulatory posture signals are not slower to adopt AI. They are faster to move from pilot to production because they have the review frameworks, risk tiering, and approval workflows that give leadership confidence to scale. Governance is emerging as an enabler, not a blocker.

Open-Source and Standards Participation Predicts Readiness

Companies that actively participate in open-source ecosystems (Apache, CNCF) and adopt open standards (OpenAPI, AsyncAPI, JSON Schema) consistently score higher across AI investment signals. Standards participation correlates with architectural maturity, which provides the foundation for AI integration at scale.

The Cost Equation Is Shifting

AI FinOps signals reveal that enterprises are beginning to treat AI cost management with the same rigor they apply to cloud FinOps. Token-level cost tracking, inference spend forecasting, and GPU utilization monitoring are emerging as standard practices among leaders. Organizations without these capabilities face compounding cost surprises as AI usage scales.
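Token-level cost tracking with team chargeback can be sketched in a few lines. Everything concrete here is hypothetical: the model names, the per-1,000-token prices in `PRICES`, the `Usage` record, and the usage log are illustrative and do not reflect any vendor's pricing or any Naftiko data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Usage:
    team: str
    model: str
    input_tokens: int
    output_tokens: int


# Hypothetical USD prices per 1,000 tokens as (input, output); not real pricing.
PRICES = {"model-small": (0.5, 1.5), "model-large": (5.0, 15.0)}


def cost_usd(usage, prices=PRICES):
    """Cost of one usage record, pricing input and output tokens separately."""
    price_in, price_out = prices[usage.model]
    return (usage.input_tokens * price_in + usage.output_tokens * price_out) / 1000


def chargeback(usages):
    """Total cost per team across all usage records."""
    totals = {}
    for usage in usages:
        totals[usage.team] = totals.get(usage.team, 0.0) + cost_usd(usage)
    return totals


log = [
    Usage("search", "model-small", 200_000, 50_000),
    Usage("search", "model-large", 10_000, 2_000),
    Usage("support", "model-large", 40_000, 8_000),
]
print(chargeback(log))
```

Keeping input and output tokens separate matters because providers commonly price them differently, so a team's cost depends on its prompt-to-response ratio as well as its volume.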

How Organizations Can Use Naftiko Signals

Benchmark Against Peers

Compare your technology investment profile against industry peers across all 37 signal dimensions. Identify where you lead, where you lag, and where the gap matters most for your strategic objectives.
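A peer benchmark of this kind reduces to a per-signal gap calculation. The sketch below compares one organization's scores with the peer median and lists the largest lags first. The scores, the choice of median as the peer baseline, and the `peer_gaps` helper are assumptions for illustration.

```python
from statistics import median


def peer_gaps(own, peers):
    """Return {signal: own score minus peer median}, largest lag first."""
    gaps = {}
    for signal, score in own.items():
        peer_values = [p[signal] for p in peers if signal in p]
        if peer_values:  # skip signals no peer has a score for
            gaps[signal] = score - median(peer_values)
    return dict(sorted(gaps.items(), key=lambda kv: kv[1]))


# Made-up scores for one organization and three placeholder peers.
own = {"Governance": 40, "AI FinOps": 70, "Observability": 55}
peers = [
    {"Governance": 60, "AI FinOps": 50, "Observability": 55},
    {"Governance": 70, "AI FinOps": 65, "Observability": 50},
    {"Governance": 65, "AI FinOps": 60, "Observability": 60},
]
for signal, gap in peer_gaps(own, peers).items():
    print(f"{signal}: {gap:+}")
```

Negative gaps mark where the organization lags its peers; whether a given gap matters still depends on the strategic objectives mentioned above.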

Inform Technology Strategy

Use wave analysis to understand which technology investments are compounding and which are plateauing. Sequence your own investments to build on converging industry patterns rather than betting against them.

Validate Vendor and Partner Choices

Cross-reference your SaaS portfolio and standards adoption against industry patterns. Identify where your choices align with enterprise convergence and where you carry concentration risk.

Shape Organizational Design

Use talent and role signals to understand how leading enterprises are structuring AI teams, which roles are growing, and what skills gaps your hiring strategy should address.

Guide Governance and Compliance

Benchmark your AI governance maturity against peers in your industry. Use regulatory posture and AI review signals to identify gaps before they become audit findings or compliance failures.

Conclusion

Enterprise AI strategy cannot be built on intuition. The pace of change, the breadth of investment dimensions, and the compounding nature of technology decisions require intelligence grounded in observable patterns across hundreds of organizations.

Naftiko Signals provides that intelligence. By tracking 37 signals across 315+ companies in 30+ industries and mapping investment patterns against recurring waves of AI adoption, Naftiko surfaces the patterns that matter: where investment is converging, which capabilities are compounding, and what separates leaders from followers.

The equation is straightforward: Manage cost, velocity, and risk to enable AI innovation. Naftiko Signals shows you how the enterprises around you are solving that equation, so you can solve it better.

For more information or to explore the signals data, visit naftiko.github.io/signals or contact kinlane@naftiko.io.