I have been having the same conversation with technology leaders for the last two quarters. The board has asked for an AI strategy. Vendors have already pitched their AI platforms. The CTO is being asked to choose among Copilot Studio, Agentforce, ServiceNow AI, and Palantir Foundry by the end of the quarter, and the board wants a recommendation.

And nobody in the room has actually looked at the data on where the company stands relative to its peers. Not the technology stack. Not the AI investment posture. Not the regulatory exposure. Not the signal data that is already public and visible to anyone who cares to look.

That is the conversation I want to interrupt. Before you decide what to build with AI, look at where you stand. That is Act 1 of how Naftiko sees enterprise AI — and it is the one most enterprises skip.

Three acts, in plain language

Naftiko’s pitch fits into three acts:

- See where you stand. Score your industry and your company on the technology, AI, and platform investments that are public and observable.

- Decide what to build. Translate the signal gaps and leads into a concrete capability set tied to the markets you actually serve.

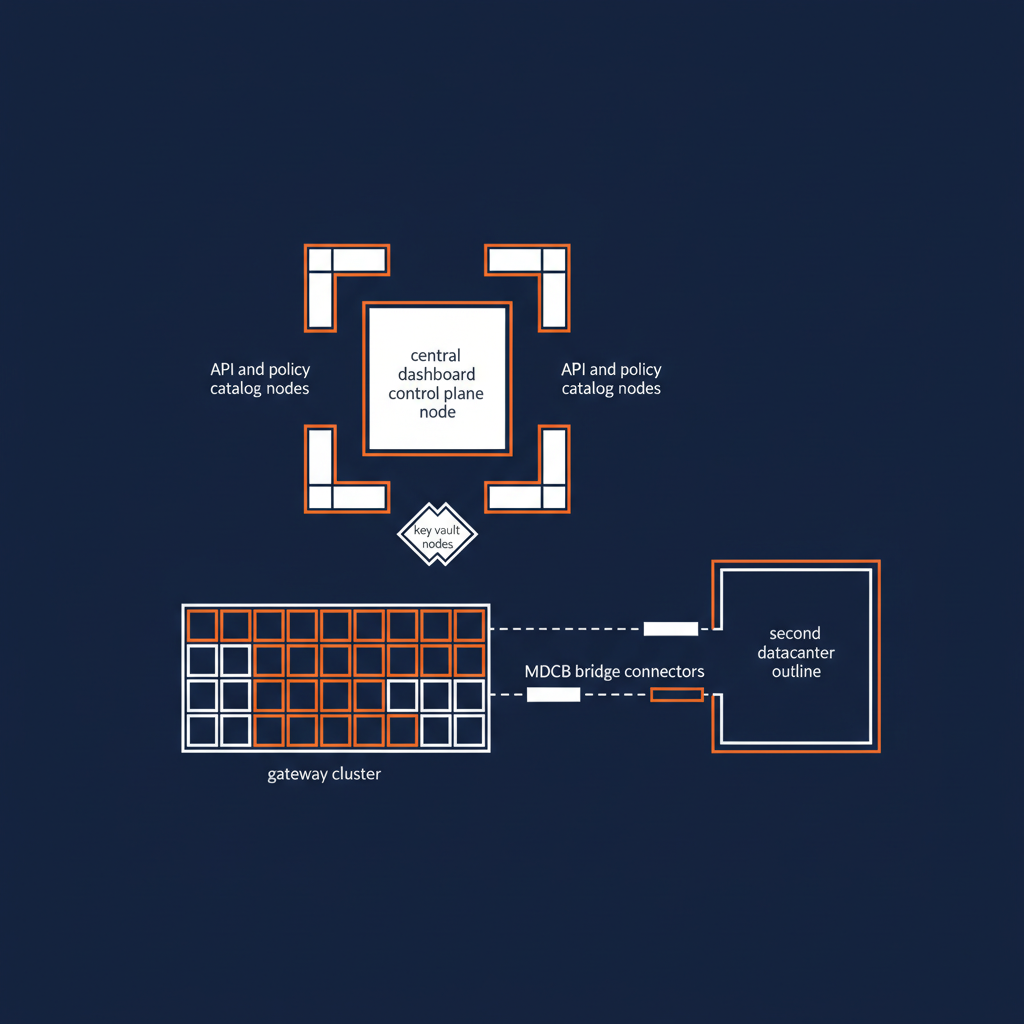

- Control what you build. Run that capability set on open-source infrastructure you can read, fork, and audit — not on a proprietary control plane somebody else owns.

This post is about Act 1. The next two posts will dig into Act 2 and Act 3. But Act 1 is the one I want to anchor on, because skipping it is the most expensive mistake I see enterprises make right now.

What the signals actually say

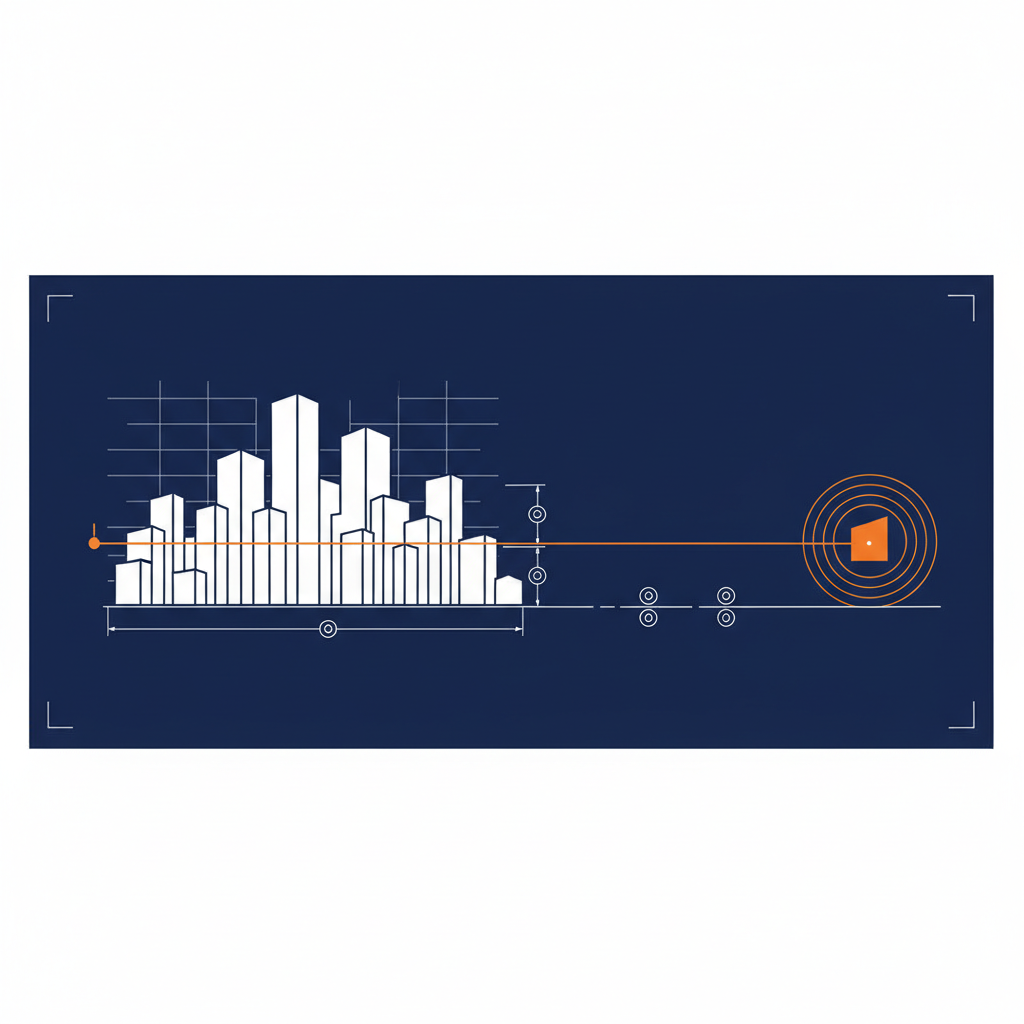

Naftiko Signals scores 290+ companies and 25+ industries on 37 distinct signal dimensions, covering areas of technology investment, services adopted, tools deployed, and standards followed. Every signal is derived from public data: job postings, open-source contributions, technology partnerships, published case studies, and regulatory filings.

The output is a numerical score per dimension per company, rolled up to industry aggregates. The scores tell you, in concrete numbers, what the press cycle and vendor decks obscure.
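A minimal sketch of that roll-up, assuming each company's scores are a mapping from dimension name to an integer score and that the industry aggregate is a plain sum per dimension. The post does not say how Naftiko actually aggregates, so `roll_up`, `total_signal_score`, and the sample companies below are hypothetical.

```python
from collections import Counter

# Hypothetical shape: dimension name -> score for one company.
CompanyScores = dict[str, int]


def roll_up(companies: dict[str, CompanyScores]) -> dict[str, int]:
    """Sum each dimension across all companies in an industry (assumed aggregation rule)."""
    totals: Counter[str] = Counter()
    for scores in companies.values():
        totals.update(scores)  # Counter.update adds values for keys already present
    return dict(totals)


def total_signal_score(scores: dict[str, int]) -> int:
    """Collapse a dimension -> score mapping into one headline number."""
    return sum(scores.values())


if __name__ == "__main__":
    # Illustrative numbers only, not Naftiko data.
    industry = {
        "CompanyA": {"cloud": 10, "api": 3},
        "CompanyB": {"cloud": 7, "security": 5},
    }
    aggregate = roll_up(industry)
    print(aggregate)                      # {'cloud': 17, 'api': 3, 'security': 5}
    print(total_signal_score(aggregate))  # 25
```

A plain sum keeps the headline number easy to audit; a real scoring model might weight or normalize dimensions instead, which this sketch does not attempt.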

A few examples from recent industry pulls.

Automotive. The 9 leading automakers we profiled produce an aggregate signal score of 8,464 across 1,876 technology areas, 339 services, 213 tools, and 214 standards. Ford leads infrastructure and operations. BMW leads AI governance and MLOps. Toyota leads security, governance, and regulatory posture. Tesla, the company most publicly identified with AI, sits below the top tier on nearly every dimension we measure. Its Code (13) and Containers (8) scores are the clearest gaps for a company whose vehicles run on 100M+ lines of software.

Financial services. Vanguard scores 1,186 total signals across 176 services. Strong on data (94) and cloud (98). Weak on API (9), event-driven (7), data pipelines (7), and testing-quality (10). It is the classic “high data and cloud, low orchestration language” shape. With DORA and SR 11-7 inside an 18- to 36-month compliance window, that orchestration gap is the board-level question.

Healthcare, public sector, manufacturing. Same pattern, different specifics. Strong substrate, thin orchestration, AI investment that is real but not yet governed.

The point is not that these companies are behind. The point is that the data answers the question of where they stand in concrete, defensible numbers — before anybody has to make a build-or-buy decision.

What “where you stand” tells a board

The signal data answers three executive-grade questions on a single board-pack page.

Where do we lead our peer set, and where do we trail? A peer-set comparison across all 37 dimensions, ranked. The leads are the strategic stories you can tell the market. The trails are the questions you have to answer before the next earnings call.

Where has AI investment already shifted the gradient in our industry? AI investment is not evenly distributed. In automotive, BMW and Toyota lead by a wide margin. In financial services, the gradient is moving toward governance and model registry. The shift is observable in the signals before it shows up in revenue.

What three capabilities, if built, would close the largest signal gap? This is the bridge to Act 2. The signal data tells you where the gap is. The capability spec tells you what to build to close it.

A board does not need all 37 dimensions. It needs three answers. The signal data produces them.
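The peer-set ranking and the "largest gap" question above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Naftiko's method: it defines a company's lead or trail on a dimension as its score minus the mean of its peers' scores, treats a missing dimension as 0, and calls the most negative deltas the largest gaps. `peer_deltas`, `largest_gaps`, and the sample data are hypothetical.

```python
from statistics import mean

# Hypothetical shape: company -> (dimension -> score).
Scores = dict[str, dict[str, int]]


def peer_deltas(scores: Scores, company: str) -> list[tuple[str, float]]:
    """Company score minus the peer-set mean on every dimension, best lead first.

    Positive deltas are leads, negative deltas are trails. A dimension a
    company has no score for is treated as 0 (an assumption). Requires at
    least one peer; statistics.mean raises StatisticsError on empty input.
    """
    peers = [s for name, s in scores.items() if name != company]
    dimensions = set().union(*scores.values())  # every dimension any company reports
    own = scores[company]
    deltas = [
        (dim, own.get(dim, 0) - mean(p.get(dim, 0) for p in peers))
        for dim in dimensions
    ]
    # Sort by delta descending; break ties alphabetically for stable output.
    return sorted(deltas, key=lambda item: (-item[1], item[0]))


def largest_gaps(scores: Scores, company: str, n: int = 3) -> list[tuple[str, float]]:
    """The n dimensions where the company trails its peers by the widest margin."""
    trails = [item for item in peer_deltas(scores, company) if item[1] < 0]
    return sorted(trails, key=lambda item: (item[1], item[0]))[:n]


if __name__ == "__main__":
    # Illustrative numbers only, not Naftiko data.
    scores = {
        "Us":    {"api": 10, "data": 90, "cloud": 95,  "events": 5},
        "PeerA": {"api": 40, "data": 80, "cloud": 90,  "events": 30},
        "PeerB": {"api": 30, "data": 70, "cloud": 100, "events": 20},
    }
    print(peer_deltas(scores, "Us"))
    print(largest_gaps(scores, "Us"))
```

With these made-up numbers, `data` comes out as the lead and `api` then `events` as the two gaps; `cloud` matches the peer mean, so it is neither. Comparing against the peer mean rather than the peer leader is a design choice; measuring distance to the top scorer would surface different gaps.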

Why this is the act enterprises skip

I have a working theory about why most enterprises start with Act 2 — deciding what to build — and skip Act 1. Three reasons.

The vendor sales cycle is faster than the strategy cycle. Vendors arrive with a product to sell. The product comes with a positioning that already implies what the enterprise should build. The board hears the pitch before the strategy team has done the work.

Signal analysis looks like research, and research looks slow. Enterprises are biased toward action. “Let’s pilot something” beats “let’s measure where we stand” on every velocity metric. But the pilot that gets started without an Act 1 grounding is the pilot that does not survive contact with the next quarterly review.

The signal data has not been easy to find. Until recently, the public-data signal thesis was hard to operationalize. Naftiko Signals exists because we got tired of watching enterprises start with Act 2 by default — not by choice.

The pattern is changing. The boards I am talking to now are starting to ask the Act 1 questions first. The CTOs who have done the Act 1 work walk into the AI strategy meeting with a different posture than the ones who have not.

What the next two posts will cover

This is the first post in a three-post series.

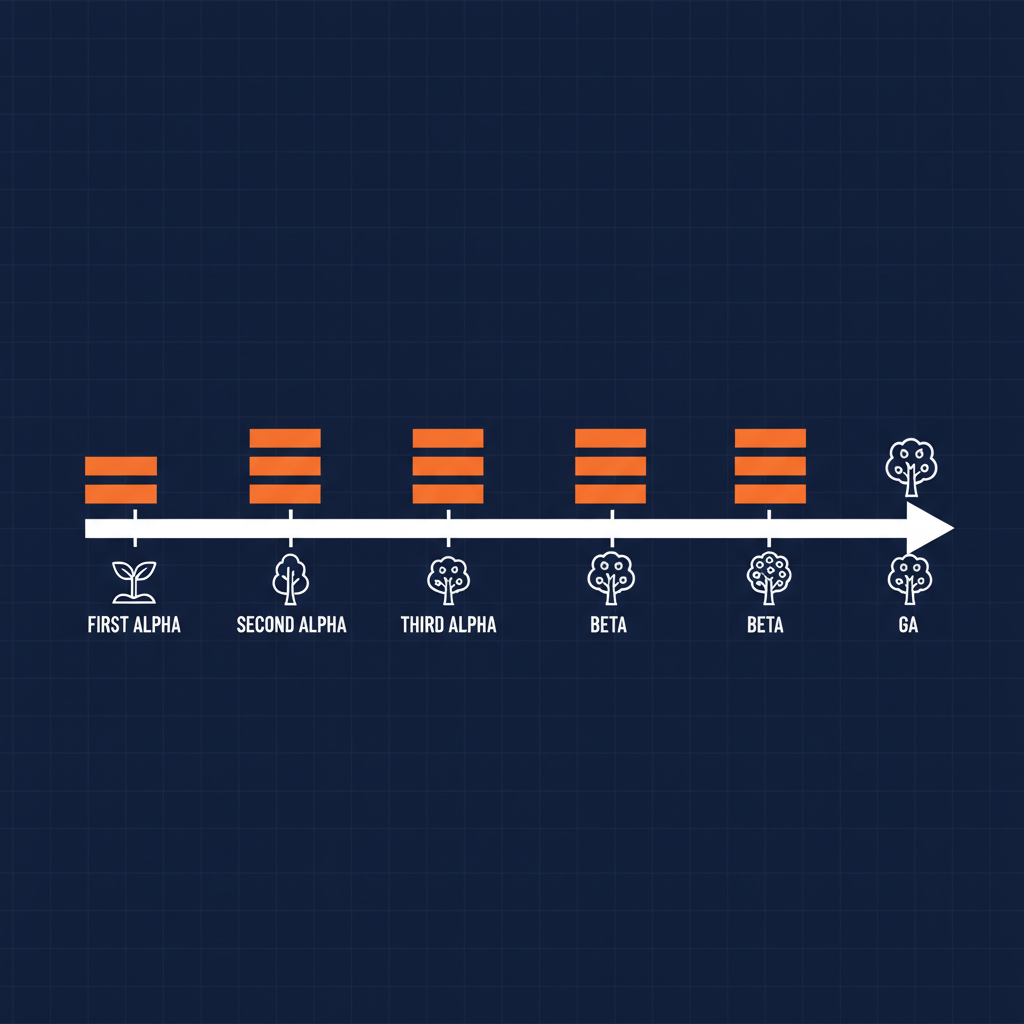

- Act 2 — Decide What to Build. How to translate signal gaps into a concrete capability backlog. Why capability beats microservice as the unit of AI strategy. How the Naftiko capability spec turns sunk API investment into AI-ready surfaces.

- Act 3 — Control What You Build. Why open source is the only AI sovereignty story that holds up under regulatory scrutiny. What “control” means in practice — audit, fork, run anywhere, exit cost, regulatory portability. How the Naftiko Fleet operating model lets you run the platform on infrastructure you actually control.

The three acts are a discipline. Skipping Act 1 to get to Act 2 faster is the most common and most expensive mistake. Skipping Act 3 to ship Act 2 on a proprietary platform is the second most common and the most expensive on a longer time horizon.

What to do this week

If you are inside an enterprise that is about to commit to an AI strategy, here is the smallest useful Act 1 motion.

- Pull your industry on Naftiko Signals: industries.naftiko.io/signals/<your-industry>. See the aggregate score, the leaders, and the gradient.

- Pull your company on Naftiko Signals: companies.naftiko.io/signals/<your-company>. See where you lead and where you trail.

- Identify the three largest gaps. Those are the Act 2 questions, ready for the strategy meeting.

- If the gaps are interesting enough that you want to talk through the capability response, become a design partner. We co-build your first capability against your signals, deployed on your fleet, in 90 days.

Act 1 is the cheapest act to run and the one that changes every conversation downstream. Run it before the next strategy meeting.

- Naftiko Signals — signals.naftiko.io

- Become a design partner — naftiko.io/contact-us

- Naftiko on GitHub — github.com/naftiko