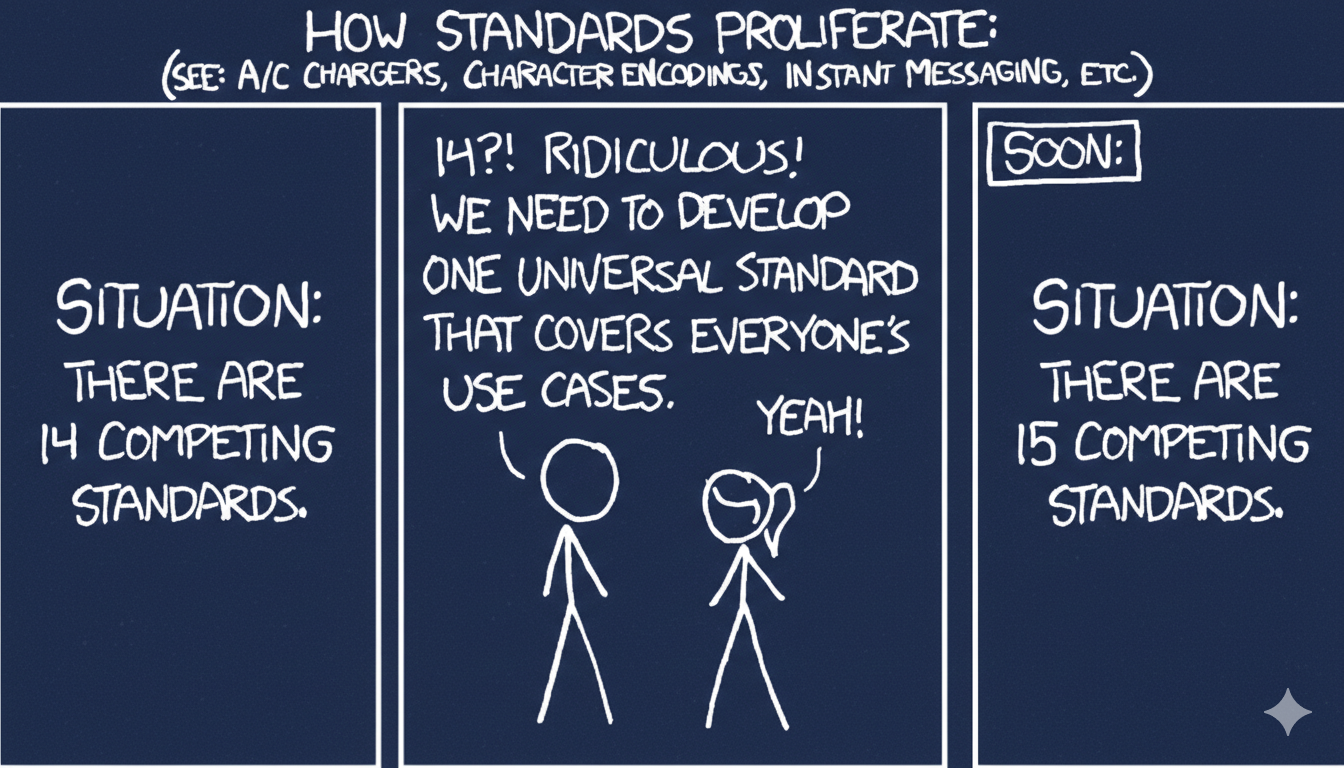

There's a famous XKCD comic about competing standards. Its first panel sets the scene: "There are 14 competing standards." Someone responds, "14?! Ridiculous! We need to develop one universal standard that covers everyone's use cases." The final panel: "Soon: there are 15 competing standards."

If you work in APIs, you're not living in a simulation. You're living in that cartoon.

The history of API specifications is a story of good intentions, corporate maneuvering, governance battles, and an ever-expanding graveyard of specs that were going to fix everything. Understanding how we got here matters — especially now, as a new wave of AI-driven specs threatens to repeat every mistake of the last fifteen years.

It Started With Words in a Dictionary

In 2010, a developer named Tony Tam had a company called Wordnik that offered a dictionary API. He needed a configuration file to power two tools: Swagger UI (interactive documentation) and Swagger Codegen (code generation). That configuration format became Swagger.

There's something poetic about the foundational API specification originating from a dictionary API. Naming things, describing things, creating shared vocabularies — that's really what specs are for.

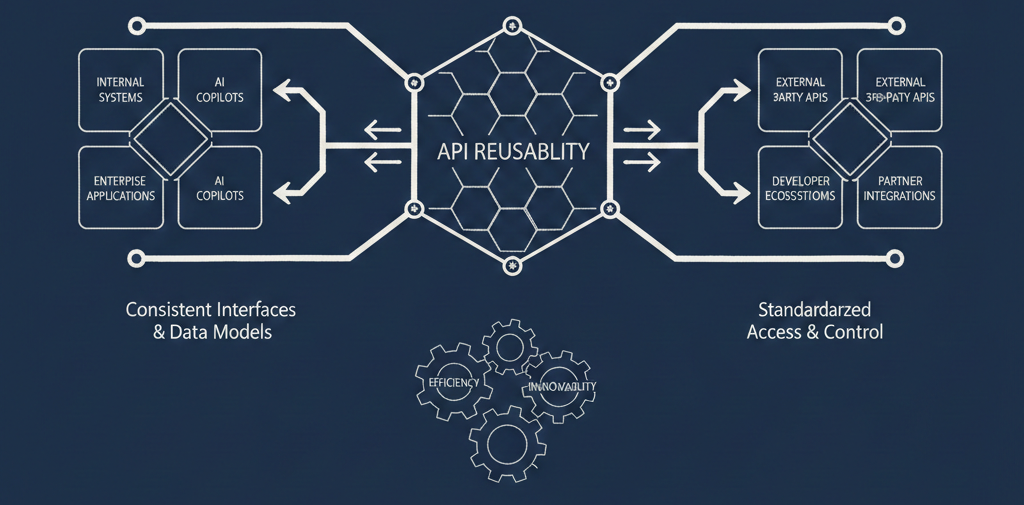

Swagger quickly outgrew its origins as a config file. By the mid-2010s it had become the de facto way to describe RESTful HTTP APIs. But in 2015, SmartBear acquired Swagger from Tam's company. What happened next set a template that would repeat itself across the industry.

SmartBear agreed to put the specification into a foundation — the Linux Foundation, specifically — under a new name: OpenAPI. But they kept the Swagger trademark and the commercial tooling. So Swagger became the brand they sold, and OpenAPI became the open standard they donated. It was a clean split on paper. In practice, the confusion between the two persists to this day.

AsyncAPI, the Sister Spec

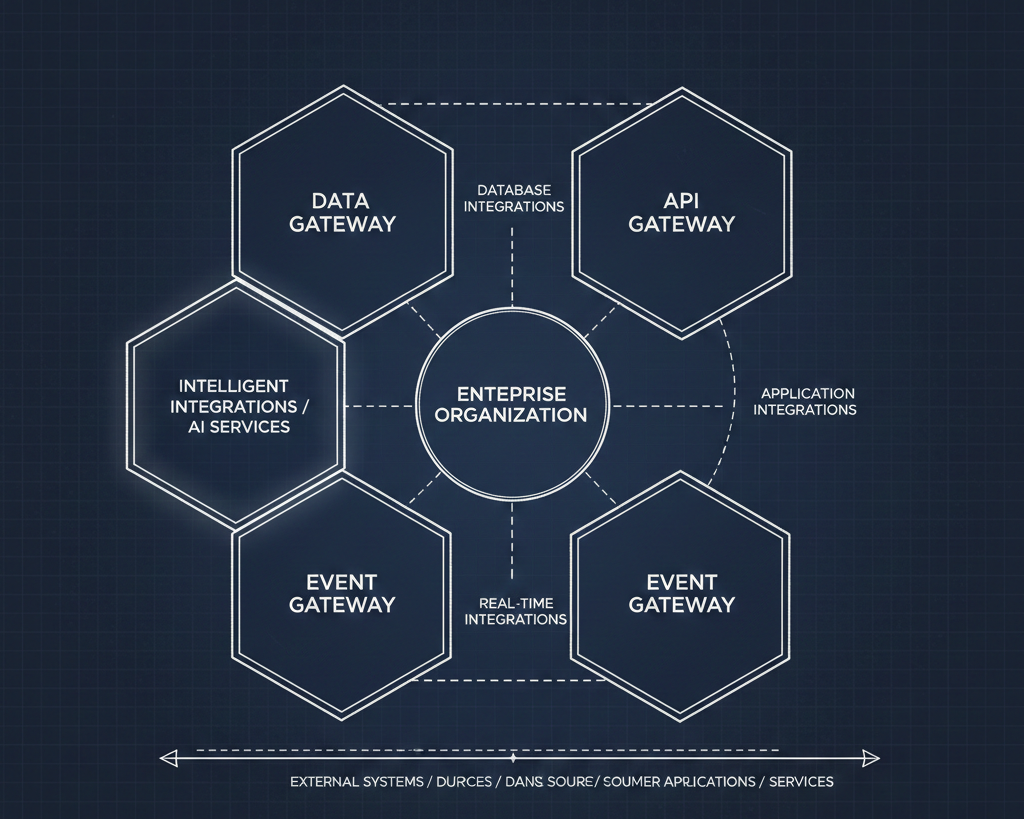

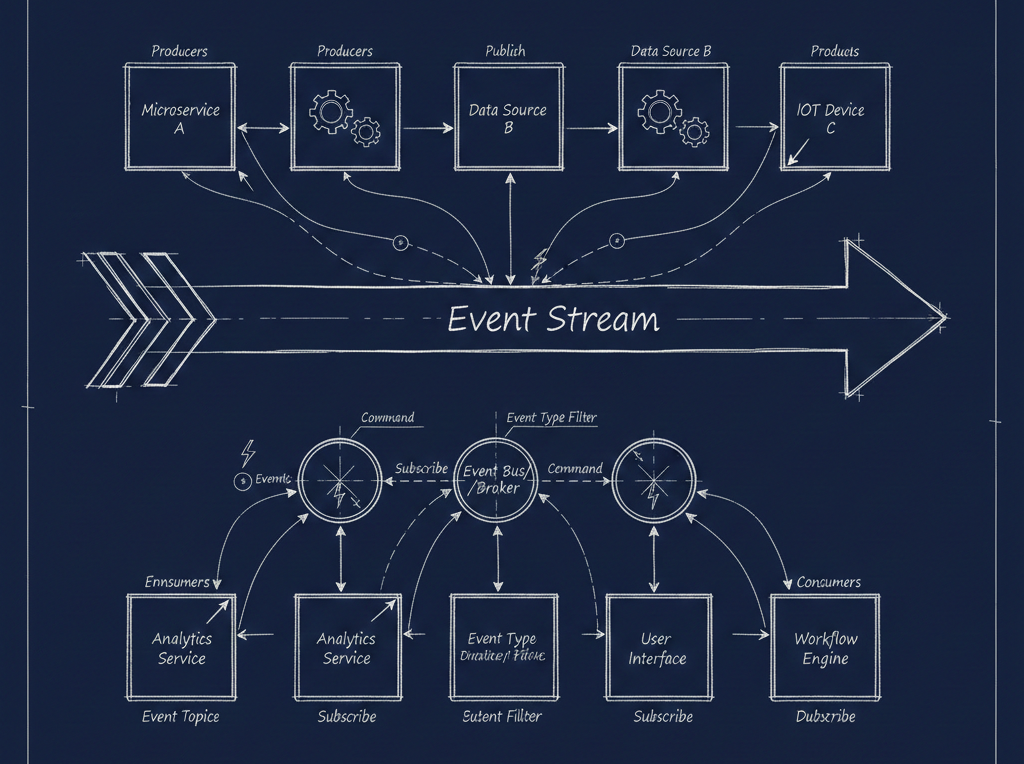

OpenAPI was designed for HTTP request-response APIs. But the world doesn't run on request-response alone. Event-driven architectures, message queues, WebSockets, Kafka streams — none of these fit neatly into OpenAPI's model. When the OpenAPI community was asked to expand the spec to cover asynchronous patterns, the answer was no.

So Fran Méndez created AsyncAPI, a sister specification that mirrors OpenAPI's document structure and builds on the same JSON Schema foundation, but covers the event-driven world. Between the two of them, plus JSON Schema as a shared underpinning, you had a reasonably comprehensive way to describe most of what a modern enterprise runs.
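To make that shared foundation concrete, here is a minimal sketch, written as Python dictionaries rather than YAML, of one JSON Schema reused by an OpenAPI 3.1 document (a request-response endpoint) and an AsyncAPI 3.0 document (an event). The `Order` schema, the `/orders/{orderId}` path, the `orders.created` channel, and the operation names are all hypothetical, and both documents are trimmed to the fields needed to show the overlap.

```python
# Hypothetical JSON Schema for an order, defined once and reused by both specs.
ORDER_SCHEMA = {
    "type": "object",
    "properties": {
        "orderId": {"type": "string"},
        "total": {"type": "number", "minimum": 0},
    },
    "required": ["orderId", "total"],
}

# OpenAPI 3.1: a request-response endpoint that returns an order.
openapi_doc = {
    "openapi": "3.1.0",
    "info": {"title": "Orders API", "version": "1.0.0"},
    "paths": {
        "/orders/{orderId}": {
            "get": {
                "operationId": "getOrder",
                "parameters": [
                    {"name": "orderId", "in": "path", "required": True,
                     "schema": {"type": "string"}},
                ],
                "responses": {
                    "200": {
                        "description": "The requested order",
                        "content": {
                            "application/json": {
                                "schema": {"$ref": "#/components/schemas/Order"}
                            }
                        },
                    }
                },
            }
        }
    },
    "components": {"schemas": {"Order": ORDER_SCHEMA}},
}

# AsyncAPI 3.0: an event the application receives when an order is created.
asyncapi_doc = {
    "asyncapi": "3.0.0",
    "info": {"title": "Order Events", "version": "1.0.0"},
    "channels": {
        "orderCreated": {
            "address": "orders.created",
            "messages": {
                "OrderCreated": {"$ref": "#/components/messages/OrderCreated"}
            },
        }
    },
    "operations": {
        "onOrderCreated": {
            "action": "receive",
            "channel": {"$ref": "#/channels/orderCreated"},
        }
    },
    "components": {
        "messages": {
            "OrderCreated": {"payload": {"$ref": "#/components/schemas/Order"}}
        },
        "schemas": {"Order": ORDER_SCHEMA},
    },
}

# The very same schema object sits under components.schemas in both documents.
print(openapi_doc["components"]["schemas"]["Order"] is asyncapi_doc["components"]["schemas"]["Order"])  # True
```

In a real repository the schema would more likely live in its own file and be pulled into both documents with `$ref`; sharing one Python object is a shortcut for the sketch.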

The XKCD Effect

Because this is the API world, and the API world lives inside that XKCD cartoon, OpenAPI and AsyncAPI weren't the only games in town. Not even close.

There was RAML, created by MuleSoft. The backstory is instructive. Around 2014, MuleSoft brought together leaders from several competing spec efforts — people from Apiary (API Blueprint), Kong, Mashery, and the Swagger community — ostensibly to explore interoperability between specifications. Instead, MuleSoft used the gathering to launch a brand new spec.

By most technical accounts, RAML was actually a better specification than OpenAPI. It didn't matter. MuleSoft's aggressive approach alienated the community so thoroughly that people gravitated to Swagger and OpenAPI out of sheer preference for the people involved. When MuleSoft was eventually acquired by Salesforce, RAML's trajectory slowed further — a cautionary tale about what happens when a corporate parent gets acquired and priorities shift.

API Blueprint, created by Apiary, met a similar fate when Oracle acquired Apiary. The spec is still technically out there, open source, but development has effectively halted. Nobody's doing anything with it. It just exists, like digital archaeological evidence of a previous era's ambitions.

Then came GraphQL, approaching the problem from an entirely different angle — a different worldview, a different model for how clients should interact with data. It arrived with the promise of replacing REST. In practice, it found one or two genuine use cases where it excelled and settled into a niche rather than taking over the world.

And then there are TypeSpec from Microsoft and Smithy from AWS, each created to solve its company's own internal SDK generation problems, each adding another entry to the growing list.

The New Wave: MCP, A2A, and Agent Skills

Now we're in the age of AI agents, and predictably, we have a fresh crop of specifications.

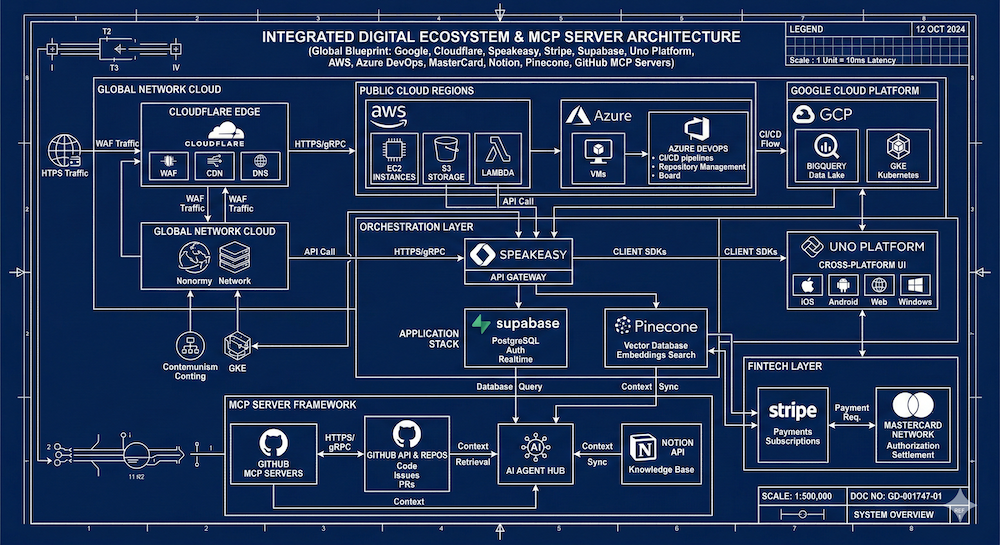

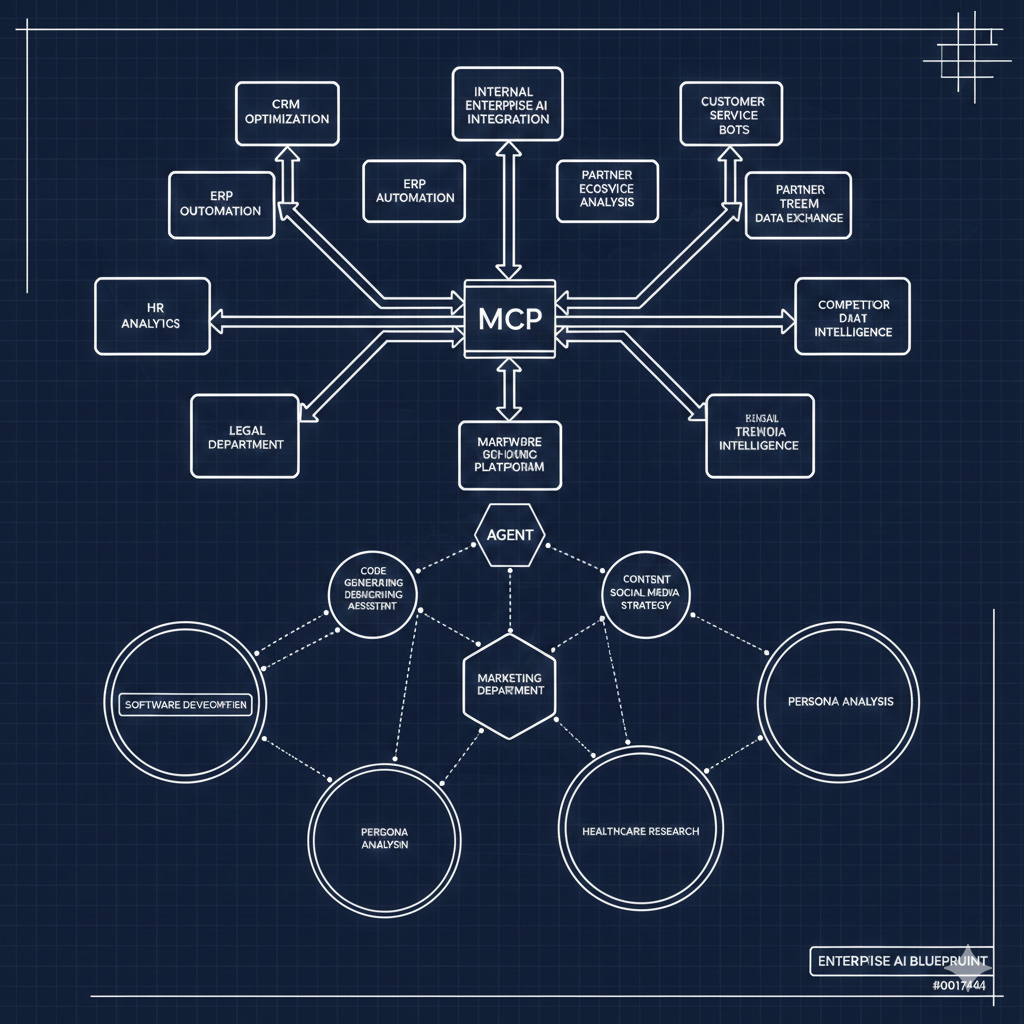

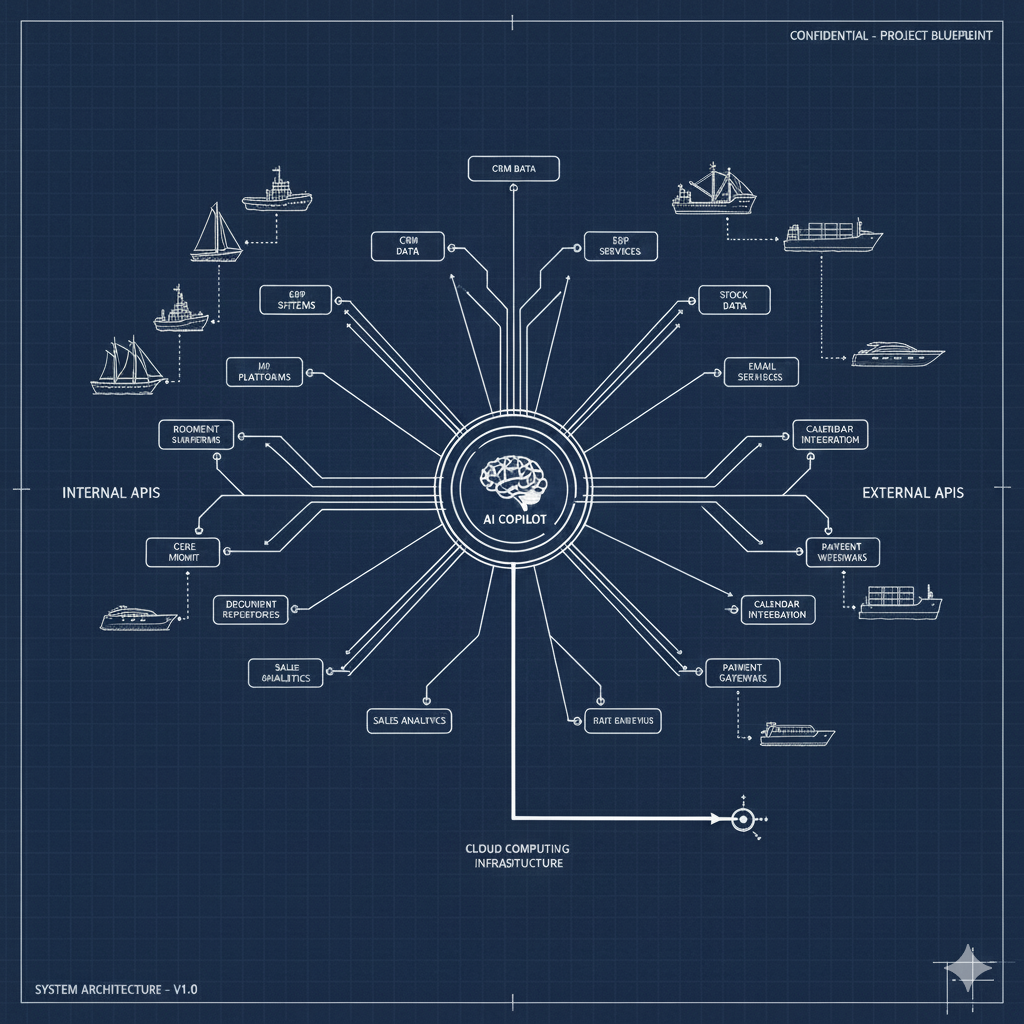

Model Context Protocol (MCP), created by Anthropic and donated to the Linux Foundation, has taken off like a rocket. It's essentially an RPC mechanism for giving AI models access to tools and data. Google and IBM partnered on the Agent2Agent (A2A) protocol. And then there are agent skills, another emerging approach to defining what AI agents can do.
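Concretely, MCP messages are JSON-RPC 2.0. The sketch below builds the two requests a client typically sends to discover and invoke a tool, `tools/list` and `tools/call`, and reads the text out of a tool result. The `lookup_order` tool, its arguments, and the sample response are hypothetical, and the sketch deliberately skips the `initialize` handshake and the transport (stdio or HTTP) that a real MCP client needs.

```python
import itertools
import json

# JSON-RPC request ids must not repeat within a session; a counter is enough here.
_next_id = itertools.count(1)


def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request envelope of the kind MCP uses."""
    message = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        message["params"] = params
    return message


def tool_text(response_json, expected_id):
    """Return the text blocks from a tools/call response, raising on errors."""
    response = json.loads(response_json)
    if response.get("id") != expected_id:
        raise ValueError("response id does not match request id")
    if "error" in response:  # protocol-level JSON-RPC error
        raise RuntimeError(response["error"].get("message", "unknown error"))
    result = response["result"]
    texts = [b["text"] for b in result.get("content", []) if b.get("type") == "text"]
    if result.get("isError"):  # tool-level failure reported inside the result
        raise RuntimeError("; ".join(texts))
    return "\n".join(texts)


# Discover the server's tools, then call a hypothetical "lookup_order" tool.
list_request = jsonrpc_request("tools/list")
call_request = jsonrpc_request(
    "tools/call", {"name": "lookup_order", "arguments": {"orderId": "A-1001"}}
)
print(json.dumps(call_request))

# A hypothetical response shaped like an MCP tool result (content blocks plus isError).
sample_response = json.dumps({
    "jsonrpc": "2.0",
    "id": call_request["id"],
    "result": {
        "content": [{"type": "text", "text": "Order A-1001: shipped"}],
        "isError": False,
    },
})
print(tool_text(sample_response, call_request["id"]))  # Order A-1001: shipped
```

Note the two error paths: a JSON-RPC `error` means the request itself failed, while `isError: true` inside `result` means the tool ran and reported a failure.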

Each of these is exciting. Each of these is tactical. And each of these carries echoes of every previous specification wave.

MCP in particular is striking in its ambition. Where the previous generation of API thinking was built around careful design — treating APIs as products, implementing rate limiting, understanding who's using them and why — MCP wants to give AI models more or less direct access to data and systems. A decade of deliberate API design, of putting thoughtful interfaces in front of file systems and databases, risks being bypassed by plugging everything directly into copilots and letting agents have at it.

The governance picture is, predictably, complex. MCP is in the Linux Foundation. A2A is in the Linux Foundation. AsyncAPI is in the Linux Foundation. But being in the Linux Foundation isn't a magic wand. Different specs have different governance models once they're inside, different people holding the keys. OpenAPI, for instance, currently lacks strong leadership — there are good people doing important work, but no driving force at the helm.

The Grownup Stuff

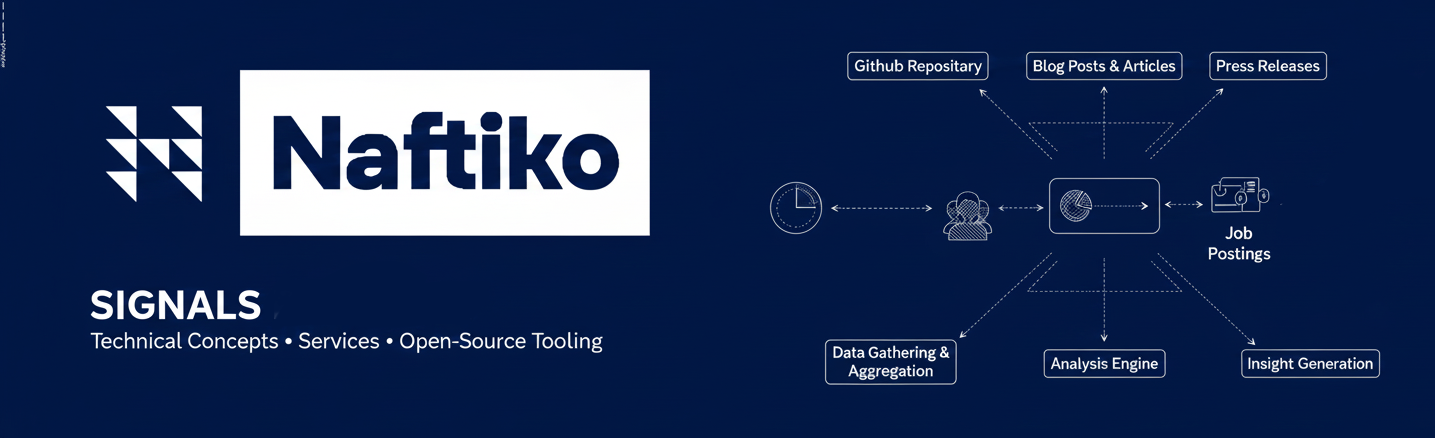

Talk to someone running APIs at a large enterprise and you hear a very different story from the one told at tech conferences. A twenty-year veteran at a major global company will tell you: yes, we still have EDI. Yes, we still have WSDL and SOAP. Yes, we have a lot of Swagger and OpenAPI. We're trying to do more AsyncAPI. MCP is rapidly spreading, and now we are actively developing skills.

Oh, and we have a global business to run, and it has to be stable.

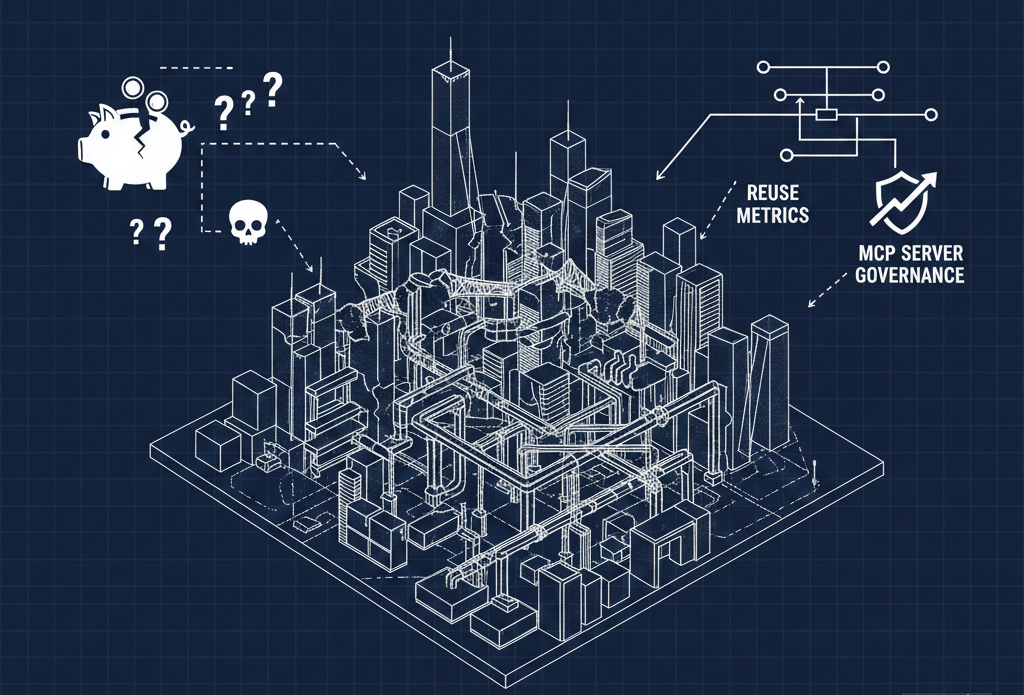

This is the part that gets lost in the excitement. All the governance, compliance, accounting, privacy reviews, security assessments — the stuff that working adults have to deal with — isn't always accounted for when everyone's racing to build the next thing. Companies can only move as fast as their oldest technology. If you're in shipping and logistics and you're still running EDI, agent skills are going to take a while.

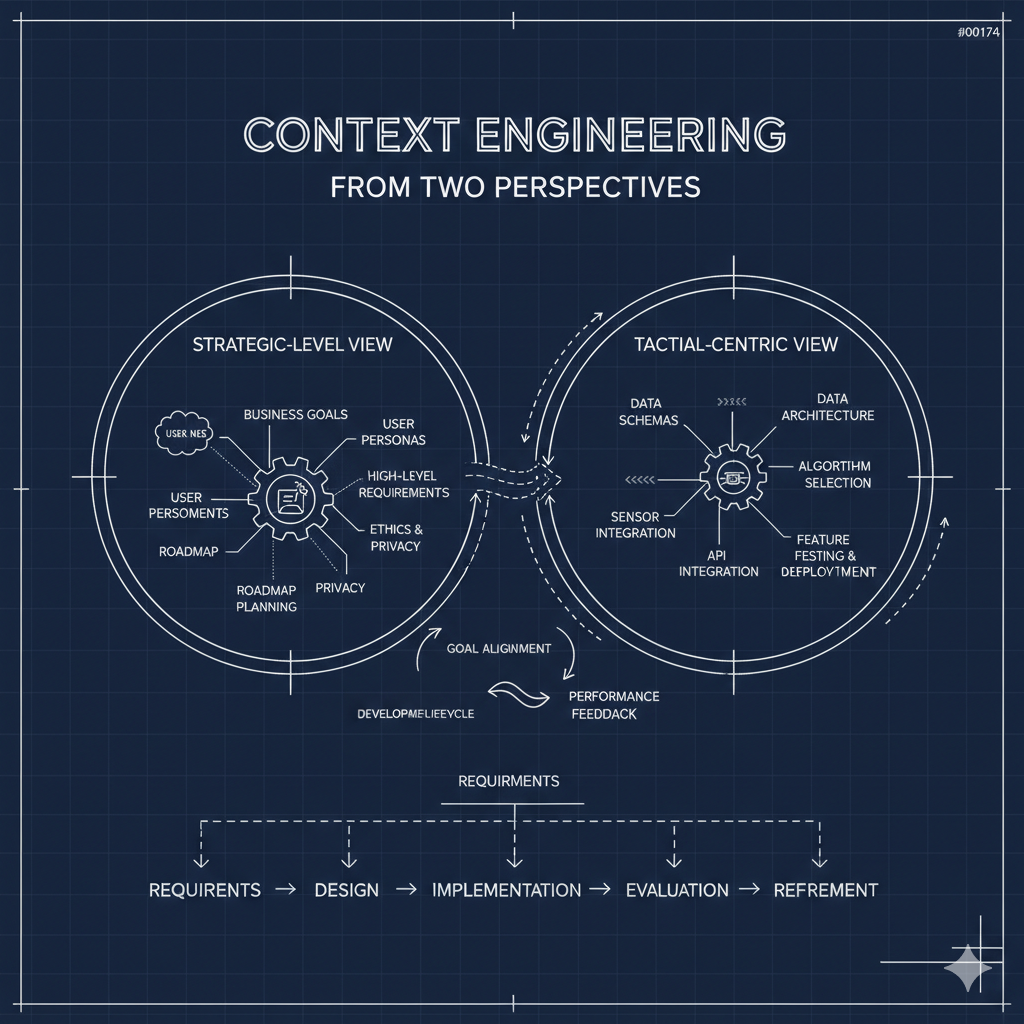

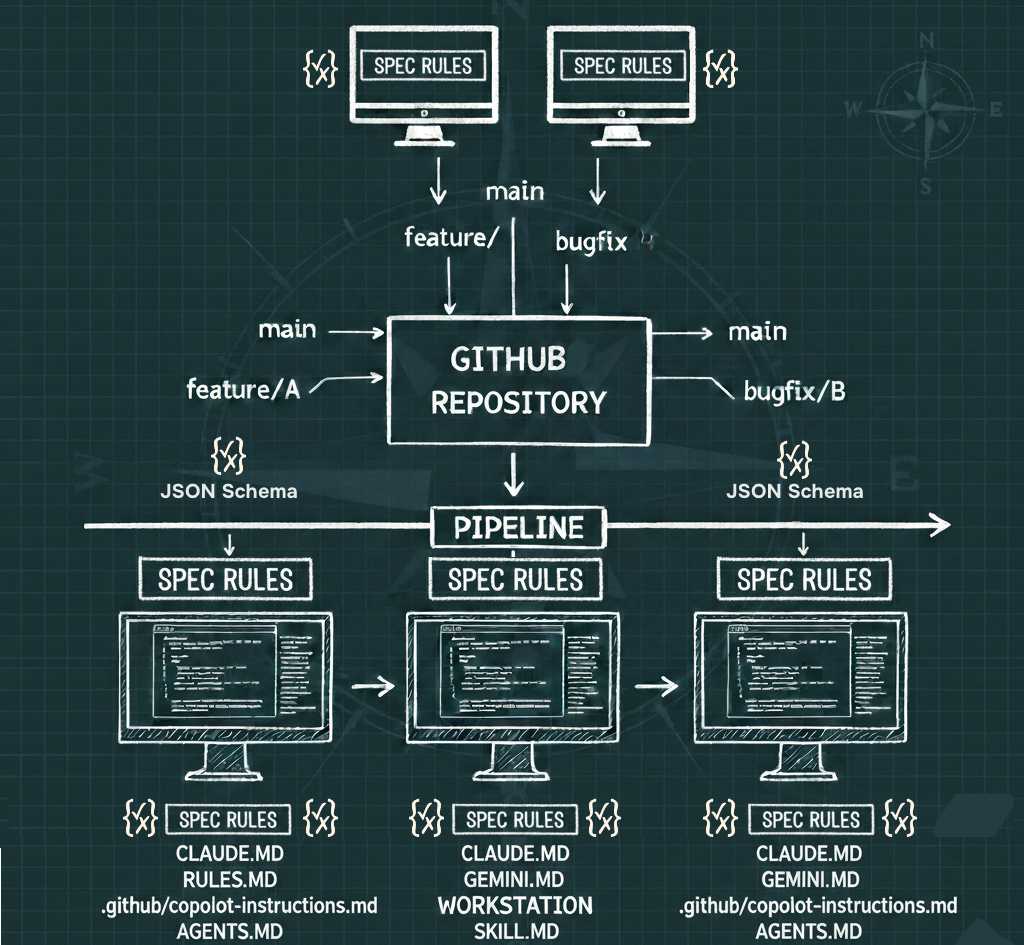

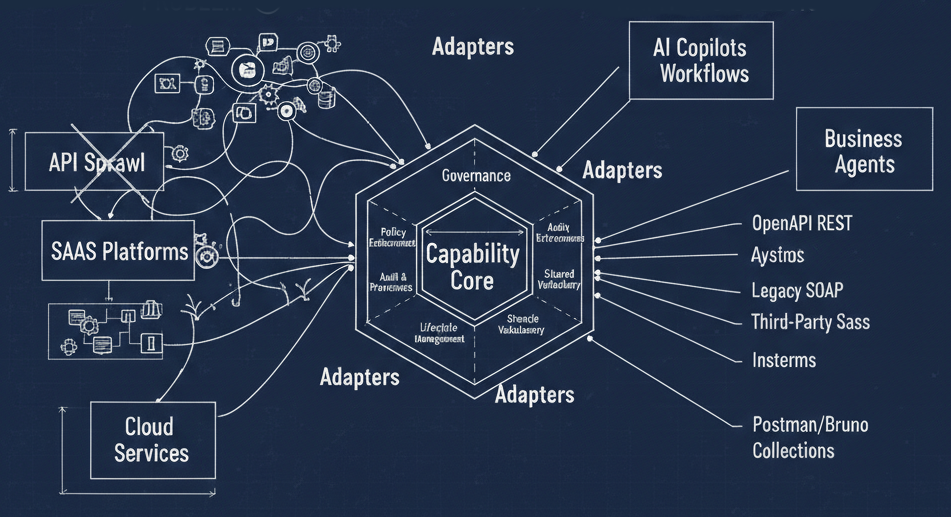

The practical question for most enterprises isn't "which new spec should we adopt?" It's "how do we get an accurate accounting of everything we already have?" That means crawling GitHub and Confluence looking for evidence of APIs in every format — WSDLs, Swaggers, OpenAPI documents — and converting them into a common format so you can see the full picture. Who owns what? Where are the domain boundaries? Which lines of business are producing which APIs?
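A first pass at that inventory can be fairly mechanical. The sketch below assumes the repositories (and any specs exported from Confluence) are already checked out under one local directory. It walks the tree, recognizes Swagger 2.0, OpenAPI 3.x, and AsyncAPI documents by their top-level version key and WSDL 1.1/2.0 files by their root element, and groups the results by owner. The `x-owner` field is a hypothetical convention (real ownership usually has to be inferred from repository metadata), and YAML files are sniffed with regular expressions instead of a YAML parser to keep the sketch dependency-free, which will miss unusual layouts.

```python
import json
import re
import xml.etree.ElementTree as ET
from collections import defaultdict
from pathlib import Path

CANDIDATE_SUFFIXES = {".json", ".yaml", ".yml", ".wsdl", ".xml"}
# (namespace, root element) pairs for WSDL 1.1 and WSDL 2.0.
WSDL_ROOTS = {
    ("http://schemas.xmlsoap.org/wsdl/", "definitions"),
    ("http://www.w3.org/ns/wsdl", "description"),
}
# A top-level version key identifies Swagger 2.0, OpenAPI 3.x, or AsyncAPI.
YAML_MARKER = re.compile(r"^(swagger|openapi|asyncapi)\s*:\s*['\"]?([\d.]+)", re.MULTILINE)
# Hypothetical ownership convention: an x-owner extension field.
YAML_OWNER = re.compile(r"^\s*x-owner\s*:\s*(.+)$", re.MULTILINE)


def classify(path):
    """Return (format, owner) for a recognizable API description, else None."""
    try:
        data = path.read_bytes()
    except OSError:
        return None
    suffix = path.suffix.lower()
    if suffix in {".wsdl", ".xml"}:
        try:
            tag = ET.fromstring(data).tag
        except ET.ParseError:
            return None
        # ElementTree encodes namespaced tags as "{namespace}local".
        ns, _, local = tag[1:].partition("}") if tag.startswith("{") else ("", "", tag)
        return ("WSDL", "unknown") if (ns, local) in WSDL_ROOTS else None
    text = data.decode("utf-8-sig", errors="replace")  # utf-8-sig drops a BOM
    if suffix == ".json":
        try:
            doc = json.loads(text)
        except json.JSONDecodeError:
            return None
        if not isinstance(doc, dict):
            return None
        info = doc.get("info") if isinstance(doc.get("info"), dict) else {}
        for key in ("swagger", "openapi", "asyncapi"):
            if key in doc:
                return (f"{key} {doc[key]}", str(info.get("x-owner", "unknown")))
        return None
    marker = YAML_MARKER.search(text)  # .yaml / .yml: regex sniffing, no YAML parser
    if not marker:
        return None
    owner = YAML_OWNER.search(text)
    return (f"{marker.group(1)} {marker.group(2)}",
            owner.group(1).strip().strip("'\"") if owner else "unknown")


def inventory(root_dir):
    """Map each owner to the (path, format) pairs of API descriptions under root_dir."""
    by_owner = defaultdict(list)
    for path in sorted(Path(root_dir).rglob("*")):
        if path.is_file() and path.suffix.lower() in CANDIDATE_SUFFIXES:
            found = classify(path)
            if found:
                fmt, owner = found
                by_owner[owner].append((str(path), fmt))
    return dict(by_owner)
```

`inventory("./checkouts")` returns a dict mapping each owner to `(path, format)` pairs; converting each entry into a common format is a separate, much larger step.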

Only then can you start thinking about generating the tactical stuff — Postman collections, Agent Skills, MCP servers — from that centralized foundation. The boring work comes first.
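As an illustration of that generation step, here is a minimal sketch that derives MCP-style tool descriptors (`name`, `description`, `inputSchema`) from the operations in an OpenAPI 3.x document held as a Python dict. It is not how any particular generator works: it handles only path and query parameters plus a JSON request body, does not resolve `$ref`, and the `openapi_to_mcp_tools` function, the `body` property, and the sample Orders spec are assumptions of this sketch.

```python
import re

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def openapi_to_mcp_tools(spec):
    """Derive one MCP-style tool descriptor per operation in an OpenAPI 3.x dict."""
    tools = []
    for path, path_item in spec.get("paths", {}).items():
        shared_params = path_item.get("parameters", [])
        for method, op in path_item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level "parameters", "summary", x- extensions
            properties, required = {}, []
            # Operation-level parameters come last, so their schemas override path-level ones.
            for param in shared_params + op.get("parameters", []):
                if param.get("in") not in ("path", "query"):
                    continue  # headers and cookies are left to the HTTP layer
                name = param["name"]
                properties[name] = param.get("schema", {"type": "string"})
                if param.get("required") and name not in required:
                    required.append(name)
            request_body = op.get("requestBody", {})
            json_body = request_body.get("content", {}).get("application/json", {})
            if "schema" in json_body:
                properties["body"] = json_body["schema"]
                if request_body.get("required"):
                    required.append("body")
            slug = re.sub(r"\W+", "_", path).strip("_")
            tools.append({
                # Prefer operationId; otherwise synthesize a name like get_orders_orderId.
                "name": op.get("operationId") or f"{method}_{slug}",
                "description": op.get("summary") or op.get("description") or f"{method.upper()} {path}",
                "inputSchema": {"type": "object", "properties": properties, "required": required},
            })
    return tools


# Hypothetical sample spec used only to exercise the function.
spec = {
    "openapi": "3.1.0",
    "info": {"title": "Orders API", "version": "1.0.0"},
    "paths": {
        "/orders/{orderId}": {
            "parameters": [{"name": "orderId", "in": "path", "required": True,
                            "schema": {"type": "string"}}],
            "get": {"operationId": "getOrder", "summary": "Fetch one order"},
            "delete": {"summary": "Cancel an order"},
        },
        "/orders": {
            "post": {
                "operationId": "createOrder",
                "requestBody": {"required": True, "content": {
                    "application/json": {"schema": {"type": "object"}}}},
            }
        },
    },
}

for tool in openapi_to_mcp_tools(spec):
    print(tool["name"], tool["inputSchema"]["required"])
```

Keying tool names on `operationId` is the main design choice here: when the source spec maintains stable, unique `operationId` values, every generated artifact inherits readable, stable names; when it doesn't, the fallback names are derived from method and path and change whenever a path is renamed.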

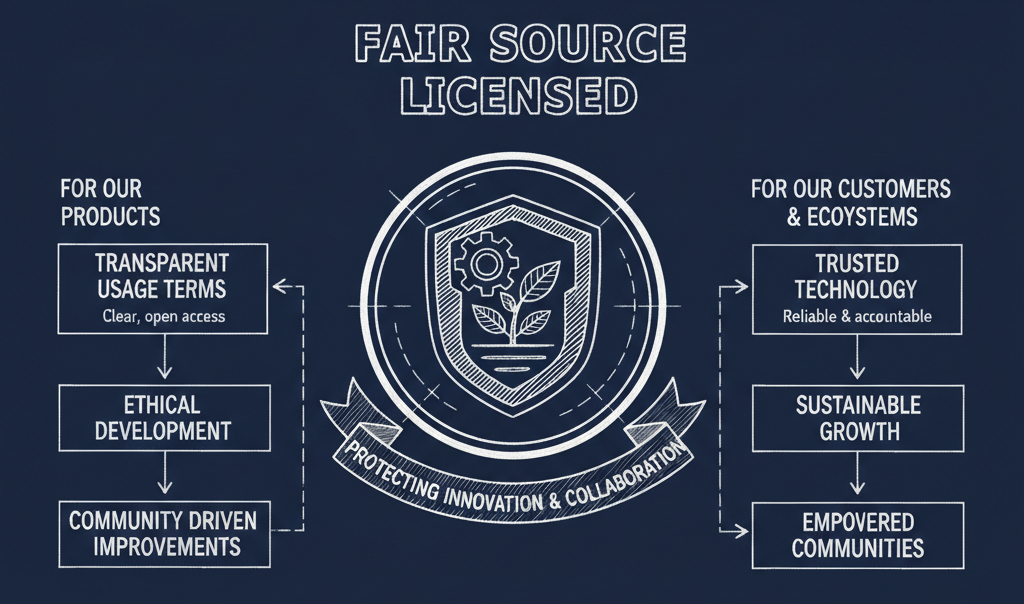

The Power Game

Here's the thing that doesn't get said enough: the complexity isn't an accident. Every wave of API specifications is also a wave of power grabs. Specs are how influence is accumulated, negotiated, and seized in the digital economy.

MuleSoft did it with RAML. Postman did it with Collections. Anthropic is doing it with MCP. Each time, a company creates or champions a specification, builds tooling around it, and uses that position to capture market share while things are chaotic and confusing. Move fast and break things isn't really about innovation — it's about grabbing as many digital bits as you can while everything is messy.

Very few people truly understand the full landscape of API specifications. That asymmetry is itself a source of power for those who do. The complexity serves them.

Where Does That Leave Us?

If you're building something new and you don't have legacy baggage, you might reasonably skip straight to skills or MCP and build natively for the AI agent world. You'll still need to think about governance, security, compliance, and cost — but you won't be weighed down by decades of accumulated technical debt.

If you're a large enterprise (and most of the economy is large enterprises), the advice is different. Start with an inventory. Use OpenAPI, AsyncAPI, and JSON Schema as your common language to map what exists. Figure out who owns what. Get the accounting right. Then generate the tactical, transactional interfaces from that foundation.

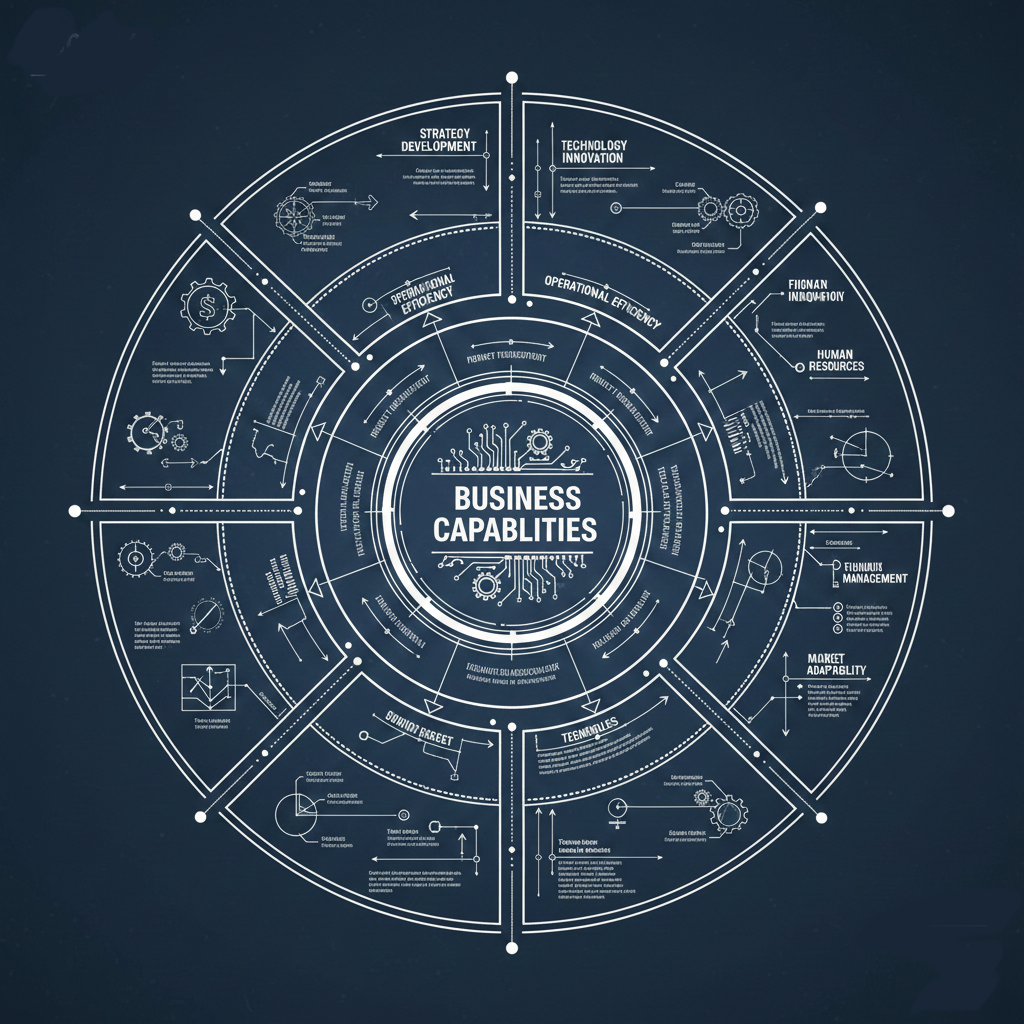

Either way, the question that matters most isn't which spec to use. It's the one that too many organizations still aren't asking: what business outcome are we actually trying to achieve?

Because right now, there are companies pouring millions into AI-enabling their systems without a clear answer to that question. And somewhere, a customer's number one request is: can we turn all this AI stuff off?

We live in an XKCD cartoon. The least we can do is make sure we're drawing a good cartoon.