Every enterprise now needs an AI infrastructure opinion, whether it likes it or not. The arrival of large language models didn't just introduce a new tool; it forced organizations into a reckoning with their own readiness. How clean is your data? How portable is your stack? Who owns the models you depend on? These aren't theoretical questions anymore. They're the foundation that determines what you can build next.

A Build-vs-Buy Decision That Arrived Overnight

When LLMs broke into the mainstream, the timeline for strategic planning collapsed. Organizations that had spent years gradually modernizing their data pipelines were suddenly asked to have a position on fine-tuning, prompt engineering, and model hosting — often before they'd finished migrating to the cloud.

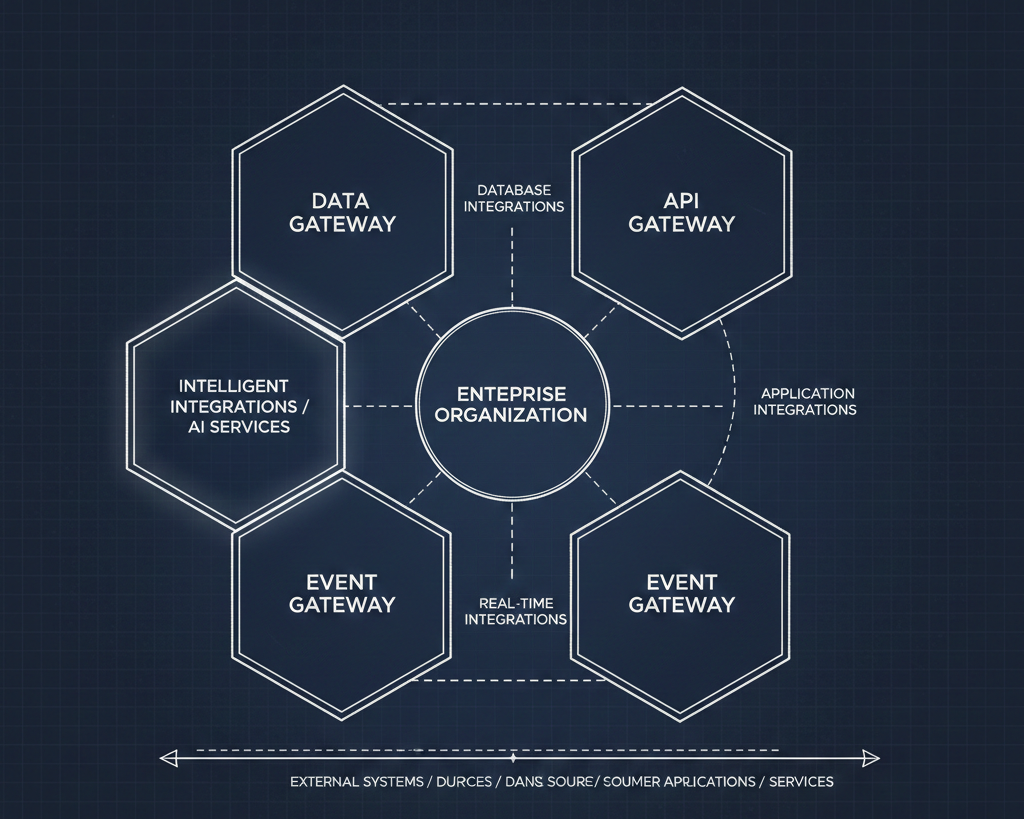

The core challenge was never really about the models themselves. It was about data. LLMs are only as useful as the data you can feed them, and most enterprises discovered quickly that their data was scattered, inconsistent, or locked behind systems that were never designed to serve an AI layer. The arrival of generative AI turned data strategy from a back-office concern into a board-level conversation.

Then open-source models changed the math again. Projects like Llama and Mistral made self-hosting viable for organizations with the engineering capacity to support it. Suddenly the decision wasn't just "which vendor do we go with" — it was "do we need a vendor at all?" Questions about data sovereignty, cost control, and vendor lock-in moved from the periphery to the center of every infrastructure discussion.

The Waves Reshaping Enterprise AI

Three overlapping waves have driven this shift, each building on the last.

Large language models established the foundational reasoning and generation layer for modern AI applications. Trained on massive corpora of text and code, LLMs made it possible for organizations to summarize, translate, plan, and synthesize code at a scale that was previously impractical. They became the substrate — the thing everything else gets built on top of.

Generative pre-trained transformers (GPTs), the model family behind products such as ChatGPT, refined that substrate into something more actionable. GPTs demonstrated that a model pre-trained on broad datasets could be fine-tuned for specific, high-value tasks, producing coherent and context-aware output. They became the general-purpose engines that enterprises started integrating into customer support, internal tooling, content workflows, and beyond.

Open-source LLMs then democratized the entire space. By making model weights, and in some cases architectures and training code, publicly available, open-source projects enabled self-hosting, deep customization, and transparency. They gave enterprises a real alternative to proprietary platforms, and in doing so they created an innovation ecosystem that moves faster than any single vendor can.

No wave replaced the one before it. They compounded. And the enterprises paying attention are the ones building strategies that account for all three simultaneously.

Reading the Signals

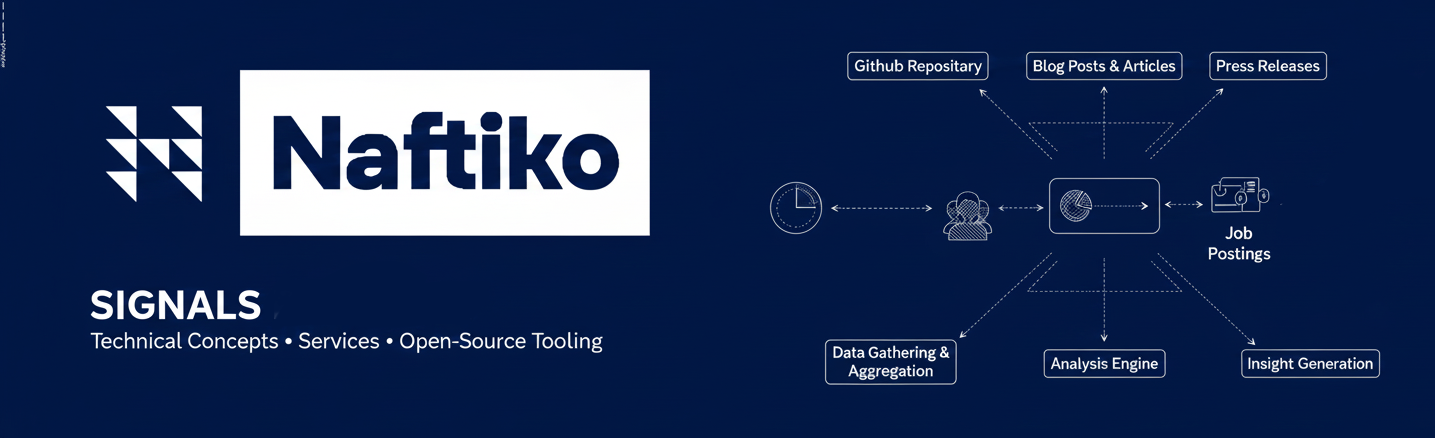

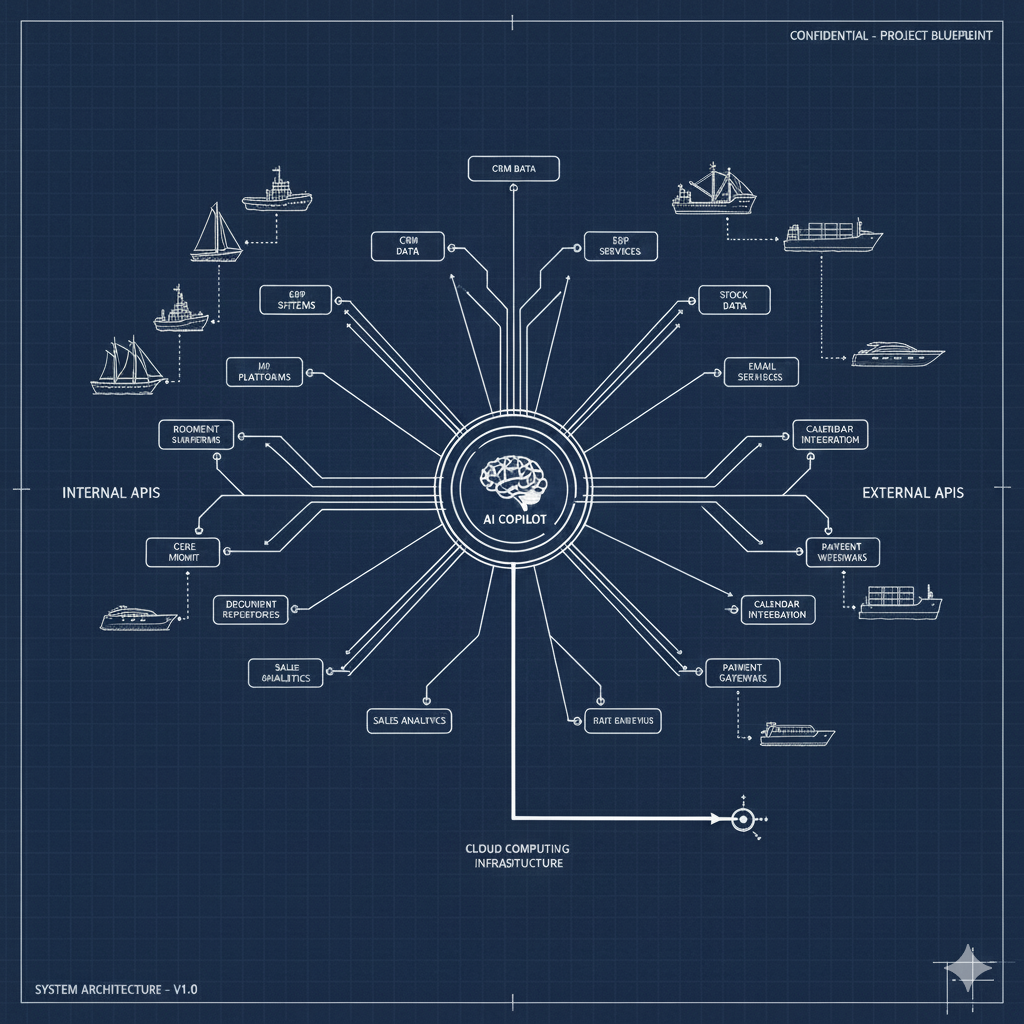

Understanding where a company sits in this landscape requires more than asking whether it has deployed a chatbot. At Naftiko, we track five interconnected signals that reveal the depth and maturity of an organization's AI foundation.

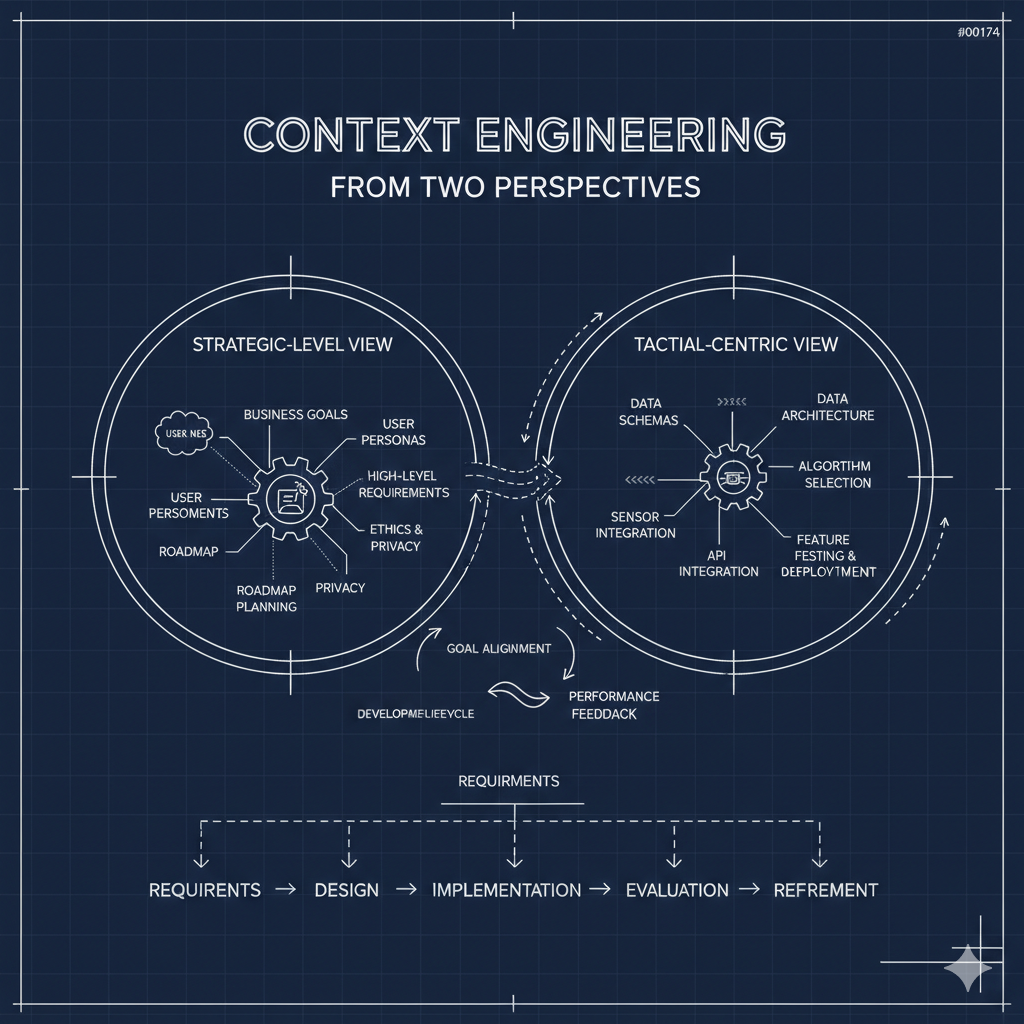

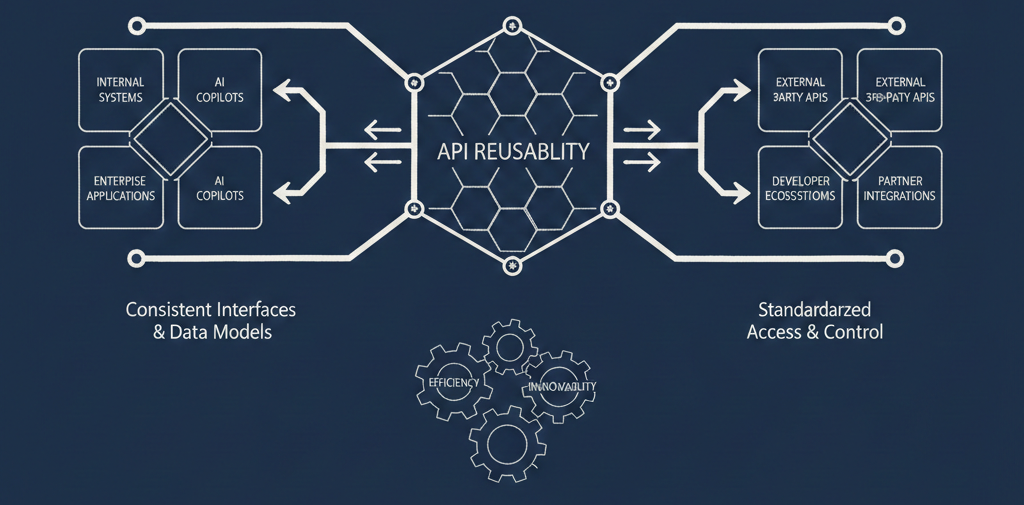

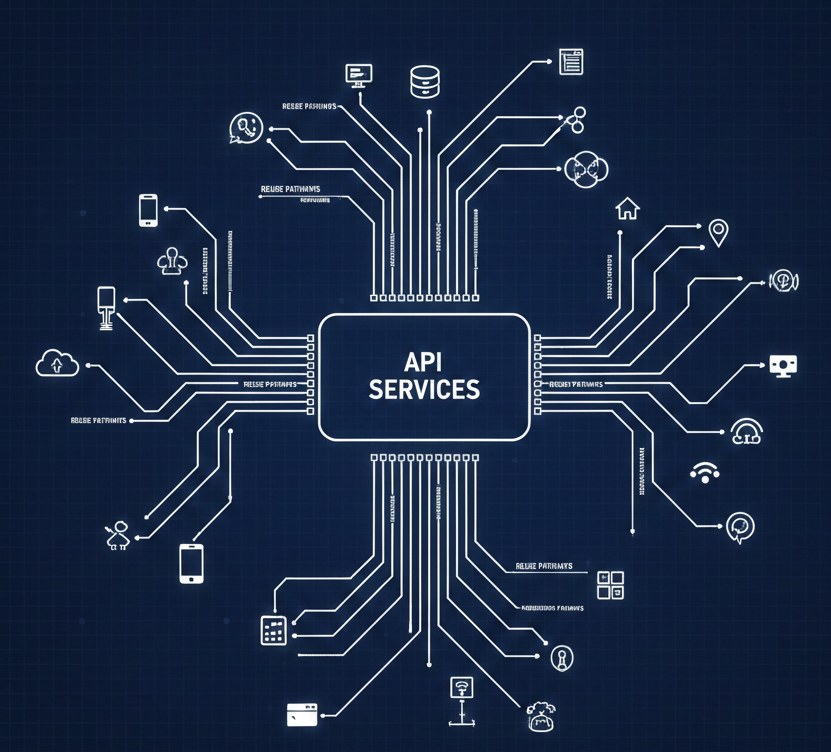

Artificial intelligence adoption is the most visible signal, but it's also the most nuanced. It's not enough to know that a company is using ChatGPT. What matters is the trajectory: is it experimenting with Model Context Protocol (MCP) integrations, investing in agentic automation, or still treating AI as a novelty? The gap between surface-level adoption and structural investment is where the real story lives.

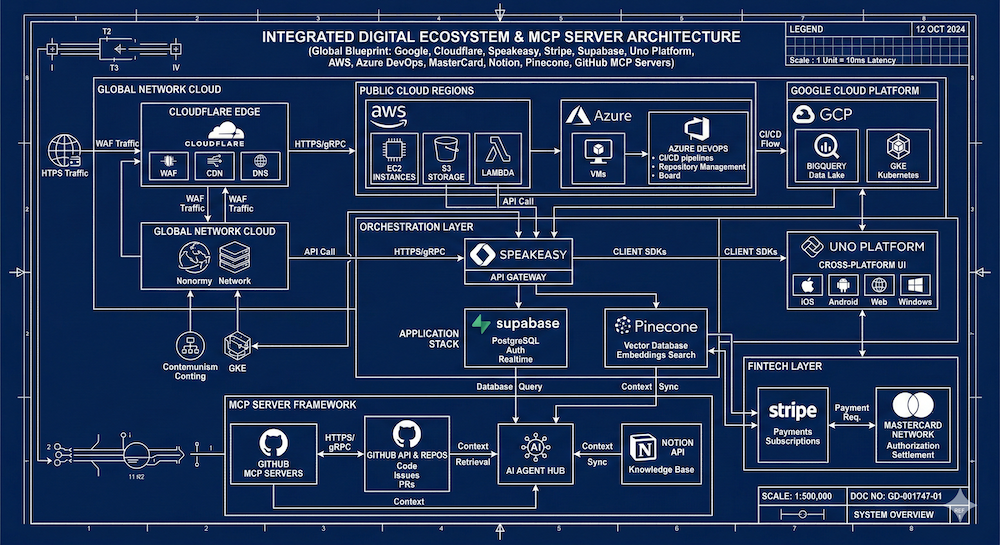

Cloud infrastructure tells you what's possible. Which clouds an organization uses is just the starting point. The deeper question is how they manage the technical and business dimensions of their cloud strategy — whether they've achieved real multi-cloud flexibility or are operationally locked into a single provider. Cloud posture directly constrains AI ambition.

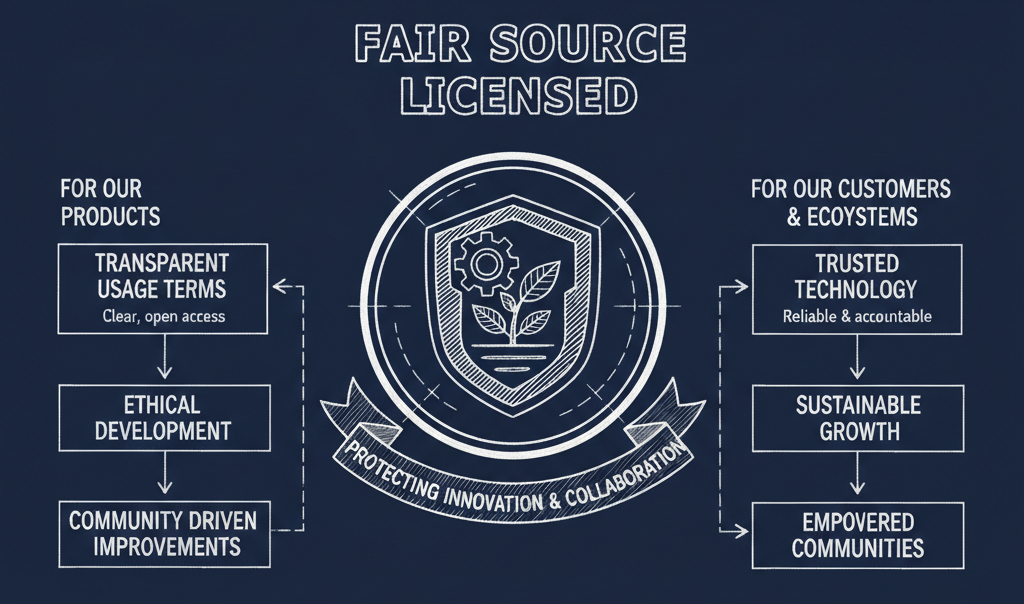

Open-source engagement signals both capability and philosophy. Organizations that actively use open-source tools have more flexibility. Those that contribute back to the ecosystem tend to have stronger engineering cultures. And those investing in inner source — applying open-source principles to internal development — are often the ones best positioned to move quickly when the landscape shifts.

Programming languages offer a window into team composition and technical diversity. The languages in use across an organization reveal how services are structured, what tooling is available, and how adaptable the engineering organization is. A company running five languages across twenty services faces different AI integration challenges than one standardized on two.

Code and dependencies round out the picture. The libraries, frameworks, and SDKs in active use determine how easily an organization can plug into new AI capabilities — or how much refactoring stands between them and their next integration. This is where strategy meets implementation.
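
To make the dependency signal concrete, here is a minimal sketch of how one might check a Python service's `requirements.txt` for AI SDKs already in use. It is an illustration only, not a description of how Naftiko gathers this signal. The watchlist `AI_PACKAGES` and the helper names `parse_requirement_name` and `find_ai_dependencies` are assumptions made up for this example; the package names in the watchlist are real PyPI projects, but which ones belong on the list is a judgment call.

```python
import re
from typing import List, Optional

# Hypothetical watchlist. These are real PyPI package names, but the
# selection itself is an assumption made for this example.
AI_PACKAGES = {"openai", "anthropic", "transformers", "langchain", "llama-index"}


def parse_requirement_name(line: str) -> Optional[str]:
    """Return the normalized package name from one requirements.txt line.

    Returns None for blank lines, comments, and option lines such as
    "-r base.txt".
    """
    line = line.split("#", 1)[0].strip()  # drop trailing comments
    if not line or line.startswith("-"):
        return None
    # The name is the leading run of letters, digits, ".", "_" and "-";
    # version specifiers and extras (e.g. "[torch]>=4.40") come after it.
    match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
    if match is None:
        return None
    # Normalize as PyPI does: lowercase, runs of "-", "_", "." become "-".
    return re.sub(r"[-_.]+", "-", match.group(0)).lower()


def find_ai_dependencies(requirements_text: str) -> List[str]:
    """Return the sorted, de-duplicated AI packages found in the text."""
    names = (parse_requirement_name(raw) for raw in requirements_text.splitlines())
    return sorted({name for name in names if name in AI_PACKAGES})


if __name__ == "__main__":
    sample = "openai>=1.0\nrequests==2.31\ntransformers[torch]>=4.40\n"
    print(find_ai_dependencies(sample))
```

A real inventory would also need to cover lock files, other package ecosystems, and transitive dependencies. The point of the sketch is only that this signal can be read directly from files a team already maintains.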

The Foundation Sets the Constraints

The choice an enterprise makes at this layer — proprietary, open-source, or hybrid — isn't just a technology decision. It's a constraint that propagates upward through every application, workflow, and product built on top of it.

Go fully proprietary, and you trade flexibility for speed and support. Go fully open-source, and you gain control but take on operational complexity. Most organizations will land somewhere in the middle, and the ones that do it well will be the ones that made the choice deliberately rather than by default.

The foundational layer isn't glamorous. It doesn't demo well. But it's the thing that determines whether an enterprise's AI investments compound into real capability — or collapse under the weight of technical debt they didn't see coming.

The enterprises getting this right aren't necessarily the ones moving fastest. They're the ones who understand that what you build on matters as much as what you build.