I recently sat down with Jennifer Riggins — freelance journalist, tech storyteller, and longtime collaborator — for the latest episode of the Naftiko Capabilities podcast. Jennifer has been covering developer experience and platform engineering for as long as I've been doing API Evangelist, and she's one of the people I turn to when I want an honest, human-centered read on what's happening in our industry.

What came out of our conversation was equal parts hopeful and sobering.

AI Is an Amplifier — For Better and Worse

Jennifer opened with something I think deserves more attention: AI is genuinely expanding who gets to participate in creating technology. Nurses, educators, domain experts of all kinds can now spin up prototypes and engage meaningfully in software creation. That matters. Tech has long been a gated community, and anything that lets subject matter experts shape the tools they actually use is a win for everyone.

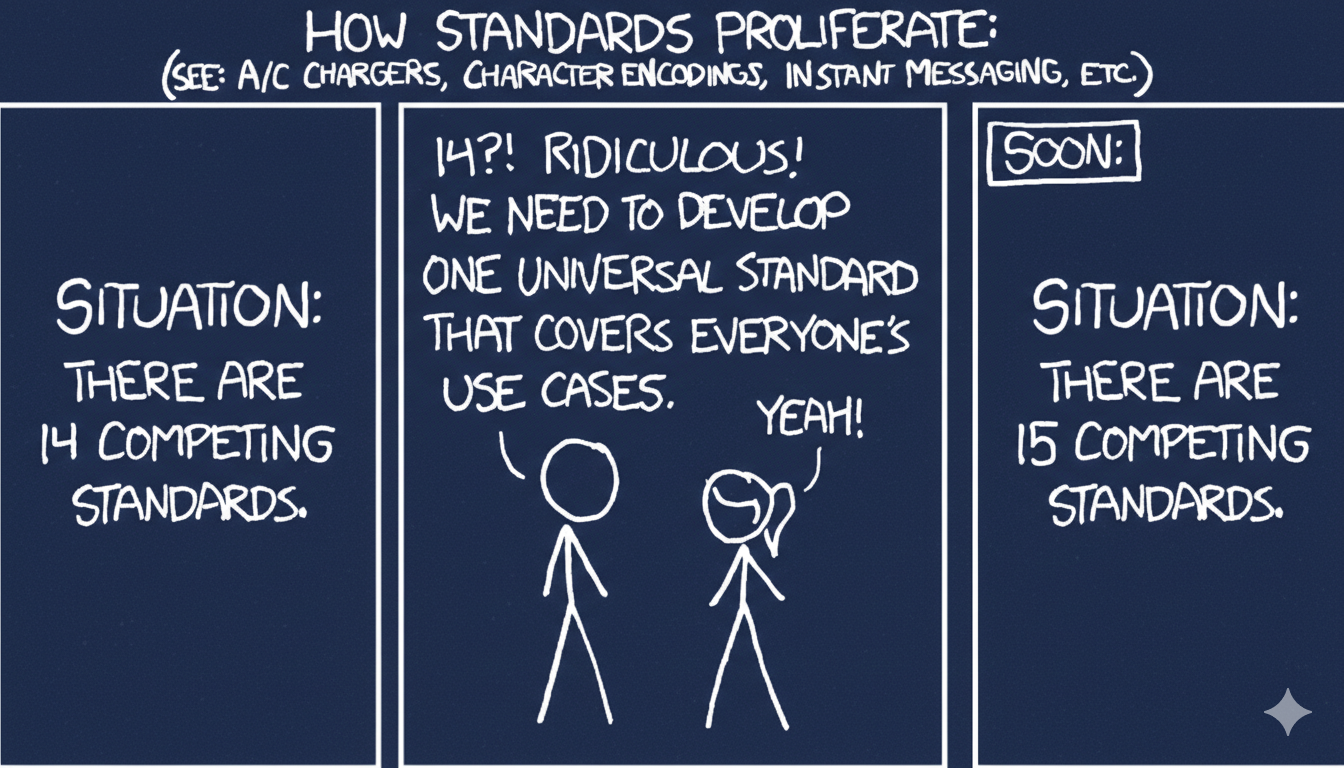

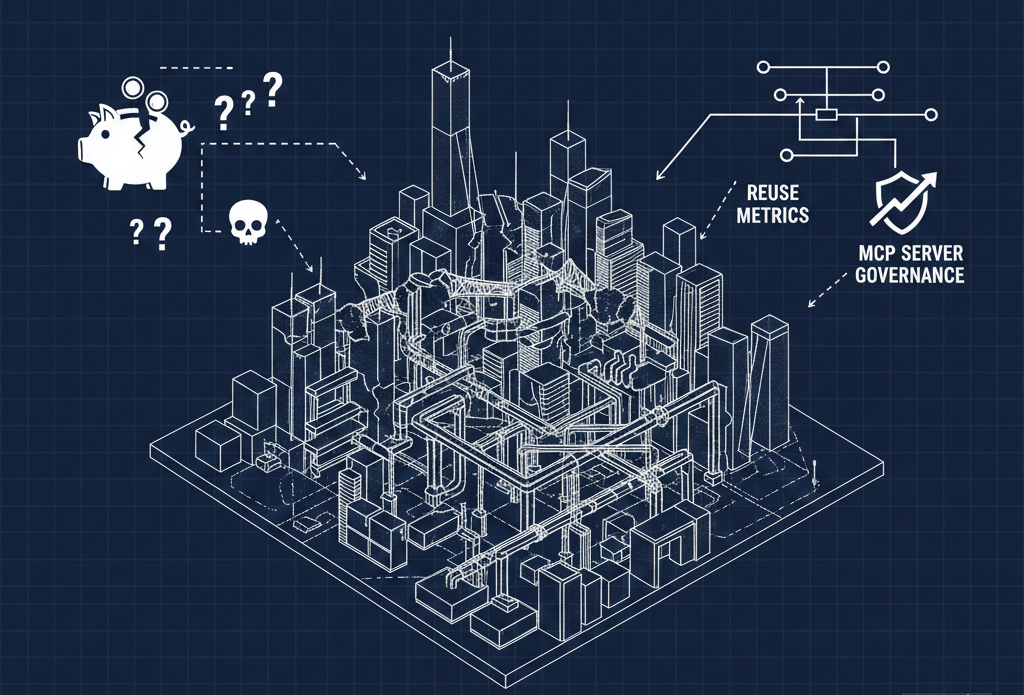

But as the 2025 DORA report put it, AI is an amplifier. If your best practices are solid, AI makes things better. If they're not, it accelerates the mess. Jennifer sees security, guardrails, and established engineering practices falling through the cracks as organizations rush to adopt AI without the foundations to support it.

Her take? These are solvable problems — and platform engineering is the conduit. Guardrails work. Sometimes gates are necessary. The tooling and the thinking already exist; the question is whether organizations will actually use them.

The Employment Crisis Nobody Wants to Talk About

Where Jennifer gets more worried — and where our conversation got heavier — is employment. She pointed to 900,000 young people currently unemployed in the UK, roughly the population of the country's third-largest city. The U.S. picture isn't better, and the at-will employment structure makes things even more precarious for American workers.

People who were making excellent money in tech a couple of years ago are now struggling. LinkedIn has become a stream of layoff announcements mixed with ICE raid anxieties from technologists in cities like Minneapolis and Chicago. Jennifer's core point landed hard: if we're going to use AI to replace parts of people's jobs, we have to build a human safety net.

Junior Developers Still Matter

We talked about what this means for the next generation. New developers — the ones Jennifer calls "baby developers" who are truly AI-native — are entering the field trusting AI output without always understanding what they're looking at. That's a dangerous pattern.

Jennifer is a vocal advocate for junior developers, and her logic is straightforward: how else do you make senior developers? You still need to train people. You still need mentorship. Her suggestion is something like a "throuple" model for pair programming — two humans and an AI working together, combining domain expertise, technical skill, and machine capability.

Platform Engineering Is the Bridge

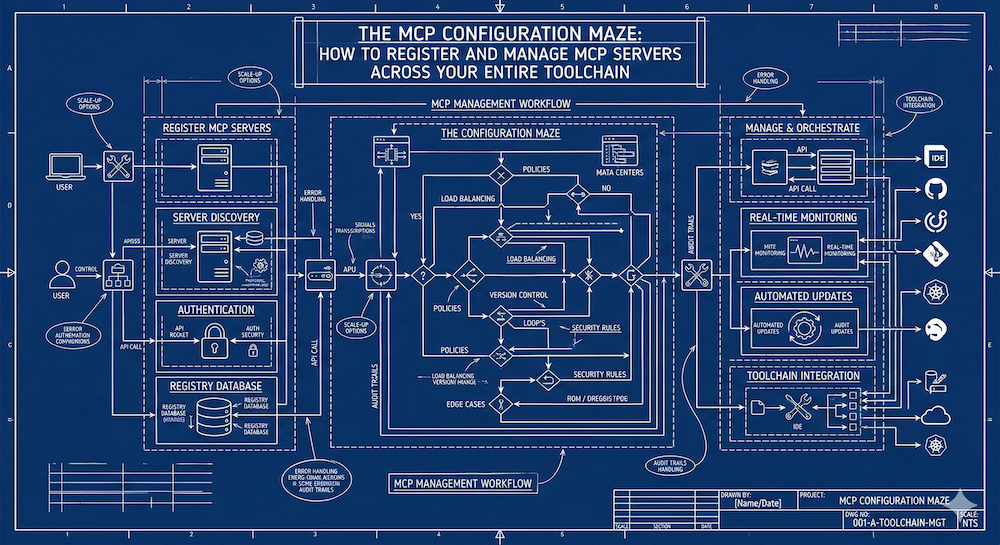

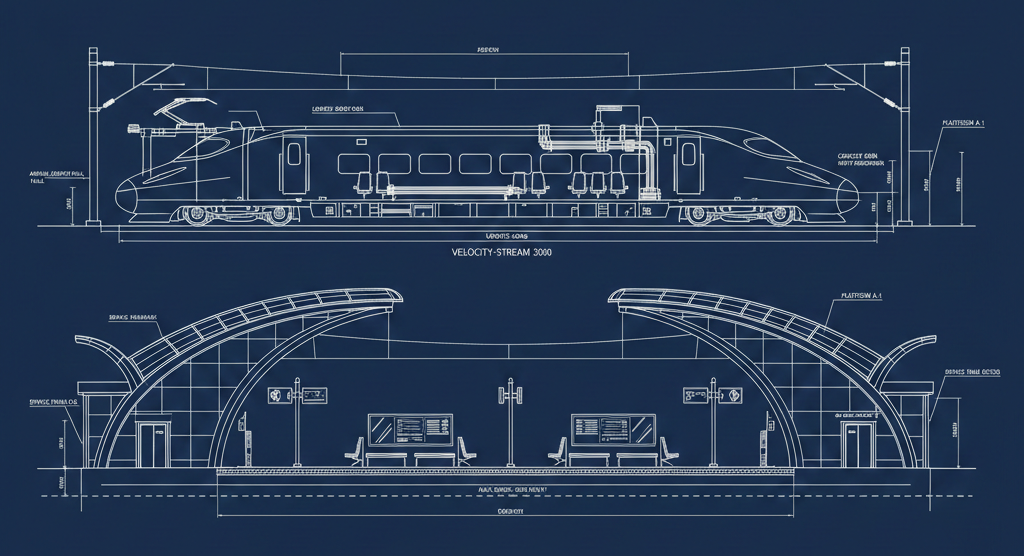

The most forward-looking part of our conversation centered on how platform engineering and AI naturally fit together. Both aim to eliminate tedium from developers' work. Both need guardrails. Both can wander off the golden path if left unchecked — or, as Jennifer put it with a Wizard of Oz reference, they can "get high in a poppy field or get snatched up by flying monkeys."

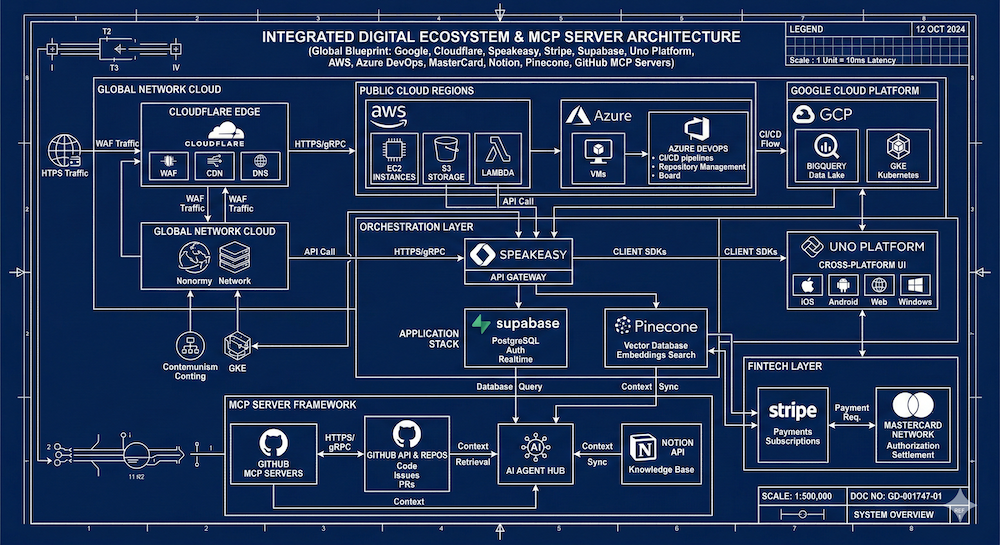

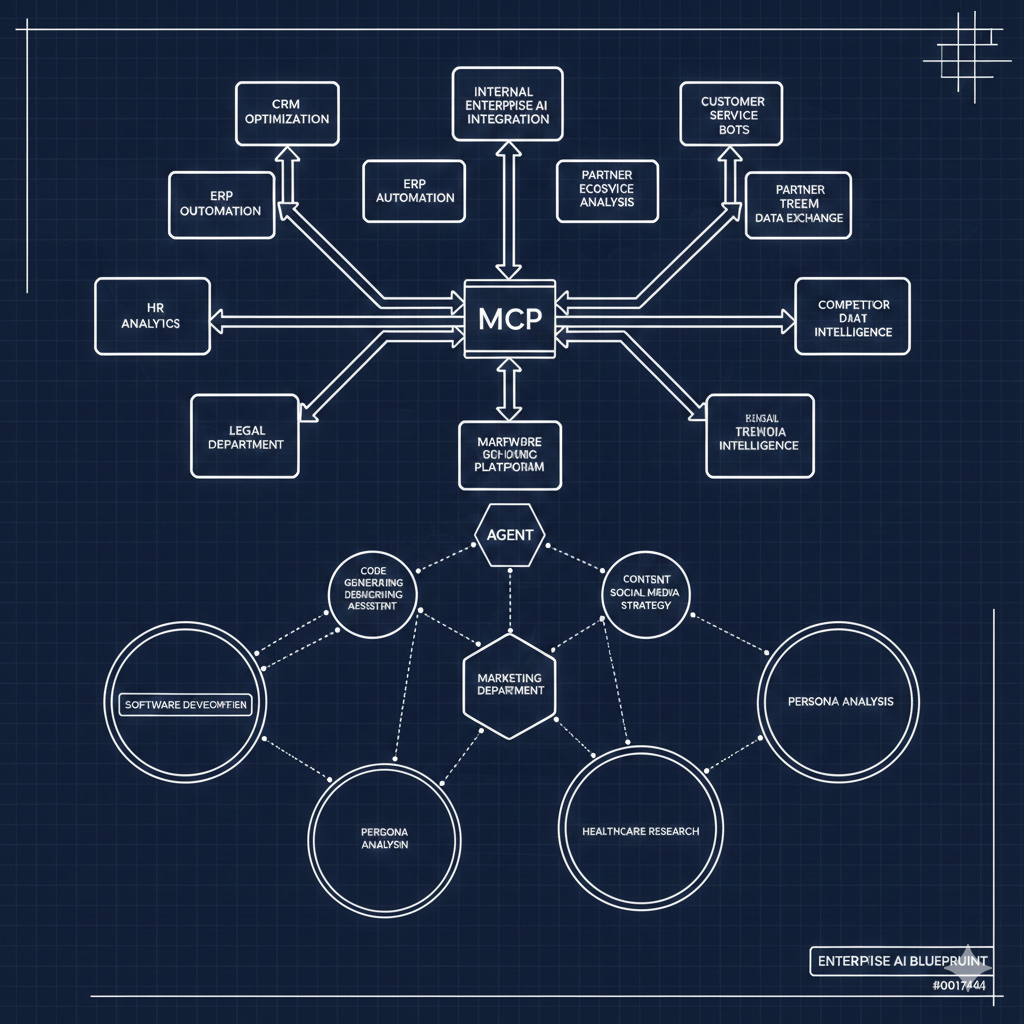

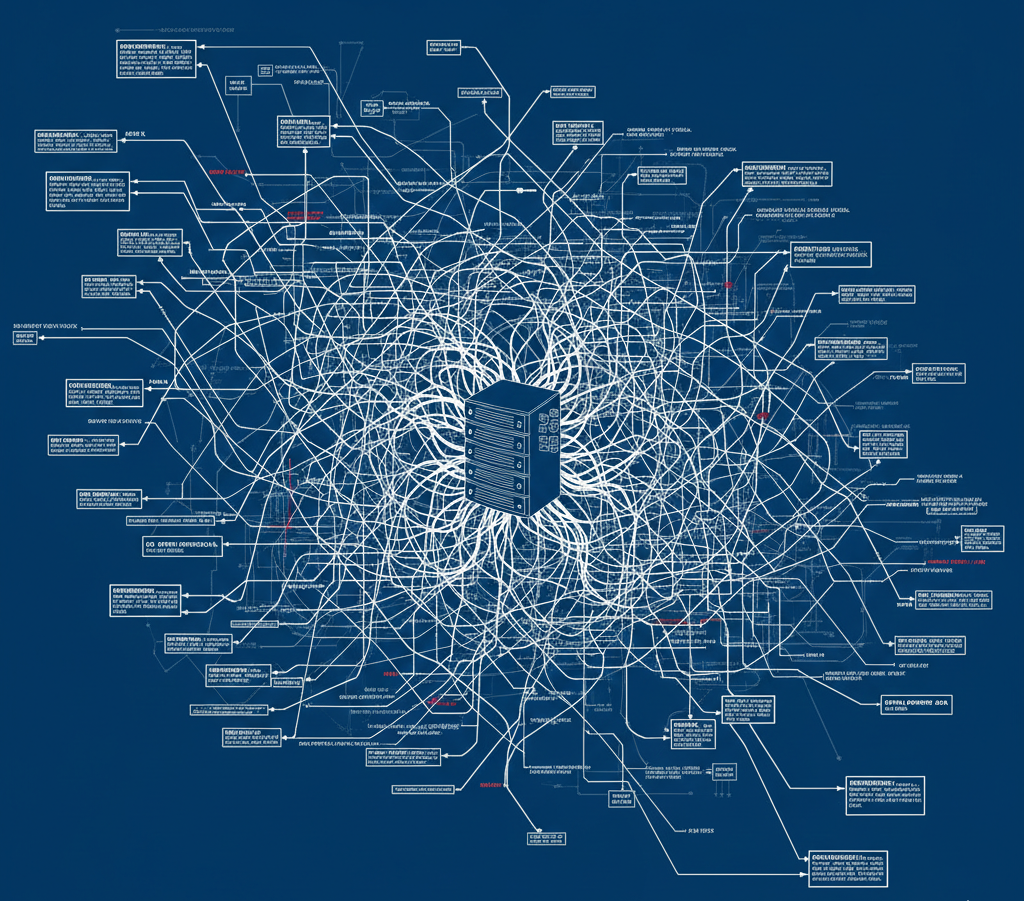

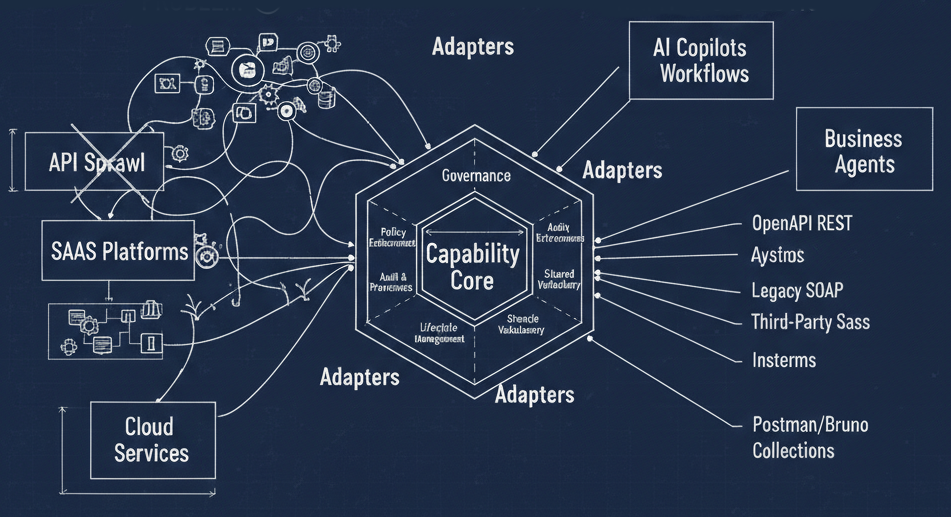

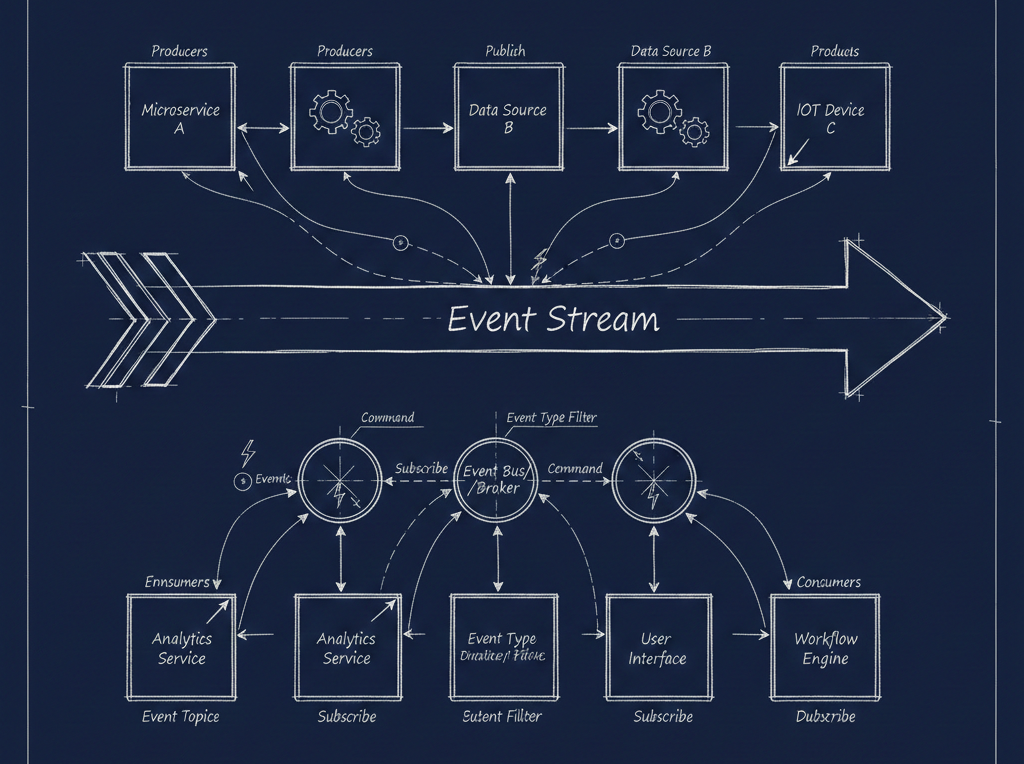

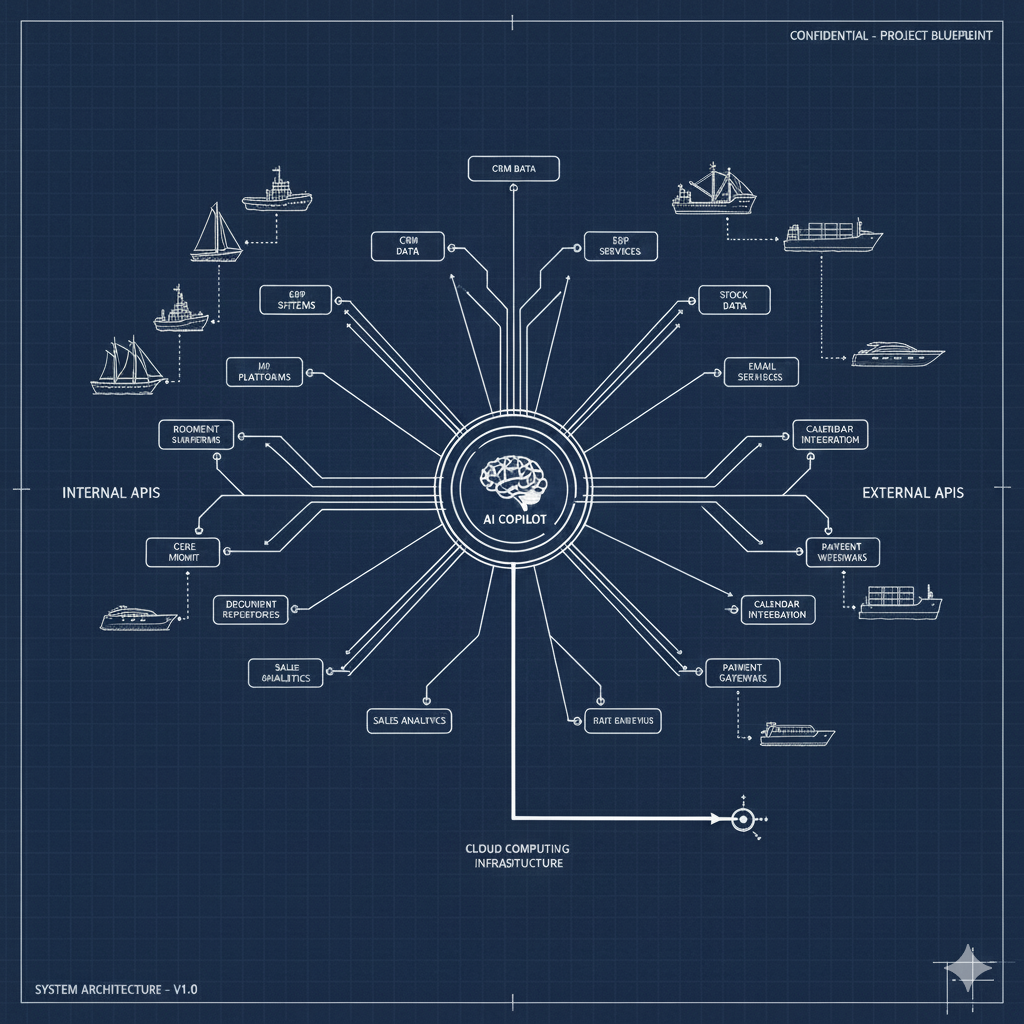

But here's the reality check: enterprise adoption of agentic AI is still nascent. Jennifer recently spoke with Rachel Laycock, CTO of Thoughtworks, who confirmed that agent swarms just aren't happening at the enterprise level yet. The fundamentals (data integration, breaking down silos, connecting legacy systems with cloud-native ones) still aren't solved. And no amount of autonomous agents will fix that if the underlying data and integration layer is broken.

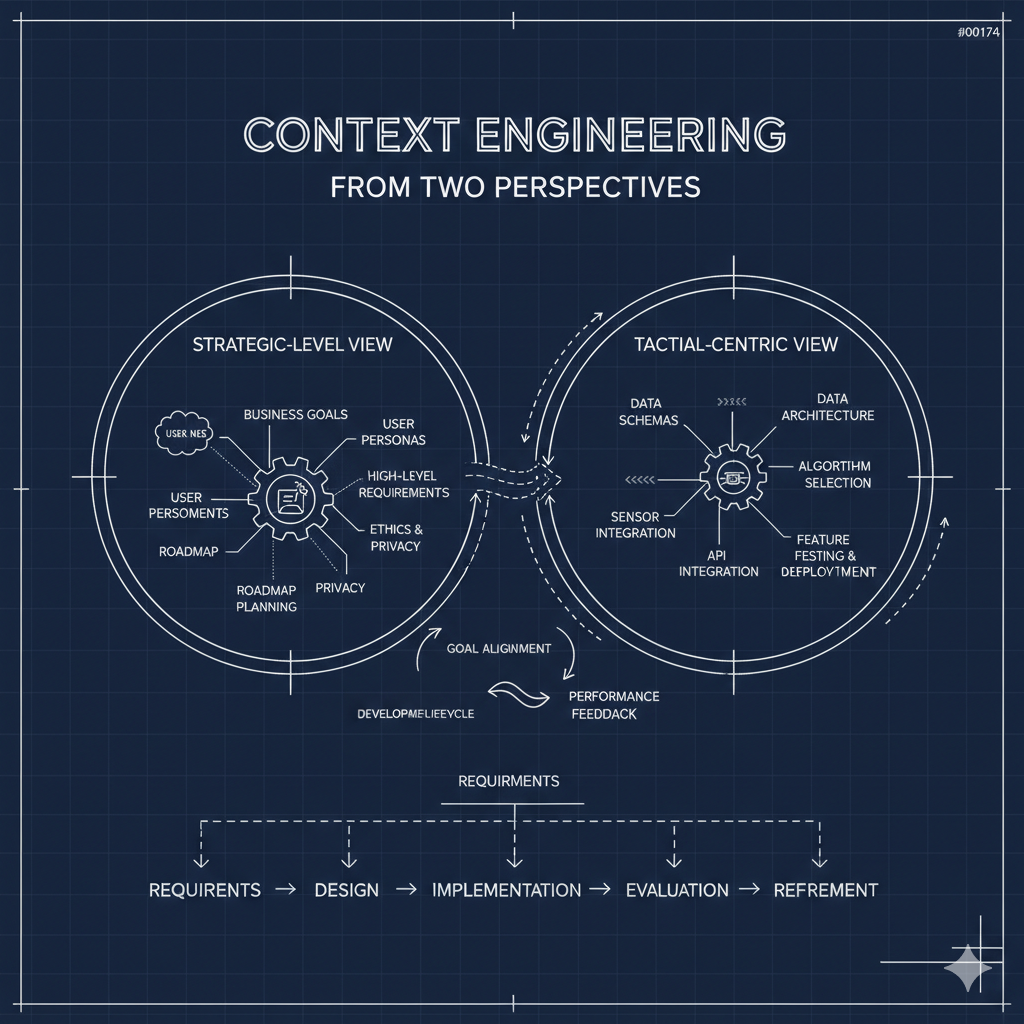

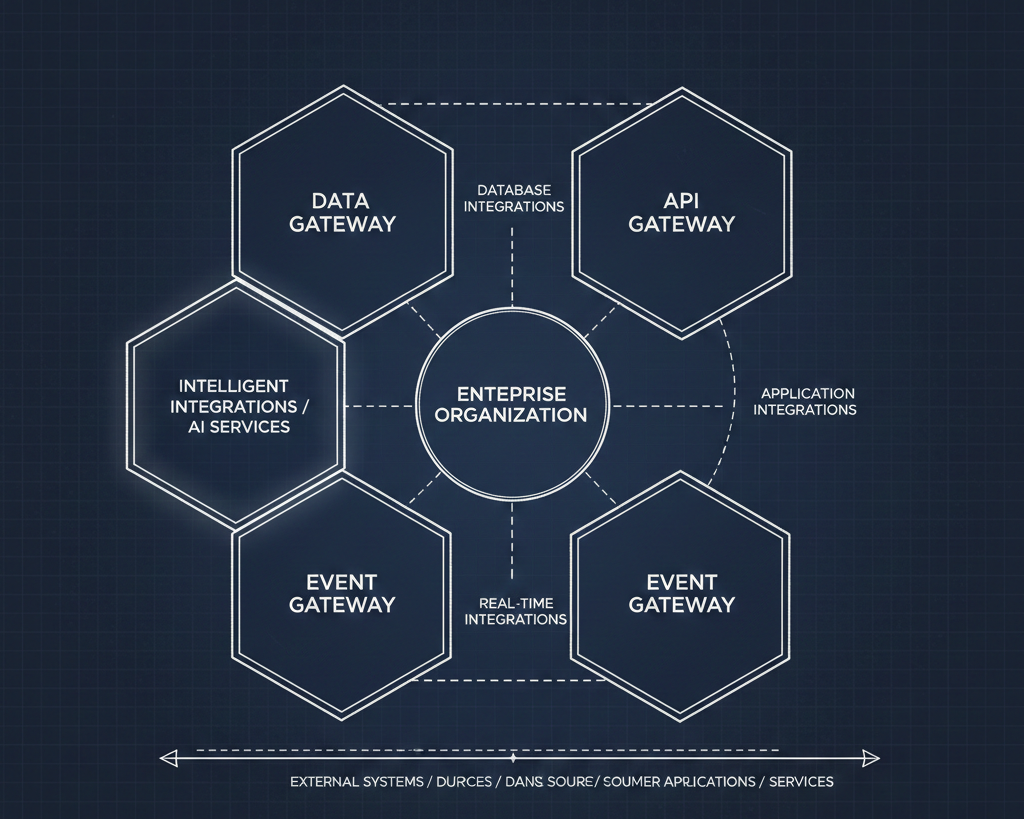

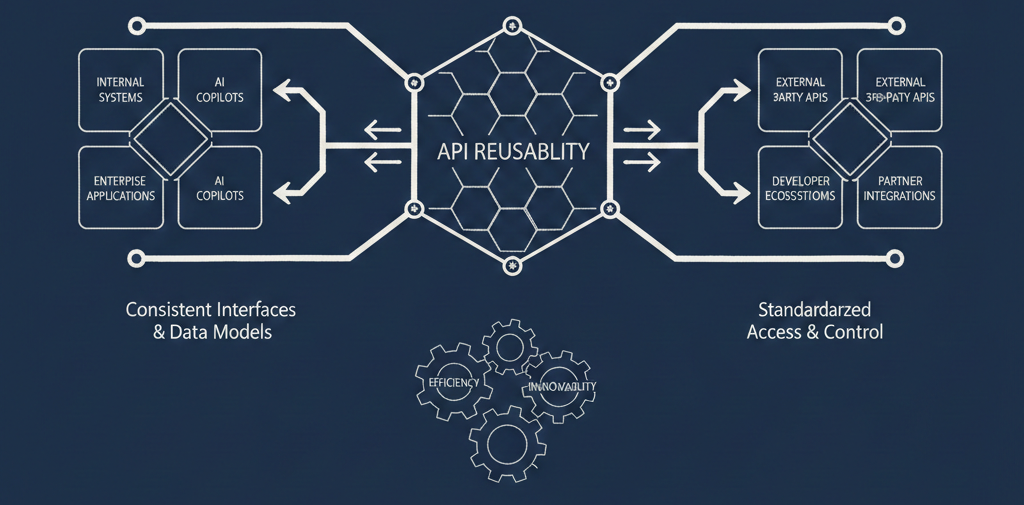

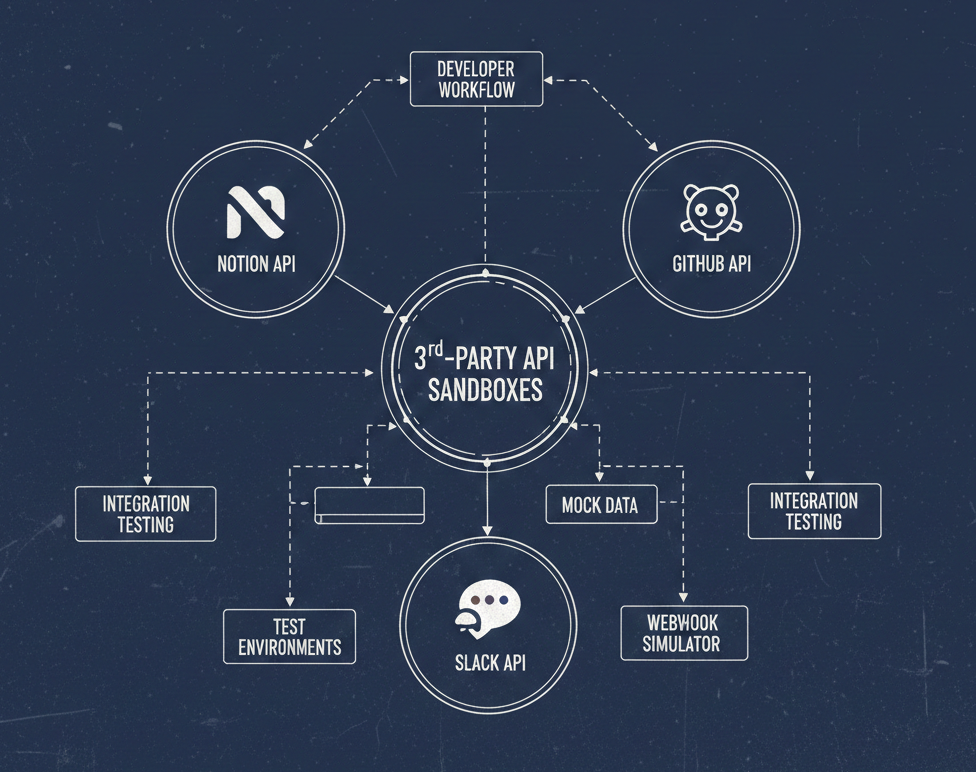

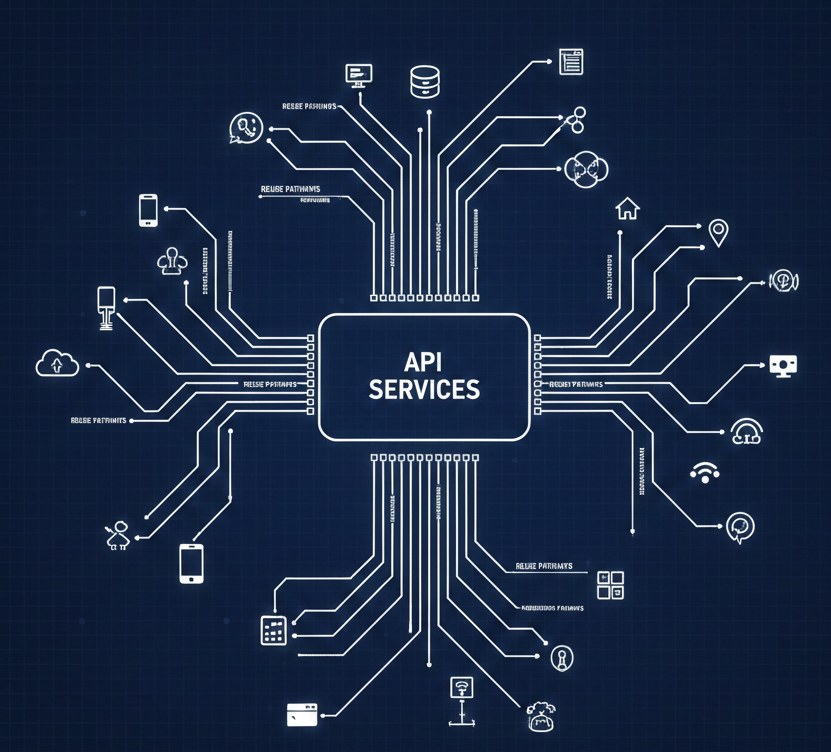

This is where platforms shine. A good internal platform creates a common language across departments and technology stacks. It bridges the mainframe and the cloud-native. And with a conversational AI layer on top, it lets people self-serve and ask better questions of the systems and each other.

Jennifer compared it to her own experience using AI to prepare for doctor's appointments — not for diagnosis, but to organize her thoughts, surface good questions, and make the most of limited time with a human expert. That's the model: AI as a tool for better human conversations, mediated through platforms that provide structure and guardrails.

Where This Leaves Us

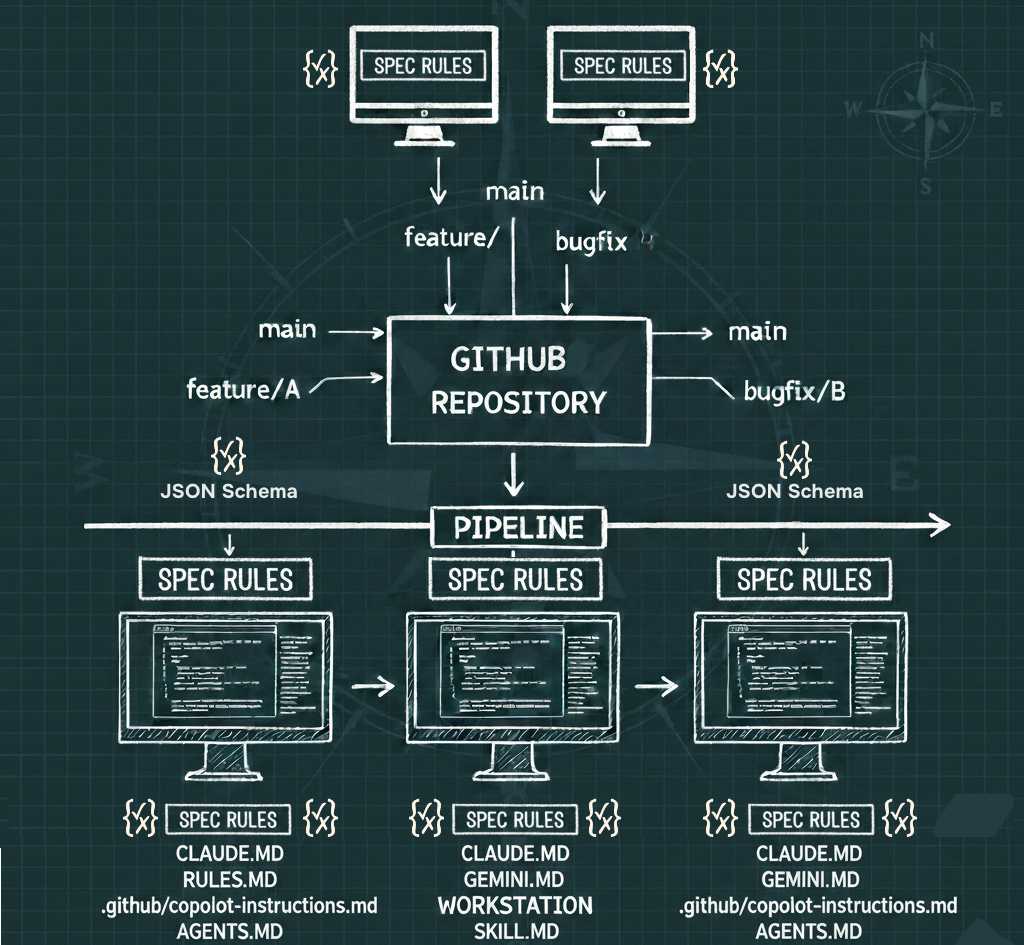

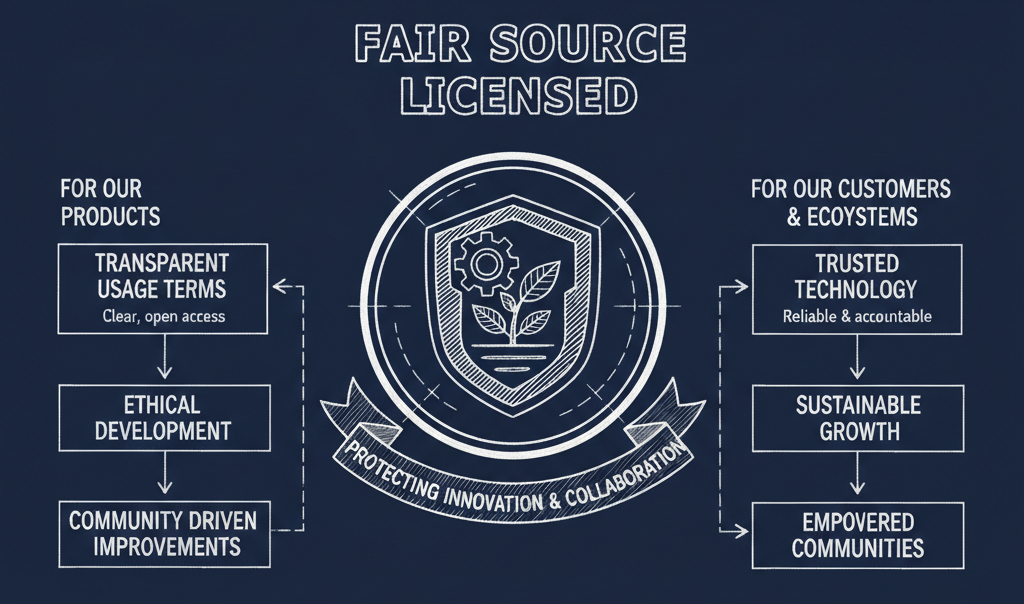

I walked away from this conversation feeling more grounded about the path forward. The platform work our industry has invested in isn't wasted — it's the foundation that makes responsible AI adoption possible. Continuous testing, version control, progressive delivery, CI/CD — all of it still matters, maybe more than ever.

But we can't ignore the human cost of this transition. Writing code was never the bottleneck, as Jennifer reminded me. Documentation might finally be getting better thanks to AI (after 15 years of developers complaining about docs while refusing to write them). But none of that matters if we lose sight of the people — the junior developers who need mentoring, the experienced engineers facing layoffs, and the broader communities affected by how we choose to deploy this technology.

Jennifer's parting thought stuck with me: we're behaving in a small-minded way about our technology adoption. We need to be more people-centric. The technical and sociotechnical solutions exist. The question is whether we have the will to use them.

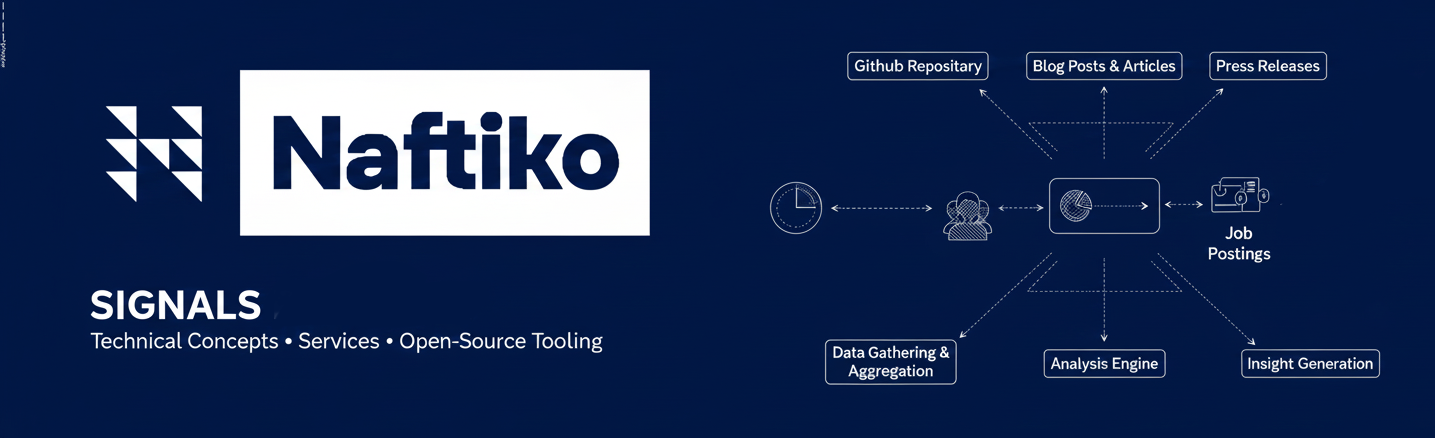

Want to dig deeper? Check out Jennifer Riggins' writing at The New Stack, and look for her free ebook on AI for the enterprise. And if you're working in enterprise APIs and integration, I'd love to hear from you — I'm currently profiling around 250 companies through my Naftiko Signals research, tracking their public signals around technology investment. Reach out on LinkedIn, Bluesky, or at kin.lane@naftiko.io.